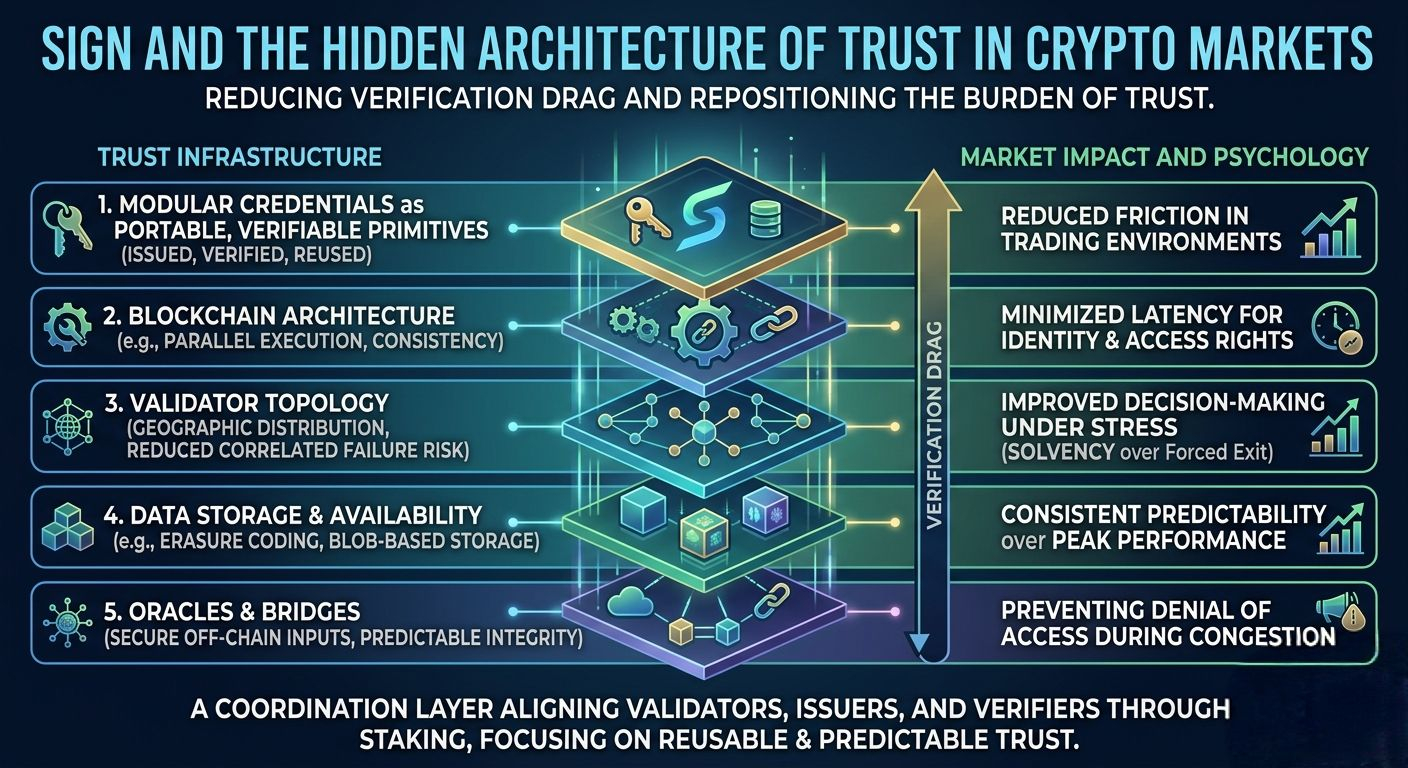

I’m starting to notice a pattern that doesn’t get talked about enough. There’s a hidden cost in crypto that I keep running into, something I think of as verification drag: the slow, almost invisible friction that builds every time trust has to be rebuilt from zero. It shows up in small delays, in repeated checks, in that slight hesitation before execution when you’re not fully sure the system will respond the way it should.

When I look at what Sign is doing, what stands out to me is not just the ambition to build credential infrastructure, but the attempt to reduce that verification drag at a structural level. The goal isn’t to eliminate trust, which is unrealistic, but to reshape where it lives and how often it needs to be invoked.

Because the truth is, decentralization loses its meaning the moment data ownership quietly recentralizes. You can distribute execution across validators, parallelize transaction processing, even compress state updates into efficient blobs, but if the credentials that define identity, access, or reputation sit behind opaque or privileged systems, then the user is still negotiating with a gatekeeper. Just a quieter one.

I’ve seen this play out in trading environments. A simple execution flow, say rotating capital across two venues during a volatility spike, quickly becomes a chain of dependencies. You’re not just executing a trade. You’re trusting that your identity or credentials will be recognized, that access rights won’t lag, that oracle-fed conditions reflect reality, and that settlement won’t get caught in some asynchronous mismatch. Each layer introduces delay. Each delay alters behavior.

Sometimes it’s subtle. You hesitate. You widen your acceptable entry range. You reduce size. Not because the market moved, but because the system might.

That’s where infrastructure design stops being abstract and starts shaping psychology.

This approach attempts to modularize credentials into something closer to portable, verifiable primitives: not tied to a single application, not locked into a specific execution environment. The idea is that identity and credentials become composable objects that can be issued, verified, and reused without forcing users to re-enter the same trust loop repeatedly.

But that only works if the underlying infrastructure respects that separation.
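To make the idea of a reusable credential concrete, here is a minimal sketch, assuming a credential is simply a claim that is serialized canonically and signed once by an issuer. Everything in it is hypothetical: `Credential`, `issue`, `verify`, and the issuer key are illustrative names, not Sign's actual API. HMAC stands in for the public-key signature a real attestation system would use, so that verifiers would not need the issuer's secret.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass

# Hypothetical issuer secret. HMAC stands in for a public-key signature here;
# a real attestation system would let verifiers check with a public key instead.
ISSUER_KEY = b"hypothetical-issuer-secret"


@dataclass(frozen=True)
class Credential:
    subject: str
    claim: dict
    signature: str


def _canonical_bytes(subject: str, claim: dict) -> bytes:
    # Sorted keys and fixed separators so every verifier hashes identical bytes.
    body = {"subject": subject, "claim": claim}
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def _sign(subject: str, claim: dict) -> str:
    return hmac.new(ISSUER_KEY, _canonical_bytes(subject, claim), hashlib.sha256).hexdigest()


def issue(subject: str, claim: dict) -> Credential:
    """The issuer runs its checks once and emits a signed, portable object."""
    return Credential(subject, claim, _sign(subject, claim))


def verify(cred: Credential) -> bool:
    """Re-check the object itself instead of repeating the issuer's process.

    With HMAC the verifier needs the shared key; with a public-key scheme it would not.
    """
    return hmac.compare_digest(_sign(cred.subject, cred.claim), cred.signature)


cred = issue("0xabc", {"kyc_level": 2})
print(verify(cred), verify(cred))  # two independent apps: True True
forged = Credential(cred.subject, {"kyc_level": 3}, cred.signature)
print(verify(forged))  # False: the claim was altered after issuance
```

The point of the sketch is the shape, not the cryptography: the expensive trust step happens once at issuance, and every later check is a cheap, local verification of the same object.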

The choice of blockchain architecture matters here more than people admit. Parallel execution environments, for example, can improve throughput, but they also introduce complexity in state consistency. If credential verification depends on cross-shard communication or delayed finality, then the system risks reintroducing the very latency it aims to remove.

And latency, in markets, is never neutral.

Validator topology is another quiet variable. A geographically concentrated validator set might offer faster consensus under normal conditions, but it introduces correlated failure risks. Network partitions, regional outages, or coordinated delays can ripple through credential verification processes in ways that aren’t immediately visible but become critical under stress.

I tend to think of it like this: credentials are only as reliable as the slowest layer that confirms them.
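A rough way to see why the slowest layer sets the pace: if a credential check needs answers from several layers, end-to-end latency is the sum of their latencies when they run one after another, or the maximum when they can be queried in parallel, while the chance that every layer answers is the product of their availabilities. The layer names and numbers below are made up for illustration, and the model assumes layers fail independently.

```python
from dataclasses import dataclass
from math import prod


@dataclass(frozen=True)
class Layer:
    name: str
    latency_ms: float    # typical time for this layer to confirm
    availability: float  # probability this layer answers at all


def sequential(layers):
    """Layers confirm one after another: latencies add, availabilities multiply."""
    return sum(layer.latency_ms for layer in layers), prod(layer.availability for layer in layers)


def parallel(layers):
    """Layers are queried at once but all are required: the slowest one sets the latency."""
    return max(layer.latency_ms for layer in layers), prod(layer.availability for layer in layers)


# Hypothetical layers and numbers, for illustration only.
stack = [
    Layer("consensus", 50, 0.999),
    Layer("data availability", 120, 0.995),
    Layer("oracle", 300, 0.99),
]

seq_ms, avail = sequential(stack)
par_ms, _ = parallel(stack)
print(seq_ms, par_ms, f"{avail:.4f}")  # 470 300 0.9841
```

Even in the best (parallel) case, the oracle layer alone sets the latency, and no arrangement of the layers pushes availability above that of the weakest one.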

Then there’s the question of how data is actually stored and distributed. Systems that rely on techniques like erasure coding or blob-based storage can improve availability and reduce redundancy costs, but they also change the trust model. Data is no longer whole in any single place. It’s reconstructed on demand.

That’s efficient. But it raises a subtle question: what happens when reconstruction fails under load?
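That question can be put in numbers with the standard k-of-n erasure-coding model, where data split into n shards can be rebuilt from any k of them. Assuming each shard is reachable independently with probability p, the chance of reconstruction is a binomial tail. Independence is a simplification: under stress, failures tend to be correlated (the validator-topology point above), which makes real numbers worse. The 4-of-10 scheme and the probabilities below are hypothetical.

```python
from math import comb


def reconstruction_probability(n: int, k: int, p_up: float) -> float:
    """P(at least k of n shards are reachable), assuming shards fail independently."""
    return sum(
        comb(n, i) * p_up**i * (1 - p_up) ** (n - i)
        for i in range(k, n + 1)
    )


# Hypothetical 4-of-10 scheme: any 4 of the 10 shards rebuild the data.
print(reconstruction_probability(10, 4, 0.99))  # calm: each shard up 99% of the time
print(reconstruction_probability(10, 4, 0.5))   # stressed: 0.828125
```

In the calm case reconstruction is practically certain; in the stressed case roughly one request in six cannot be served at all, even though the data still exists.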

In a calm market, probably nothing noticeable. In a stressed one, where multiple actors are querying, verifying, and acting simultaneously, even small delays can cascade. A credential that verifies in 200 milliseconds under normal conditions might take 800 milliseconds under congestion. That difference doesn’t sound dramatic, but in a liquidation cascade, it’s the difference between execution and slippage.

Or between solvency and forced exit.
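The jump from 200 ms to 800 ms is roughly what basic queueing theory predicts near saturation. In the textbook M/M/1 model (Poisson arrivals, a single server, exponential service times), mean time in system is 1/(μ − λ), where μ is the service rate and λ the arrival rate. With a hypothetical verifier that handles 10 requests per second, raising load from 5 to 8.75 requests per second moves utilization from 50% to 87.5% and quadruples mean latency. Real verification pipelines are not M/M/1 queues; the sketch only shows how nonlinear the curve becomes as load approaches capacity.

```python
def mm1_response_time_ms(service_rate_per_s: float, arrival_rate_per_s: float) -> float:
    """Mean time in system for an M/M/1 queue, 1 / (mu - lambda), in milliseconds."""
    if arrival_rate_per_s >= service_rate_per_s:
        return float("inf")  # arrivals outpace service: the queue grows without bound
    return 1000.0 / (service_rate_per_s - arrival_rate_per_s)


mu = 10.0  # hypothetical verifier capacity: 10 requests/s
for lam in (5.0, 8.75, 9.5):
    print(f"load {lam / mu:.1%}: {mm1_response_time_ms(mu, lam):.0f} ms")
# load 50.0%: 200 ms
# load 87.5%: 800 ms
# load 95.0%: 2000 ms
```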

Block time consistency plays into this more than raw speed does. I care less about how fast a block can be produced and more about how predictable that timing is. Irregular block intervals introduce jitter into every dependent system: credential checks, oracle updates, liquidity routing. Predictability, not peak performance, is what allows participants to form reliable expectations.

And expectations are what markets run on.
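A small sketch of why predictability can beat raw speed: it compares two hypothetical sequences of block intervals, one steady and one faster on average but irregular. The wait budget uses a mean-plus-three-standard-deviations heuristic, which is an assumption of this sketch rather than anything a particular chain publishes.

```python
from statistics import mean, pstdev


def timing_profile(intervals_s):
    """Return (mean interval, jitter, conservative wait budget) in seconds."""
    mu = mean(intervals_s)
    sigma = pstdev(intervals_s)  # population standard deviation as a jitter measure
    # Heuristic budget: a dependent system that wants to be on time almost always
    # plans for roughly mean + 3 standard deviations.
    return mu, sigma, mu + 3 * sigma


steady = [2.0, 2.0, 2.0, 2.0]   # hypothetical: slower but regular
jittery = [0.5, 2.5, 0.5, 2.5]  # hypothetical: faster on average, irregular

print(timing_profile(steady))   # (2.0, 0.0, 2.0)
print(timing_profile(jittery))  # (1.5, 1.0, 4.5)
```

The jittery chain wins on average block time, yet anything that has to plan around it must budget more than twice as long per block.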

This system sits in an interesting position because it doesn’t operate purely as a transactional layer. It’s closer to a coordination layer, something that other systems depend on to make decisions about access, trust, and eligibility.

That makes its failure modes different.

If a high-performance chain slows down, trades get delayed. If a credential system becomes unreliable, entire classes of actions become uncertain. Access might fail. Permissions might lag. Systems that depend on verified identity might default to denial rather than take the risk.

That’s a different kind of fragility.
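The default-to-denial behavior can be sketched as a fail-closed check: unless verification returns a positive answer within a deadline, access is refused, even though the credential might be perfectly valid. This uses Python's `asyncio.wait_for`; the verifier coroutines, their delays, and the 100 ms deadline are hypothetical.

```python
import asyncio


async def is_allowed(verify, timeout_s: float) -> bool:
    """Fail-closed access check: anything other than a timely True means deny."""
    try:
        return bool(await asyncio.wait_for(verify(), timeout=timeout_s))
    except (asyncio.TimeoutError, ConnectionError):
        # The credential may be valid, but the system can't confirm it in time.
        return False


async def fast_verifier() -> bool:
    await asyncio.sleep(0.01)  # hypothetical: verification under normal load
    return True


async def slow_verifier() -> bool:
    await asyncio.sleep(0.5)  # hypothetical: same valid credential under congestion
    return True


async def main() -> None:
    print(await is_allowed(fast_verifier, 0.1))  # True
    print(await is_allowed(slow_verifier, 0.1))  # False: valid, but too late


asyncio.run(main())
```

Both verifiers would eventually say yes; only the timing differs, which is exactly the kind of fragility where a slow credential layer turns into a denied action.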

There are also trade-offs that shouldn’t be ignored. Full decentralization of credential issuance is difficult to achieve without sacrificing some level of efficiency or introducing governance overhead. At some point, someone, or some mechanism, decides what constitutes a valid credential. Whether that’s a DAO, a federation of issuers, or a set of predefined rules, there’s always a boundary where decentralization meets coordination.

And coordination, by definition, introduces structure.

Compared to other high-performance chains that prioritize execution speed or liquidity aggregation, this design shifts toward identity and verification as first-class primitives. That’s a different axis of optimization. It doesn’t compete directly on transaction throughput; it competes on reducing the need for redundant trust verification.

But that also means its success depends on integration, not isolation.

Adoption isn’t just about developers building on top. It’s about systems choosing to rely on it for something as sensitive as identity or credentials. That requires predictable costs, consistent performance, and most importantly, long term data accessibility. A credential that can’t be reliably retrieved or verified years later isn’t infrastructure. It’s a temporary artifact.

Incentives play a role here, but not in the usual speculative sense. The native token functions more as a coordination mechanism, aligning validators, issuers, and verifiers around maintaining the integrity and availability of credential data. Staking isn’t just about securing consensus; it’s also a way of signaling commitment to the reliability of the system.

Governance, then, becomes less about control and more about adaptation. Credential standards will evolve. Privacy expectations will shift. Regulatory pressures will emerge. The system needs to adjust without fragmenting.

That’s harder than it sounds.

Oracles and bridges introduce another layer of complexity. Credentials often depend on off-chain data: real-world events, institutional records, or external validations. If those inputs are delayed, manipulated, or inconsistent, the entire verification pipeline inherits that uncertainty.

I’ve seen trades fail not because the market moved, but because an oracle update lagged just enough to invalidate an assumption. Now imagine that dependency extended to identity itself.
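A common guard against the lagging-oracle problem is a staleness check: refuse to act on a reading older than some maximum age instead of assuming it is current. The sketch below is hypothetical (`OracleReading`, its fields, and the thresholds are illustrative), and the same check would apply if an eligibility decision depended on an oracle-fed credential attestation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OracleReading:
    value: float
    published_at: float  # unix seconds, as reported by a hypothetical oracle


def usable(reading: OracleReading, now: float, max_age_s: float) -> bool:
    """Act only on fresh readings; future-dated readings are rejected too."""
    age = now - reading.published_at
    return 0 <= age <= max_age_s


now = 1_000.0
print(usable(OracleReading(101.2, 995.0), now, max_age_s=10))    # True: 5 s old
print(usable(OracleReading(101.2, 980.0), now, max_age_s=10))    # False: 20 s old, stale
print(usable(OracleReading(101.2, 1_005.0), now, max_age_s=10))  # False: dated in the future
```

The guard doesn't make the data fresher; it only makes the assumption explicit, so the caller can choose to wait, fall back, or deny instead of acting on a stale view.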

Liquidity flows are also affected. If access to certain pools or strategies depends on verified credentials, then delays or inconsistencies in verification can distort capital allocation. Funds might sit idle not due to lack of opportunity, but due to uncertainty in eligibility.

That’s a quiet inefficiency. But it compounds.

Stress testing a system like this requires thinking beyond normal conditions. What happens during network congestion, when credential verification requests spike? How does the system handle partial failures, where some validators are responsive and others lag? What’s the fallback when oracle data becomes inconsistent or delayed?
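The partial-failure case can be framed as a quorum with a deadline: only validator replies that arrive in time count, and the check passes only if enough of them confirm the credential. Laggards are treated the same as absent validators, so the check fails closed. The validator count, quorum size, deadline, and latencies below are hypothetical, and a real protocol would also have to handle conflicting answers and authenticate each reply.

```python
from typing import Optional, Sequence, Tuple

# One (latency_ms, verdict) pair per validator; latency None means no reply at all.
Reply = Tuple[Optional[float], Optional[bool]]


def quorum_decision(replies: Sequence[Reply], deadline_ms: float, quorum: int) -> bool:
    """Accept only if at least `quorum` validators confirm the credential before the deadline."""
    confirmations = sum(
        1
        for latency, verdict in replies
        if latency is not None and latency <= deadline_ms and verdict is True
    )
    # Late replies are treated like missing ones, so the check fails closed.
    return confirmations >= quorum


# Hypothetical: 5 validators, quorum of 3, 200 ms deadline.
healthy = [(40, True), (55, True), (70, True), (900, True), (None, None)]
lagging = [(40, True), (55, True), (400, True), (900, True), (None, None)]
print(quorum_decision(healthy, 200, 3))  # True
print(quorum_decision(lagging, 200, 3))  # False
```

In the second case four of five validators would eventually confirm, but a single validator slipping past the deadline is enough to flip the outcome.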

Designing for these scenarios isn’t optional. It’s the difference between a system that works in theory and one that survives real markets.

Because real markets are not forgiving environments.

They don’t care about architectural elegance. They care about outcomes. Execution, access, settlement. In that order.

What I find compelling here is not that it solves all of this (no system does) but that it attempts to reposition where the burden of trust sits. It acknowledges that verification is a cost, not just a feature. And it tries to compress that cost into something more reusable, more predictable.

But the real structural test isn’t in early integrations.

It’s in repetition.

Can the system verify credentials reliably under sustained load, across different applications, without introducing new forms of friction? Can it maintain data availability and integrity over long time horizons, not just in bursts of activity? Can it distribute trust without quietly recentralizing it in practice?

If it can, then verification drag starts to fade. Not disappear, but become manageable.

If it can’t, then it becomes just another layer. Another dependency. Another point where the system hesitates.

And in markets, hesitation is never neutral.

@SignOfficial #SignDigitalSovereignInfra $SIGN