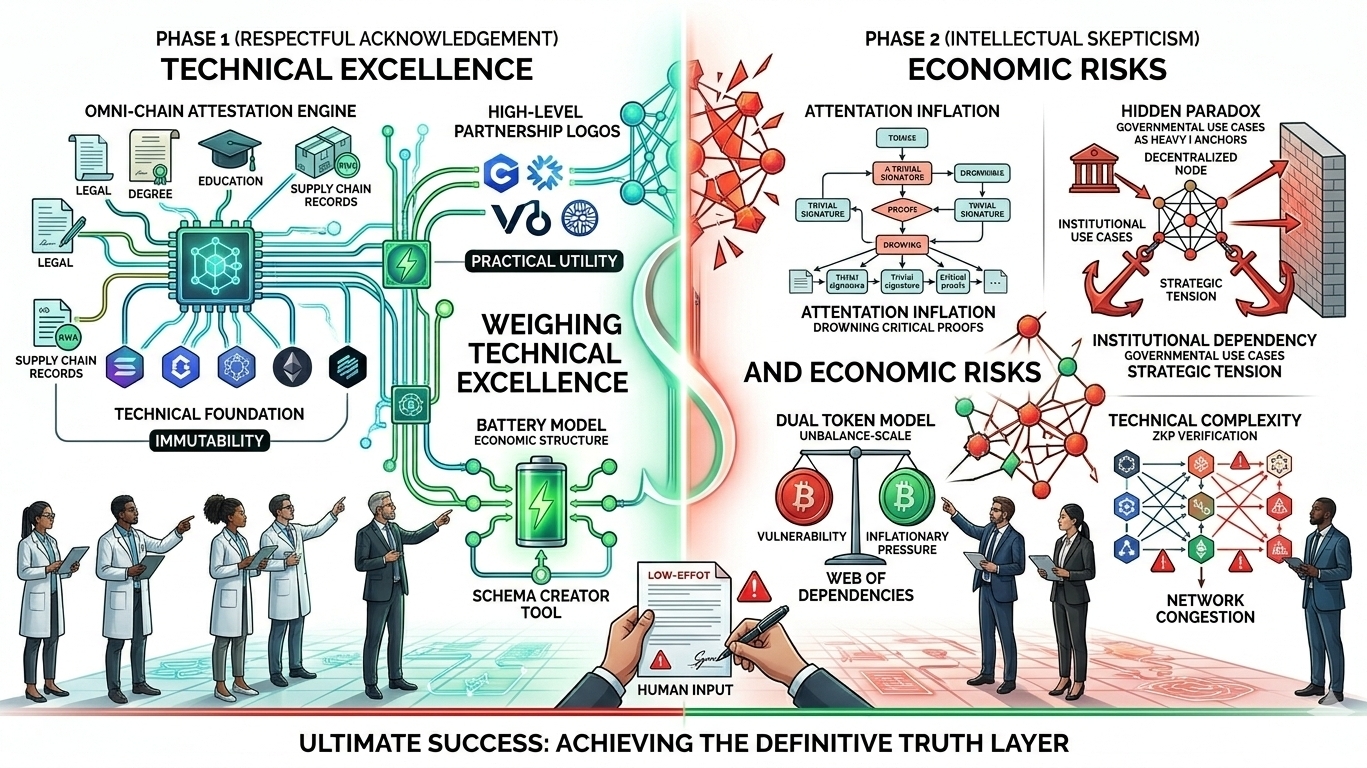

I recognize the ambition behind the $SIGN Protocol, a sophisticated omni-chain attestation layer designed to solve the fundamental problem of trust in a fragmented digital world. By providing a decentralized infrastructure that allows any data point, whether a legal contract or an educational degree, to be cryptographically signed and verified, the project has achieved a high level of technical excellence. Its ability to operate across multiple blockchain ecosystems through a schema-based architecture is a tangible milestone in the push for interoperable decentralized identity.

The integration of high-level partnerships with major protocols and the focus on verifiable computing for institutional use cases demonstrate a clear move toward practical utility. These are not merely speculative promises but functional tools that address the growing global demand for data integrity and secure information management in an era of increasing digital fraud. The development of the battery model for its economic structure also reflects a deep understanding of the need for sustainable, long-term resource allocation within decentralized networks. $SIGN

I must acknowledge that the core strength of this system lies in its flexibility. Unlike previous attempts at digital identity that forced users into a single rigid format, the schema-based approach allows developers to define exactly what they are verifying. This makes the protocol highly adaptable for everything from real-world asset tokenization to simple social media badges. By anchoring these attestations on a decentralized ledger, the protocol ensures that once a piece of information is signed, it remains immutable and verifiable by any third party without needing a central intermediary. This technical foundation is a necessary step toward a more transparent digital economy where the provenance of data is just as important as the data itself.
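To make the schema-based idea concrete, here is a minimal sketch of how a schema-defined attestation could be created and checked. It is not Sign Protocol's actual API: `DEGREE_SCHEMA`, `attest`, and `verify` are hypothetical names chosen for illustration, and an HMAC stands in for the signature only because it ships with Python's standard library. A real attestation layer would use an asymmetric signature so that third parties can verify without holding the issuer's secret.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass

# Hypothetical schema: field name -> required Python type.
DEGREE_SCHEMA = {"holder": str, "degree": str, "year": int}


def validate(schema: dict, data: dict) -> None:
    """Raise ValueError unless data has exactly the schema's fields and types."""
    if set(data) != set(schema):
        raise ValueError(f"fields {sorted(data)} do not match schema {sorted(schema)}")
    for name, expected in schema.items():
        if not isinstance(data[name], expected):
            raise ValueError(f"field {name!r} must be {expected.__name__}")


def canonical_bytes(data: dict) -> bytes:
    """Serialize deterministically so the same data always hashes the same."""
    return json.dumps(data, sort_keys=True, separators=(",", ":")).encode()


@dataclass(frozen=True)
class Attestation:
    data: dict
    digest: str      # SHA-256 of the canonical payload
    signature: str   # stand-in signature (HMAC); an assumption, see above


def attest(schema: dict, data: dict, key: bytes) -> Attestation:
    """Validate data against the schema, then hash and sign it."""
    validate(schema, data)
    payload = canonical_bytes(data)
    digest = hashlib.sha256(payload).hexdigest()
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return Attestation(dict(data), digest, signature)


def verify(att: Attestation, key: bytes) -> bool:
    """Recompute digest and signature; any change to the data makes this fail."""
    payload = canonical_bytes(att.data)
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return (hashlib.sha256(payload).hexdigest() == att.digest
            and hmac.compare_digest(expected, att.signature))
```

Calling `attest(DEGREE_SCHEMA, {"holder": "Alice", "degree": "BSc", "year": 2021}, key)` yields a record that `verify` accepts, while the same record with any field altered is rejected. Note that `verify` checks integrity only: it says nothing about whether the claim was true when it was signed.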

However, as I transition into a more critical and analytical phase, I must highlight the inherent paradoxes and economic tensions that could challenge the long-term viability of the protocol. While the dual token model is designed to provide stability, it risks creating a complex web of dependencies where the value of the network is overly tied to high-velocity signing activity rather than genuine, high-quality data validation. There is a subtle but significant risk that the system could inadvertently facilitate the verification of falsehoods if the initial data sources are not subjected to the same level of decentralized scrutiny as the signatures themselves. I must also consider the potential for attestation inflation, where the sheer volume of verified data points makes it difficult for users to distinguish critical proofs from trivial noise. If every minor interaction becomes a signed attestation, the cryptographic weight of the protocol might be diluted by a sea of inconsequential data.

Furthermore, the reliance on institutional and governmental use cases, while providing immediate credibility, creates a strategic tension with the core tenets of absolute decentralization. If the protocol becomes too deeply embedded in state-level compliance frameworks, it may lose the agility and neutrality that are essential for a truly global, permissionless trust layer. We must ask whether a system that prides itself on being decentralized can remain truly independent when its primary utility is serving as a digital notary for centralized authorities. This creates a functional paradox where the success of the protocol depends on the very entities it aims to disrupt.

From a technical perspective, the complexity of maintaining zero-knowledge proof verification across diverse chains introduces a surface area for bugs or synchronization failures that could undermine user confidence during periods of high network congestion. While the goal of being omni-chain is noble, it requires a level of cross-chain communication that is notoriously difficult to secure. A single failure in the bridging or attestation relay could lead to a loss of trust that would be difficult to recover. This suggests that while the technical foundation is robust, the path to becoming the universal layer of trust requires a rigorous and transparent recalibration of its economic incentives and a more cautious approach to centralized data dependencies. Otherwise, the protocol risks becoming a victim of its own architectural complexity.

I also observe that the human element of these attestations remains a significant hurdle. Even with perfect cryptographic security, a signature is only as good as the intent behind it. If users are incentivized solely by token rewards to sign data, they might not adequately vet the information they are confirming. This could lead to a scenario where the protocol is technically sound but logically flawed because it is built on a foundation of low-effort human inputs. To truly succeed, the protocol must find a way to verify the validator as rigorously as it verifies the signature itself. @SignOfficial

Ultimately, the success of Sign will depend on its ability to balance these competing forces. It must remain decentralized enough to be trusted by the crypto community while being compliant enough to be used by institutions. It must be efficient enough for mass adoption without sacrificing the security that makes it valuable in the first place. The coming years will be a trial by fire for this infrastructure as it moves from controlled environments into the chaotic reality of global data markets. Only by addressing these systemic risks head-on can the protocol hope to achieve its vision of becoming the definitive truth layer for the digital age.