There was a time when I thought verification was a solved problem in digital systems. If something is on-chain, signed and publicly verifiable, then trust should naturally follow. That assumption feels logical on the surface. But the more I looked at how real systems operate, the more that idea started to break down.

Verification does not eliminate trust. It reorganizes it.

Most modern systems that deal with credentials, ownership or eligibility rely on a structure where claims are issued, formatted and later verified. A degree, a license, eligibility for a whitelist or even a transaction condition is no longer just raw data. It becomes a structured claim that follows a predefined format, often called a schema. That schema defines what the claim means, which fields it includes and how any system that reads it later should interpret it.

At first glance, this looks like a clean solution. Standardize the format, attach a signature and let any application verify it without repeating the entire process. In theory, this reduces friction across systems. In practice, it introduces a different kind of dependency that is easy to overlook.
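The pattern described above (a fixed schema, a signature, and a check any application can repeat) can be sketched in a few lines of Python. This is a minimal sketch under loud assumptions: the schema name `degree-v1`, its field list and the helper names are hypothetical, and HMAC-SHA256 from the standard library stands in for a real public-key signature scheme. With HMAC the verifier must hold the issuer's secret key; real systems use asymmetric signatures so that verifiers only need the issuer's public key.

```python
import hashlib
import hmac
import json

# Hypothetical schema: the name and field list are illustrative, not from any standard.
DEGREE_SCHEMA = {"name": "degree-v1", "fields": ["subject", "degree", "institution", "year"]}


def canonical_bytes(claim: dict) -> bytes:
    # Sorted keys and fixed separators so issuer and verifiers hash identical bytes.
    return json.dumps(claim, sort_keys=True, separators=(",", ":")).encode()


def conforms(claim: dict, schema: dict) -> bool:
    # Structural check: the claim carries exactly the fields the schema declares.
    return sorted(claim) == sorted(schema["fields"])


def sign(claim: dict, issuer_key: bytes) -> str:
    # Assumption: HMAC-SHA256 stands in for a public-key signature (e.g. Ed25519).
    return hmac.new(issuer_key, canonical_bytes(claim), hashlib.sha256).hexdigest()


def verify(claim: dict, signature: str, issuer_key: bytes, schema: dict) -> bool:
    # Confirms format and integrity only; says nothing about how the issuer vetted the subject.
    if not conforms(claim, schema):
        return False
    return hmac.compare_digest(sign(claim, issuer_key), signature)


key = b"issuer-secret"
claim = {"subject": "alice", "degree": "BSc", "institution": "Example University", "year": 2020}
sig = sign(claim, key)
print(verify(claim, sig, key, DEGREE_SCHEMA))                      # True
print(verify(dict(claim, degree="PhD"), sig, key, DEGREE_SCHEMA))  # False: tampered field
```

Nothing in `verify` repeats the issuer's own process; it only confirms that the bytes match the schema and were signed by the expected key.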

The system can verify that a claim is valid. It cannot verify whether the claim was issued under the right conditions.

This distinction matters more than it sounds.

Two different entities can issue the same type of credential using the exact same schema. On-chain, both will appear equally valid. Both will pass verification checks. Both will be accepted by systems that rely purely on structure and signatures. But the actual rigor behind those credentials can be completely different. One issuer may enforce strict requirements, while another may apply minimal checks. The verification layer treats them as equivalent unless additional context is introduced.
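The gap between a valid claim and a trustworthy one shows up as soon as two issuers share a schema. In the hypothetical sketch below, `strict-board` and `lenient-mill` are invented issuers, HMAC again stands in for real signatures, and `ISSUER_POLICY` is an assumed piece of off-chain context. Both credentials pass `verify`; only the policy lookup, which no signature can supply, tells them apart.

```python
import hashlib
import hmac
import json

SCHEMA_FIELDS = ("subject", "license", "issuer")  # hypothetical shared schema


def sign(claim: dict, key: bytes) -> str:
    # Assumption: HMAC-SHA256 as a stand-in for each issuer's real signature scheme.
    payload = json.dumps(claim, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(claim: dict, sig: str, key: bytes) -> bool:
    # Structure + signature only: identical result regardless of issuer rigor.
    return set(claim) == set(SCHEMA_FIELDS) and hmac.compare_digest(sign(claim, key), sig)


# Hypothetical issuers: one enforces strict requirements, one applies minimal checks.
KEYS = {"strict-board": b"strict-key", "lenient-mill": b"lenient-key"}

# Hypothetical extra context that the verification layer does not have by itself.
ISSUER_POLICY = {"strict-board": "trusted", "lenient-mill": "unreviewed"}


def accept(claim: dict, sig: str) -> bool:
    key = KEYS.get(claim.get("issuer"))
    if key is None or not verify(claim, sig, key):
        return False
    # The trust decision comes from issuer context, not from the signature.
    return ISSUER_POLICY.get(claim["issuer"]) == "trusted"


a = {"subject": "alice", "license": "nursing", "issuer": "strict-board"}
b = {"subject": "bob", "license": "nursing", "issuer": "lenient-mill"}
sig_a = sign(a, KEYS["strict-board"])
sig_b = sign(b, KEYS["lenient-mill"])
print(verify(a, sig_a, KEYS["strict-board"]), verify(b, sig_b, KEYS["lenient-mill"]))  # True True
print(accept(a, sig_a), accept(b, sig_b))  # True False
```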

This is where trust quietly shifts.

Instead of trusting a centralized database, users and systems begin to rely on issuers. These issuers become the starting point of truth. They decide who qualifies, what evidence is required and under what conditions a claim can be revoked or updated. By the time a credential reaches a user or an application, most of the meaningful decisions have already been made upstream.

Verification in this model becomes a confirmation process, not a judgment process.

That creates an interesting tension. On one hand, structured verification makes systems more scalable and interoperable. Applications no longer need to rebuild logic for every new integration. They can simply read and validate existing claims. This reduces duplication, speeds up workflows and allows data to move more freely across platforms.

On the other hand, the system becomes sensitive to the quality of its inputs.

If issuers are inconsistent, biased or loosely governed, the entire network inherits that inconsistency. The infrastructure does not fail visibly. It continues to operate exactly as designed. Claims remain verifiable. Signatures remain valid. But the underlying meaning of those claims starts to drift.

This is not a technical failure. It is a governance problem expressed through technical systems.

The challenge becomes even more complex when multiple environments are involved. Modern verification systems often rely on a mix of on-chain records, off-chain storage and indexing layers that make data accessible in real time. This hybrid structure is necessary for scale and cost efficiency, but it introduces additional points of failure. Data may exist, but not be easily retrievable. Indexers may lag. Storage layers may become temporarily unavailable.

In those moments, the question is no longer whether something is verifiable in theory, but whether it is accessible and usable in practice.

That gap between theoretical trust and operational trust is where most real-world issues appear.
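One way an application might handle this gap is to treat the indexer as a cache with a freshness bound, fall back to the source of truth when the indexer is stale or missing a record, and report "unknown" rather than "invalid" when nothing can be reached. The sketch below assumes hypothetical reader functions, block heights as the freshness measure and an arbitrary `max_lag` of 5; none of these come from a specific system.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Lookup:
    status: str  # "valid", "revoked", or "unknown"
    source: str  # where the answer came from: "indexer", "chain", or "none"


# Hypothetical data sources; in a real system these would be network calls.
Reader = Callable[[str], Optional[str]]


def check_claim(
    claim_id: str,
    read_indexer: Reader,
    read_chain: Reader,
    indexer_height: int,
    chain_height: int,
    max_lag: int = 5,
) -> Lookup:
    # Prefer the fast indexer only when it is close enough to the chain head.
    if chain_height - indexer_height <= max_lag:
        status = read_indexer(claim_id)
        if status is not None:
            return Lookup(status, "indexer")
    # Indexer is stale or lacks the record: fall back to the slower source of truth.
    try:
        status = read_chain(claim_id)
    except ConnectionError:
        status = None
    # "unknown" is deliberately distinct from "revoked": the claim may be fine but unreachable.
    return Lookup(status or "unknown", "chain" if status else "none")


indexer = {"c1": "valid"}.get
chain = {"c1": "revoked", "c2": "valid"}.get
print(check_claim("c1", indexer, chain, indexer_height=100, chain_height=102))
# Lookup(status='valid', source='indexer')
print(check_claim("c1", indexer, chain, indexer_height=90, chain_height=102))
# Lookup(status='revoked', source='chain')
```

In the first call the indexer is within the lag tolerance, so it still answers `valid` even though the chain already records `revoked`. Choosing `max_lag` is a trade-off between speed and operational trust.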

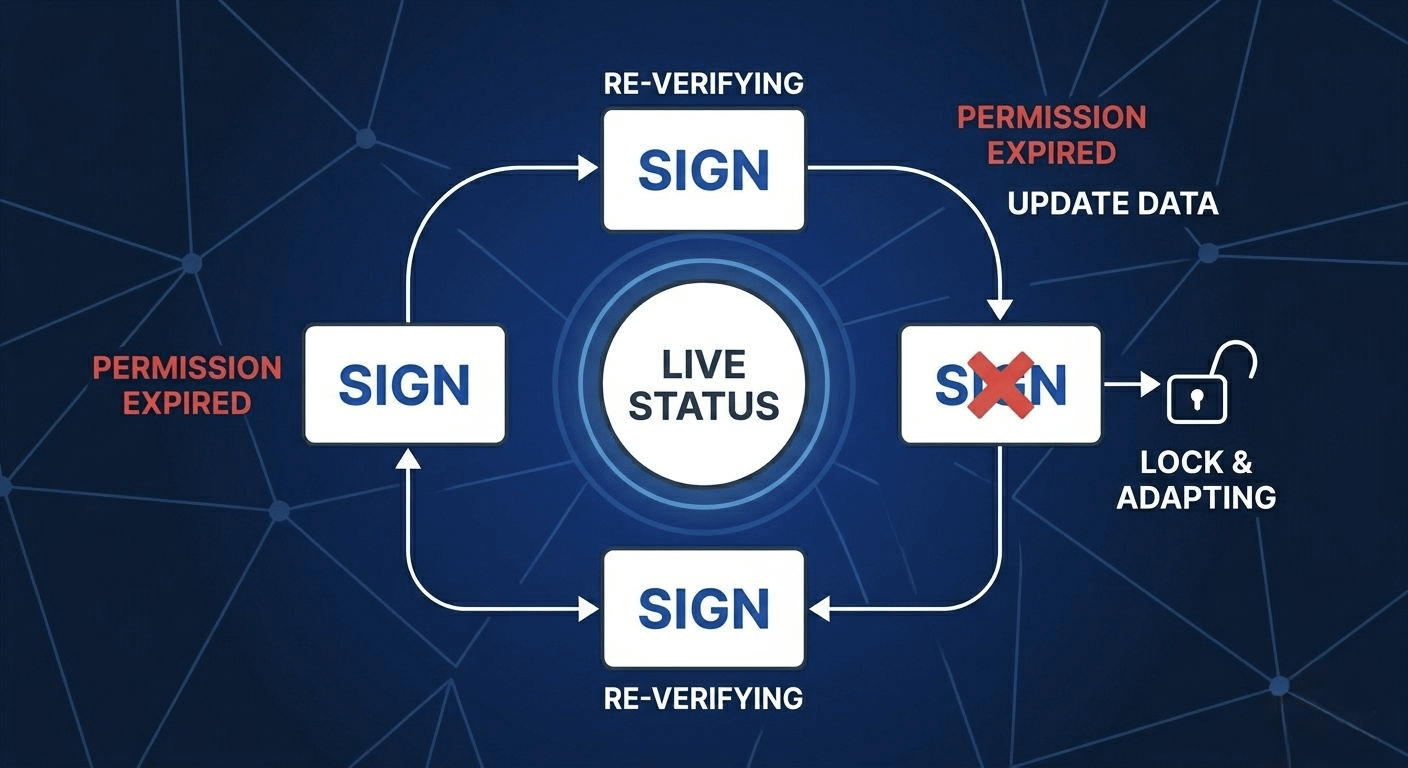

Another layer of complexity comes from revocation and lifecycle management. A credential is rarely permanent. Licenses expire. Permissions change. Ownership can be transferred. Systems need to account not just for the existence of a claim but for its current state. This requires continuous updates, reliable status tracking and clear rules around who has the authority to modify or invalidate a claim.
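Lifecycle management means a verifier asks for a credential's current state, not just whether it exists. The hypothetical `Credential` class below models expiry, revocation and a simple rule about who may revoke; the field names and the delegated-revoker rule are assumptions for illustration, not taken from any particular standard.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional, Set


@dataclass
class Credential:
    credential_id: str
    issuer: str
    expires_at: Optional[datetime] = None
    revoked: bool = False
    # Hypothetical rule: parties other than the issuer who may revoke this credential.
    revokers: Set[str] = field(default_factory=set)

    def revoke(self, actor: str) -> None:
        # Only the issuer or an explicitly delegated party may invalidate the claim.
        if actor != self.issuer and actor not in self.revokers:
            raise PermissionError(f"{actor} may not revoke {self.credential_id}")
        self.revoked = True

    def status(self, now: datetime) -> str:
        # Existence is not enough: report the state as of `now`.
        if self.revoked:
            return "revoked"
        if self.expires_at is not None and now >= self.expires_at:
            return "expired"
        return "active"


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
lic = Credential("lic-1", issuer="board", expires_at=datetime(2026, 1, 1, tzinfo=timezone.utc))
print(lic.status(now))                                        # active
print(lic.status(datetime(2026, 6, 1, tzinfo=timezone.utc)))  # expired
lic.revoke("board")
print(lic.status(now))                                        # revoked
```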

Again, the infrastructure can support these features. But it cannot enforce how responsibly they are used.

All of this points to a broader realization. Verification systems are not replacing trust. They are redistributing it across different layers: issuers, standards, storage systems and verification logic. Each layer introduces its own assumptions and risks.

What looks like decentralization at one level can still depend heavily on coordination at another.

This does not make the model flawed. It makes it incomplete.

For these systems to work reliably at scale, there needs to be more than just technical standardization. There needs to be alignment around issuer reputation, governance frameworks and shared expectations about what a valid claim actually represents. Without that, verification remains technically correct but contextually fragile.

So the real question is not whether a system can verify data.

The question is whether the ecosystem around that system can maintain the integrity of what is being verified.

Because in the end, trust is not just about proving that something exists.

It is about being confident that what exists actually means what we think it does.