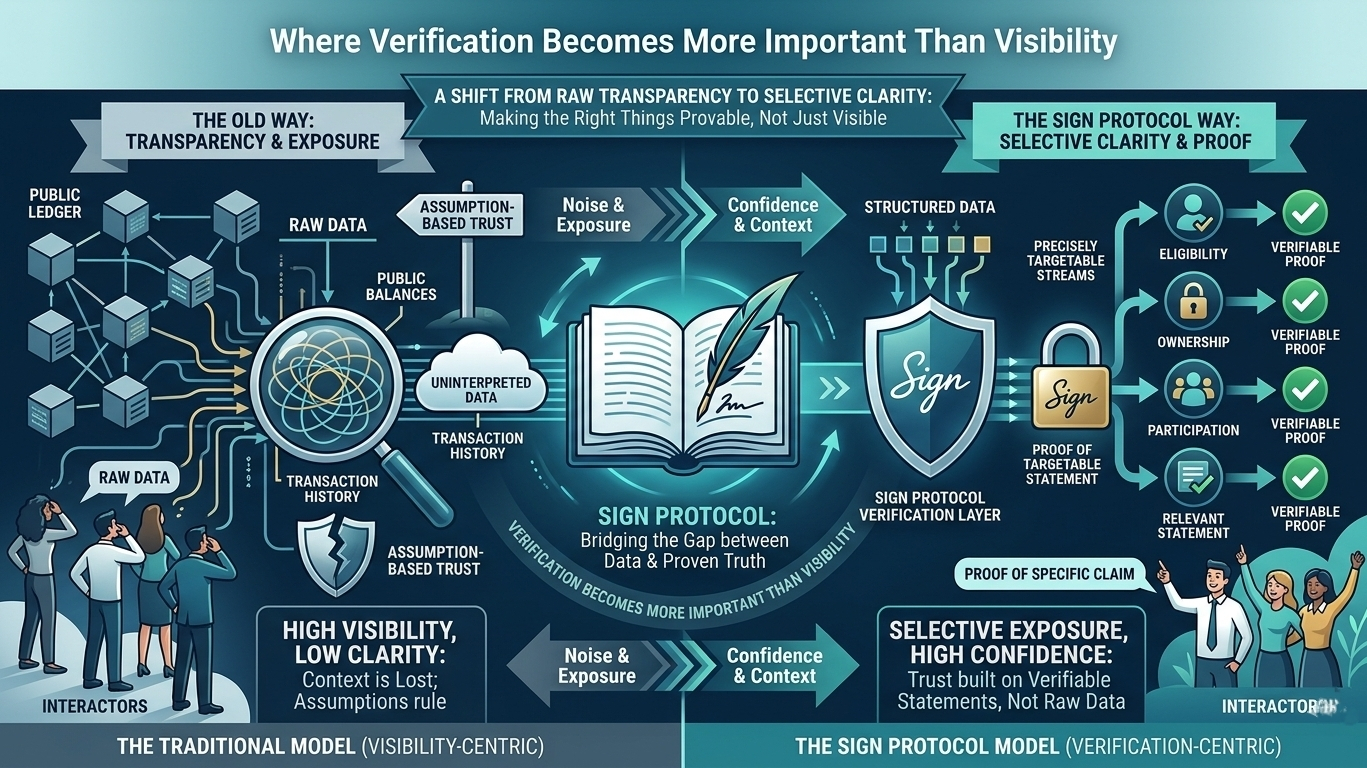

One thing I’ve been thinking about lately is how much of crypto still depends on visibility rather than actual verification. Most systems rely on the idea that if something is public, it must be trustworthy. But that’s not always true. Just because data is visible doesn’t mean it’s meaningful, and just because something is hidden doesn’t mean it can’t be trusted. That’s the gap where Sign Protocol starts to make more sense to me: not as another identity layer, but as a way to separate verification from exposure.

Right now, most on-chain systems lean heavily toward transparency. Everything is out there (balances, transactions, interactions), but interpreting that data still requires assumptions. You see activity, but you don’t always understand the context. On the other side, when systems try to protect privacy, they often lose verifiability in the process. It becomes harder to prove anything without revealing too much. What Sign seems to be doing is shifting the focus away from “what is visible” to “what can be proven.”

That difference might sound small, but it changes how systems can be designed. Instead of exposing full datasets, you can prove specific things, such as eligibility, ownership, or participation, without sharing everything behind them. It’s a more precise way of interacting. You’re not showing your entire history, just the part that matters for that moment. That kind of control becomes important when systems start dealing with more sensitive or real-world use cases.
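To make that concrete for myself, here is a minimal sketch of selective disclosure using salted hash commitments. This is not Sign Protocol’s API or schema: every name in it (`issue`, `disclose`, `verify`, `ISSUER_KEY`) is hypothetical, and the HMAC tag is only a stand-in for the signature a real issuer would attach. It just shows the shape of the idea: the attestation commits to every claim, but the holder reveals only one.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical demo key. HMAC stands in for an issuer signature here, which
# means the verifier shares the key; a real system would use an asymmetric
# signature so that verifiers cannot issue attestations themselves.
ISSUER_KEY = b"demo-issuer-key"


def commit(name, value, salt):
    """Salted hash of one claim; the value stays hidden until the salt is revealed."""
    payload = json.dumps([name, value, salt]).encode()
    return hashlib.sha256(payload).hexdigest()


def _tag(commitments):
    """Authenticate the full list of commitments with the issuer key."""
    data = json.dumps(commitments).encode()
    return hmac.new(ISSUER_KEY, data, hashlib.sha256).hexdigest()


def issue(claims):
    """Issuer side: commit to every claim and tag the set of commitments."""
    salts = {name: secrets.token_hex(16) for name in claims}
    # Sorting hides which commitment belongs to which claim.
    commitments = sorted(commit(n, v, salts[n]) for n, v in claims.items())
    return {"commitments": commitments, "tag": _tag(commitments)}, salts


def disclose(claims, salts, name):
    """Holder side: reveal exactly one claim together with its salt."""
    return {"name": name, "value": claims[name], "salt": salts[name]}


def verify(attestation, disclosed):
    """Verifier side: check the issuer tag, then check the revealed claim was committed."""
    if not hmac.compare_digest(_tag(attestation["commitments"]), attestation["tag"]):
        return False
    digest = commit(disclosed["name"], disclosed["value"], disclosed["salt"])
    return digest in attestation["commitments"]


claims = {"eligible": True, "balance": 1250, "country": "DE"}
attestation, salts = issue(claims)
proof = disclose(claims, salts, "eligible")
print(verify(attestation, proof))  # True
```

The verifier learns that `eligible` is true and nothing about the balance or country, because the other commitments are just salted hashes. Production systems usually reach for signatures, Merkle trees, or zero-knowledge proofs instead, but the separation between what is committed and what is revealed is the same.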

I also think this changes how trust is formed. Instead of relying on patterns or assumptions, trust can be built on verifiable statements. You don’t need to analyze someone’s entire activity to understand their position; you just need a proof that confirms what’s relevant. That reduces noise. It makes interactions cleaner, even if the underlying systems are still complex.

What stands out to me is that this doesn’t really break when you move between systems. Public chain or private setup, the logic stays the same. It’s still about proving something; only the access around it changes. That consistency makes it easier for systems to interact without losing meaning, because they share the same verification logic instead of each relying on its own.

Of course, this only works if the proofs themselves are reliable. If the way something is verified isn’t strong enough, then the whole structure weakens. So the focus isn’t just on hiding or revealing data; it’s on making sure whatever is being proven can stand on its own without needing extra trust.

At a broader level, this feels like a shift away from raw transparency toward selective clarity. Not everything needs to be visible, but everything that matters should be provable. And that’s a different way of thinking compared to how most systems are built today.

Maybe that’s the key idea here. It’s not about showing more or hiding more; it’s about making sure the right things can always be verified.

#signdigitalsovereigninfra @SignOfficial $SIGN