Lately, I’ve been stuck on this one thought: what if systems like $SIGN aren’t actually uncovering the truth, but quietly manufacturing it?

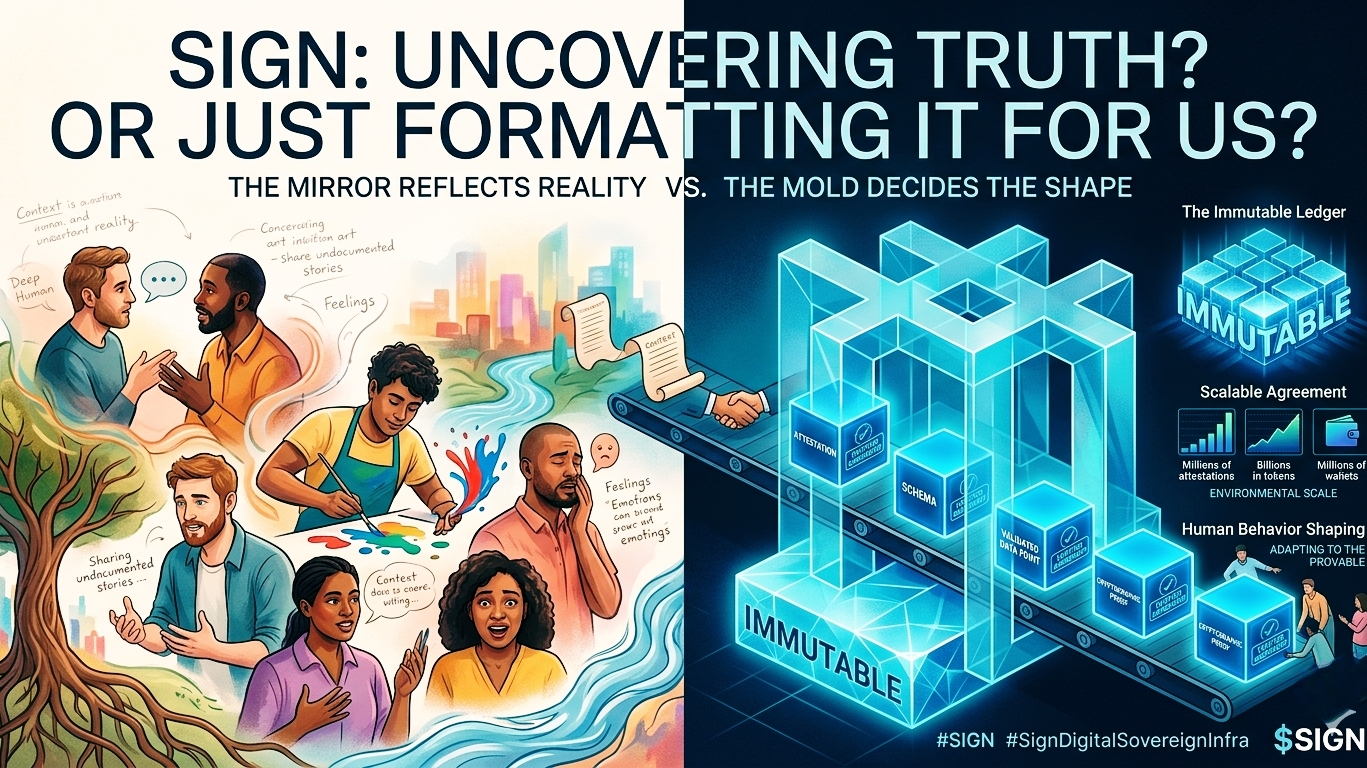

The more I sit with it, the more "verification" feels less like a mirror and more like a mold. A mirror just reflects what’s already there. A mold decides the shape of something before it’s even formed. With all the schemas, attestations, and programmable rules in SIGN, it definitely feels closer to a mold.

Think about it—before anything can be verified, it has to be structured. It has to fit neatly into a schema. That tiny, almost invisible requirement actually changes everything. It means reality basically has to agree to become "data" before it can be acknowledged. Anything that resists being put into a box just... quietly disappears.
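Here's a tiny sketch of what I mean by the mold. The schema and its field names are made up for illustration (they are not taken from SIGN); the point is only to show what happens to the part of a record that has no matching field.

```python
# Hypothetical schema: these field names and types are illustrative only.
SCHEMA = {"wallet": str, "event": str, "attended": bool}

def fit_to_schema(raw: dict) -> tuple[dict, dict]:
    """Split a raw record into what the schema accepts and what it drops."""
    kept = {
        key: value
        for key, value in raw.items()
        if key in SCHEMA and isinstance(value, SCHEMA[key])
    }
    dropped = {key: value for key, value in raw.items() if key not in kept}
    return kept, dropped

raw = {
    "wallet": "0xabc",
    "event": "community call",
    "attended": True,
    # No field exists for this, so it never reaches the verifiable record.
    "context": "joined late, but stayed to help a newcomer afterwards",
}

kept, dropped = fit_to_schema(raw)
print(kept)     # only the three schema fields survive
print(dropped)  # the free-form context is silently left behind
```

Nothing in this code is malicious. The context just has nowhere to go.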

It makes me wonder: are we discovering truth here, or are we just formatting it?

Attestations make this even weirder. On the surface, they look like hard proof. But if you slow down and really look at them, they aren’t "truth" itself. They’re cryptographic proof that a specific party signed off on a version of the truth. And once that claim is recorded, shared, and reused enough times, it starts to feel like absolute reality, even if it began as nothing more than an assumption.
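To make that concrete, here's a rough sketch. I'm assuming a toy attestation format, and I'm using an HMAC with a shared key as a stand-in for a real digital signature, since Python's standard library has no public-key signing. A real attestation system would use asymmetric signatures. The function names and the key are hypothetical.

```python
import hashlib
import hmac
import json

def attest(claim: dict, attester_key: bytes) -> dict:
    """Bind a claim to an attester's key. HMAC stands in for a real signature."""
    payload = json.dumps(claim, sort_keys=True).encode()
    tag = hmac.new(attester_key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "tag": tag}

def verify(attestation: dict, attester_key: bytes) -> bool:
    """Check that the claim is unchanged and was tagged with this key."""
    payload = json.dumps(attestation["claim"], sort_keys=True).encode()
    expected = hmac.new(attester_key, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, attestation["tag"])

key = b"hypothetical-attester-key"

# The attester is free to sign something that is simply false.
att = attest({"subject": "0xabc", "statement": "the moon is made of cheese"}, key)
print(verify(att, key))  # True: the attestation is valid, the claim is not true

# Changing the claim afterwards is what verification actually catches.
tampered = {"claim": {**att["claim"], "statement": "the moon is rock"}, "tag": att["tag"]}
print(verify(tampered, key))  # False
```

Verification answers "did this attester say exactly this?" and nothing more. Whether the statement matches the world is outside what the math can see.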

Maybe we aren’t building a system that proves reality. Maybe we’re just building a machine that locks in agreement.

And then there’s the sheer scale of it. Millions of attestations. Billions in tokens. Tens of millions of wallets. When something gets this big, it’s no longer just a tool—it becomes an environment. People don’t just use it; they adapt to it.

If your rewards, access, and opportunities rely on what you can prove, human behavior will naturally start bending toward whatever is provable. Not necessarily what’s deeply true, but what checks the system’s boxes. In that light, token distribution isn’t just economics. It’s subtle conditioning. It silently teaches people which actions matter and what kind of identity actually counts.

Even privacy starts to feel a bit different. Selective disclosure sounds super empowering, but you’re really just choosing from a menu that someone else designed for you. It’s a very quiet, structural kind of control.

And don't get me started on immutability. It used to feel like a safeguard, but the more I think about it, the heavier it feels. Humans are supposed to change. We mess up, we grow, we leave old versions of ourselves behind. But a blockchain never forgets. What happens when a constantly evolving human is tied to a frozen, non-living record?

Even cross-chain trust feels less like actual certainty and more like we are just porting our assumptions around, unquestioned.

It all comes down to this: in a world where everything has to be cryptographically proven, what happens to the things that can’t be? What happens to intuition, context, and the messy human parts of life that refuse to be compressed into a smart contract field?

Maybe the real shift here isn’t technological. It’s philosophical.

We used to live in a world where truth existed first, and systems tried their best to capture it. Now, we are moving into a world where systems dictate the very conditions under which truth is allowed to exist.

If that’s the case, the question isn’t whether protocols like SIGN are working. The much more uncomfortable question is:

Are we still discovering truth... or are we just learning to live inside the version of truth our tech allows us to see?

I’ve spent years watching tech projects claim they’re going to "solve trust." Honestly, whenever I hear that phrase, my guard goes up. Human behavior is messy—we lie, panic, chase trends, and act completely irrationally even when the incentives are obvious. You can't just cram that into a neat little protocol.

But that’s exactly why I’m keeping a close eye on SIGN, and why the project actually feels grounded to me. It doesn’t pretend we’re all going to suddenly act like perfectly predictable network nodes. Instead, it’s trying to build a fluid, contextual version of credibility that actually travels with you.

Turning Claims into Portable Proof

If I had to boil down what SIGN actually does, it’s this: it turns claims into portable proof. Think about how annoying the internet is right now. Every time you log into a new platform, try to use a financial service, or join a crypto community, you have to prove who you are from scratch. It’s a massive waste of time, and worse, it keeps trust trapped on isolated islands.

SIGN flips the script. It lets verifiable claims (attestations) live on their own, outside of any single app. It sounds simple, but it changes how systems talk to each other: any app can check the same claim for itself instead of asking you to prove it all over again.

Beyond Crypto: Real-World Potential

Take healthcare, for example. Moving medical records around is a nightmare. Your history is scattered across doctors, labs, and insurers who can barely communicate. Imagine a SIGN-like model here: you wouldn’t have to hand over your entire medical file just to prove one thing. You could just share a cryptographic stamp saying, "I’m eligible for this treatment," or "I have this specific condition," while keeping everything else private. It’s the perfect sweet spot between protecting your data and actually getting things done.
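One common way to get this "share one thing, hide the rest" behavior is salted hash commitments, so here's a minimal sketch of that idea. I'm not claiming this is how SIGN does it. The record, field names, and helper functions are all hypothetical, and in a real system the issuer would also sign the commitments.

```python
import hashlib
import secrets

def _digest(salt: str, field: str, value: object) -> str:
    return hashlib.sha256(f"{salt}:{field}:{value}".encode()).hexdigest()

def commit(record: dict) -> tuple[dict, dict]:
    """Issuer side: publish one salted hash per field, hand the salts to the patient."""
    salts = {field: secrets.token_hex(16) for field in record}
    commitments = {field: _digest(salts[field], field, value) for field, value in record.items()}
    return commitments, salts

def disclose(record: dict, salts: dict, field: str) -> dict:
    """Patient side: reveal a single field together with its salt."""
    return {"field": field, "value": record[field], "salt": salts[field]}

def check(commitments: dict, disclosure: dict) -> bool:
    """Verifier side: recompute the hash for the revealed field only."""
    expected = commitments.get(disclosure["field"])
    actual = _digest(disclosure["salt"], disclosure["field"], disclosure["value"])
    return expected == actual

record = {"eligible_for_treatment": True, "condition": "asthma", "insurer": "ExampleCare"}
commitments, salts = commit(record)

proof = disclose(record, salts, "eligible_for_treatment")
print(check(commitments, proof))   # True, and the other two fields stay hidden

forged = {**proof, "value": False}
print(check(commitments, forged))  # False
```

Notice that the patient can only reveal fields the issuer chose to put in the record in the first place.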

I see the exact same thing happening with AI. Lately, everyone is rightfully stressing over where training data comes from. Is it ethically sourced? Was it tampered with? Right now, we basically just have to cross our fingers and trust the big institutions. But what if datasets carried verifiable, tamper-evident receipts of their origin and usage rights? Instead of blind trust, systems could check the proof for themselves. SIGN fits neatly into this future where data brings its own verifiable history along for the ride.
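A bare-bones version of such a receipt might look like the sketch below. The receipt format, the source name, and the license value are assumptions for illustration. In practice the receipt itself would need to be signed by whoever vouches for it.

```python
import hashlib

def make_receipt(data: bytes, source: str, license_name: str) -> dict:
    """Record a fingerprint of the dataset alongside its claimed origin and license."""
    return {
        "sha256": hashlib.sha256(data).hexdigest(),
        "source": source,          # a claim, not something the hash can prove
        "license": license_name,   # likewise a claim
    }

def matches_receipt(data: bytes, receipt: dict) -> bool:
    """Check that the bytes in hand are the bytes the receipt was issued for."""
    return hashlib.sha256(data).hexdigest() == receipt["sha256"]

dataset = b"label,text\n1,hello\n0,goodbye\n"
receipt = make_receipt(dataset, source="hypothetical-lab", license_name="CC-BY-4.0")

print(matches_receipt(dataset, receipt))                    # True
print(matches_receipt(dataset + b"1,injected\n", receipt))  # False
```

The hash catches tampering. The origin and license fields are only as trustworthy as whoever filled them in.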

Solving for Human Coordination

What gives me a lot of hope is that SIGN isn’t just geeking out over the tech layer; it’s tackling real human coordination problems. Look at token airdrops. How many times have we seen projects get drained by bots and farmers gaming the system? It’s basically a lottery right now. But if we use attestations to prove real participation and contribution, suddenly token distribution becomes intentional. You reward the right people.

The Reality Check: Friction and Governance

But let’s be real—there are some serious hurdles here:

The Adoption Hurdle: I’ve seen incredibly brilliant tech die just because nobody actually used it. For SIGN to win, developers have to build with it, and users have to interact with it without even noticing. If it feels clunky, people will bail immediately. The best version of SIGN is invisible.

The Governance Trap: Who actually gets to decide what a "valid" attestation is? Decentralization sounds great on paper, but in the real world, big players usually end up setting the rules. If a small club gets to define what makes someone "credible," we’re just recreating the same gatekeeping we already have in traditional finance.

Human Nature: Even with rock-solid math, people will find a way to game the system, whether by creating misleading claims or exploiting loopholes. But I appreciate that SIGN embraces this reality instead of hiding from it. By making attestations transparent, it’s much easier to spot the inconsistencies early. It won’t prevent all failures, but it makes the silent, catastrophic ones much harder to hide.

Why 2026 is the Right Time

Looking at the landscape right now in 2026, the timing feels incredibly right. The AI world is screaming for data integrity, healthcare is desperate for interoperability, and the crypto space is finally maturing past pure speculation into actual, useful infrastructure. SIGN sits right at the intersection of all three.

Still, I’m not getting ahead of myself. A great narrative doesn’t guarantee a great product, and execution is everything. The ultimate test for SIGN isn’t whether it can build a cool attestation system. It’s whether it can quietly become the default trust layer of the internet without anyone even realizing it’s there.

@SignOfficial #SignDigitalSovereignInfra #sign $SIGN