There was a moment when I looked at a transaction that had already been confirmed, and for some reason, I didn’t move on right away. Everything was technically correct: the signature checked out, the data was there, nothing looked unusual. But I still paused. I remember thinking, “I can see that this happened… but do I really understand what I’m trusting here?” It wasn’t doubt exactly, just a quiet feeling that verification alone didn’t fully answer the question in my head.

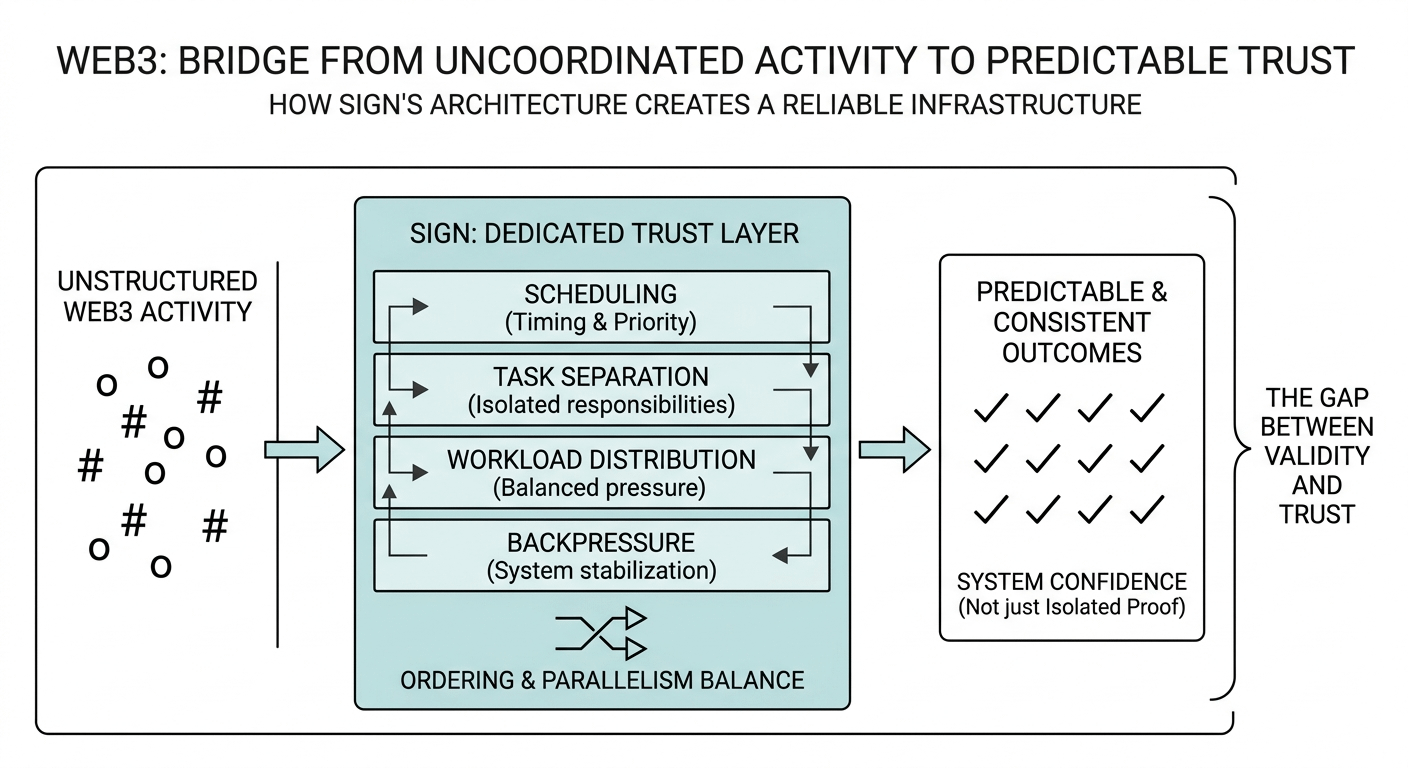

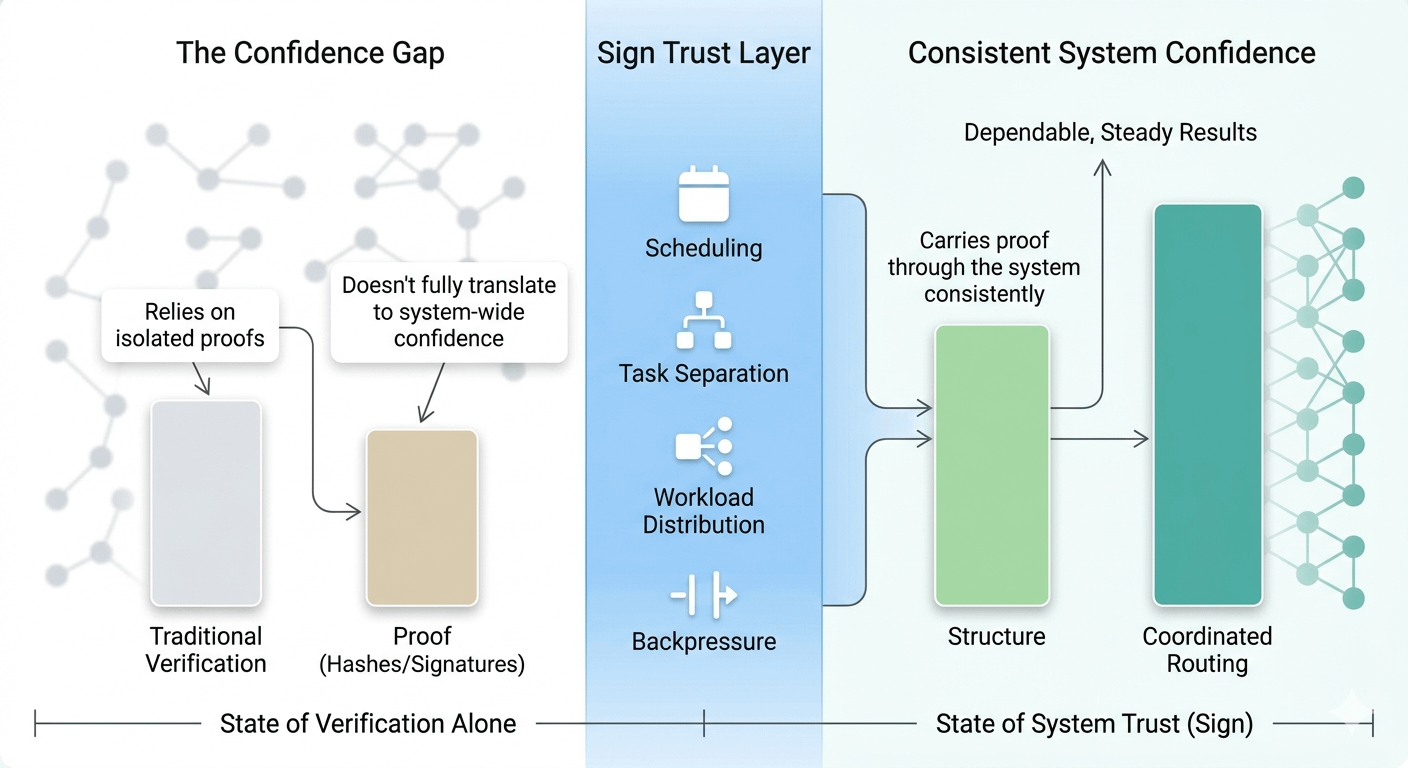

After experiencing that a few times, I started paying more attention to how often this happens in Web3. We rely heavily on proof (hashes, signatures, confirmations), but what I noticed is that proof doesn’t always translate into confidence. There’s a subtle gap between something being valid and something feeling trustworthy. That gap usually shows up when systems are under pressure: when transactions overlap, when data arrives out of order, or when different parts of the network interpret things slightly differently.

I think of it like a large postal system. Every package gets scanned and stamped at each checkpoint, so technically everything is verified. But if the routing between centers isn’t well coordinated, or if timing becomes inconsistent during busy periods, the system starts to feel unreliable even if every individual scan is correct. Trust, in that sense, isn’t just about confirmation. It’s about how smoothly everything connects together.

When I look at how Sign approaches this, what caught my attention is that it seems to focus on that missing layer, the part between isolated proofs and overall system confidence. It doesn’t feel like it treats trust as something that automatically emerges. Instead, the design seems to acknowledge that trust needs structure. From a system perspective, that means thinking about how information flows, not just how it’s verified.

What interests me more is how that idea shows up in the architecture. Scheduling plays a role in deciding when things enter the system, which becomes important when activity isn’t evenly distributed. Task separation keeps different responsibilities from interfering with each other, so one process doesn’t quietly slow everything else down. Workload distribution helps spread pressure across the network, and backpressure gives the system a way to stabilize itself instead of breaking under stress.
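To make the backpressure idea concrete for myself, I wrote a tiny sketch. This is not Sign’s implementation, and the names `BoundedInbox`, `offer`, `take`, and `submit_all` are my own hypothetical ones. It only shows the general pattern: a queue with a fixed capacity that tells the producer "not now" instead of growing until something breaks.

```python
from collections import deque


class BoundedInbox:
    """Hypothetical fixed-capacity inbox (illustration only, not a real Sign API)."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.items = deque()

    def offer(self, item):
        # Backpressure: refuse new work when full so the producer can slow down,
        # rather than letting the queue grow without bound.
        if len(self.items) >= self.capacity:
            return False
        self.items.append(item)
        return True

    def take(self):
        # Return the oldest item, or None when the inbox is empty.
        return self.items.popleft() if self.items else None


def submit_all(inbox, tasks):
    """Try to enqueue every task; return the ones the inbox pushed back on."""
    deferred = []
    for task in tasks:
        if not inbox.offer(task):
            deferred.append(task)
    return deferred


inbox = BoundedInbox(capacity=2)
deferred = submit_all(inbox, ["a", "b", "c"])
```

With a capacity of two, the third task is handed back to the caller rather than silently piling up. Once a consumer calls `take()`, there is room again and the deferred task can be retried.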

Then there’s the balance between ordering and parallelism. Real-world activity doesn’t happen in neat sequences, but systems still need to create a sense of order. Too much strict ordering can slow things down. Too much parallelism can make outcomes feel inconsistent. In my experience watching networks, the systems that feel the most reliable are the ones where you don’t notice this balance at all; it just works in the background.
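One common way to strike that balance, as far as I understand it, is to keep strict order only where it matters and run everything else side by side. The sketch below assumes a made-up event shape `(account, op, value)` and hypothetical helpers `apply_in_order` and `process`; it is an illustration of the pattern, not a description of how Sign or any particular chain does it.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def apply_in_order(events):
    """Apply one account's events strictly in arrival order."""
    balance = 0
    for op, value in events:
        if op == "set":
            balance = value
        elif op == "add":
            balance += value
    return balance


def process(events, workers=4):
    # Group by account, preserving arrival order inside each group.
    by_account = defaultdict(list)
    for account, op, value in events:
        by_account[account].append((op, value))

    # Accounts are independent of each other here, so each group can run
    # in parallel while its own events stay sequential.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {
            account: pool.submit(apply_in_order, group)
            for account, group in by_account.items()
        }
        return {account: future.result() for account, future in futures.items()}


# Hypothetical interleaved stream: order matters within an account
# ("set" then "add" differs from "add" then "set"), but not across accounts.
events = [
    ("alice", "set", 10),
    ("bob", "set", 1),
    ("alice", "add", 5),
    ("bob", "add", 2),
]
balances = process(events)
```

The outcome for each account is the same no matter how the threads are scheduled, because nothing that needs ordering is ever split across workers.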

The more I reflect on it, the more I realize that Web3 doesn’t just need ways to prove things happened. It needs a way to carry that proof through the system in a way that feels consistent and dependable over time. That’s where a dedicated trust layer starts to make sense, not as an extra feature, but as something foundational.

A reliable system, at least from what I’ve seen, isn’t the one that simply produces correct results. It’s the one that makes those results feel steady, even when everything behind the scenes is complex or unpredictable. Good infrastructure doesn’t ask you to think about it. It just quietly holds everything together.