I used to believe governance in crypto was something systems added once they matured.

Build the protocol first. Let users come. Then layer governance on top to manage growth. It felt like a natural sequence, almost inevitable. If a system worked, coordination would follow.

But over time, that assumption started to feel incomplete.

What unsettled me wasn’t governance failing. It was governance existing without consequence. Systems had proposals, votes, and frameworks. But very little of it shaped behavior in a durable way.

And that gap was hard to ignore.

Looking closer, the problem wasn’t obvious at first.

Everything appeared functional. Interfaces were clean. Participation metrics were visible. Communities seemed active. But behavior told a different story.

The same wallets dominated outcomes. Most users interacted once, then disengaged. Governance remained optional, available, but rarely consequential.

And optional systems tend to be ignored.

What emerged wasn’t overt centralization, but something quieter. Influence concentrated not through control, but through absence. When most participants don’t act, coordination collapses into a small, active minority.

The ideas sounded important (decentralization, coordination, collective input), but they didn’t translate into consistent participation.

It felt less like governance.

More like simulation.

That’s when my framework started to shift.

I stopped evaluating governance as a feature and began evaluating it as behavior.

Instead of asking whether a system had governance, I started asking whether governance actually shaped outcomes over time.

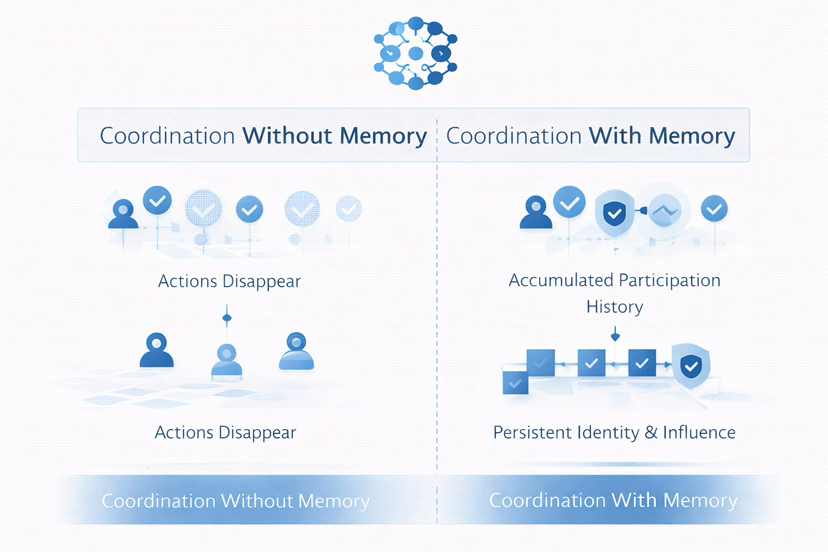

Metrics like voter turnout or proposal counts became less meaningful. What mattered was continuity. Did users return? Did their actions accumulate? Did the system remember anything about participation?

Most systems, I realized, don’t remember.

They reset.

This is where @SignOfficial entered my thinking, not as a solution, but as a different starting point.

At first, it didn’t resemble governance at all.

There were no familiar voting interfaces or token-weighted mechanisms. No emphasis on episodic participation. It felt understated, almost too foundational to notice.

But upon reflection, that was the point.

$SIGN wasn’t asking how to improve governance.

It was asking a more structural question:

What if coordination didn’t depend on optional participation at all?

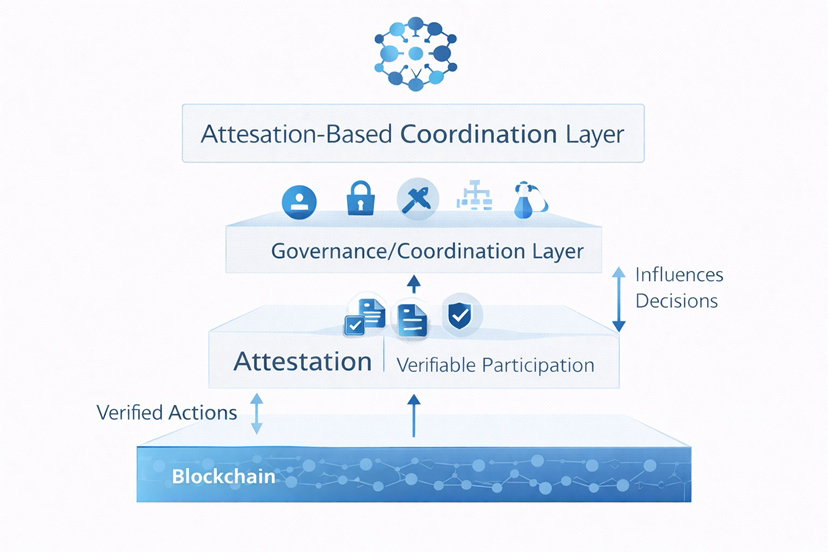

The shift becomes clearer at the level of design.

Most governance systems measure ownership. Influence is derived from what you hold.

#SignDigitalSovereignInfra begins somewhere else.

It introduces attestations: signed, verifiable records of actions, roles, or claims. Not symbolic inputs, but cryptographically provable data that any system can independently verify.

This changes the unit of participation.

Instead of asking who holds what, the system starts tracking who did what and whether that action can be verified.
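To make "signed, verifiable record" concrete, here is a minimal sketch of an attestation that anyone holding the issuer's public key can check. Everything in it is an assumption made for illustration: the claim fields, the helper names, and the signature scheme. It uses a one-time Lamport signature only because that needs nothing beyond the Python standard library; it is not how Sign implements attestations, and a real system would use a standard signature scheme.

```python
import hashlib
import json
import secrets


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _bits(message: bytes) -> list:
    # The 256 bits of the message digest, most significant bit first.
    digest = _h(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def keygen():
    # Private key: 256 pairs of random secrets. Public key: their hashes.
    private_key = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public_key = [(_h(a), _h(b)) for a, b in private_key]
    return private_key, public_key


def sign(private_key, message: bytes) -> list:
    # Reveal one secret from each pair, chosen by the matching digest bit.
    # A Lamport key must only ever sign one message.
    return [private_key[i][bit] for i, bit in enumerate(_bits(message))]


def verify(public_key, message: bytes, signature) -> bool:
    # Needs only public data: hash each revealed secret and compare.
    if len(signature) != 256:
        return False
    return all(
        _h(revealed) == public_key[i][bit]
        for i, (bit, revealed) in enumerate(zip(_bits(message), signature))
    )


def encode(claim: dict) -> bytes:
    # Canonical encoding so issuer and verifier hash identical bytes.
    return json.dumps(claim, sort_keys=True, separators=(",", ":")).encode()


# Hypothetical claim: "who did what", not "who holds what".
issuer_private, issuer_public = keygen()
claim = {"subject": "0xabc", "action": "reviewed_proposal", "ref": "proposal-17"}
signature = sign(issuer_private, encode(claim))

print(verify(issuer_public, encode(claim), signature))     # True
tampered = dict(claim, action="authored_proposal")
print(verify(issuer_public, encode(tampered), signature))  # False
```

The verifier never contacts the issuer. It only needs the record, the signature, and the public key, which is what lets a record be checked outside the system that created it.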

But what matters isn’t just that actions are recorded.

It’s what happens to them.

These attestations are:

- persistent
- portable
- verifiable

They don’t disappear after a single interaction. They can be reused across systems. And their credibility depends not just on existence, but on who issued them and how they’re validated.

And importantly, this verification is permissionless.

No single authority defines trust. Any system can read and validate these records without recreating context from scratch.
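One way to picture "no single authority defines trust" is two applications reading the same records and applying their own issuer policies. The sketch below is illustrative only: the `Attestation` fields, the issuer names, and the `accepted` helper are hypothetical, and the `signature_valid` flag stands in for a cryptographic check assumed to have happened elsewhere.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    issuer: str
    subject: str
    claim: str
    signature_valid: bool  # assumed outcome of a signature check done elsewhere


def accepted(records, trusted_issuers):
    # A record counts only if it verifies AND this reader trusts its issuer.
    return [r for r in records if r.signature_valid and r.issuer in trusted_issuers]


# The same shared records, readable by any application.
records = [
    Attestation("dao-a", "alice", "voted", True),
    Attestation("dao-b", "alice", "shipped_code", True),
    Attestation("unknown", "alice", "core_team", True),
    Attestation("dao-a", "bob", "voted", False),  # fails verification
]

# Each application chooses its own trusted issuers; neither asks permission.
app_one = accepted(records, {"dao-a"})
app_two = accepted(records, {"dao-a", "dao-b"})

print(len(app_one))  # 1
print(len(app_two))  # 2
```

The record from the unknown issuer verifies but is accepted by neither application, which is the point about credibility depending on who issued a record and not only on its existence.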

To make sense of it, I had to simplify it in practical terms.

Most governance systems today resemble meetings you’re invited to attend. You can vote if you choose to. If you don’t, the system still moves forward, often without you.

Sign feels different.

It resembles a system where your role is continuously reflected through your actions. Where influence isn’t something you activate occasionally, but something the system derives from what you consistently contribute.

Not episodic governance.

Continuous coordination.
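As a rough illustration of influence derived from consistent contribution, the sketch below scores each participant by the number of distinct periods in which they have at least one verified record. The scoring rule, the epoch numbering, and the sample history are all hypothetical; nothing here describes how Sign or any specific protocol actually weights participation.

```python
from collections import defaultdict

# Hypothetical verified records: (subject, epoch, action).
history = [
    ("alice", 1, "voted"),
    ("alice", 2, "reviewed"),
    ("alice", 3, "voted"),
    ("bob", 3, "voted"),
    ("bob", 3, "voted"),
    ("bob", 3, "commented"),
]


def consistency_scores(records):
    # Count distinct epochs with activity, so a burst of actions in a
    # single epoch counts once while sustained activity accumulates.
    epochs = defaultdict(set)
    for subject, epoch, _action in records:
        epochs[subject].add(epoch)
    return {subject: len(seen) for subject, seen in epochs.items()}


scores = consistency_scores(history)
print(scores["alice"])  # 3
print(scores["bob"])    # 1
```

Both participants have three records, but only one of them shows up across three periods. A rule like this rewards returning over a single burst of activity, which is the difference between episodic and continuous participation.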

What stood out to me wasn’t the mechanism itself.

It was what this structure signals.

Most systems today separate activity from authority. You can be active without influence, or influential without participation.

Sign begins to compress that gap.

By anchoring coordination in verifiable behavior, it aligns influence with contribution over time. Not perfectly, but more transparently.

And transparency changes incentives.

Because once behavior is recorded and reusable, participation is no longer invisible.

Stepping back, this connects to a deeper limitation in crypto.

We’ve removed centralized trust, but we haven’t fully replaced how trust operates in practice.

Because trust isn’t just rules.

It’s patterns:

- repeated interaction
- visible contribution
- consistency over time

Without these, systems feel stateless even when they’re technically decentralized.

And stateless systems don’t retain participants.

This becomes more pronounced as systems scale.

Early coordination relies on shared context and informal alignment. But as systems grow, that breaks down.

Without memory, participation resets.

Without structure, coordination fragments.

Attestations don’t just add data.

They preserve continuity.

And continuity changes how systems behave.

Of course, the market doesn’t reward this immediately.

Attention flows toward what is visible: price, liquidity, rapid growth. Systems building coordination layers tend to move quietly and are often overlooked.

But that creates a distortion.

We start optimizing for what can be measured quickly, not what compounds over time.

And most of what compounds is not immediately visible.

Still, this approach isn’t without risk.

Enforced coordination requires clarity. Users need to understand how their actions translate into influence. Without that, participation remains shallow.

There’s also a balance to maintain. Too much structure can reduce accessibility. Too little, and systems revert to optional behavior.

And perhaps most critically, this only works if it extends beyond a single system.

Portability matters.

If attestations aren’t recognized across applications, their value remains limited.

Coordination only becomes meaningful when it is shared.

This leads to a broader question.

Can systems replace trust, or do they simply reshape it?

Technology can verify actions, but it doesn’t assign meaning. That still depends on context, interpretation, and collective recognition.

In that sense, coordination isn’t just enforced technically.

It’s reinforced socially.

So I’ve started to look for different signals.

Not whether governance exists, but whether it is unavoidable.

Not whether users can participate, but whether their participation persists.

Not whether decisions are made, but whether those decisions reflect accumulated, verifiable behavior.

These signals are quieter.

But they are harder to fake.

In the end, my perspective has shifted in a way I didn’t expect.

I no longer see governance as something systems add.

I see it as something systems either encode or fail to.

Sign may or may not become the defining model.

But it clarified something important:

The future of coordination isn’t optional governance.

It’s systems where participation is recorded, verified, and carried forward, whether or not users explicitly engage with governance.

Not enforced through control.

But enforced through structure.

And that distinction feels subtle at first.

Until you realize it changes everything about how systems actually hold together.