SIGN caught my attention not because it felt revolutionary, but because it sat in a place that felt unresolved.

Not identity. Not tokens. But the uncomfortable space in between.

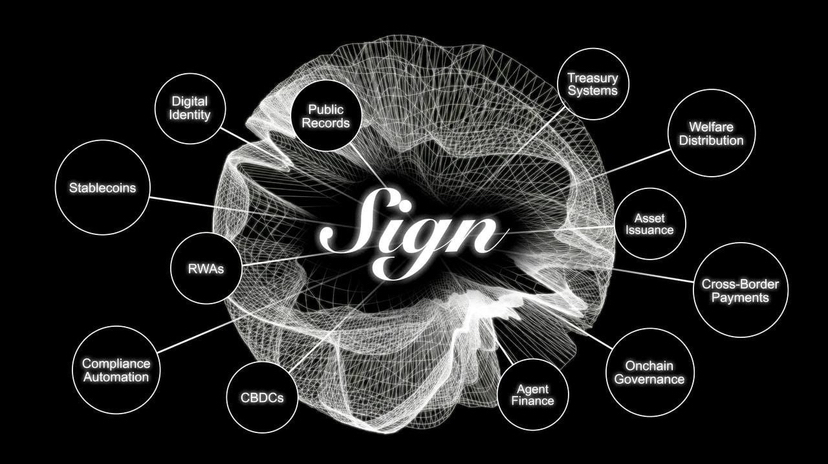

At a surface level, the idea behind SIGN is almost obvious: verifiable credentials. A way to prove something about yourself (your work, your achievements, your history) in a way that others can trust without needing to rely on centralized platforms.

We already have fragments of this everywhere. Degrees, certificates, GitHub commits, work history, social graphs. Proof exists. In abundance.

But it’s fragmented.

Your credibility is scattered across platforms that don’t talk to each other, systems that don’t interoperate, and contexts that don’t translate. What counts as proof in one place is meaningless in another. And more importantly, none of it really belongs to you in a portable sense.

You don’t carry your credibility. You rebuild it, over and over again.

SIGN is trying to change that.

What makes it interesting isn’t just verification. It’s portability.

The idea that proof—if structured correctly—can move with you. That credentials can exist independently of the platforms that issued them. That you can selectively reveal what matters, when it matters, without exposing everything else.

That last part is where things stop being obvious and start becoming uncomfortable.

Because trust, in the real world, has never been cleanly separable from exposure.

We tend to equate credibility with visibility. The more someone reveals, the more we trust them. The more data, the more confidence. But that model doesn’t scale well in a world where data is permanent, searchable, and increasingly weaponized.

So SIGN leans into a different idea: selective disclosure.

Not proving everything, just proving enough.

Take healthcare as an example.

Right now, proving a medical condition usually means handing over far more information than necessary. Full records, history, identifiers. Systems are designed for completeness, not restraint.

But what if you could prove you have a condition (say, eligibility for a treatment) without exposing your entire medical history?

That’s the kind of shift SIGN hints at. Proof without oversharing.
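To make "proof without oversharing" concrete, here is a minimal sketch of one common way selective disclosure can be built: salted hash commitments. This is not SIGN's actual protocol. The function names, the record fields, and the issuer key are all hypothetical, and HMAC stands in for a real public-key signature only so the example runs on the Python standard library.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical issuer key. HMAC is a stdlib stand-in for a real
# public-key signature, so in this sketch the verifier shares the key.
ISSUER_KEY = b"demo-issuer-key"


def commit(name, value, salt):
    """Salted SHA-256 digest of one claim; the salt blocks guessing."""
    payload = json.dumps([salt, name, value]).encode()
    return hashlib.sha256(payload).hexdigest()


def sign(digests):
    """Issuer tag over the list of digests (stand-in for a signature)."""
    message = json.dumps(digests).encode()
    return hmac.new(ISSUER_KEY, message, hashlib.sha256).hexdigest()


def issue(claims):
    """Issuer: commit to every claim, then sign only the digests."""
    salts = {name: secrets.token_hex(16) for name in claims}
    digests = sorted(commit(n, v, salts[n]) for n, v in claims.items())
    credential = {"digests": digests, "tag": sign(digests)}
    return credential, salts  # the salts stay private with the holder


def disclose(claims, salts, name):
    """Holder: reveal a single claim together with its salt."""
    return {"name": name, "value": claims[name], "salt": salts[name]}


def verify(credential, disclosure):
    """Verifier: check the issuer tag, then check the revealed claim."""
    if not hmac.compare_digest(sign(credential["digests"]), credential["tag"]):
        return False
    digest = commit(disclosure["name"], disclosure["value"], disclosure["salt"])
    return digest in credential["digests"]


# Illustrative record; only one field is ever shown to the verifier.
record = {
    "eligible_for_treatment": True,
    "diagnosis": "example-condition",
    "patient_id": "example-id",
}
credential, salts = issue(record)
proof = disclose(record, salts, "eligible_for_treatment")
print(verify(credential, proof))
```

The verifier sees one claim and a list of opaque digests. The other fields stay hidden because their salts are never revealed, and a changed value fails the check because its digest no longer matches anything the issuer signed.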

It sounds simple, but it forces a deeper question: how much should someone need to reveal to be trusted?

Or consider research and AI.

As AI-generated content becomes indistinguishable from human work, the ability to prove authorship or contribution starts to matter more. But at the same time, the raw material (training sets, proprietary methods, early drafts) can be sensitive.

There’s a tension there.

You want to prove you did the work without exposing how you did it.

SIGN’s model (attestations that can be verified without full transparency) starts to make sense in that context. It allows contribution to be acknowledged without forcing disclosure that could compromise privacy or competitive edge.

Hiring is another obvious case.

Resumes are, at best, loosely verified narratives. References help, but they’re subjective. Platforms try to fill the gap, but they end up becoming gatekeepers.

What if reputation could be built from verifiable signals instead?

Not a full identity dump. Not every job, every detail—but a set of credentials that are cryptographically verifiable and contextually relevant.

You prove what matters for the role, not your entire life history.

That’s a very different model of trust.

Underneath all of this, SIGN combines two things that don’t often work well together: verifiable credentials and tokenized incentives.

On their own, both are incomplete.

Verification systems without incentives tend to stagnate. They rely on voluntary participation, which usually means slow adoption and limited coverage.

Incentive systems without strong verification get gamed. Quickly. Efficiently. Predictably.

By tying attestations to incentives, SIGN is trying to create a loop where truth is not just recorded, but rewarded and, ideally, harder to fake.

But this is also where the skepticism comes back.

Because any system that assigns value to proof will eventually be optimized for that value.

People will learn how to produce the “right” signals.

Issuers of credentials may accumulate disproportionate power. If certain entities become widely trusted sources of attestations, they effectively become arbiters of credibility.

And once credibility becomes portable, it also becomes persistent.

That has psychological weight.

Right now, identity is somewhat fluid. You can start over, reframe yourself, move between contexts. But if your reputation becomes a permanent, verifiable layer that follows you everywhere, that flexibility starts to shrink.

What does it mean to make a mistake in a world where proof doesn’t fade?

This is where the broader context matters.

By 2026, the internet feels different. Not fundamentally, but perceptibly.

AI-generated content has blurred the line between real and synthetic. Trust, already fragile, is eroding further. It’s no longer just about misinformation; it’s about uncertainty at scale.

At the same time, there’s a growing push toward privacy-preserving technologies: zero-knowledge proofs, selective disclosure systems, mechanisms that allow verification without exposure.

People want to be seen accurately, but not completely.

That’s a subtle but important distinction.

SIGN sits right in the middle of that shift.

It doesn’t try to eliminate trust issues. It tries to structure them.

And maybe that’s the most honest way to look at it.

Not as a solution, but as an attempt to formalize something that has always been messy.

Trust has never been purely technical. It’s contextual, emotional, sometimes irrational. Systems can support it, but they can’t fully define it.

What SIGN does is take one layer of that complexity, the layer of proof, and make it more portable, more flexible, and potentially more aligned with how people actually want to share information.

Whether that’s enough is still unclear.

If it works, it could reshape how credibility flows across systems. It could reduce friction in how we prove things about ourselves, while giving us more control over what we reveal.

If it doesn’t, it risks becoming just another metric layer. Another system people learn to game. Another abstraction that looks meaningful but doesn’t fundamentally change behavior.

Right now, it sits somewhere in between.

And maybe that’s exactly where it should be.