SIGN: Trying to Turn Trust Into Infrastructure

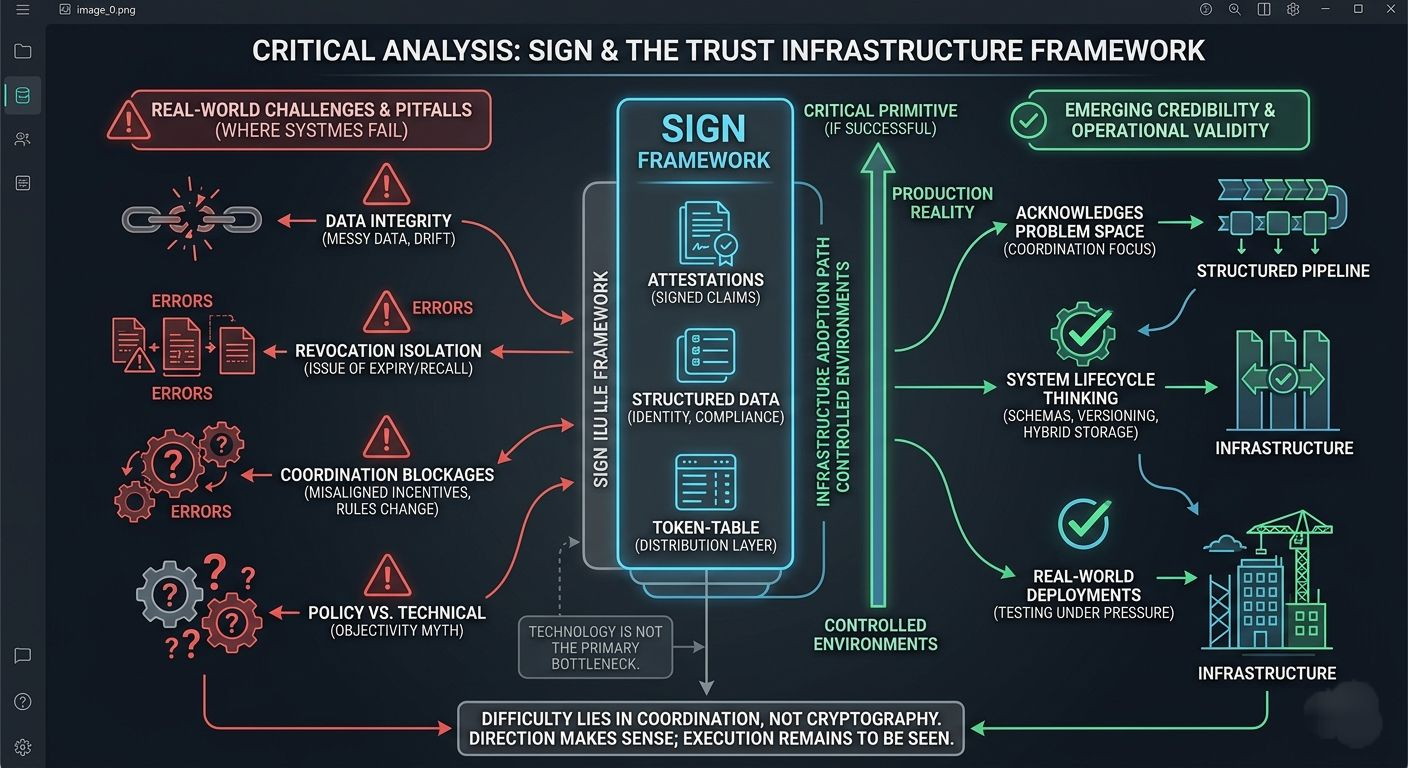

SIGN has been showing up more often in conversations lately, usually framed as part of some emerging “trust infrastructure” layer.

I don’t buy that framing. Not yet.

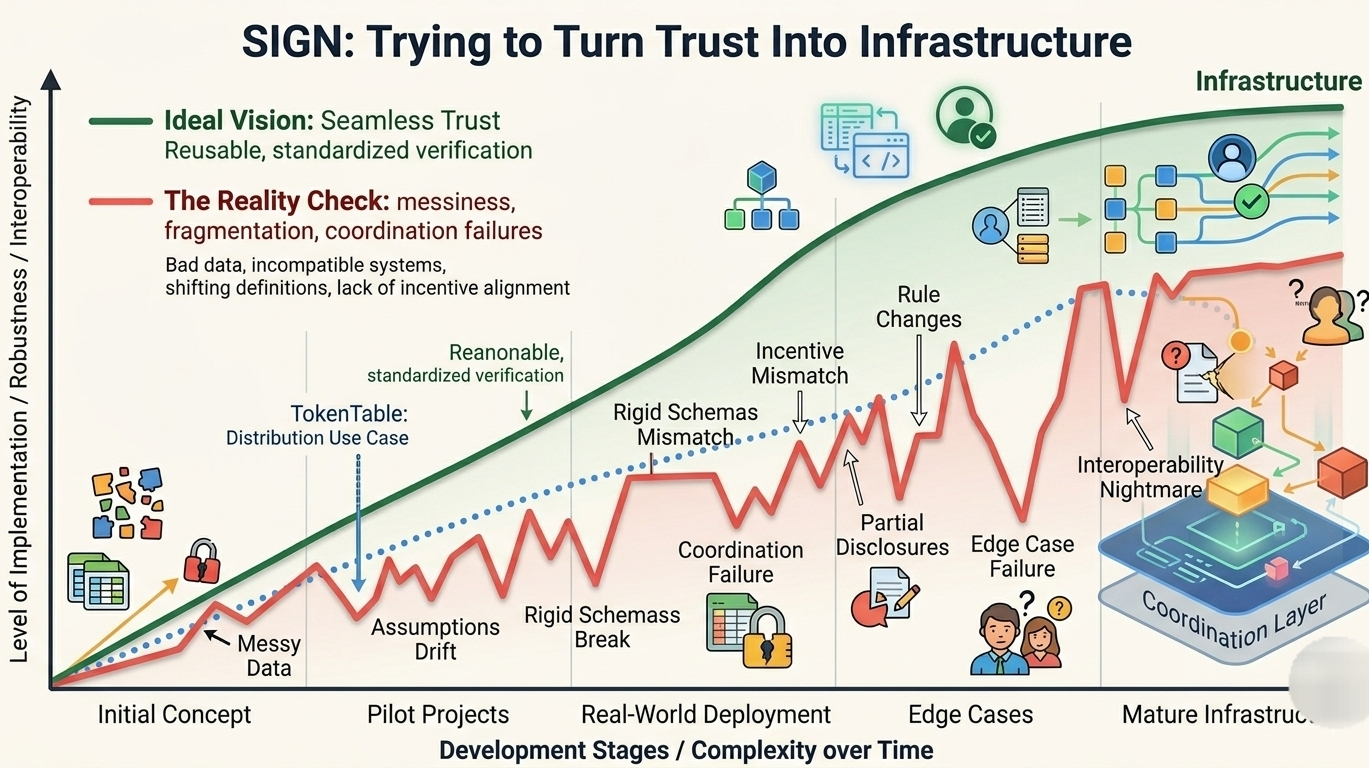

I’ve worked around identity systems, compliance workflows, and distribution pipelines long enough to know how these things behave under pressure. They look fine in controlled environments. Then reality shows up. Data gets messy. Assumptions drift. Systems stop agreeing with each other. I’ve seen this fail in production more times than I can count.

What SIGN is doing isn’t new in spirit. Standardize trust. Make verification reusable. Cut down redundant checks. That idea has been pitched across enterprise software, government infrastructure, and Web3 stacks for years.

Most of those efforts break down.

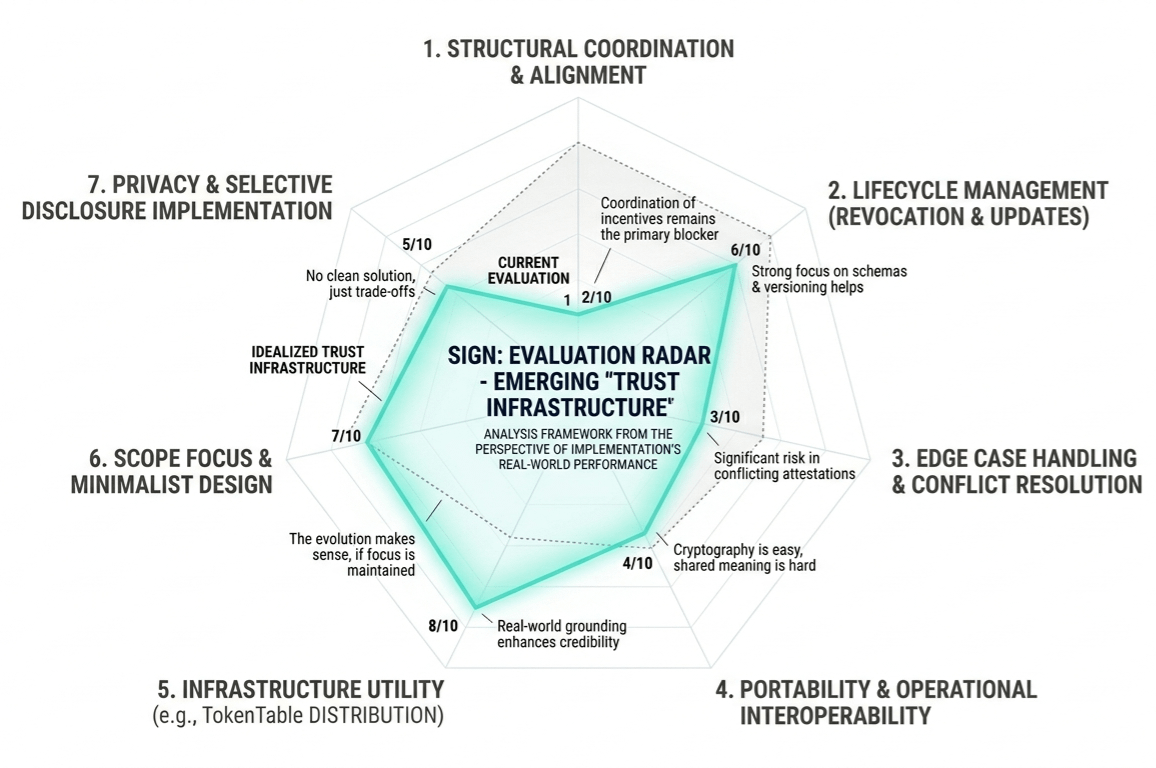

Sometimes it’s bad data. Sometimes it’s rigid schemas that can’t adapt. More often, it’s coordination—systems that simply don’t want to align because their incentives don’t match.

SIGN at least seems to acknowledge that problem space.

At its core, the system is built around attestations. Signed claims. Structured statements that something is true—identity, eligibility, compliance status, whatever the schema defines. The goal is to turn verification into something portable instead of something you redo every time.
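To make "signed claim" concrete, here is a minimal sketch of the idea. It is not SIGN's actual API or data model: the `Attestation` fields, the `issue` and `verify` helpers, and the schema id are all hypothetical, and it uses a shared-secret HMAC from the Python standard library as a stand-in for the public-key signatures a real attestation system would use.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    schema: str      # hypothetical schema id, e.g. "kyc-basic/v1"
    issuer: str      # who is making the claim
    subject: str     # who the claim is about
    claims: dict     # the structured statement itself
    signature: str   # hex digest over the canonical payload


def _payload(schema: str, issuer: str, subject: str, claims: dict) -> bytes:
    # Canonical JSON so issuer and verifier sign and check the same bytes.
    body = {"schema": schema, "issuer": issuer, "subject": subject, "claims": claims}
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def issue(key: bytes, schema: str, issuer: str, subject: str, claims: dict) -> Attestation:
    # Assumption: HMAC stands in for a real asymmetric signature scheme.
    sig = hmac.new(key, _payload(schema, issuer, subject, claims), hashlib.sha256).hexdigest()
    return Attestation(schema, issuer, subject, claims, sig)


def verify(key: bytes, att: Attestation) -> bool:
    expected = hmac.new(
        key, _payload(att.schema, att.issuer, att.subject, att.claims), hashlib.sha256
    ).hexdigest()
    # Constant-time comparison to avoid leaking digest prefixes.
    return hmac.compare_digest(expected, att.signature)
```

The portable part is that any party holding the right key material can run `verify` without repeating the original check. What the sketch cannot show is whether that party trusts the issuer in the first place.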

I understand the appeal.

I’ve also seen where it goes wrong.

Data doesn’t stay clean. Identities aren’t fixed. Rules change constantly. Revocation is where things get ugly, and most systems don’t handle it well. Issuing credentials is easy. Maintaining their validity over time is not.

SIGN doesn’t completely ignore that. Schema versioning, revocation mechanisms, and hybrid storage all suggest the team is thinking about lifecycle, not just issuance. That already puts them ahead of a lot of similar systems I’ve looked at.

But the real problems don’t show up in the happy path.

They show up in edge cases.

Expired credentials that still circulate. Conflicting attestations from different issuers. Partial disclosures that break downstream logic. Systems that interpret the same data differently. This is where “shared trust” tends to fall apart.
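Two of those edge cases are easy to sketch, even if they are hard to resolve. The snippet below is an illustration under assumptions, not anything taken from SIGN: the `Record` shape, the `revoked_ids` set, and both function names are hypothetical. It filters out expired or revoked records, then flags subjects for whom the remaining issuers disagree.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Record:
    id: str
    issuer: str
    subject: str
    claim: str            # e.g. "accredited"
    value: bool
    expires_at: datetime  # timezone-aware expiry


def is_usable(rec: Record, revoked_ids: set, now: datetime) -> bool:
    # A record only counts if it is neither revoked nor past its expiry.
    return rec.id not in revoked_ids and now < rec.expires_at


def find_conflicts(records: list, revoked_ids: set, now: datetime) -> list:
    """Return (subject, claim) pairs where usable records disagree on value."""
    seen = {}
    for rec in records:
        if is_usable(rec, revoked_ids, now):
            seen.setdefault((rec.subject, rec.claim), set()).add(rec.value)
    return sorted(key for key, values in seen.items() if len(values) > 1)
```

Detecting the conflict is the cheap part. Deciding which issuer wins is a policy question that code like this can only surface, not answer.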

Portability sounds good. Interoperability sounds better. Both are hard.

Not because of cryptography. Because of alignment.

Different organizations don’t share the same definition of truth, risk, or accountability. You can standardize formats, but you can’t standardize trust relationships. I’ve seen integrations stall for months over things that had nothing to do with code.

That doesn’t go away just because you introduce attestations.

Then there’s TokenTable—the distribution layer. This is where SIGN starts to feel more grounded.

Because distribution, as it exists today, is a mess.

I’ve seen token allocations run through spreadsheets, glued together with scripts, and finalized with manual wallet filtering hours before execution. I’ve seen bot activity completely distort “fair” distributions. I’ve seen teams rewrite eligibility rules mid-process because they stopped trusting their own data.

It’s fragile. And it breaks often.

Connecting distribution to verified attestations is a logical step. If the inputs are trustworthy, the outputs should be more predictable.

The reality is messier.

Eligibility is rarely objective. “Real user” is not a technical definition. Neither is “meaningful participation.” These are policy decisions, and they change depending on context, incentives, and pressure.

SIGN doesn’t solve that. It gives you a cleaner way to enforce whatever rules you define.
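A rough sketch of that separation, assuming a hypothetical input shape (wallet address mapped to a set of already-verified claim names) and a hypothetical `allocate` helper, neither of which comes from TokenTable: the rule is passed in as a plain function, and the distribution code only enforces it.

```python
from typing import Callable


def allocate(
    attested: dict[str, set[str]],
    rule: Callable[[set[str]], bool],
    pool: int,
) -> dict[str, int]:
    """Split `pool` whole units evenly across wallets that satisfy `rule`."""
    # Sorting keeps the result deterministic regardless of input order.
    eligible = sorted(wallet for wallet, claims in attested.items() if rule(claims))
    if not eligible:
        return {}
    share, remainder = divmod(pool, len(eligible))
    # Hand the indivisible remainder out one unit at a time, in sorted order.
    return {
        wallet: share + (1 if index < remainder else 0)
        for index, wallet in enumerate(eligible)
    }


# The policy lives outside the mechanism: swapping the rule changes who
# qualifies without touching the allocation code.
def strict_rule(claims: set[str]) -> bool:
    return {"human", "active"} <= claims


def loose_rule(claims: set[str]) -> bool:
    return "human" in claims
```

Whether `strict_rule` or `loose_rule` is the right definition of a real user is exactly the question the infrastructure leaves open.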

That’s fine. That’s where infrastructure should stop.

What I find more credible here is the positioning. SIGN isn’t trying to be a front-facing product. It sits underneath. Quietly. If it works, nobody notices. If it fails, everything built on top of it feels it.

I’ve seen systems like this become critical without ever being visible. I’ve also seen them collapse and take entire workflows down with them.

The evolution from document signing into a broader attestation framework makes sense. Once you start treating truth as structured, verifiable claims, the scope expands naturally—identity, compliance, reputation. Same pattern, different domains.

That expansion can go wrong. I’ve seen platforms lose focus and try to do everything. Others manage to stay minimal and become reliable primitives.

SIGN hasn’t fully committed either way yet.

The move toward real-world deployments, such as government systems and regulated environments, is where things will get tested properly. Those environments don’t tolerate ambiguity. Edge cases show up fast. Assumptions get challenged immediately.

Privacy will be another pressure point. Selective disclosure sounds straightforward until you try implementing it across jurisdictions with different legal requirements and incompatible systems. Full transparency doesn’t work. Full opacity doesn’t either.

There’s no clean solution there. Just trade-offs and constant adjustments.

What SIGN is aiming for is straightforward:

Treat trust as something reusable.

Not rebuilt every time. Not locked inside isolated systems. Something that can move across boundaries without losing meaning.

I think that direction makes sense.

I’ve just seen enough systems fail to know how difficult it is to get there.

Because the hardest part isn’t the cryptography or the data model.

It’s coordination.

Getting different systems—and the people behind them—to agree on shared structures of truth is where things slow down, or stop entirely.

Technology helps. It doesn’t solve that.

Still, this is the layer worth working on.

We don’t need faster ways to move data if we can’t trust what’s being moved.

SIGN is at least trying to address that.

That’s enough to keep it on my radar.
