There’s a quiet assumption I keep noticing in Sign, and it’s this idea that trust can be packaged, stored, and then reused later without losing meaning. On paper, that sounds efficient. In practice… I’m not fully convinced it’s that simple.

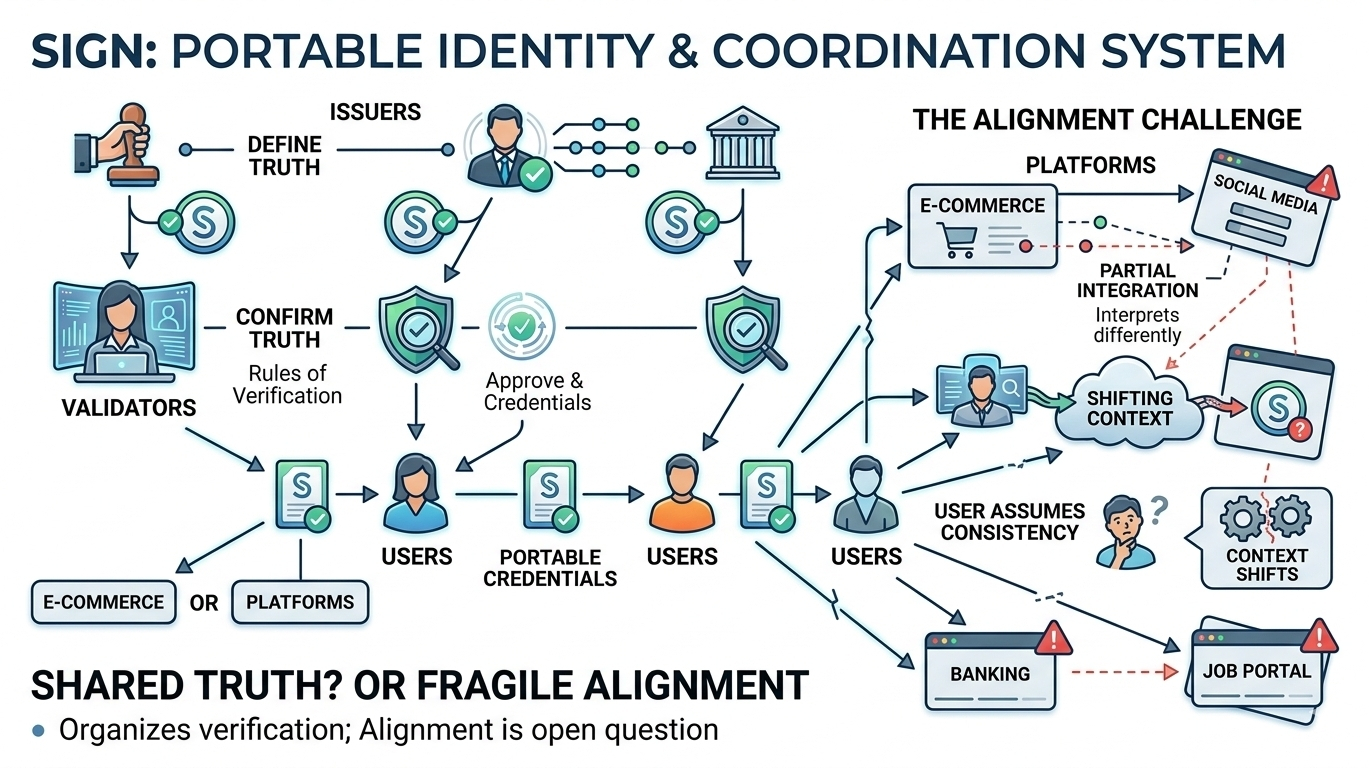

The system splits roles neatly. An issuer creates a credential, validators confirm it, and then I, as a user, just carry it around like a passport for the internet. No repeated checks. No redundant friction. That part feels logical. Almost too logical. Because real-world trust usually isn’t this clean.

What I find interesting is how Sign reduces repeated interaction. Instead of platforms constantly verifying users themselves, they rely on a shared layer of validation. It’s less work for everyone involved. But it also means everyone is leaning on the same underlying assumptions. If those assumptions are slightly off, the effects don’t stay isolated. They spread.

And I keep coming back to this: verification is treated as a moment, not a process. Once something is validated, it’s considered reliable. But reality changes. Context shifts. People change. Systems don’t always reflect that fast enough. So the question becomes… how often does “valid” drift away from “true”?
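To make the “moment versus process” distinction concrete, here is a minimal Python sketch. Everything in it is hypothetical and illustrative: the `Credential` fields, `validate`, `accepted_as_moment`, `accepted_as_process`, and the `max_age` window are my own stand-ins, not Sign’s actual schema or API. It simply shows how the same validated credential can be accepted forever under one model and rejected as stale under another.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class Credential:
    # Hypothetical credential shape for illustration; not Sign's real schema.
    subject: str
    claim: str
    issuer: str
    validated_at: Optional[datetime] = None


def validate(cred: Credential, now: datetime) -> Credential:
    # A validator confirms the claim at a single point in time and stamps it.
    return replace(cred, validated_at=now)


def accepted_as_moment(cred: Credential) -> bool:
    # "Moment" model: once validated, the credential is accepted indefinitely.
    return cred.validated_at is not None


def accepted_as_process(cred: Credential, now: datetime, max_age: timedelta) -> bool:
    # "Process" model: validation goes stale after max_age and must be redone.
    return cred.validated_at is not None and now - cred.validated_at <= max_age


# Usage: one validation, checked again much later under both models.
t0 = datetime(2024, 1, 1)
cred = validate(Credential("alice", "kyc_passed", "issuer_x"), t0)
later = t0 + timedelta(days=400)

print(accepted_as_moment(cred))                               # True
print(accepted_as_process(cred, later, timedelta(days=365)))  # False
```

The hypothetical `max_age` window is where the trade-off would live: a shorter window means more re-checking and more friction, while a longer one leaves more room for “valid” to drift away from “true”.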

There’s also a subtle trade-off here. By making credentials portable, Sign increases usability. But it might also reduce scrutiny. When something is easy to reuse, it’s also easier to trust without questioning. And honestly, I get why the system leans this way. Too much friction kills adoption. But too little friction can blur edges that probably shouldn’t be blurred.

Then there’s the validator layer itself. Incentives exist, penalties exist, but neither eliminates variation. Different validators might interpret edge cases differently. Most of the time, that won’t matter. But sometimes it will. And those “sometimes” moments are usually where systems get tested.

Real-world pressure doesn’t come from theory. It comes from messy execution. A delay in validation, a misissued credential, or a platform interpreting data slightly differently… none of these are dramatic failures. But stack them together, and you start seeing cracks. Not breaks. Just small inconsistencies that slowly matter more over time.

Still, I can’t ignore what Sign is trying to simplify. Repeated verification is inefficient, and solving that does feel necessary. The architecture is clean, the intention is clear, and the direction makes sense.

But I’m left with a quiet tension. Sign assumes that trust, once verified, can travel safely across contexts. I’m not sure trust behaves that way. It might… but only until it doesn’t.

And that uncertainty feels like the real story here.