I used to believe that better identity meant more visibility. If you could prove more about yourself, systems would trust you more. It felt like a clean trade: you give data and you receive access. Over time that belief started to crack, not because the systems failed but because they worked too well.

The hidden problem is not surveillance in the obvious sense. It is the quiet expectation that participation requires exposure. Every login, every credential, every on-chain action leaves a trace that adds to a growing surface. You are not just proving who you are in a moment. You are slowly constructing a version of yourself that others can inspect without limits.

The system does not force you in one step. It nudges you over time until disclosure feels normal.

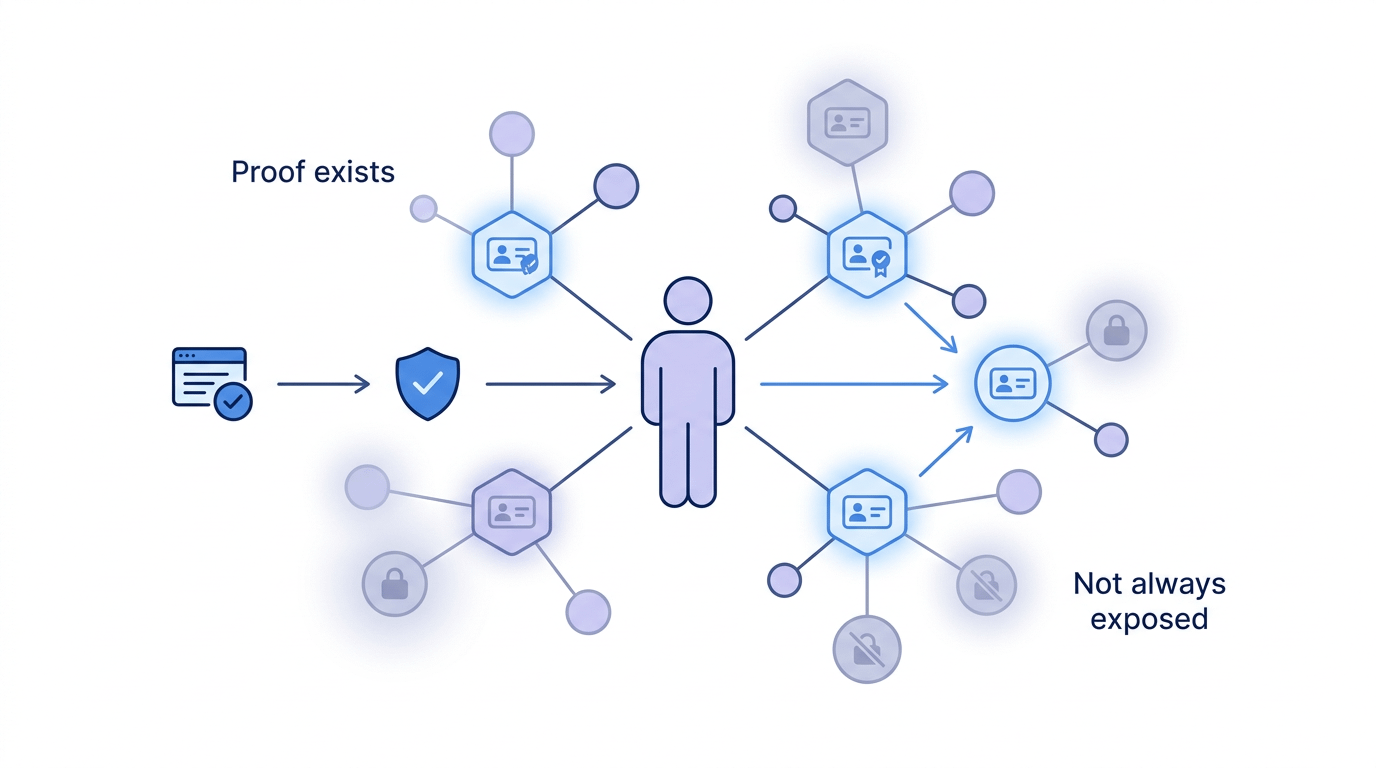

This is where SIGN takes a different path. It does not try to remove proof or weaken verification. It restructures how proof exists. Attestations are not just static records. They are context-bound signals that can be verified when needed without being universally visible.

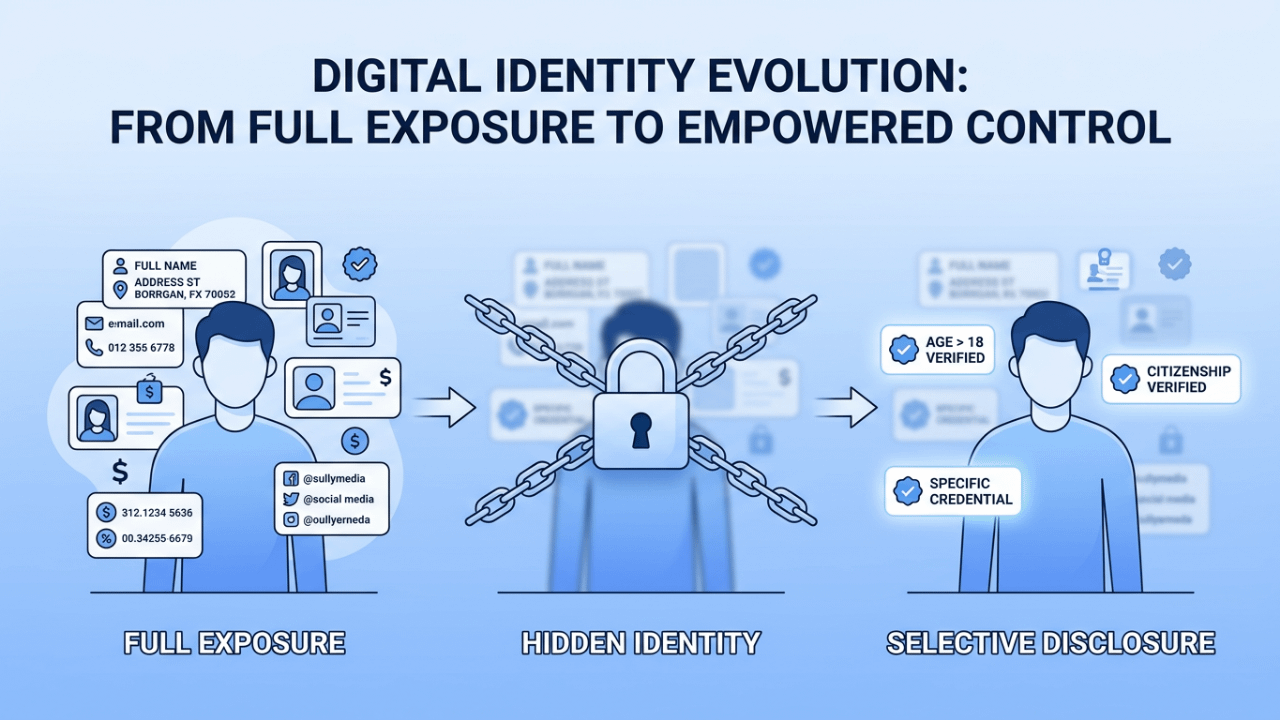

The design shifts identity from a public artifact into a controlled interaction. You hold claims that can be resolved into truth within a specific frame instead of broadcasting them by default.
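One way to picture a claim that stays dormant until invoked is selective disclosure over salted hash commitments. The sketch below is an illustration only and is not SIGN's actual API or protocol: the function names (`commit`, `issue`, `disclose`, `verify`) are hypothetical, and an HMAC with a shared key stands in for the issuer's digital signature, which a real system would make with an asymmetric key.

```python
import hashlib
import hmac
import json
import secrets


def commit(name: str, value: str, salt: bytes) -> str:
    """Salted hash of one claim; it reveals nothing without the salt and value."""
    return hashlib.sha256(salt + json.dumps([name, value]).encode()).hexdigest()


def _tag(commitments: list, issuer_key: bytes) -> str:
    # Stand-in for an issuer signature (assumption: shared-key HMAC for brevity).
    payload = json.dumps(commitments).encode()
    return hmac.new(issuer_key, payload, hashlib.sha256).hexdigest()


def issue(claims: dict, issuer_key: bytes):
    """Issuer commits to every claim and authenticates the set of commitments."""
    salts = {name: secrets.token_bytes(16) for name in claims}
    # Sorting hides which commitment belongs to which claim position.
    commitments = sorted(commit(n, v, salts[n]) for n, v in claims.items())
    attestation = {"commitments": commitments, "tag": _tag(commitments, issuer_key)}
    return attestation, salts  # the salts stay private with the holder


def disclose(claims: dict, salts: dict, name: str) -> dict:
    """Holder reveals exactly one claim, at the moment they choose."""
    return {"name": name, "value": claims[name], "salt": salts[name].hex()}


def verify(attestation: dict, disclosure: dict, issuer_key: bytes) -> bool:
    """Verifier checks the issuer tag, then that the revealed claim was committed."""
    expected = _tag(attestation["commitments"], issuer_key)
    if not hmac.compare_digest(expected, attestation["tag"]):
        return False
    revealed = commit(
        disclosure["name"], disclosure["value"], bytes.fromhex(disclosure["salt"])
    )
    return revealed in attestation["commitments"]


if __name__ == "__main__":
    key = secrets.token_bytes(32)
    claims = {"over_18": "true", "country": "DE", "name": "Alice"}
    attestation, salts = issue(claims, key)
    proof = disclose(claims, salts, "over_18")
    print(verify(attestation, proof, key))  # True
```

The attestation can circulate freely because it contains only hashes. A claim becomes readable only when the holder hands over its salt and value for one specific interaction, and the other claims stay sealed.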

That may sound subtle, but it changes everything. Most systems treat identity as something that must always be ready to show.

SIGN treats identity as something that can remain dormant until invoked. The difference is not technical at first glance. It is behavioral.

When exposure is no longer the default, people begin to act differently. They stop optimizing for what can be seen and start focusing on what actually matters in a given interaction.

Proof should not demand presence. It should respond to intent.

What many miss is that the real leverage here is not privacy alone. It is timing. Timing defines control.

When you decide when a claim becomes visible, you regain ownership over your narrative. Without that control, even the most secure system can still reduce you to a transparent object. With that control, even a simple claim becomes powerful because it exists on your terms.

There is also a deeper implication for trust itself. Trust has long been treated as a function of accumulated data: the more history, the more confidence. But that model assumes that all context is equal. It ignores that relevance changes from one moment to the next. SIGN introduces a different idea.

Trust can be constructed dynamically, based on what is revealed in the moment rather than what is permanently stored. That creates a form of trust that is lighter and more precise.

Exposure is not power. Control over exposure is.

If identity is always visible, it turns into a liability that others can analyze and exploit. If identity is always hidden, it loses its utility and becomes friction. The balance is not a midpoint. It is a mechanism: a way to let truth exist without forcing it into constant display.

We are moving toward a world where digital interactions define reputation at scale. In that world, the architecture of identity will shape behavior more than any policy. If systems continue to reward exposure, people will trade control for access without noticing the cost.

If systems like SIGN gain traction, identity may evolve into something more deliberate, something that reflects choice instead of pressure.

So the real question is not whether we can verify who someone is. That problem is close to solved.

The real question is whether identity will remain something we perform for systems or become something we control within them.