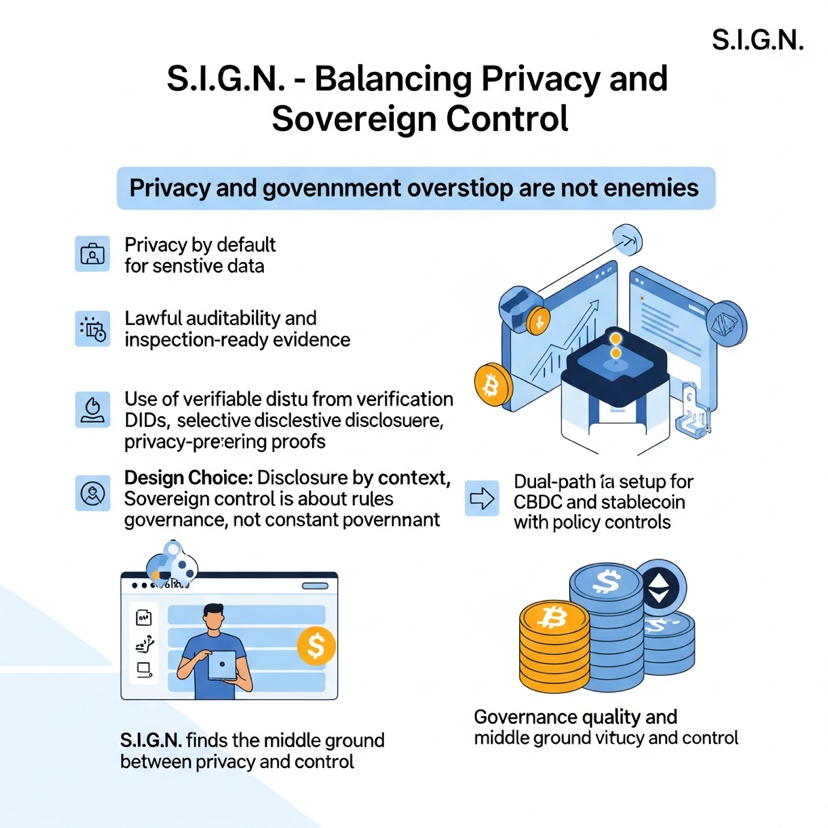

Look, one thing I keep coming back to with SIGN is how it refuses to treat privacy and government oversight like two enemies forced into the same room. Most systems pick a side way too early. They either go full privacy-bro mode so institutions start sweating about who can check anything if things go south, or they crank the control dial to eleven and suddenly “verification” just means surveillance with extra steps. That friction hits hardest in identity systems, payment rails, and benefits programs—exactly where the sensitive stuff actually lives.

What pulls me in about SIGN is that the docs don’t pretend the tension magically vanishes. They straight-up call it sovereign-grade infrastructure for money, identity, and capital, then lay out the rules in plain English: privacy by default for anything sensitive, but also lawful auditability, inspection-ready evidence, and tight operational control over keys, upgrades, and emergency moves. This isn’t some fluffy consumer app pitch. It’s systems thinking, and honestly, that’s the whole point.

The magic happens because they separate disclosure from verification. Their New ID approach uses verifiable credentials, DIDs, selective disclosure, privacy-preserving proofs, revocation checks, and offline presentations. In plain terms, a verifier doesn’t have to query some central database every single time it needs to know something about a person. Instead, the holder proves the exact claim that matters (eligibility, compliance status, a specific identity attribute) without spilling everything else. That’s how privacy stays alive.
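To make "prove one claim, hide the rest" concrete, here is a minimal sketch of selective disclosure using salted hash commitments, loosely in the spirit of SD-JWT. It is not SIGN's actual API: the function names, the `ISSUER_KEY` constant, and the use of an HMAC as a stand-in for a real issuer signature are all assumptions made for illustration.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical shared demo key. A real issuer would sign with an asymmetric key
# so that verifiers never hold signing material.
ISSUER_KEY = b"demo-issuer-key"


def _digest(salt, name, value):
    """Salted hash of a single claim; the digest alone does not reveal the value."""
    payload = json.dumps([salt, name, value], sort_keys=True).encode()
    return hashlib.sha256(payload).hexdigest()


def _sign(digests):
    """Stand-in for the issuer's signature over the list of claim digests."""
    return hmac.new(ISSUER_KEY, "|".join(digests).encode(), hashlib.sha256).hexdigest()


def issue(claims):
    """Issuer side: return (credential, disclosures).

    The credential contains only digests and a signature. The disclosures
    (salt + value per claim) stay with the holder.
    """
    disclosures = {name: (secrets.token_hex(16), value) for name, value in claims.items()}
    digests = sorted(_digest(salt, name, value) for name, (salt, value) in disclosures.items())
    return {"digests": digests, "signature": _sign(digests)}, disclosures


def present(credential, disclosures, reveal):
    """Holder side: attach disclosures only for the claims being revealed."""
    return {"credential": credential, "revealed": {name: disclosures[name] for name in reveal}}


def verify(presentation):
    """Verifier side: return the revealed claims if everything checks out, else None."""
    cred = presentation["credential"]
    if not hmac.compare_digest(cred["signature"], _sign(cred["digests"])):
        return None
    verified = {}
    for name, (salt, value) in presentation["revealed"].items():
        if _digest(salt, name, value) not in cred["digests"]:
            return None  # revealed value does not match what the issuer committed to
        verified[name] = value
    return verified
```

A holder issued `{"name": ..., "national_id": ..., "benefit_eligible": True}` can call `present(credential, disclosures, ["benefit_eligible"])` and the verifier learns that one fact and nothing else. Real deployments would add revocation checks and holder binding, which this sketch leaves out.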

But here’s the part most people miss: SIGN doesn’t stop at privacy. It also demands real inspection-ready evidence. Not fluffy proof, not vibes. Actual records that can answer who approved what, under which authority, when it happened, which rules applied, and what evidence backs the claim later on. That job falls to the Sign Protocol, the evidence layer built on schemas and attestations that can be public, private, hybrid, or even ZK-based when needed.
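One way to picture an inspection-ready record is a schema-checked attestation that carries those answers as explicit fields. The sketch below is my own illustration, not the Sign Protocol data model: the `Schema` and `Attestation` classes, their field names, and the `attest` helper are all hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

ALLOWED_VISIBILITY = {"public", "private", "hybrid", "zk"}


@dataclass(frozen=True)
class Schema:
    schema_id: str
    required_fields: tuple  # keys every attestation under this schema must carry


@dataclass(frozen=True)
class Attestation:
    schema_id: str
    attester: str    # who approved
    authority: str   # under which authority
    issued_at: str   # when it happened (ISO 8601 string)
    ruleset: str     # which rules applied
    data: dict       # the claim itself
    visibility: str = "private"

    def evidence_hash(self):
        """Deterministic fingerprint an auditor can compare against later."""
        body = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(body).hexdigest()


def attest(schema, attester, authority, issued_at, ruleset, data, visibility="private"):
    """Build an attestation, rejecting records that do not satisfy the schema."""
    missing = [name for name in schema.required_fields if name not in data]
    if missing:
        raise ValueError(f"missing fields for {schema.schema_id}: {missing}")
    if visibility not in ALLOWED_VISIBILITY:
        raise ValueError(f"unknown visibility: {visibility}")
    return Attestation(schema.schema_id, attester, authority, issued_at, ruleset, data, visibility)
```

The point of the fingerprint is that a private record can stay private while its hash is anchored somewhere checkable, so a later inspection can confirm the record was not quietly rewritten.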

This design choice is huge. Privacy-preserving verification only works with sovereign control if the sovereign side doesn’t need to see every raw detail all the time. They just need governed access to evidence, audit trails, policy controls, and real authority over the system itself. The docs are crystal clear on this. Private mode is for confidentiality-first programs, but governance still runs through permissioning, membership rules, and audit access policies. Hybrid mode mixes public checks with private execution. Public mode handles the transparency cases. So it’s never “hide everything” or “show everything.” It’s disclosure by context, under real governance.
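"Disclosure by context" can be sketched as a small policy function. The roles, the `lawful_request` flag, and the exact rules below are my assumptions about how such a policy might look, not something taken from the SIGN docs.

```python
from enum import Enum


class Mode(Enum):
    PUBLIC = "public"
    PRIVATE = "private"
    HYBRID = "hybrid"


def visible_parts(mode, role, lawful_request=False):
    """Return which parts of a record a given role may see.

    Assumed roles: "participant", "auditor", "public".
    Assumed parts: "proof" (that a check passed) and "payload" (the raw data).
    """
    if mode is Mode.PUBLIC:
        # Transparency cases: everything is open to everyone.
        return {"proof", "payload"}
    if role == "participant" or (role == "auditor" and lawful_request):
        # Parties to the record, or an auditor acting on a lawful request.
        return {"proof", "payload"}
    if mode is Mode.HYBRID or role == "auditor":
        # Hybrid exposes the public check; auditors can always confirm a proof exists.
        return {"proof"}
    # Private mode, general public: nothing.
    return set()
```

Under these assumed rules, `visible_parts(Mode.PRIVATE, "auditor")` yields only the proof, while the same call with `lawful_request=True` yields the payload too. The oversight path exists, but it is gated rather than always on.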

That’s the institutional pivot right there. Sovereign control isn’t defined as constant omniscience. It’s control over the rules, the operators, the access policies, emergency measures, interoperability, and audit rights. Policy and oversight stay with the sovereign while the tech stays verifiable. Governments don’t have to personally eyeball every private payload in day-to-day operations. They just need to stay in charge of the rails—accrediting issuers, enforcing revocation, setting trust boundaries, and inspecting when law or policy actually requires it.
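Staying in charge of the rails without reading payloads can be pictured as a trust registry: the sovereign decides which issuers count and which credentials are dead, and never touches the claims themselves. The `TrustRegistry` class below is a hypothetical sketch under that assumption, not a SIGN component.

```python
from dataclasses import dataclass, field


@dataclass
class TrustRegistry:
    # issuer identifier -> set of credential types that issuer may issue
    accredited: dict = field(default_factory=dict)
    # identifiers of credentials that have been revoked
    revoked: set = field(default_factory=set)

    def accredit(self, issuer, credential_type):
        """Authorize an issuer for one credential type."""
        self.accredited.setdefault(issuer, set()).add(credential_type)

    def revoke(self, credential_id):
        """Mark a single credential as no longer valid."""
        self.revoked.add(credential_id)

    def accepts(self, issuer, credential_type, credential_id):
        """True only if the issuer is accredited for this type and the credential is live."""
        allowed = credential_type in self.accredited.get(issuer, set())
        return allowed and credential_id not in self.revoked
```

Note that `accepts` takes only identifiers. Control here means deciding whose signatures matter, which is a different power from seeing what was signed.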

This is nothing like the usual blockchain daydreams. One fantasy says “privacy fixes everything” and ignores accountability. The other screams “public transparency fixes everything” and forgets how states actually handle citizen data. SIGN sits right in the messy middle: credentials can be shown selectively, attestations can be private or hybrid, sensitive stuff runs on confidential rails, yet supervisors still get reporting visibility, rule enforcement, and lawful inspection paths.

Take the money stack as an example. The docs talk about a dual-path setup—privacy-first, permissioned CBDC flows on one side and transparent regulated stablecoin paths on the other—both living under the same infrastructure with serious policy controls and supervisory oversight. You don’t have to expose an entire identity file just to prove eligibility for a program. You don’t have to make every domestic payment public just to keep it auditable. Verification becomes something narrower and more disciplined than raw data dumps.

Sure, I still have one hesitation. The boundary of “lawful auditability” always looks clean on paper. In the real world it depends on governance quality, operator incentives, trust registries, access policies, emergency procedures, and plain old political restraint. The architecture can keep the technical possibility of privacy plus control alive. It can’t magically create institutional virtue—that part still has to be earned in actual deployments.

Even with that caveat, I keep coming back to this stack because it gets the problem right. SIGN doesn’t confuse privacy with total invisibility or control with total exposure. It treats privacy as selective provability and control as governed authority over the system, not constant peeking at every detail. That feels like a much more grown-up answer to how real institutions actually work than most crypto projects offer.

And maybe that’s why it keeps pulling my attention. Sovereign systems don’t just need trustless execution. They need a way to verify what matters, reveal only what’s necessary, and still keep the state clearly in charge when the stakes are real. SIGN is aiming squarely at that uncomfortable, important middle ground.

@SignOfficial #SignDigitalSovereignInfra $SIGN