I used to believe public goods in crypto would naturally sustain themselves if they were useful enough. If something created value, the ecosystem would support it. Builders would contribute, users would adopt, and over time, the system would stabilize.

But that’s not what I saw.

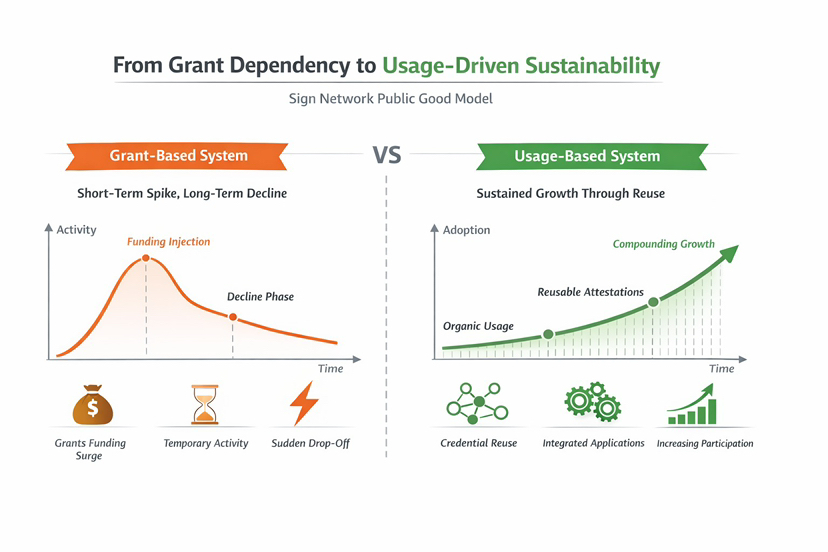

What I saw instead were cycles. Funding would arrive, activity would spike, contributors would gather, and then, slowly, things would fade. Not because the ideas were wrong, but because the incentives weren’t durable. Participation followed funding, not function.

At first, this felt like a coordination problem. But over time, it started to feel deeper than that.

When I looked closer, something felt off.

Public goods in crypto are often framed as neutral infrastructure: open, permissionless, beneficial to all. But neutrality comes with a tradeoff. If no one owns the system, who is responsible for sustaining it?

Ideas sounded important, but they didn’t translate into practice.

Grants would fund development, but not long-term maintenance. Contributions would happen, but not persist. Systems were built, but rarely operated as living infrastructure. They existed, but they didn’t evolve.

And without sustained incentives, even useful systems began to drift.

That’s when my evaluation started to change.

I stopped asking whether something was valuable, and started asking whether it could sustain participation without external support. Whether contributors had a reason to stay involved after the initial push. Whether usage itself reinforced the system.

A surface-level metric like “number of integrations” began to feel less meaningful. What mattered more was whether those integrations persisted, whether they reduced friction over time, and whether they created repeatable behavior.

Because if a system needs continuous external input to stay alive, it isn’t infrastructure; it’s a dependency. That shift in thinking is what led me to look more closely at @SignOfficial.

Not because it presented itself as a solution, but because it approached the problem from a different angle.

It didn’t just frame attestations as a public good. It treated the ecosystem around them as something that needed to sustain itself without compromising neutrality.

That raised a more grounded question for me:

Can a public good remain neutral while still having incentives strong enough to keep it alive?

That question sits at the center of the problem.

Most systems lean toward either incentives or neutrality, but rarely both. Strong incentives often introduce control, bias, or extractive behavior. Pure neutrality, on the other hand, often leads to fragility.

What stood out in $SIGN Protocol wasn’t a claim to solve this, but an attempt to structure around it.

Attestations act as reusable, verifiable records. They can be issued, shared, and validated across systems. But more importantly, they introduce a layer where usage can begin to reinforce itself.

Verification doesn’t have to restart each time. Credentials can carry forward. Systems can rely on prior state.

And that subtle shift, from one-time verification to reusable evidence, starts to change how participation behaves.
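To make the difference between one-time verification and reusable evidence concrete, here is a minimal Python sketch. It is not Sign Protocol's API: `AttestationRegistry`, `verify`, and `_expensive_check` are hypothetical names, and the in-memory dictionary only stands in for whatever shared layer would actually hold attestations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    subject: str  # who the claim is about
    claim: str    # what was verified, e.g. "kyc_passed"
    issuer: str   # who performed the original verification


class AttestationRegistry:
    """Hypothetical in-memory store standing in for a shared attestation layer."""

    def __init__(self):
        self._records = {}
        self.full_checks = 0  # how many times the costly check actually ran

    def _expensive_check(self, subject, claim):
        # Placeholder for a costly real-world verification step (assumption).
        self.full_checks += 1
        return True

    def verify(self, subject, claim, issuer):
        key = (subject, claim)
        if key in self._records:
            # Prior evidence exists: reference it instead of re-verifying.
            return self._records[key]
        if not self._expensive_check(subject, claim):
            return None
        attestation = Attestation(subject, claim, issuer)
        self._records[key] = attestation
        return attestation


registry = AttestationRegistry()
first = registry.verify("alice", "kyc_passed", issuer="app_a")
second = registry.verify("alice", "kyc_passed", issuer="app_b")

print(registry.full_checks)  # 1
print(second.issuer)         # app_a
```

The second application never runs the costly check. It relies on the record the first one produced, which is the "credentials can carry forward" behavior in miniature.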

The design becomes clearer when I think about it in real-world terms.

In traditional systems, institutions don’t re-verify everything constantly. They rely on established records, trusted issuers, and standardized formats. Once something is verified, it becomes part of a broader system of trust.

#SignDigitalSovereignInfra attempts to replicate that continuity digitally.

Issuers create attestations based on defined schemas. These schemas ensure that data is structured and interpretable across systems. Verifiers don’t just check the data; they check who issued it and how it was defined.

Credibility isn’t assumed. It’s inherited from the issuer and anchored through structured trust.

And over time, this creates a system where verification becomes less about repetition and more about reference.
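A rough sketch of what "check who issued it and how it was defined" could look like in code follows. Everything here is an assumption for illustration: the `SCHEMAS` and `ISSUER_KEYS` tables, the `degree.v1` schema, and the `issue` and `verify` helpers are hypothetical, and a shared-secret HMAC stands in for the public-key signatures a real attestation system would more plausibly use.

```python
import hashlib
import hmac
import json

# Hypothetical schema registry: schema id -> required fields and their types.
SCHEMAS = {"degree.v1": {"holder": str, "degree": str, "year": int}}

# Hypothetical trusted issuers and their keys. HMAC with a shared secret is a
# stand-in for real public-key signatures (assumption for this sketch).
ISSUER_KEYS = {"university_x": b"demo-secret"}


def _digest(key, schema_id, data):
    # Canonical JSON so issuer and verifier hash identical bytes.
    payload = json.dumps({"schema": schema_id, "data": data}, sort_keys=True)
    return hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()


def issue(issuer, schema_id, data):
    sig = _digest(ISSUER_KEYS[issuer], schema_id, data)
    return {"issuer": issuer, "schema": schema_id, "data": data, "sig": sig}


def verify(att):
    schema = SCHEMAS.get(att["schema"])
    key = ISSUER_KEYS.get(att["issuer"])
    if schema is None or key is None:
        return False  # unknown schema or untrusted issuer
    data = att["data"]
    if set(data) != set(schema):
        return False  # fields do not match the schema definition
    if not all(isinstance(data[name], kind) for name, kind in schema.items()):
        return False  # a field has the wrong type
    expected = _digest(key, att["schema"], data)
    return hmac.compare_digest(expected, att["sig"])


att = issue("university_x", "degree.v1",
            {"holder": "alice", "degree": "BSc", "year": 2020})
tampered = {**att, "data": {**att["data"], "year": 2010}}
unknown_issuer = {**att, "issuer": "unknown_org"}

print(verify(att))             # True
print(verify(tampered))        # False
print(verify(unknown_issuer))  # False
```

The verifier never re-examines the underlying fact. It checks structure against the schema and provenance against the issuer, which is what lets verification become reference rather than repetition.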

What this signals isn’t just efficiency; it’s a shift in how trust is coordinated.

Because trust, in practice, isn’t built through isolated interactions. It’s built through continuity.

And continuity changes incentives.

If users know their verified actions persist, they behave differently. If systems can rely on prior verification, they integrate differently. If issuers are accountable for credibility, they operate differently.

The system begins to align around long-term behavior, not short-term interaction.

This matters beyond crypto.

In many parts of the world, public systems struggle with the same problem: verification is fragmented, trust is localized, and coordination is expensive. People repeatedly prove the same things across disconnected systems.

At the same time, institutions struggle to maintain neutrality while staying operational. Funding models introduce bias. Centralization introduces control. And without sustainable incentives, even well-designed systems degrade.

An approach that allows trust to be reused while keeping the system open starts to address both sides of that tension.

It doesn’t remove the problem. But it changes the structure around it.

Still, the market doesn’t always reward that kind of design.

Attention tends to flow toward metrics that are easy to measure: volume, activity, short-term growth. These can signal momentum, but not necessarily durability.

A system can show high usage while still relying on constant re-verification. It can grow quickly without retaining meaningful state. It can attract contributors without giving them a reason to stay.

The real question is whether participation compounds.

Does the system become easier to use over time? Does it reduce friction? Does it allow trust to accumulate?

If not, then it’s not solving the underlying problem; it’s just moving around it.

But even with the right structure, there are real risks.

For something like Sign Protocol to work, adoption has to go beyond surface integration. Issuers need to maintain credibility over time. Schemas need to be standardized without becoming rigid. Verifiers need to trust external attestations enough to rely on them.

And users need to experience a clear benefit.

If carrying attestations doesn’t meaningfully reduce friction, they won’t engage. If systems don’t treat attestations as core infrastructure, they remain optional, and optional systems rarely sustain themselves.

There’s also a deeper challenge.

Neutral systems depend on broad participation. But broad participation is hard to coordinate without strong incentives. And strong incentives, if not carefully designed, can compromise neutrality.

That balance is difficult to maintain.

I think about this more simply sometimes.

People don’t engage with systems because they’re ideologically aligned. They engage because it makes their lives easier. Because it reduces effort. Because it works.

Technology can enable that, but it can’t guarantee it.

There’s always a gap between what a system allows and what people actually do.

For me, conviction comes down to observing behavior over time.

Are attestations being reused across different applications? Are systems relying on them for real decisions, not just display? Are issuers maintaining credibility consistently? Are users interacting in ways that build on prior actions?

Those are the signals that matter.

Not announcements. Not narratives. Not short-term activity.

Sustained, repeated use.
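The questions above could be turned into rough measurements. The sketch below is purely illustrative: the event log, its field layout, and the two metric names are hypothetical, not data or definitions from any real deployment.

```python
from collections import defaultdict

# Hypothetical usage log: (attestation_id, application, used_for_decision)
events = [
    ("att-1", "lending_app", True),
    ("att-1", "dao_voting", True),
    ("att-1", "profile_page", False),
    ("att-2", "profile_page", False),
    ("att-3", "lending_app", True),
]


def reuse_signals(events):
    """Summarize how often attestations are reused and relied on."""
    if not events:
        return {"cross_app_reuse_rate": 0.0, "decision_share": 0.0}
    apps_by_attestation = defaultdict(set)
    decision_uses = 0
    for att_id, app, used_for_decision in events:
        apps_by_attestation[att_id].add(app)
        if used_for_decision:
            decision_uses += 1
    reused = sum(1 for apps in apps_by_attestation.values() if len(apps) > 1)
    return {
        # Share of attestations that appear in more than one application.
        "cross_app_reuse_rate": reused / len(apps_by_attestation),
        # Share of uses that gated a real decision rather than just display.
        "decision_share": decision_uses / len(events),
    }


signals = reuse_signals(events)
print(signals)
```

In this toy log, one of three attestations is reused across applications, and three of five uses feed a real decision. Tracked over months, numbers like these would say more about durability than raw volume.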

I don’t think the problem Sign Protocol is addressing is just about identity or attestations.

It’s about something more difficult.

How to build a system that remains open and neutral but still has enough incentive alignment to survive.

Because without incentives, public goods fade. And without neutrality, they stop being public.

What I’ve started to realize is this:

The hardest systems to build aren’t the ones that scale the fastest.

They’re the ones that can stay alive without losing what made them worth building in the first place.