I’ve been paying closer attention to @SignOfficial lately, not because of hype, but because of what’s actually happening on the ground. For a while, I saw it the same way most people do: as just another attestation layer, another tool for verifying data on-chain. Nothing particularly new in a space already full of verification systems. But the more I watched, especially how they’ve been running hackathons and encouraging real development, the more my perspective started to shift.

What stood out first was the focus on building, not just talking. Events like Bhutan’s national digital identity hackathon weren’t just for show; they produced over a dozen working applications. Some were clearly aimed at government use cases, while others leaned toward private sector potential. That kind of output matters. It shows that people aren’t just experimenting for the sake of demos; they’re actually trying to solve problems that could exist beyond the event itself.

The structure behind these hackathons also feels more intentional than usual. It’s not the typical “here are the tools, go figure it out” approach. There’s documentation, guidance, access to the protocol, and even some level of mentorship. That changes the experience. If you pay attention, you can genuinely learn something useful instead of just rushing to build a flashy prototype that gets forgotten the next day.

That said, I’m not buying into the usual hackathon narrative. Most of these events are chaotic. Ideas are rushed, teams are scrambling, and things often fall apart at the last minute. A few teams manage to pull something together, but most projects don’t survive beyond the event. The real value has always been in the process: learning under pressure, figuring things out quickly, and connecting with people who are actually serious about building.

Still, this feels slightly different. There’s a visible pattern of people shipping and testing real tech. You can start to tell who’s genuinely engaged and who’s just there for the experience. That alone makes it worth watching.

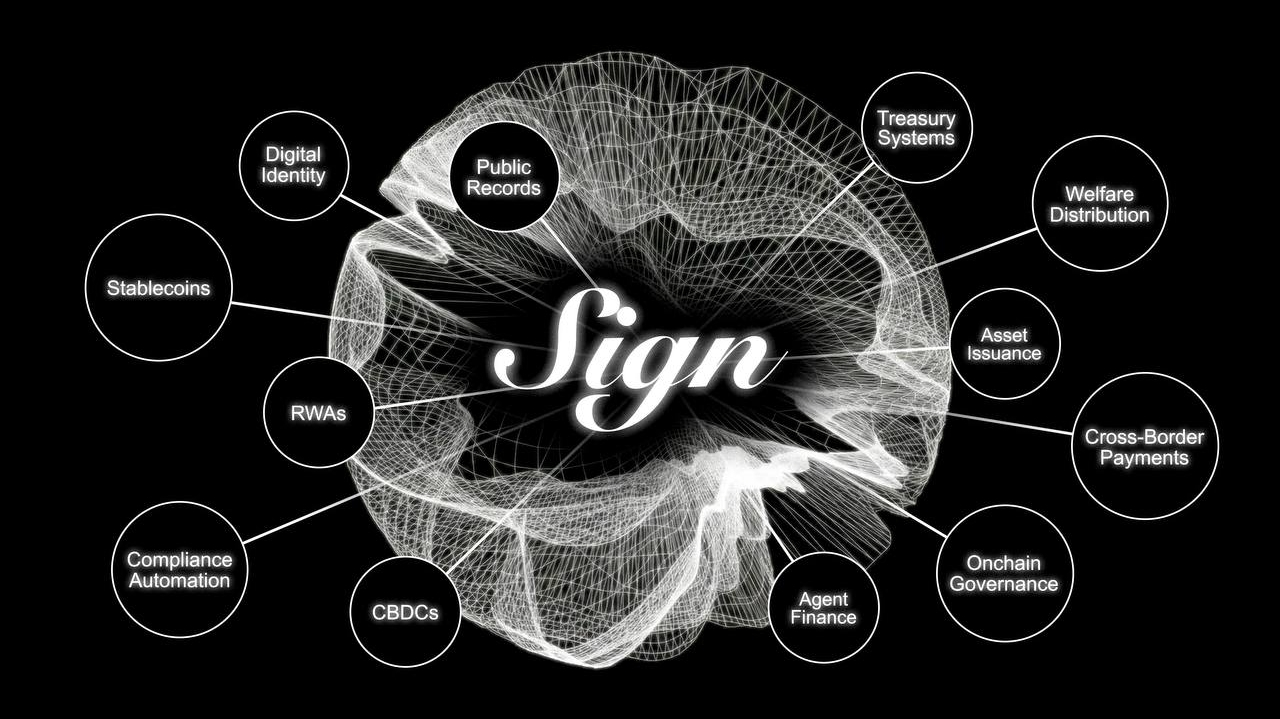

As I dug deeper, I realized something more fundamental: $SIGN isn’t just working with data; it’s working with decisions. That’s a subtle but important shift. In crypto, we spend a lot of time talking about speed, fees, and liquidity, but rarely question the validity of the data itself. This protocol seems to focus on that missing layer: how trust is actually formed and used.

On the technical side, there’s clear progress. Deployments across multiple chains (EVM, non-EVM, even Bitcoin Layer 2) show that this isn’t just theoretical. There’s also confidence around handling high volumes of attestations, which sounds promising. But real-world conditions are different. It’s one thing to perform well in controlled environments, and another to operate under the pressure of government systems, financial compliance, or cross-border identity frameworks. That’s where things get complicated, not just technically but politically.

The presence of tools like an explorer adds a layer of transparency, but it also raises an important question: who decides what is valid? That uncertainty becomes more relevant as adoption grows. Integrations across gaming, social graphs, and DeFi are a start, but true adoption will only happen when people use systems powered by this infrastructure without even realizing it. That stage hasn’t been reached yet.

There’s also the push toward standardization. On paper, it makes sense: shared schemas create consistency. But standards also introduce control. Whoever defines those schemas influences behavior, incentives, and ultimately how the system evolves. That’s where decentralization can quietly become more complex than it appears on the surface.
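
To make the control point concrete, here is a minimal sketch of what schema-gated attestations could look like. Everything in it is hypothetical: the schema name, the field names, and the `conforms` helper are my own illustration, not the protocol’s actual schema format or API.

```python
# Hypothetical shared schema: the field names and types are illustrative only.
KYC_SCHEMA = {
    "name": "kyc_check",
    "fields": {"subject": str, "passed": bool, "checked_at": int},
}


def conforms(schema: dict, data: dict) -> bool:
    """Return True only if data has exactly the schema's fields with exact types."""
    fields = schema["fields"]
    if set(data) != set(fields):
        return False
    # Exact type comparison, so a bool is not silently accepted where an int is required.
    return all(type(data[key]) is expected for key, expected in fields.items())


good = {"subject": "0xabc", "passed": True, "checked_at": 1700000000}
extra = {**good, "note": "reviewed manually"}
```

In this sketch, `good` is accepted and `extra` is rejected, even though the extra field might be useful. Whoever wrote the schema decided that, which is the quiet form of control I mean.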

From a cost perspective, the design is efficient. Storing proofs and schemas without full on-chain data reduces expenses and improves scalability. Using Layer 2 solutions and off-chain attestations makes participation more accessible. But these benefits come with trade-offs. Off-chain components reduce transparency and increase reliance on trust, which introduces a social layer of risk even if the technology itself is sound.
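
The general pattern, as I understand it, is to anchor only a hash on-chain and keep the full record elsewhere. The sketch below assumes that pattern; the in-memory `onchain_registry` dict stands in for a chain, and all names are hypothetical rather than taken from the protocol.

```python
import hashlib
import json

# Stand-in for on-chain storage: attestation id -> SHA-256 digest of the payload.
onchain_registry: dict[str, str] = {}


def digest(payload: dict) -> str:
    """Hash a canonical JSON encoding so key order does not change the digest."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def anchor(attestation_id: str, payload: dict) -> None:
    """Record only the digest; the payload itself stays off-chain."""
    onchain_registry[attestation_id] = digest(payload)


def verify(attestation_id: str, payload: dict) -> bool:
    """Check an off-chain payload against the anchored digest."""
    expected = onchain_registry.get(attestation_id)
    return expected is not None and expected == digest(payload)


record = {"subject": "0xabc", "claim": "resident", "issuer": "agency-1"}
anchor("att-1", record)
```

Here `verify("att-1", record)` succeeds and any altered copy fails, so tampering is detectable. What the hash cannot do is guarantee that whoever holds the off-chain record will keep it available or show it to you, and that is the trust trade-off.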

In the end, what I see is not a finished system, but an evolving experiment. The idea behind SIGN is strong: building a trust logic layer that can influence how decisions are executed across systems. That’s powerful. But it also raises deeper questions. If the verification layer isn’t fully trustworthy, can the outcomes ever be? And if control shifts from data to proofs, are we solving the problem or just moving it?

There aren’t clear answers yet. But that uncertainty is what makes it interesting. It could become invisible infrastructure that quietly powers critical systems, or it could introduce new forms of control that we don’t fully understand yet.

For now, I’m just watching, learning, and paying attention to what people are actually building, because that always tells the real story.