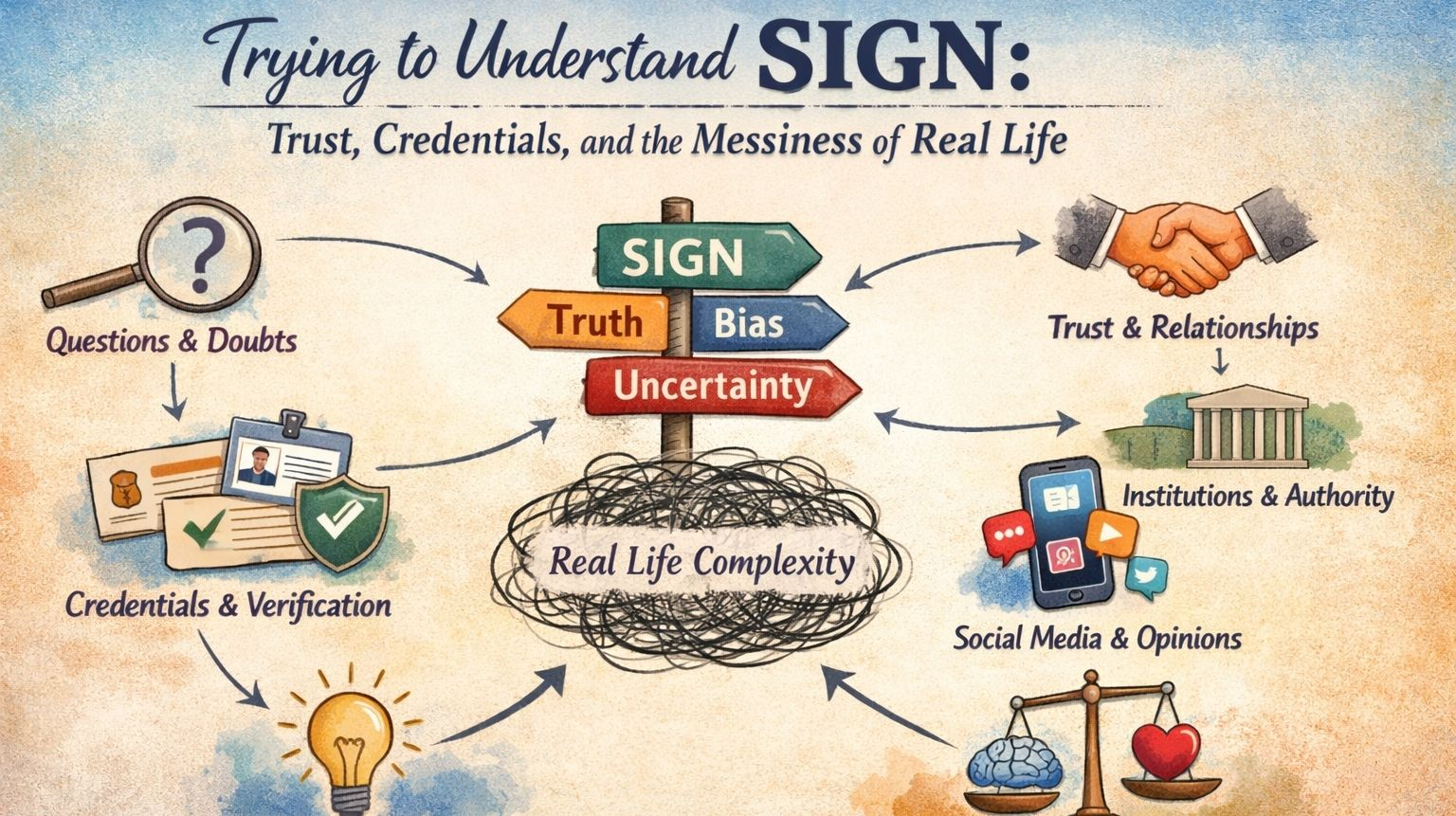

I’ve been thinking about this thing called SIGN, and honestly, I’m still not sure I fully “get” it—but in a way that makes me want to keep circling back to it. You know how sometimes an idea sounds very clean when you first hear it, almost too neat? “A global infrastructure for credential verification and token distribution.” It rolls off the tongue like it already makes sense. But the more I sit with it, the more it starts to feel less like a tidy system and more like something that’s trying to map onto the messy, unpredictable nature of people.

I tried explaining it to myself in simple terms first. Okay, so it’s about credentials—proof that someone did something, learned something, belongs somewhere. That part isn’t new. We’ve always had credentials. Degrees, certificates, references, even something as informal as someone saying, “yeah, I trust this person.” But SIGN seems to be asking a slightly bigger question: what if all of that could live in a shared space? Not locked inside institutions, not scattered across platforms, but something more open, more portable.

And that’s where I start to pause.

Because as soon as you say “shared infrastructure,” it stops being just technical. It becomes social. Almost political, in a quiet way. Someone has to decide what counts as a credential. Or maybe not someone—maybe many people, many groups. But even then, those decisions carry weight. If a credential can unlock tokens—actual value, not just recognition—then suddenly it’s not just about recording reality. It’s about shaping behavior.

I can’t help imagining how people might react to that. If I know that getting a certain credential means I’ll receive some kind of reward, do I still pursue it for the same reasons? Or does the incentive start to blur things a little? Not necessarily in a bad way—it’s just… human nature, I guess. We respond to incentives, often without realizing it. And systems like this don’t just observe that—they amplify it.

There’s something else that keeps bothering me, in a quiet, nagging way. Trust.

SIGN talks about verification, and I get that—cryptography, proofs, all the technical machinery that makes something “verifiable.” But trust doesn’t disappear just because something is verifiable. It shifts. Instead of trusting a single institution, you’re trusting the network of issuers, the rules they follow, the assumptions baked into the system. You’re trusting that a credential actually means what it claims to mean.

And that’s where things get a little fuzzy again.

Because meaning isn’t fixed. A credential isn’t just data—it’s context. A degree from one place doesn’t always carry the same weight somewhere else. A badge in one community might be meaningless in another. So even if SIGN can verify that something is real, it doesn’t necessarily tell you how much it matters. And that gap—that space between verification and meaning—feels important.

I also keep thinking about what happens when things go wrong. Because they will, right? Not in some dramatic, system-breaking way, but in small, everyday ways. Someone issues a credential they shouldn’t have. Or someone finds a way to game the system. Or maybe it’s just a misunderstanding—something that looked valid at the time but later turns out to be questionable.

Can those credentials be undone? And if they can, who gets to decide that? The moment you introduce the idea of revocation, you’re also introducing authority, even if it’s distributed. There has to be some process, some form of judgment. And that’s where systems often start to feel less like neutral infrastructure and more like living, breathing ecosystems—with disagreements, tensions, maybe even conflicts.

I don’t think that’s a flaw. If anything, it makes the whole thing feel more real. But it does make me wonder how prepared a system like SIGN can be for those kinds of situations.

Then there’s the transparency side of it, which I find both reassuring and slightly uncomfortable at the same time. On paper, transparency sounds great. Everything is visible, auditable, open to inspection. You don’t have to take things on faith—you can verify them yourself.

But I keep thinking about how that feels from a human perspective. What does it mean to have your credentials, your activities, your associations all sitting in a system that others can examine? Even if it’s abstracted, even if it’s technically “private enough,” there’s still something about it that feels… exposed. Like you’re being reduced to a collection of proofs.

Maybe that’s inevitable. Maybe that’s the trade-off for having a system that’s this open. But it doesn’t feel like a small trade-off.

And I guess what I keep coming back to, over and over, is how this would actually feel to use. Not in a demo or a controlled environment, but in real life. When you’re tired, distracted, just trying to get something done. Would SIGN feel like a helpful layer in the background, quietly organizing trust? Or would it feel like another system you have to think about, another set of rules to navigate?

There’s a difference between a system being powerful and it being natural. The most successful infrastructures are often the ones you barely notice. They just work. But for something like SIGN, which deals with identity, value, and trust, I’m not sure it can ever be completely invisible. And maybe it shouldn’t be.

The more I think about it, the more it feels like SIGN isn’t just a piece of technology—it’s a kind of experiment. A way of asking: what happens if we try to formalize trust at a global scale? What happens if we turn credentials into something fluid, portable, and tied to value?

And I don’t think there’s a clean answer to that.

Because people are unpredictable. Communities evolve in strange ways. Systems that look balanced at the start can drift over time. Power can concentrate in places you didn’t expect. Meanings can shift. Incentives can create behaviors no one really planned for.

I guess that’s why I can’t quite settle on a clear opinion about SIGN. It’s not that I think it’s flawed or perfect; it’s that it feels unfinished in a very fundamental way. Not unfinished as in incomplete, but unfinished as in… open. Dependent on how people choose to use it, shape it, maybe even bend it.

And maybe that’s the part that keeps me interested.

Because I can’t help wondering what this will look like a few years down the line, when it’s no longer just an idea you can think about in isolation. When it’s tangled up in real communities, real incentives, real disagreements. When people start relying on it, questioning it, maybe even pushing against it.

Does it become something quietly essential, like a layer of trust we stop noticing? Or does it remain something we’re always negotiating, always trying to understand?

I don’t know. And I’m not sure I’m supposed to know yet.

But it does make me curious in that slow, lingering way—the kind that doesn’t demand answers right away, but keeps asking better questions the longer you sit with it.

And maybe that’s where it becomes something more than just infrastructure.

Not a system we simply use, but one we slowly grow into—and question along the way.

A place where trust isn’t fixed, but constantly negotiated in quiet, unseen ways.

Where every credential tells a story, but never the whole story.

Where value flows, but not always in the directions we expect.

And somewhere in that uncertainty, something new begins to take shape.

Not fully understood, not fully controlled—but undeniably alive.

@SignOfficial #SignDigitalSovereignInfra $SIGN