I often catch myself wondering how much of what we trust is built up in layers rather than being self-evident. With Sign, that layering feels intentional, almost architectural: each credential, each validation, each verifier adds a small brick to a larger structure that I carry with me. It’s elegant in its simplicity, yet I can’t shake the question: how much of that elegance relies on things going perfectly?

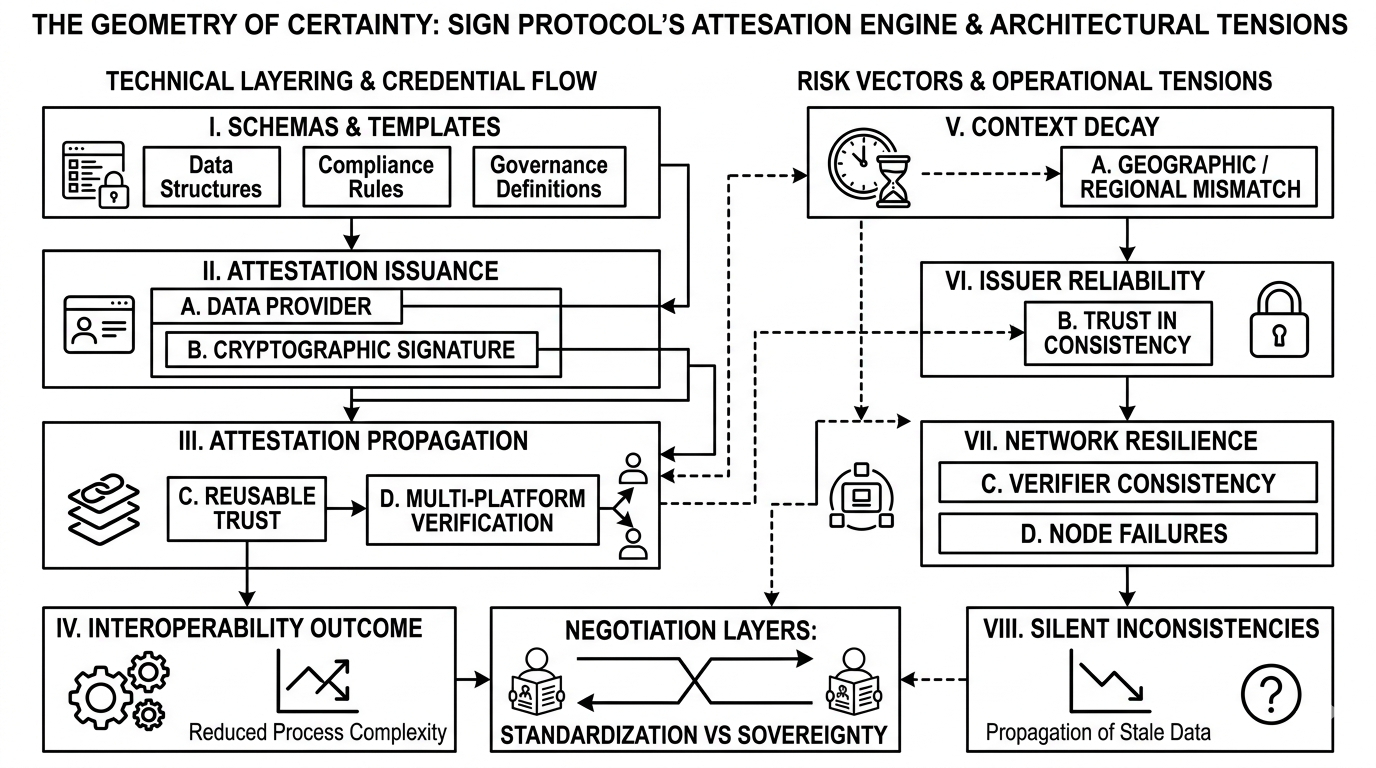

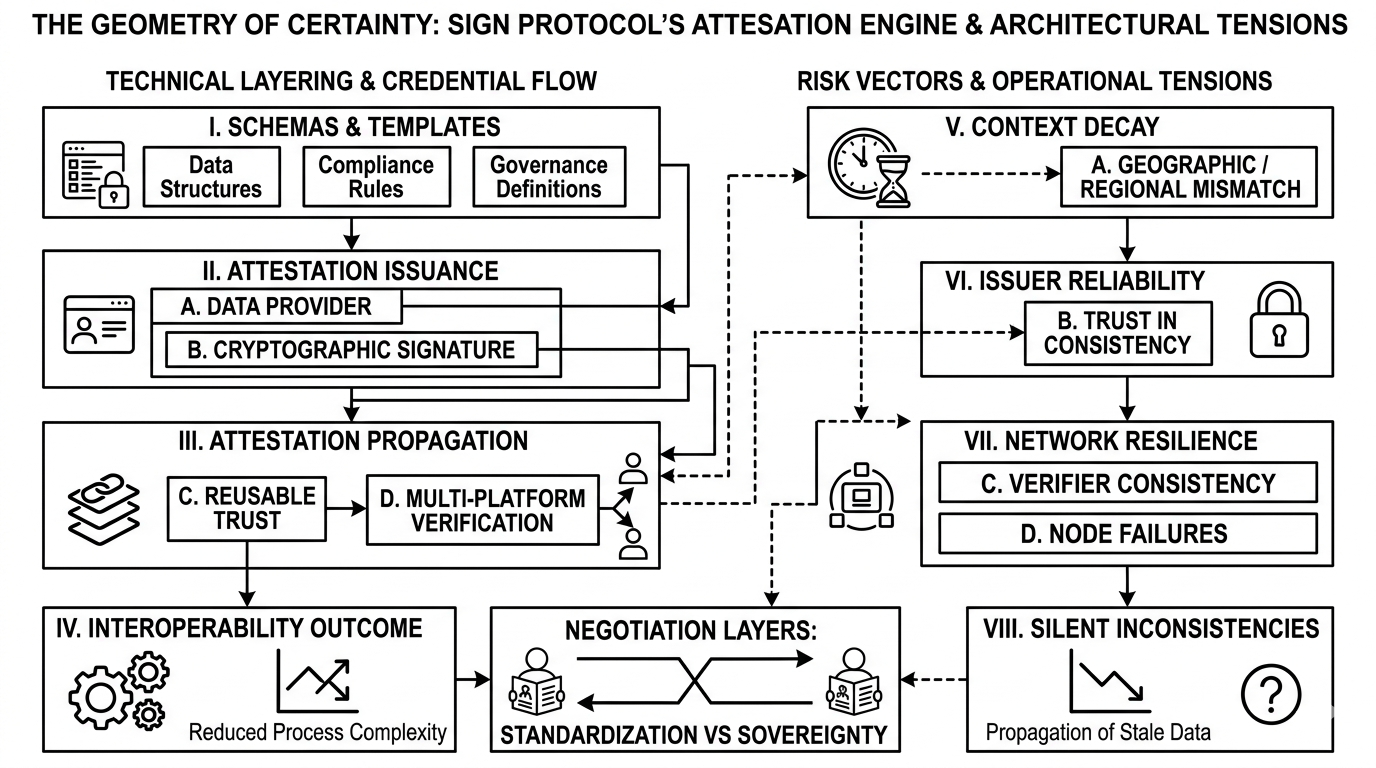

The workflow is clear on paper. Issuers create credentials, validators confirm them, and I can then reuse that proof across platforms without repeating the verification process. It’s solving a real inefficiency: instead of me proving the same thing multiple times, the system lets one moment of trust propagate. That alone is intriguing, because it shifts the cognitive load off me and onto the network. But as I trace that logic, I start noticing subtle tensions. A credential is only as strong as the validator who signed it. And a validator is only as reliable as the rules and economic incentives that govern it. Context can shift, yet the credential does not. What happens when small assumptions break? What risks emerge if adoption is uneven, or if a verifier node fails or behaves inconsistently?
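To make the issue, validate, reuse flow concrete for myself, I sketched it in a few lines of Python. This is only a toy under stated assumptions, not Sign's actual API: the `Credential` fields, the `attest` and `verify` helpers, and the shared `VALIDATOR_KEY` are all hypothetical, and I use an HMAC from the standard library as a stand-in for the asymmetric signatures a real credential network would presumably rely on.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass

# Hypothetical shared secret, for illustration only. A real network would
# most likely use public-key signatures so verifiers never hold a secret.
VALIDATOR_KEY = b"demo-validator-secret"


@dataclass(frozen=True)
class Credential:
    subject: str   # who the claim is about
    claim: str     # what is being asserted
    issuer: str    # who created the credential


def canonical_bytes(cred: Credential) -> bytes:
    """Serialize the credential deterministically so signatures are stable."""
    payload = {"subject": cred.subject, "claim": cred.claim, "issuer": cred.issuer}
    return json.dumps(payload, sort_keys=True).encode("utf-8")


def attest(cred: Credential, key: bytes = VALIDATOR_KEY) -> str:
    """Validator step: produce a signature over the credential contents."""
    return hmac.new(key, canonical_bytes(cred), hashlib.sha256).hexdigest()


def verify(cred: Credential, signature: str, key: bytes = VALIDATOR_KEY) -> bool:
    """Platform step: recompute the signature and compare in constant time."""
    return hmac.compare_digest(attest(cred, key), signature)


# One moment of trust: the validator signs once.
cred = Credential(subject="alice", claim="over_18", issuer="example-issuer")
signature = attest(cred)

# Two different platforms reuse the same proof without re-verifying me.
platform_a_ok = verify(cred, signature)
platform_b_ok = verify(cred, signature)

# A credential whose contents changed no longer matches the signature.
tampered_ok = verify(Credential("alice", "over_21", "example-issuer"), signature)
```

Even in this toy, the tension shows up: every platform's answer rests entirely on one key and one signing moment, so whatever the validator got wrong gets reused everywhere.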

What I find most curious is the redistribution of responsibility. Sign creates a hierarchy in which no single layer holds the full picture, yet the system depends on each layer performing accurately. It’s a distributed trust model, but it’s also brittle in quiet ways. A missing validation or a stale credential doesn’t crash everything, but it introduces silent inconsistencies that ripple outward. I wonder how resilient the design is under real-world stress, especially when adoption is partial or geographically skewed.

At the same time, the portability of credentials is compelling. I can obtain a credential once and reuse it anywhere, which in theory should reduce friction and simplify access. That’s where the project’s value becomes concrete for me: it’s not about flashy mechanics, it’s about reducing cognitive and procedural load. But simplicity hides nuance. How does the system handle conflicts, updates, or revocations? How often does human judgment intersect with automated verification, and what happens when they disagree? These aren’t trivial questions, because in practice, even a small mismatch can cascade.
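I don't know how Sign answers the revocation and staleness questions, so the following is just my own sketch of the kind of check a verifier might run before accepting a reused credential. Everything here is an assumption for illustration: the `HeldCredential` fields, the `max_age` freshness window, the in-memory `revoked_ids` set, and the rule that revocation takes priority over age.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class HeldCredential:
    credential_id: str    # hypothetical identifier
    issued_at: datetime   # when the validator signed it
    max_age: timedelta    # assumed freshness window chosen by the verifier


def status(cred: HeldCredential, revoked_ids: set, now: datetime) -> str:
    """Return 'revoked', 'stale', or 'valid'. Revocation wins over age."""
    if cred.credential_id in revoked_ids:
        return "revoked"
    if now - cred.issued_at > cred.max_age:
        return "stale"
    return "valid"


issued = datetime(2024, 1, 1, tzinfo=timezone.utc)
cred = HeldCredential("cred-001", issued, timedelta(days=90))

# Same credential, three different contexts.
fresh = status(cred, set(), issued + timedelta(days=30))
stale = status(cred, set(), issued + timedelta(days=120))
revoked = status(cred, {"cred-001"}, issued + timedelta(days=30))
```

The credential itself never changes across these three calls; only the context around it does. A verifier that skips this check, or works from an outdated revocation list, would keep answering "valid" without any visible error, which is the silent kind of mismatch I worry about.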

Sign feels like a careful negotiation between trust, verification, and practicality. The network aims to automate confidence, yet it can’t fully eliminate doubt. I see its strengths (efficient reuse, structured accountability, distributed responsibility), but I also see its limits. Each node, each validator, each issuer adds power, but also a potential point of friction. The system won’t collapse at the first failure, but it’s not infallible either.

Reflecting on it, I realize the project isn’t just about credentials or verification. It’s about defining how digital systems agree on what counts as truth — and then living with the consequences when those agreements are imperfect. I keep circling the tension between elegance and vulnerability, between efficiency and unseen risk. That tension is precisely what makes me pause and think, because the system is only as reliable as the weakest link in its chain. And in the messy real world, links break.