I have been turning this over in my head for days now, the kind of half-formed thought that sticks because it shows up everywhere once you notice it. Not in some grand theoretical debate, but in the everyday mess of trying to make something work in this space. Picture a studio lead staring at their dashboard at 2 a.m., watching reward payouts leak because bots are farming quests again, or a regular player who finally cashes out a decent stack only to get hit with a surprise KYC request that feels less like safety and more like someone rifling through your pockets. Or the regulator on the other side, buried in reports, trying to spot laundering patterns in what looks like innocent game activity. The friction isn’t abstract. It’s the cost of compliance eating into margins, the trust that evaporates when data ends up in the wrong hands, the quiet churn when users decide it’s not worth the hassle.

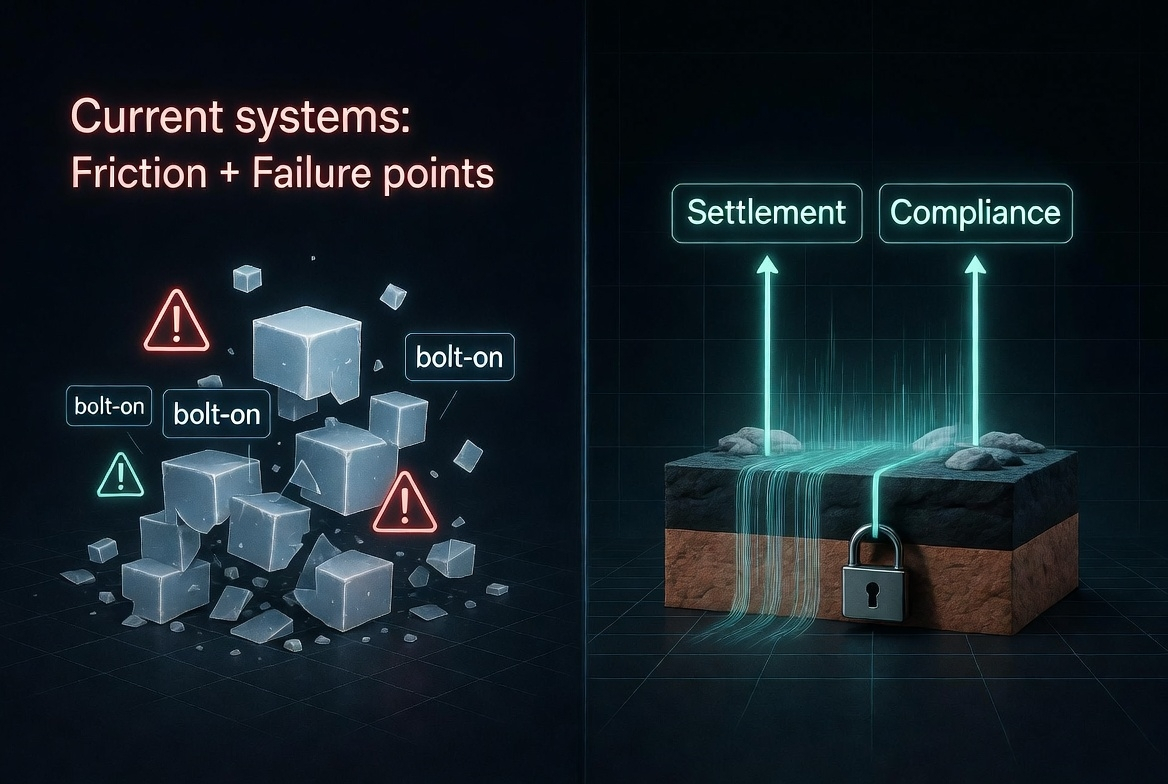

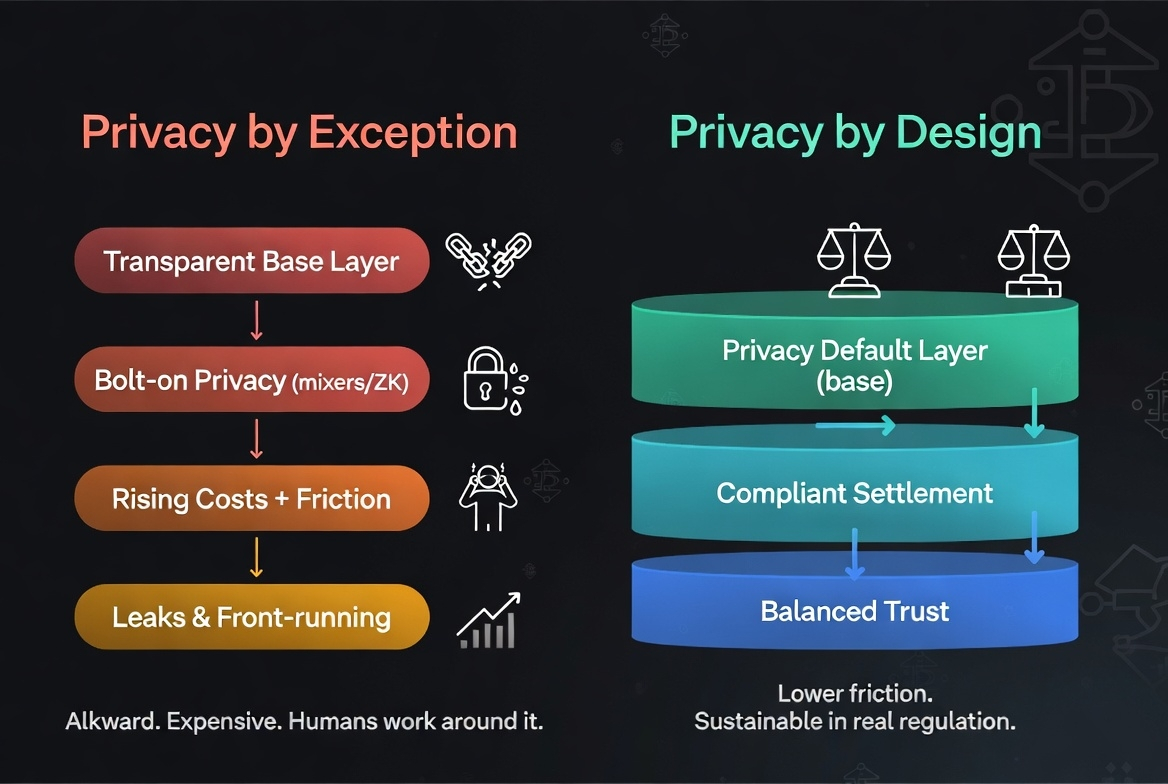

The problem sits right there in the middle of it all. Regulation isn’t going away: governments and watchdogs are looking harder at Web3 because money moves fast and anonymity can hide ugly stuff. You need trails for settlement, proof that rewards went to real people, controls so one bad actor doesn’t drain the whole pool. But privacy keeps getting handled as the exception, not the rule. You build the system first (transparent ledgers for ownership, centralized tracking for efficiency, reward logic that needs player signals to work) and then you layer on the privacy bits later. Opt-in consent forms. Jurisdiction-specific toggles. “We anonymize where possible.” It sounds reasonable on paper, but in practice it always feels patched and fragile. Data still flows through too many hands. Audits balloon because you have to prove you’re not over-collecting. Players sense the inconsistency and pull back; they’ll complete a streak for rewards but won’t stick around if every move feels logged for someone else’s benefit. Builders pay twice: once for the tech, again for the legal bandages and fragmented tools that never quite sync. Human behavior makes it worse. People aren’t robots; they’ll exploit any loophole, whether that’s gaming a reward system or simply walking away when the privacy trade-off stops feeling worth it. I’ve watched too many early setups collapse under exactly this weight: great on incentives until the first regulatory letter lands, then everything shifts to damage control and the economics never recover.

Most fixes I’ve seen just paper over the cracks. Full anonymity sounds clean until regulators treat it as a red flag for money laundering. Heavy KYC everywhere kills casual play and drives costs through the roof for small transactions that should settle in seconds. Centralized data stores promise control but become juicy targets, and the “we only share with trusted partners” line rarely survives first contact with a data breach or a partner pivot. Even the on-chain transparency crowd runs into walls—great for proving ownership, terrible for keeping everyday behavior private when every wallet link becomes a permanent record. Settlement gets messy too. You want fast, cheap cashouts that feel like real money in a player’s hand, not a compliance obstacle course. Compliance teams want verifiable trails without turning the whole ecosystem into a surveillance machine. The costs compound: legal reviews, third-party auditors, ongoing monitoring that eats into the very rewards you’re trying to distribute sustainably. And all of it rests on shaky human ground—users who say they don’t care about privacy until they suddenly do, or builders who swear they’ll do the right thing until growth pressure pushes them to cut corners.

That’s why I keep coming back to the idea that regulated environments—whether we’re talking crypto rewards, Web3 gaming economies, or any settlement layer that touches real value—actually need privacy by design, not bolted on later. Not as a marketing checkbox or a feature you activate for EU users, but as the default architecture from day one. The system decides upfront what signals it truly needs, keeps them contained where they belong, and only surfaces the minimum for compliance or fraud checks. No selling data to third parties. No sprawling lakes of player habits waiting for the next leak. It wouldn’t magically fix every regulatory headache, but it might turn compliance from a constant drag into something closer to a built-in cost of doing business. Fraud detection without needing to expose everything. Reward matching that respects the line between useful insight and overreach. Settlement that feels legitimate to both the player and the regulator because the design never promised more privacy than it could deliver.
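To make that a little more concrete, here is a minimal sketch of what "decide upfront, keep it contained, surface the minimum" could look like in code. Everything in it is my own assumption for illustration: the signal names, the `minimize` and `compliance_view` helpers, and the payout threshold are hypothetical, and none of it describes how any real project implements this.

```python
import hashlib
import hmac
from dataclasses import dataclass

# Hypothetical allowlist: the only signals the reward and fraud logic may keep.
ALLOWED_SIGNALS = {"quests_completed", "session_minutes", "payout_amount"}


def pseudonymize(wallet: str, secret: bytes) -> str:
    """Replace a wallet address with a keyed hash (HMAC-SHA256)."""
    return hmac.new(secret, wallet.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(event: dict, secret: bytes) -> dict:
    """Drop everything not on the allowlist and swap the wallet for a pseudonym."""
    kept = {k: v for k, v in event.items() if k in ALLOWED_SIGNALS}
    kept["player"] = pseudonymize(event["wallet"], secret)
    return kept


@dataclass(frozen=True)
class ComplianceView:
    """The minimum surfaced to a compliance check: a pseudonym and a yes/no flag."""
    player: str
    over_threshold: bool


def compliance_view(record: dict, threshold: float) -> ComplianceView:
    """Expose only whether the payout crosses the (assumed) review threshold."""
    return ComplianceView(record["player"], record.get("payout_amount", 0) >= threshold)


# Example with made-up data: the IP and device ID never reach storage.
secret = b"demo-only-secret"  # in practice this would live in a key store
raw = {
    "wallet": "0xabc",
    "ip": "203.0.113.7",
    "device_id": "d-42",
    "quests_completed": 5,
    "payout_amount": 120.0,
}
record = minimize(raw, secret)
view = compliance_view(record, threshold=100.0)
```

The point of the allowlist is that collecting a new signal becomes a deliberate change to the design rather than a default, and the compliance side sees a flag instead of the underlying record. A keyed hash is only pseudonymization, not anonymity: whoever holds the secret can re-link records, which is arguably what a regulated setup needs anyway.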

I’m not pretending this is easy or inevitable. I’ve seen enough projects swear by “privacy-first” only for the reality to look a lot messier once scale hits and the token economics start to wobble. Design choices that look bulletproof in a small test environment can crumble when real money, real users, and real regulatory questions collide. There’s always the risk that regulators move the goalposts anyway—demanding more transparency than any privacy-by-design setup can comfortably give—or that teams, under pressure, quietly expand data use because “it improves retention.” Human behavior doesn’t change overnight; players will still chase rewards, and builders will still chase growth. Costs could still creep if the infrastructure requires more upfront engineering than the usual quick-and-dirty approach.

Still, when I look at infrastructure that seems to lean this direction without the usual fanfare, it feels worth watching. The way some setups keep gameplay signals inside the system for better reward logic and fraud controls, rather than shipping them off or monetizing them separately, at least tries to treat privacy as a constraint baked into the economics instead of an after-the-fact exception. It aligns with how people actually behave: they’ll engage more consistently when the system doesn’t feel like it’s constantly watching and selling.

PIXEL ends up doing real work in that layer, powering rewards across titles without turning into pure speculation fodder, because the underlying design forces sustainability over endless emissions. I’m cautious about over-reading any single example, but Pixels and the way they’ve approached their Stacked layer strike me as one of the less hyped attempts at treating this as infrastructure rather than just another game feature. It’s not perfect, and I wouldn’t bet the farm on it solving every regulatory friction, but it’s the kind of pragmatic containment I’ve rarely seen executed without the usual marketing gloss.

In the end, the people who would actually lean on something built this way are probably the studios and builders who have already lived through the boom-bust cycles and want something that can survive regulatory scrutiny without killing user trust or their own margins. Institutions sniffing around Web3 gaming as a real asset class might give it a longer look too—lower data-liability risk, clearer paths to compliant settlement, fewer surprise audits. It might work because it’s already running in production, generating measurable revenue and retention without the usual extraction problems, and because it lines up with how humans actually play and earn when the incentives don’t feel rigged or invasive. What would make it fail? A sudden regulatory shift that demands full wallet-level transparency no matter what, slow partner adoption that leaves the infrastructure underutilized, or the token economics drifting back toward hype over utility. I’m not certain any of this scales everywhere, or that privacy by design will ever feel complete in a world that still wants both openness and protection. But after watching enough systems crack under the weight of awkward exceptions, this approach at least feels like one you could trust to hold up longer than the alternatives. It’s quiet, it’s conditional, and right now that’s probably the most realistic thing you can say about it.