I have been turning this over in my head for days now, the way you do when something small keeps snagging on every transaction or decision you make. Last week it was a routine cross-border transfer I was helping a builder friend with: nothing flashy, just moving funds to pay a contractor who’d delivered some code for a compliance dashboard. The bank side wanted full wallet histories, the exchange required a KYC refresh, and the on-chain trail had to be squeaky clean or the transfer sat there frozen while someone in compliance ran manual checks. The friction wasn’t theoretical. It was two extra days, higher fees, and that familiar low-level resentment that makes you wonder why the system works against the very people trying to stay inside it. That’s the real starting point for me: not some grand philosophy about surveillance or freedom, but the daily, practical grind where regulated finance meets actual human behavior.

The problem exists because regulation was built for a pre-digital world where transparency meant paper trails you could physically audit and privacy was whatever stayed off the ledger. Now everything is on-chain, visible by default, and regulators quite reasonably worry about money laundering, sanctions evasion, and terrorist financing: real risks we’ve all watched materialize in spectacular failures. Institutions can’t afford the fines or the reputational hit, so they demand full visibility. Builders trying to ship anything useful hit the same wall: integrate with regulated rails and you expose user data in ways that feel disproportionate; stay siloed and you never scale beyond speculation. Users, even the careful ones, end up caught in the middle, handing over more personal information than they ever would in a traditional bank just to move value they already earned legitimately. Human behavior being what it is, people adapt. Some route through jurisdictions with looser rules, some use intermediaries that add layers of cost and delay, others simply opt out and keep activity off-platform. None of that makes the system safer; it just pushes the gray areas underground where oversight is even harder.

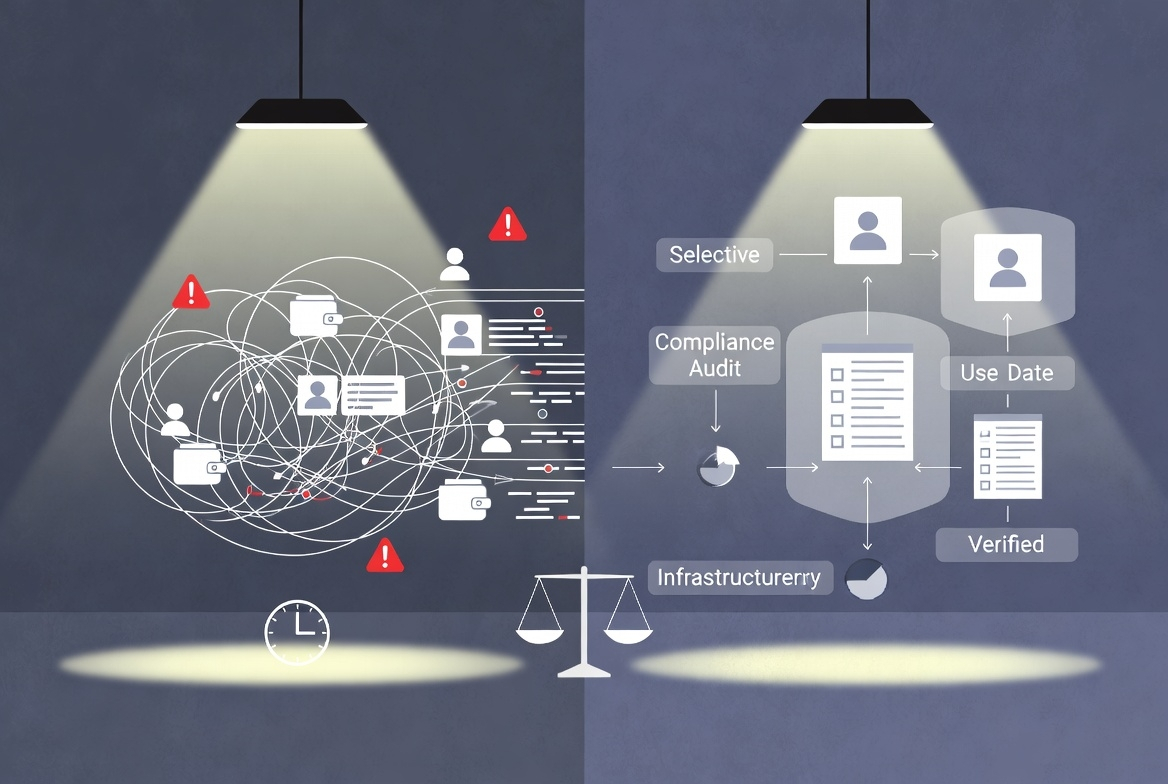

What strikes me most is how incomplete most privacy approaches feel in practice. They arrive as exceptions: bolt-on tools, optional toggles, or separate chains that regulators treat with immediate suspicion. You use them and suddenly your address gets flagged on compliance lists because the very act of seeking privacy looks like you have something to hide. Settlement becomes messier, not cleaner, because counterparties on the regulated side won’t touch it without extra manual verification. Compliance teams drown in data they can’t meaningfully analyze, while the real risks slip through the cracks anyway. Costs pile up: legal reviews, third-party audits, insurance premiums against the chance that an exception blows up into a regulatory headache. I’ve seen enough systems fail (protocols that started with good intentions but ended up delisted or blacklisted because they couldn’t square the circle between law and usability) to know that exceptions rarely age well. They create two classes of activity: the fully exposed, fully compliant path that feels invasive and slow, and the hidden path that carries its own set of existential risks. Neither satisfies institutions that need defensible audit trails, nor users who just want to transact without feeling constantly watched.

That’s why the notion of privacy by design keeps resurfacing for me, not as marketing, but as the only path that might actually hold up under real pressure. Design it in from the infrastructure level so that compliance proofs are native: verifiable without broadcasting every detail to every node or every regulator. Settlement could happen faster because the checks are precise and automated rather than blanket and manual. Costs could come down because you’re not paying for endless data storage and human review of transactions that are overwhelmingly routine. Law gets what it needs (assurances that rules were followed) without turning every user’s financial life into public record. Human behavior aligns better too: when the regulated path doesn’t feel like a panopticon, more people stay on it. Builders can focus on actual product instead of compliance gymnastics. Institutions can allocate capital without boards second-guessing every exposure risk. Even regulators might sleep easier knowing the system is auditable by design rather than by constant, expensive policing.
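To make "verifiable without broadcasting every detail" a little less abstract, here is a minimal sketch of one very simple form of selective disclosure: salted hash commitments. This is my own illustration, not a description of how any particular project does it, and it is far weaker than the zero-knowledge techniques a production system would likely need. Every name in it (`build_record`, `disclose`, `verify`, the field names) is hypothetical. The idea is that only per-field commitments are published, and an auditor is shown just the field they are entitled to check, plus the salt needed to confirm it matches.

```python
import hashlib
import hmac
import secrets


def commit(value: str, salt: bytes) -> str:
    """Salted SHA-256 commitment to one field value."""
    return hashlib.sha256(salt + value.encode("utf-8")).hexdigest()


def build_record(fields):
    """Return (public commitments, private salts) for a transfer record.

    Only the commitments would be shared widely; the salts and raw
    values stay with the party that owns the data.
    """
    salts = {name: secrets.token_bytes(16) for name in fields}
    commitments = {name: commit(value, salts[name]) for name, value in fields.items()}
    return commitments, salts


def disclose(fields, salts, names):
    """Reveal only the named fields, each with the salt needed to check it."""
    return {name: (fields[name], salts[name]) for name in names}


def verify(commitments, disclosure) -> bool:
    """Check every disclosed (value, salt) pair against its public commitment."""
    return all(
        name in commitments
        and hmac.compare_digest(commit(value, salt), commitments[name])
        for name, (value, salt) in disclosure.items()
    )


if __name__ == "__main__":
    # Hypothetical transfer record; field names are illustrative only.
    transfer = {
        "kyc_status": "verified",
        "amount": "1200.00",
        "recipient": "contractor-42",
    }
    public_commitments, private_salts = build_record(transfer)

    # The auditor is shown the KYC status only, not the amount or recipient.
    shown = disclose(transfer, private_salts, ["kyc_status"])
    print("auditor sees fields:", sorted(shown))
    print("disclosure checks out:", verify(public_commitments, shown))
```

The random salt is what keeps low-entropy values such as a KYC flag from being guessed from the commitment alone. What this sketch does not give you is a proof about a hidden value (for example, "the amount is under a threshold" without revealing the amount); that is where heavier cryptography would come in.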

I’m not pretending this is straightforward or guaranteed. I’ve watched too many infrastructure bets collapse under their own weight, promising seamless integration only to discover that regulators move at their own pace, that legacy systems don’t bend easily, or that subtle implementation flaws create new attack surfaces. Privacy by design has to be boringly robust: no clever loopholes that clever actors will eventually exploit, no hidden centralization that a single subpoena can unravel, no performance penalties that make it impractical for high-volume settlement. It also has to speak the language of existing law (the Travel Rule, AML directives, whatever the local jurisdiction demands) without forcing everyone to rewrite their compliance manuals from scratch. That’s the part I remain cautious about. Theory is easy; real-world adoption, especially among institutions that move billions and answer to boards and auditors, is where most ideas quietly die.

Lately this line of thinking has me paying attention to what the Pixels team is putting together. Not with any breathless certainty, just the quiet interest of someone who’s seen infrastructure matter more than hype. The Pixels project feels like it’s grappling with these exact tensions, treating the space as regulated infrastructure rather than an escape hatch. I don’t claim to know every detail or outcome; that would be premature and I’ve been burned by overconfidence before. But the direction (building rails where privacy is native rather than an awkward exception) lines up with the practical frictions I keep running into. It’s the kind of long, unglamorous work that rarely makes headlines but could actually change how settlement, compliance, and daily usage feel in regulated environments.

The grounded takeaway, for me at least, is pretty narrow. The people who would actually use this are the ones already operating at the intersection of real money and real rules: institutions dipping into digital assets who need compliance that doesn’t paralyze them, builders shipping products for actual economies rather than pure speculation, and users in heavily regulated jurisdictions who want both legitimacy and a boundary around their personal data. It might work if it stays relentlessly practical: proving itself through small, boring pilots that regulators can kick the tires on, integrating with existing settlement flows without demanding the world rewrite its rulebooks, and keeping costs and complexity low enough that adoption feels inevitable rather than aspirational. What would make it fail are the usual quiet killers: underestimating regulatory inertia, letting implementation drift into something too complex or too centralized, or losing sight of human behavior by assuming perfect actors on all sides. I have seen it happen enough times that I stay skeptical by default.