Privacy by Design, Not by Exception:

Quiet Reflections on What Regulated Rewards Actually Need

I have been chewing on this for a while now, the kind of low-level irritation that shows up every time a player tries to turn in-game progress into something real. Last month it was a friend cashing out rewards from a Web3 game: nothing huge, just steady play, completed missions, and a bit of PIXEL earned. The exchange wanted full transaction histories, the tax authority in his jurisdiction started asking for player behavior logs, and the game’s own payout system suddenly felt exposed because every on-chain move was public by default. Two days of back-and-forth, extra KYC refreshes, and that familiar drag where the regulated path makes you wonder whether staying inside the rules is even worth the hassle. That’s where my mind keeps landing: not on grand theories about surveillance states or decentralized utopias, but on the everyday friction that real users, builders, and even regulators run into when value crosses from a game into regulated finance.

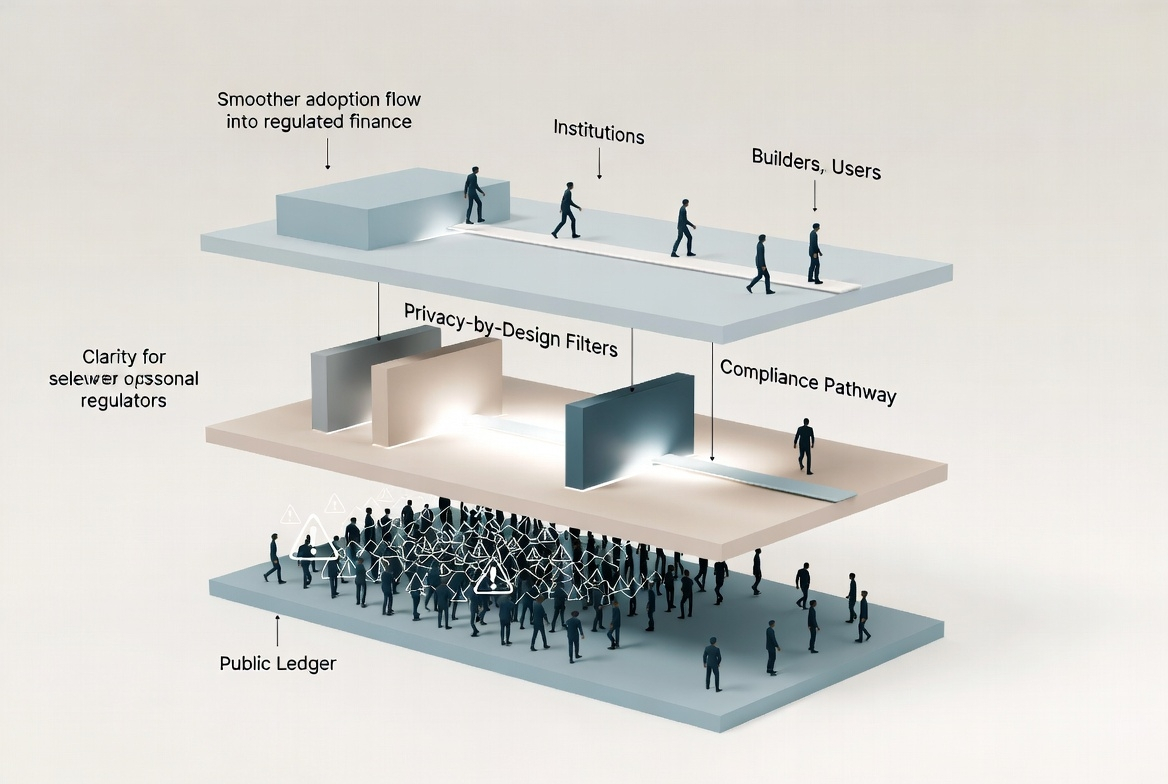

The problem is baked in from the start. Play-to-earn (or whatever we’re calling sustainable Web3 gaming these days) involves actual economic activity. Rewards have real-world value, cash-outs hit banks or exchanges, and suddenly you’re in the same regulatory bucket as remittances or securities: AML rules, Travel Rule obligations, tax reporting, sanctions screening. Regulators aren’t being unreasonable; we’ve seen enough rug pulls and wash trading to understand why they demand visibility. Builders trying to scale face their own bind. They want to build something fun and rewarding, but integrate with regulated rails and they end up collecting and exposing far more player data than feels proportional; stay too closed off and they never reach the volumes that make the economics work. Users, even the honest ones grinding missions day after day, end up handing over more personal and behavioral information than they would for a regular mobile game just to prove their earnings are legitimate. Human nature does what it always does: some route through gray-market off-ramps, others slow down or quit when the process feels too invasive, and a few simply accept the surveillance because the alternative is worse. None of this makes the ecosystem safer or more sustainable; it just creates more hidden costs and quiet resentment.

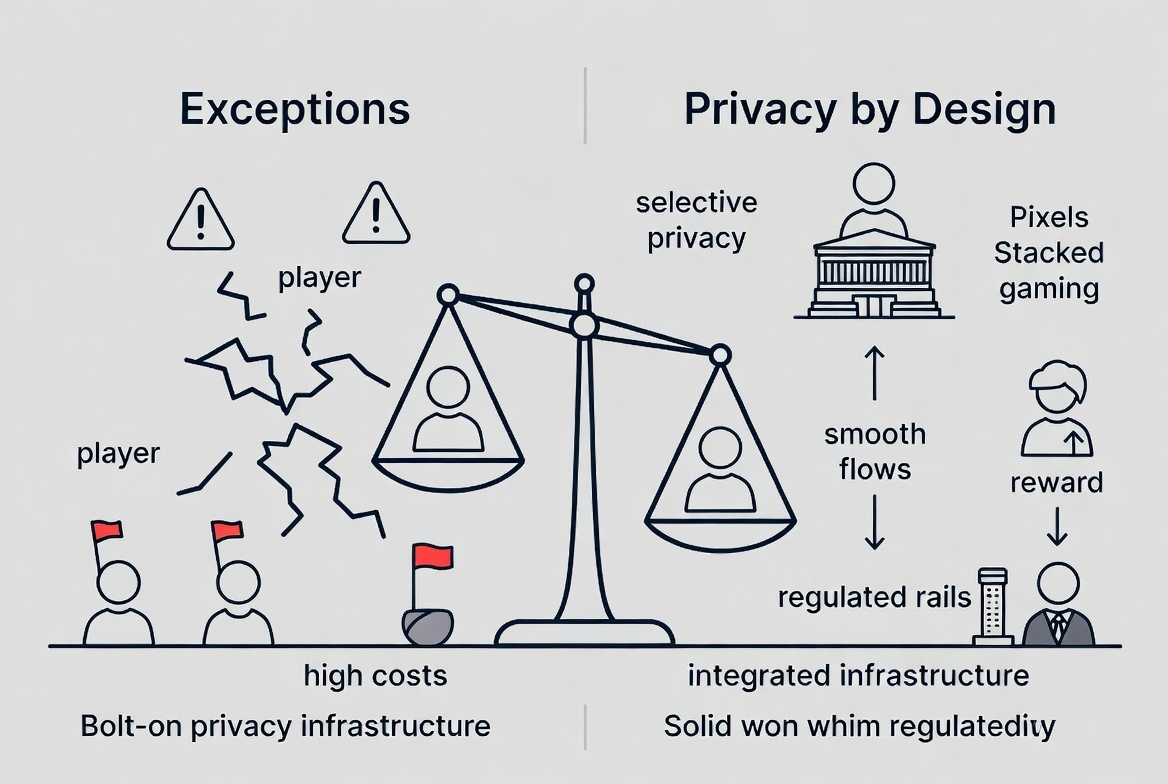

What gets me is how most privacy approaches in this space end up feeling like afterthoughts. They show up as exceptions: optional privacy toggles, separate sidechains, or third-party mixers that regulators immediately flag as suspicious. You opt in, and suddenly your wallet or player profile gets extra scrutiny, because choosing privacy looks like trying to hide something. Reward settlement becomes slower, not faster, because counterparties on the regulated side demand manual reviews. Compliance teams get flooded with data they can’t usefully parse, while the actual risks (bots, sybils, coordinated farming) still slip through. Costs stack up: legal opinions, audit fees, insurance against the chance that an “exception” blows up into a regulatory fine. I’ve watched enough systems crack under their own weight (early GameFi projects that started with good intentions around player ownership but ended up delisted or throttled because they couldn’t make privacy and compliance coexist) to know that bolt-on solutions rarely survive real pressure. They create two parallel worlds: a fully transparent, fully compliant lane that feels slow and invasive, and a semi-hidden lane that carries its own long-term risks. Neither works well for studios trying to build lasting economies, or for players who just want fair rewards without feeling constantly audited.

That’s why the idea of privacy by design keeps pulling at me, not as some flashy feature but as the only structure that might actually hold up when real money and real rules collide. Build it into the infrastructure layer so that compliance proofs are native and automated: it should be verifiable that the rules were followed without broadcasting every behavioral signal or personal detail to every node, every exchange, or every regulator. Reward settlement could move faster because checks are precise rather than blanket. Costs could drop because you’re no longer paying for endless data hoarding and human review of the overwhelmingly routine bulk of player activity. The law gets what it needs (assurance) without turning every gamer’s session data into public record. Human behavior shifts too: when the regulated path stops feeling like a panopticon, more people stay on it. Builders can focus on actual gameplay loops instead of compliance workarounds. Even regulators might find it easier to oversee something auditable by design than to keep chasing shadows.

I am not claiming this is simple or inevitable. I’ve seen too many infrastructure experiments promise seamless integration and then quietly stall, hitting regulatory inertia, legacy system mismatches, or subtle design flaws that create new vulnerabilities. Privacy by design has to be relentlessly boring and robust: no clever loopholes that get exploited later, no hidden points of centralization that a single subpoena can collapse, no performance hits that make it unusable for high-volume reward payouts. It also has to speak the language regulators already understand (local AML directives, tax reporting standards) without forcing every studio to rewrite its compliance playbook. That’s the part I stay cautious about. Theory sounds clean; actual adoption, especially once cash-outs start hitting traditional finance rails in volume, is where most ideas fade.

Lately this line of thinking has me watching what Pixel is doing with its Stacked system. Not with any certainty, just the steady interest of someone who’s seen these things play out before. From the outside, Stacked looks like a team treating rewards infrastructure seriously: retaining gameplay signals internally for better matching and fraud controls, explicitly not selling personal data to third parties, and building it as a shared layer across multiple games rather than as isolated gimmicks. It feels less like another hype cycle and more like an attempt at plumbing that could actually sit underneath regulated flows, with privacy native to the reward engine and compliance verifiable without full exposure. I don’t pretend to know every implementation detail or how it will hold up under heavier scrutiny; claiming that would be premature, and I’ve been disappointed enough times to stay skeptical by default. But the direction aligns with the practical frictions I keep running into when rewards cross into regulated territory: it treats the whole thing as infrastructure, not spectacle.

The grounded takeaway, at least for me, stays narrow. The people who would actually use something like this are the ones already living at the intersection of real play and real rules: players who want legitimate earnings they can cash out without constant surveillance, studios and builders trying to scale sustainable economies instead of chasing short-term extraction, and institutions or exchanges that might eventually integrate these flows if compliance feels defensible rather than burdensome. It might work if it stays relentlessly practical: proving itself through steady, boring iterations that regulators and partners can actually test, keeping costs and complexity low enough that adoption feels natural, and never losing sight of how human players actually behave when incentives are aligned. What would make it fail is the usual quiet stuff: underestimating how slowly regulated rails move, letting the implementation drift into something too complex or too centralized, or forgetting that no system survives if it assumes perfect actors on every side. I’ve watched it happen often enough that caution feels like the only honest posture.

Still, the alternative, treating privacy as a perpetual exception in Web3 gaming, feels increasingly unsustainable.

The friction is real, the costs keep climbing, and the behavior it encourages isn’t building anything durable. If what Pixel is building with Stacked can shift even part of that dynamic toward infrastructure that works with regulated reality instead of against it, that would be worth paying attention to. Not exciting in the headline sense. Just useful. And in this space, useful is rare enough to notice.