Habibies, let me put this simply: I thought a “rewarded LiveOps engine” was just another dressed-up feature. A nicer UI for handing out incentives. Maybe a smarter task board.

It’s not.

It’s someone trying to fix a system that never actually worked.

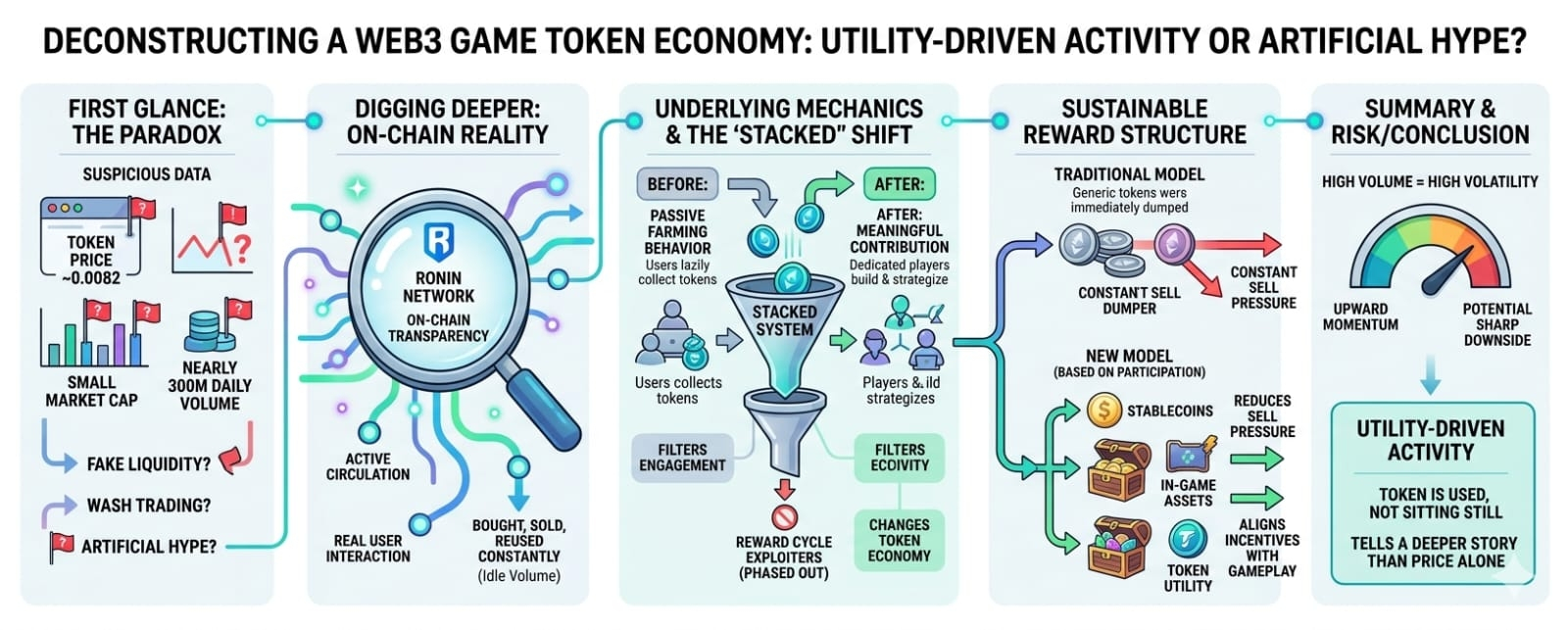

Because the real problem in games—especially anything touching play-to-earn—was never rewards themselves. It was distribution. Who gets rewarded, when they get it, and why they get it.

Most systems treated rewards like a faucet. Always on. Poorly controlled. And the outcome was predictable. A small group captured most of the value—bots, grinders, or hyper-optimized players—while everyone else slowly disengaged.

When 20% of players take 80% of rewards, that’s not imbalance. That’s design failure.
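To make that 80/20 figure concrete, here is a minimal sketch of how you might measure reward concentration from a list of payouts. The function name `top_share` and the sample numbers are made up for illustration; they are not data from any real game.

```python
def top_share(rewards, top_fraction=0.2):
    """Return the fraction of total rewards captured by the top players.

    `rewards` is a list of per-player payout totals (hypothetical data).
    """
    if not rewards:
        raise ValueError("rewards must be non-empty")
    total = sum(rewards)
    if total <= 0:
        raise ValueError("total rewards must be positive")
    ranked = sorted(rewards, reverse=True)
    # Always count at least one player, even for tiny populations.
    k = max(1, round(len(ranked) * top_fraction))
    return sum(ranked[:k]) / total


# Illustrative payouts: two heavy farmers and eight casual players.
payouts = [400, 400, 25, 25, 25, 25, 25, 25, 25, 25]
print(top_share(payouts))  # 0.8
```

A number like this is only a symptom check. It tells you value is concentrating, not why.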

What systems like “Stacked” do is reframe rewards entirely. On the surface, it still looks simple: complete tasks, earn rewards across games. But underneath, something more precise is happening.

An AI layer is deciding which tasks exist, who sees them, and when they appear.

That last part—timing—is doing most of the work.

Because a reward given at the wrong moment is wasted. But given at the right moment, it becomes leverage.

If a player is about to churn, a reward isn’t a bonus anymore. It’s retention. If a player is already highly engaged, over-rewarding them can actually reduce long-term value by flattening progression.

So instead of broadcasting incentives across the entire player base, the system narrows its focus. Right player, right moment.
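A rough sketch of that targeting logic might look like the following. Everything here is an assumption for illustration: the `PlayerState` fields, the thresholds, and the multipliers are invented, and nothing in it describes how Stacked actually scores players.

```python
from dataclasses import dataclass


@dataclass
class PlayerState:
    churn_risk: float   # hypothetical model output in [0, 1]
    engagement: float   # hypothetical normalized activity score in [0, 1]


def reward_multiplier(player, churn_threshold=0.6, engaged_threshold=0.8):
    """Scale a base reward up or down depending on where the player is."""
    if player.churn_risk >= churn_threshold:
        return 1.5  # about to leave: the reward is doing retention work
    if player.engagement >= engaged_threshold:
        return 0.5  # already hooked: avoid flattening progression
    return 1.0      # everyone else gets the baseline


print(reward_multiplier(PlayerState(churn_risk=0.7, engagement=0.2)))  # 1.5
print(reward_multiplier(PlayerState(churn_risk=0.1, engagement=0.9)))  # 0.5
```

A real system would presumably replace the hard thresholds with learned scores, but the shape of the decision is the same: the reward depends on the player's state, not just on the task.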

It sounds clean. What it really means is constant behavioral adjustment.

Early data from systems like this often shows retention lifts in the 15–30% range when rewards are targeted instead of uniform. The spread inside that range matters more than it looks.

At 15%, you stabilize a game.

At 30%, you reshape its entire growth curve.

And the difference between those outcomes comes down to how well the system understands player intent.

That’s where the idea of an “AI game economist” stops sounding like a buzzword.

Traditionally, game economists design reward loops manually. They monitor inflation, tweak drop rates, and react to imbalances over time. Updates happen weekly, sometimes monthly.

But player behavior shifts daily.

That gap has always been the weakness.

An AI-driven system compresses that loop. Instead of reacting after the fact, it adjusts in real time. If a task is being over-farmed, exposure drops. If a feature isn’t getting traction, rewards are attached to guide players toward it.
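One simple way to picture that loop is a proportional controller on task exposure. This is a sketch under stated assumptions, not the actual mechanism: `adjust_exposure`, the target completion rate, and the step size are all hypothetical, and raising exposure for under-used tasks is my own simplification of "attaching rewards" to features that lack traction.

```python
def adjust_exposure(exposure, completion_rate, target_rate=0.3,
                    step=0.5, floor=0.05, ceiling=1.0):
    """Nudge the share of players who see a task toward a target completion rate.

    exposure:        current fraction of players shown the task (0..1)
    completion_rate: observed fraction of those players completing it
    """
    # Positive error means the task is under-used; negative means over-farmed.
    error = target_rate - completion_rate
    new_exposure = exposure * (1 + step * error / target_rate)
    # Clamp so a task is never hidden entirely or shown to more than everyone.
    return min(ceiling, max(floor, new_exposure))


# An over-farmed task: completion is double the target, so exposure halves.
print(adjust_exposure(0.8, completion_rate=0.6))
```

Run on every reporting interval, an update like this is what turns a static task board into the moving surface described next.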

What looks like a static task board is actually a moving surface.

Constantly reshaped underneath.

That creates a second-order effect: scale.

Instead of designing 10–20 meaningful tasks per day, systems like this can generate hundreds. Not just more tasks, but more variation. More personalization.

But scale alone isn’t the advantage.

Relevance is.

Two hundred tasks only work if each one feels like it belongs to the player seeing it. Otherwise, it collapses into noise. And players are very good at ignoring noise.

Then there’s the economic layer, the part where most systems fail.

Real-money rewards introduce pressure that most game economies can’t handle. In-game inflation is manageable. Real-world value leakage isn’t.

Too generous, and the system collapses.

Too conservative, and players leave.

That tension has killed most play-to-earn models.

What’s different here is how rewards are framed. They’re no longer just costs. They’re treated as investments tied to measurable outcomes—retention, revenue, lifetime value.

If a $1 reward increases expected lifetime value by $3, it makes sense.

If it doesn’t, it gets adjusted.

Quietly. Continuously.
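The arithmetic behind that is easy to sketch. In the snippet below, `reward_roi` and `next_reward` are hypothetical helpers, and the 20% cut applied to an underperforming reward is an arbitrary illustrative choice rather than anything the source specifies.

```python
def reward_roi(cost, ltv_lift):
    """Return on a reward: net lifetime-value gain per dollar spent."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (ltv_lift - cost) / cost


def next_reward(cost, ltv_lift, min_roi=0.0, cut=0.8):
    """Keep a reward that pays for itself; otherwise shrink it."""
    if reward_roi(cost, ltv_lift) > min_roi:
        return cost
    return round(cost * cut, 2)


print(reward_roi(1.0, 3.0))   # 2.0 -> a $1 reward that adds $3 of expected LTV
print(next_reward(1.0, 3.0))  # 1.0 -> kept as-is
print(next_reward(1.0, 0.5))  # 0.8 -> trimmed because it loses money
```

The hard part in practice is not this calculation but estimating `ltv_lift` credibly in the first place.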

But that introduces a different kind of risk.

When everything is optimized for measurable lift, systems tend to favor short-term gains over long-term experience. Players may stay longer. They may spend more.

But something subtle starts to flatten.

The edges of the game—the unpredictability, the friction, the texture—begin to fade.

Everything becomes efficient.

Not necessarily meaningful.

That’s why experience matters here. The team behind Pixels has already lived through a full cycle of play-to-earn hype, explosive growth, and correction.

At its peak, Pixels reached over a million daily active users. And like many systems driven by incentives, that scale didn’t hold cleanly.

It unraveled where incentives and player behavior fell out of alignment.

So what’s being built now doesn’t feel theoretical. It feels reactive. Learned.

At the same time, the broader market is shifting. Traditional studios are cautiously re-exploring incentives, while Web3-native projects are moving away from open farming toward more controlled systems.

You can see it in tighter token models. Conditional rewards. Reduced emissions.

There’s a convergence happening.

Systems like Stacked sit in the middle—blending LiveOps discipline with economic awareness.

If it works, it doesn’t just improve play-to-earn.

It changes how incentives are used across games entirely.

Because once rewards can be measured with precision, they stop being guesses.

They become tools.

And tools spread.

But there’s still an open question.

How much control is too much?

At what point does a system stop feeling responsive and start feeling engineered?

If every action is subtly guided, does the experience lose something human?

Or does it simply become more adaptive?

So far, players don’t seem to mind—as long as rewards feel fair and progression feels natural.

But that balance is fragile.

Push too far, and the system becomes visible.

And once players can clearly see the system, they stop playing the game.

They start playing the system.

If this model holds, the future of game economies won’t be defined by how much they give away.

But by how precisely they give it.

And that shift is quieter than it sounds.

Because the real change isn’t rewards.

It’s control.