@Mira - Trust Layer of AI #Mira $MIRA

Introduction

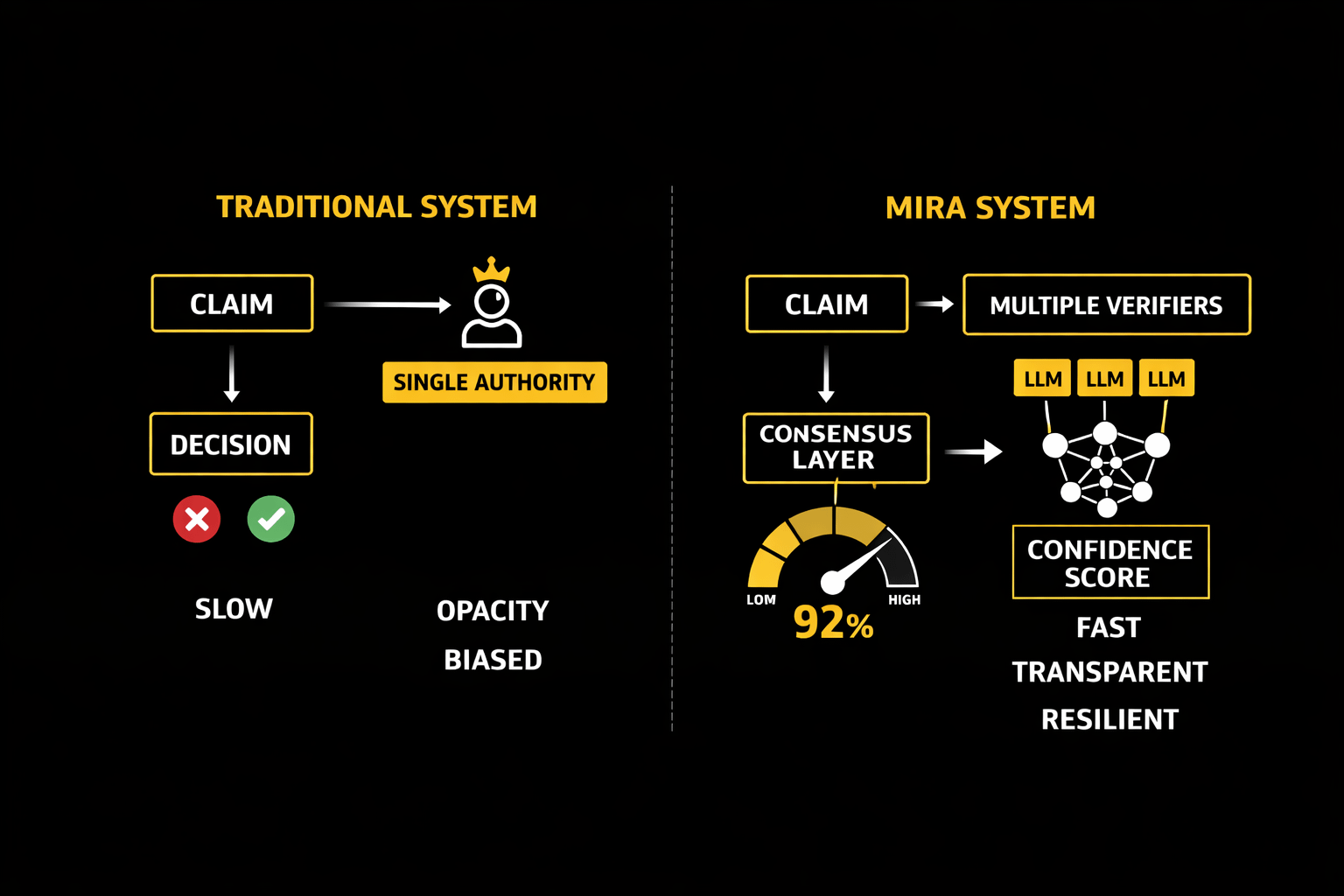

As artificial intelligence becomes a primary source of information, the challenge is no longer access to answers but trust in them. Traditional fact-checking systems rely on centralized authorities, human reviewers, or single-model outputs. These methods often struggle with bias, delays, and limited scalability. Mira introduces a decentralized verification framework that replaces single-point validation with consensus-driven analysis from multiple independent language models, so that no single reviewer or model decides what counts as reliable.

The Limits of Centralized Fact-Checking

Centralized verification works like a gatekeeper system: a claim is submitted, reviewed by one authority, and approved or rejected. While straightforward, this structure has three core weaknesses. First, it creates a bottleneck, slowing response time. Second, it concentrates decision-making power, which increases the risk of bias or manipulation. Third, it lacks transparency because users cannot easily inspect how conclusions were reached. In fast-moving digital environments, these limitations make centralized methods increasingly impractical.

Mira’s Distributed Verification Model

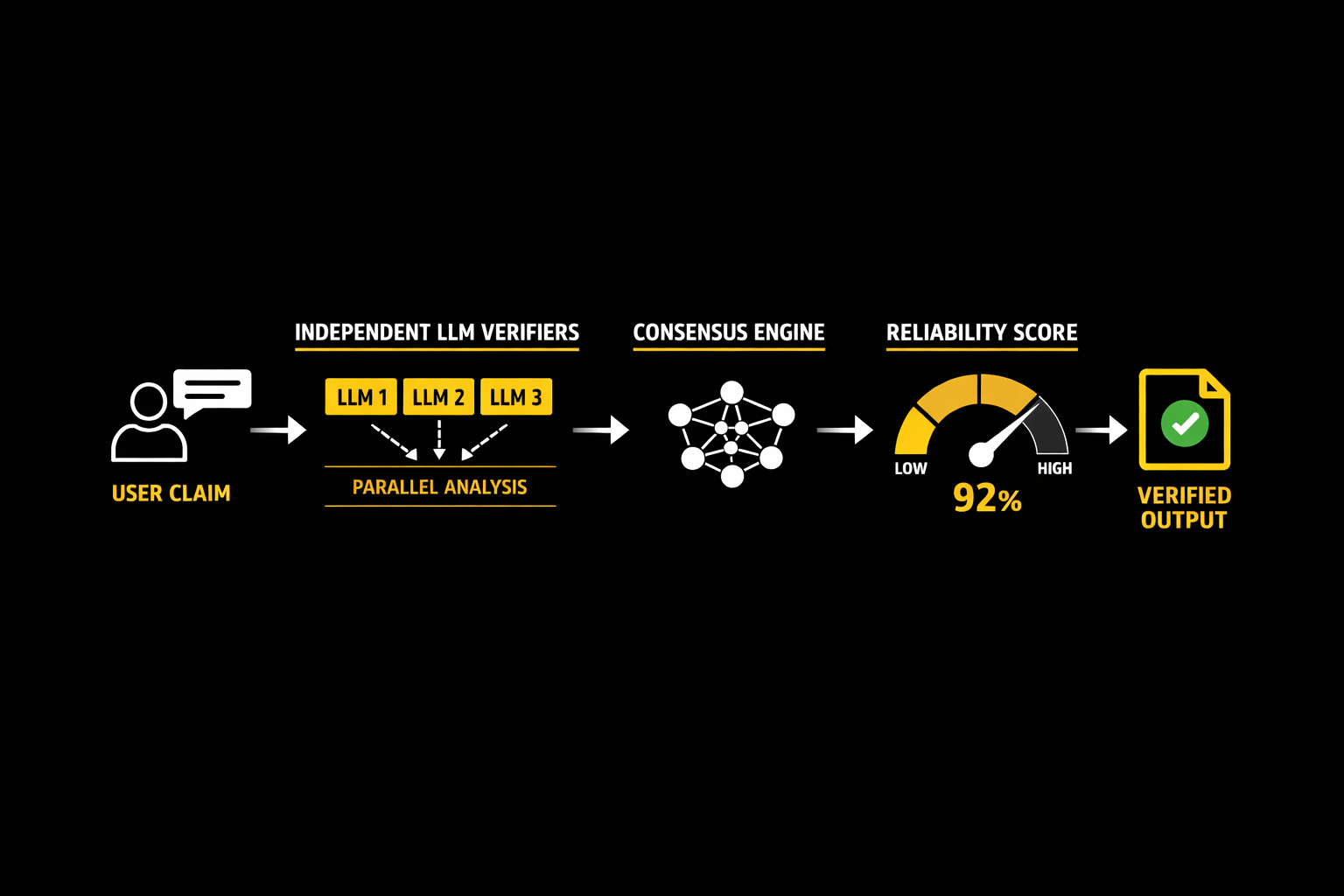

Mira replaces the single authority with a network of independent AI verifiers. Each model analyzes the same claim separately, using different training data, architectures, or reasoning paths. Instead of producing one answer, the system generates multiple assessments. These are then passed into a consensus engine that compares results, identifies agreement patterns, and calculates a reliability score.
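To make the flow concrete, here is a minimal sketch of a consensus step: several verifiers each return a verdict on the same claim, and the engine reports the majority verdict together with the share of verifiers that agree with it. The `Assessment` type, the verifier names, and the `consensus` function are hypothetical names chosen for illustration. This is not Mira's actual implementation, which the article does not describe at this level of detail.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Assessment:
    verifier: str  # hypothetical verifier name
    verdict: str   # e.g. "true" or "false"


def consensus(assessments):
    """Return (majority verdict, share of verifiers that agree with it)."""
    if not assessments:
        raise ValueError("need at least one assessment")
    counts = Counter(a.verdict for a in assessments)
    verdict, votes = counts.most_common(1)[0]
    return verdict, votes / len(assessments)


# Three illustrative verifiers assess the same claim independently.
assessments = [
    Assessment("model_a", "true"),
    Assessment("model_b", "true"),
    Assessment("model_c", "false"),
]
verdict, score = consensus(assessments)
print(verdict, round(score, 2))  # true 0.67
```

A simple majority vote is only one possible agreement rule. A real system could weight verifiers differently or compare their reasoning rather than only their final verdicts.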

This parallel validation structure mirrors scientific peer review: conclusions gain credibility when multiple independent evaluators reach similar results. Because no single model controls the outcome, an error or hallucination from one system is outvoted by the others, provided the verifiers do not all make the same mistake.
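A short calculation shows why independent agreement helps. The sketch below assumes an idealized case: each of `n` verifiers is wrong with the same probability `p`, and their errors are fully independent. Under that assumption, the chance that a majority is wrong follows a binomial distribution. The function name and the 20% error rate are illustrative assumptions, and real models that share training data will have correlated errors, so the actual benefit is smaller than this suggests.

```python
from math import comb


def majority_error(n, p):
    """Probability that more than half of n independent verifiers are wrong,
    when each one errs with probability p (idealized assumption)."""
    need = n // 2 + 1  # smallest number of wrong verifiers that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


for n in (1, 3, 5):
    print(n, f"{majority_error(n, 0.2):.4f}")
# 1 0.2000
# 3 0.1040
# 5 0.0579
```

With these assumed numbers, moving from one verifier to five cuts the chance of a wrong majority from 20% to under 6%.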

The Power of Diverse LLM Verifiers

Diversity is the core strength of Mira’s mechanism. Different language models interpret prompts differently, detect inconsistencies uniquely, and cross-check facts against varied knowledge representations. When these perspectives converge, confidence increases. When they diverge, the system flags uncertainty rather than presenting a potentially false claim as fact.
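The "flag uncertainty when verifiers diverge" behavior can be sketched as a threshold on agreement. The `classify` function and the 0.75 threshold below are assumptions for illustration, not values taken from Mira.

```python
from collections import Counter


def classify(verdicts, threshold=0.75):
    """Return the majority verdict if agreement meets the threshold,
    otherwise return "uncertain"."""
    if not verdicts:
        return "uncertain"
    top, votes = Counter(verdicts).most_common(1)[0]
    agreement = votes / len(verdicts)
    return top if agreement >= threshold else "uncertain"


print(classify(["true", "true", "true", "true"]))    # true
print(classify(["true", "false", "true", "false"]))  # uncertain
```

Raising the threshold trades coverage for caution: more claims come back as uncertain, but fewer weakly supported claims are presented as fact.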

This diversity also improves robustness against adversarial manipulation. A malicious input designed to trick one model is less likely to deceive every model in a diverse network at the same time. As a result, the system can be more resilient than any standalone AI.

Transparency and Trust Scoring

Another advantage is measurable trust. Instead of a simple true/false label, Mira outputs a reliability score derived from consensus strength, source agreement, and reasoning consistency. Users can see not just the answer but how strongly it is supported. This transforms verification from a hidden process into an auditable one.
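One simple way to combine the three signals named above is a weighted average. The sketch below assumes each signal has already been normalized to the range 0 to 1. The function name, the weights, and the sample values are hypothetical, since the article does not state how Mira actually computes its score.

```python
def reliability_score(consensus_strength, source_agreement, reasoning_consistency,
                      weights=(0.5, 0.3, 0.2)):
    """Weighted average of three signals, each expected in [0, 1].
    The default weights are illustrative assumptions."""
    signals = (consensus_strength, source_agreement, reasoning_consistency)
    if any(not 0.0 <= s <= 1.0 for s in signals):
        raise ValueError("each signal must be in [0, 1]")
    # Divide by the weight total so the result stays in [0, 1]
    # even if the weights do not sum to 1.
    return sum(w * s for w, s in zip(weights, signals)) / sum(weights)


score = reliability_score(0.8, 0.9, 0.6)
print(round(score, 2))  # 0.79
```

Exposing the individual signals alongside the final number is what makes the result auditable: a reader can see whether a score of 0.79 comes from strong consensus with weak reasoning consistency, or the reverse.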

Why Consensus Beats Authority

The key difference between Mira and traditional fact-checking is philosophical as well as technical. Centralized systems assume authority guarantees truth. Mira assumes truth emerges from independent agreement. In complex information ecosystems, the latter model scales better, adapts faster, and reduces systemic bias.

Conclusion

Decentralized AI verification represents a shift from trust by reputation to trust by computation. By orchestrating multiple independent verifiers and synthesizing their judgments through consensus, Mira provides a more transparent, scalable, and reliable method for validating information. As AI continues to shape how people learn and decide, systems built on distributed verification may become the new standard for digital truth.