@Mira - Trust Layer of AI #Mira $MIRA

Introduction: The Hallucination Problem in AI

Artificial intelligence systems can generate fluent and convincing responses, yet they sometimes produce statements that are incorrect, unsupported, or fabricated. These errors are commonly called hallucinations. They pose a serious challenge, especially in fields like education, research, healthcare, and finance, where accuracy is critical. As AI adoption grows, reducing hallucinations has become one of the industry's most pressing technical priorities.

What Is Mira’s Core Idea?

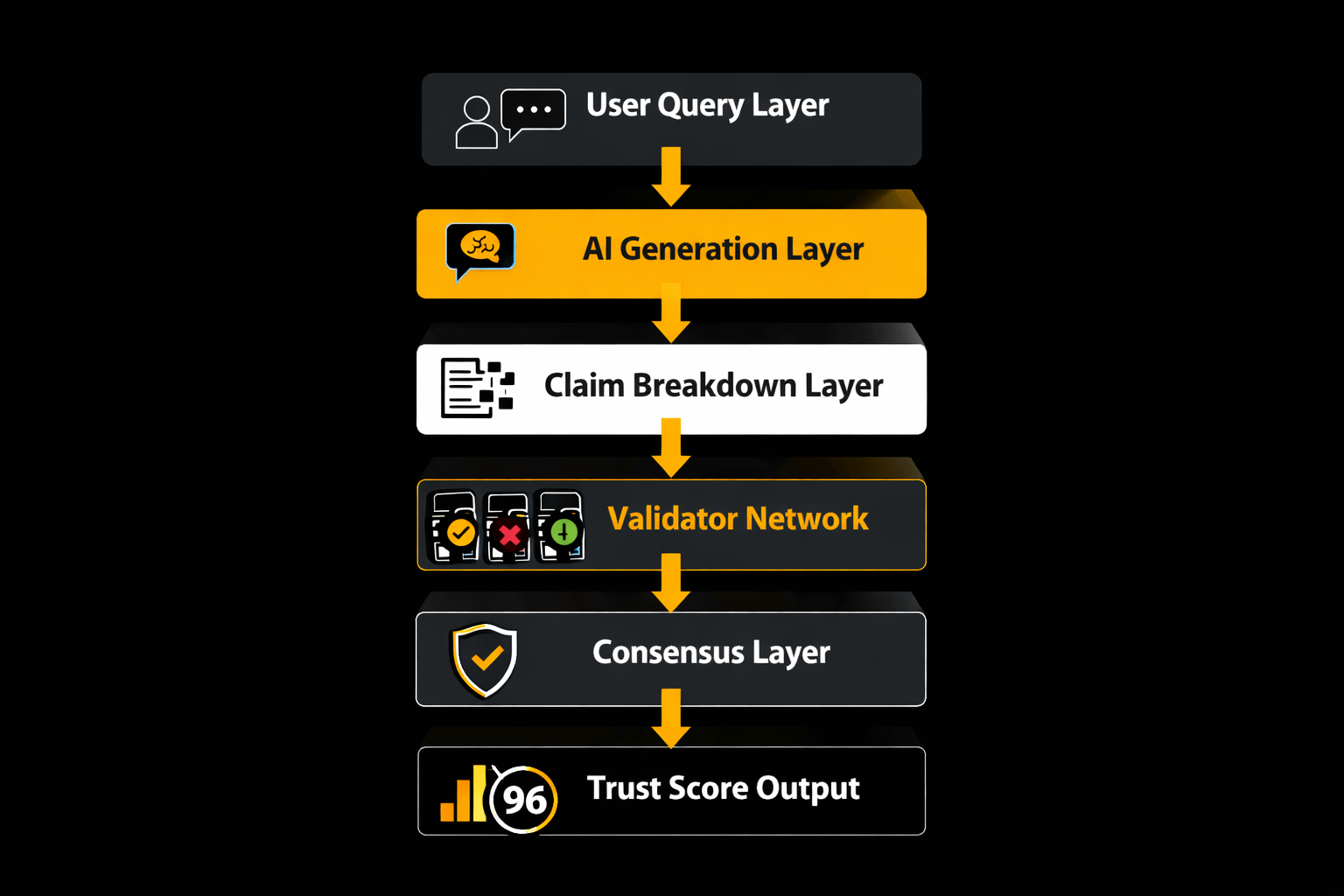

Mira operates as a verification layer rather than a standalone AI model. Instead of generating answers itself, it checks the outputs of other AI systems before users see them. Its key innovation is transforming long responses into smaller factual units and validating each piece independently through multiple systems. This layered approach improves reliability because a single mistake can be isolated to one claim instead of compromising the entire answer.

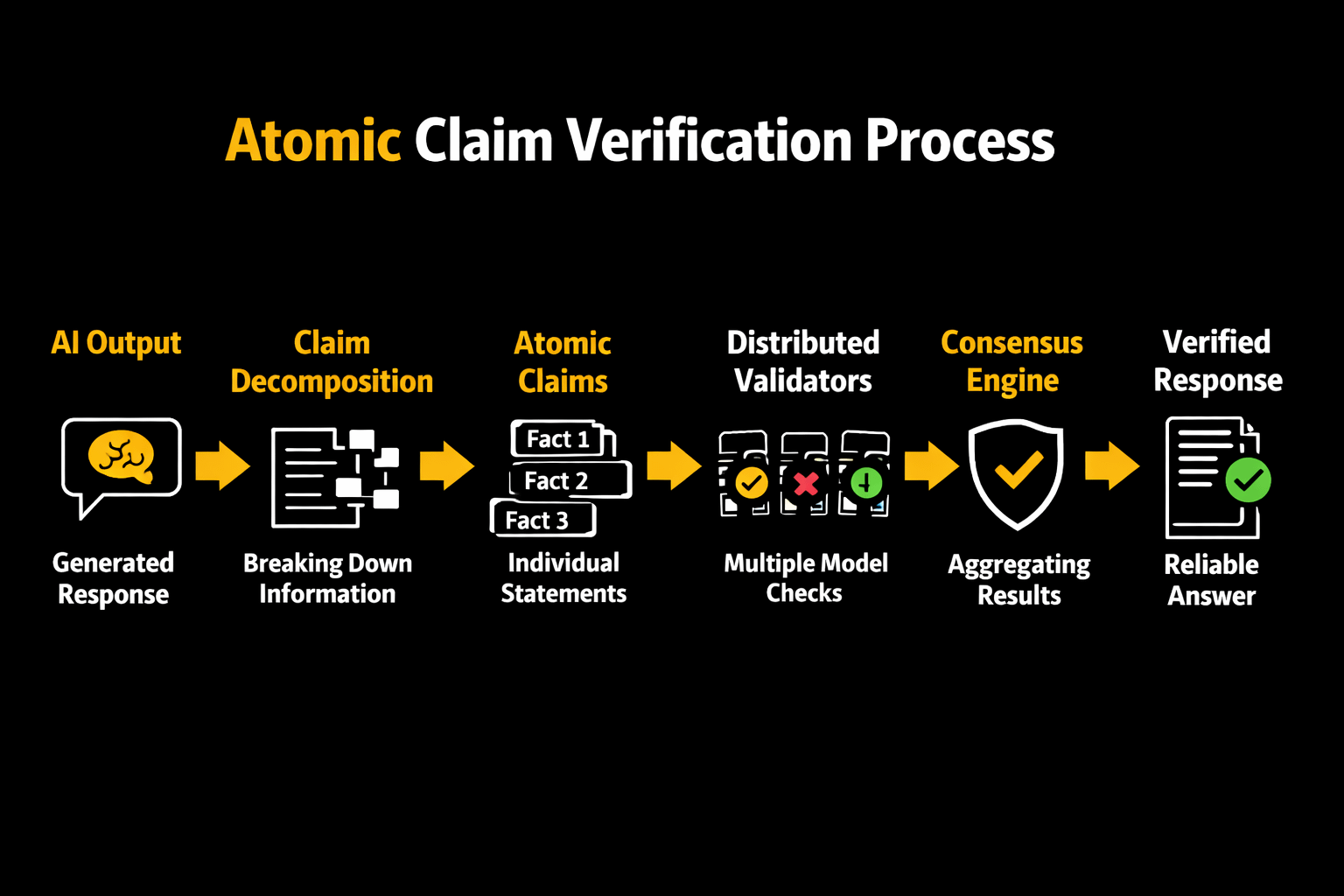

Step 1 — Claim Decomposition: Turning Text into Atomic Facts

The first stage is claim decomposition. Rather than analyzing a paragraph as a whole, Mira splits it into discrete factual statements known as atomic claims. Each claim contains one verifiable fact.

For instance, the sentence "The Earth revolves around the Sun and the Moon revolves around the Earth" contains two facts, so it is separated into two statements that can be tested independently. This method pinpoints which specific detail is incorrect instead of blindly accepting or rejecting the whole response. It also improves transparency because users can see exactly which claims passed verification.
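
To make the idea concrete, here is a minimal Python sketch of claim decomposition. It is a toy, rule-based splitter written for illustration only: the function name `decompose` and the splitting rules are assumptions, not Mira's actual method, and a production system would more likely rely on a language model to extract claims.

```python
import re

def decompose(text: str) -> list[str]:
    """Toy decomposition (hypothetical): split text into sentences,
    then split each sentence on ';' or the conjunction 'and'."""
    claims = []
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        sentence = sentence.strip().rstrip(".!?")
        if not sentence:
            continue
        for part in re.split(r";\s*|,?\s+and\s+", sentence):
            part = part.strip()
            if part:
                # Normalize each claim: capital first letter, final period.
                claims.append(part[0].upper() + part[1:] + ".")
    return claims

claims = decompose(
    "The Earth revolves around the Sun and the Moon revolves around the Earth."
)
print(claims)
# ['The Earth revolves around the Sun.', 'The Moon revolves around the Earth.']
```

Splitting on "and" is deliberately naive and would wrongly break a phrase like "salt and pepper", which is one reason real decomposition needs more than string rules.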

Step 2 — Distributed Validation Across Models

Once the text is divided, each atomic claim is sent to multiple independent validator systems. These validators may use different architectures, datasets, or reasoning methods. Each evaluates the claim and labels it true, false, or uncertain.

The platform then aggregates their judgments and applies a consensus rule. If enough validators agree, the claim is accepted. If not, it is flagged or rejected. Because the validators are independent, weaknesses or biases in one model are balanced by others, reducing the chance of shared error.
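
The aggregation step can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `aggregate`, the default two-thirds threshold, and the exact verdict labels are hypothetical, since the text above does not specify the consensus rule Mira uses.

```python
from collections import Counter

def aggregate(votes: list[str], threshold: float = 2 / 3) -> str:
    """Combine validator labels ('true', 'false', 'uncertain') for one claim.

    The threshold is an assumed parameter, not a documented Mira value.
    """
    if not votes:
        return "flagged"  # no validators answered, so nothing can be decided
    counts = Counter(votes)
    total = len(votes)
    if counts["true"] / total >= threshold:
        return "accepted"
    if counts["false"] / total >= threshold:
        return "rejected"
    return "flagged"  # no label reached the required level of agreement

print(aggregate(["true", "true", "true", "true", "false"]))       # accepted
print(aggregate(["true", "true", "true", "false", "false"]))      # flagged
print(aggregate(["false", "false", "false", "false", "uncertain"]))  # rejected
```

A stricter threshold trades more flagged claims for fewer wrongly accepted ones, so the right value depends on how costly an error is in the target domain.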

Step 3 — Consensus Certificates and Auditability

After validation, the system generates a verification record showing how each claim was evaluated. This creates an auditable trail that developers, researchers, or regulators can inspect. Instead of trusting an opaque output, users gain visibility into how conclusions were reached and which claims were supported.
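
One way to picture such a record is a small data structure that stores each claim, the individual validator votes, and the final verdict, plus a hash so later tampering is detectable. The sketch below is an assumption for illustration: the names `ClaimRecord` and `build_certificate`, the field layout, and the use of SHA-256 are hypothetical and do not describe Mira's actual certificate format.

```python
import hashlib
import json
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class ClaimRecord:
    claim: str
    votes: dict   # validator id -> "true" / "false" / "uncertain"
    verdict: str  # e.g. "accepted", "rejected", "flagged"

def build_certificate(records: list[ClaimRecord]) -> dict:
    """Bundle per-claim records with a digest of their canonical JSON form."""
    body = [asdict(r) for r in records]
    # Sorted keys and fixed separators give the same bytes for the same data.
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return {"claims": body, "sha256": digest}

record = ClaimRecord(
    claim="The Moon revolves around the Earth.",
    votes={"validator_a": "true", "validator_b": "true", "validator_c": "uncertain"},
    verdict="accepted",
)
certificate = build_certificate([record])
print(certificate["claims"][0]["verdict"])  # accepted
```

Anyone holding the certificate can recompute the digest from the listed claims and compare it with the stored value to confirm the record has not been altered.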

Why Atomic Verification Works

Breaking complex statements into atomic claims improves accuracy for a simple reason: smaller facts are easier to verify than large arguments. When multiple validators check each claim and their errors are largely independent, a false claim is accepted only if most of them make the same mistake at once, so the probability of false information slipping through drops sharply as validators are added. This statistical advantage is what can make decentralized verification more reliable than a single-model response.
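
A short calculation shows the size of this effect. The sketch below assumes a simple majority vote, validators that err independently, and an illustrative 10% error rate per validator; none of these figures come from Mira, and the function name `majority_error` is hypothetical.

```python
from math import comb

def majority_error(p: float, n: int) -> float:
    """Probability that more than half of n independent validators are wrong,
    when each one errs with probability p (binomial model)."""
    k_min = n // 2 + 1  # smallest number of wrong votes that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))

for n in (1, 3, 5):
    print(n, f"{majority_error(0.10, n):.5f}")
# 1 0.10000
# 3 0.02800
# 5 0.00856
```

Under these assumptions, going from one validator to five cuts the chance of a wrong majority from 10% to under 1%. The gain shrinks if validators share training data or biases, because their errors are then correlated rather than independent.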

Conclusion

Claim decomposition represents a structural shift in how AI reliability is achieved. By converting complex responses into verifiable atomic statements and validating them through distributed consensus, Mira transforms uncertain AI outputs into evidence-backed information.

The broader takeaway is that trustworthy AI will not depend only on larger models, but on smarter systems that verify every claim before it reaches the user.