Earlier today, I was looking through some notes on various AI projects, and something kept coming to mind: AI is becoming ridiculously powerful. You ask it a complex question, and it responds instantly. But there’s still a gap.

We’re trusting the answers more than we’re verifying them.

That’s the challenge Mira Network seems to be addressing.

Treating AI Responses Like Claims

One thing that really stood out to me about Mira is how it treats AI outputs. Instead of accepting an AI's response as a final answer, Mira treats it as a claim that needs verification.

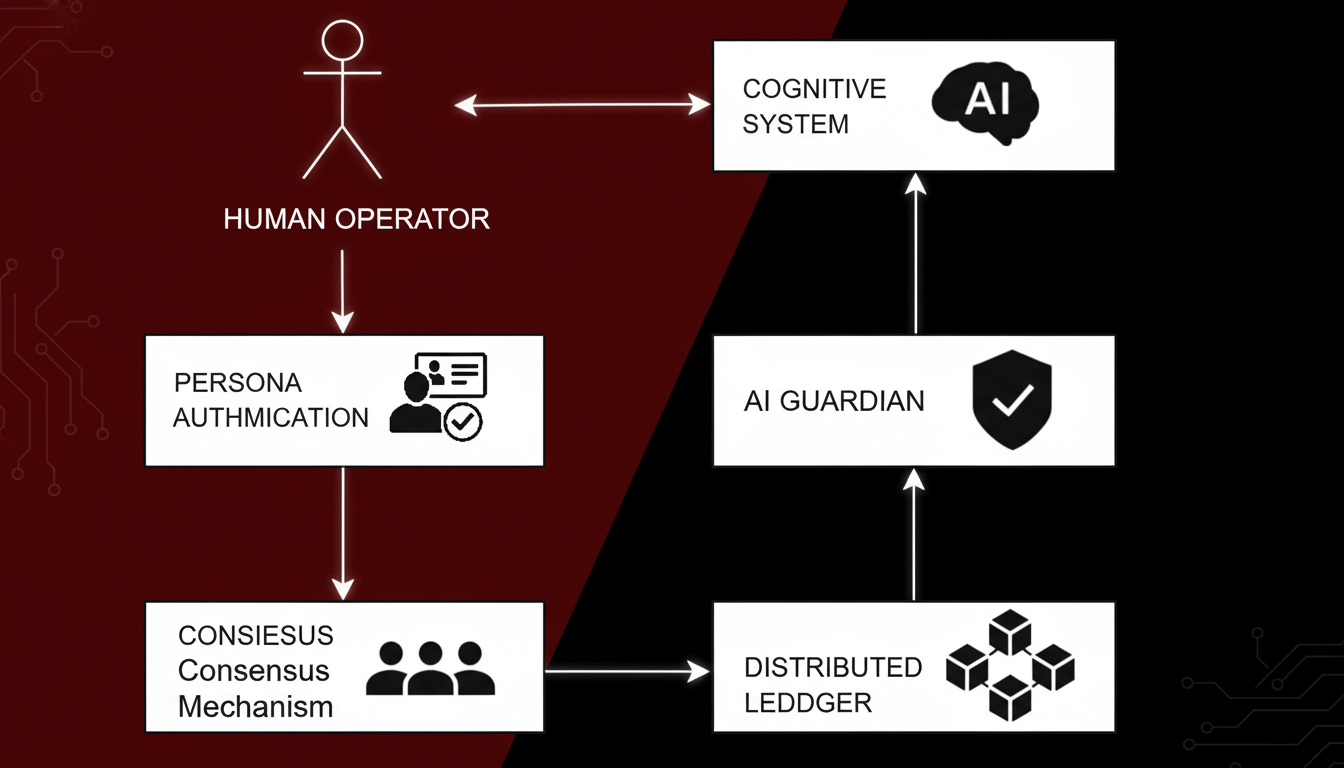

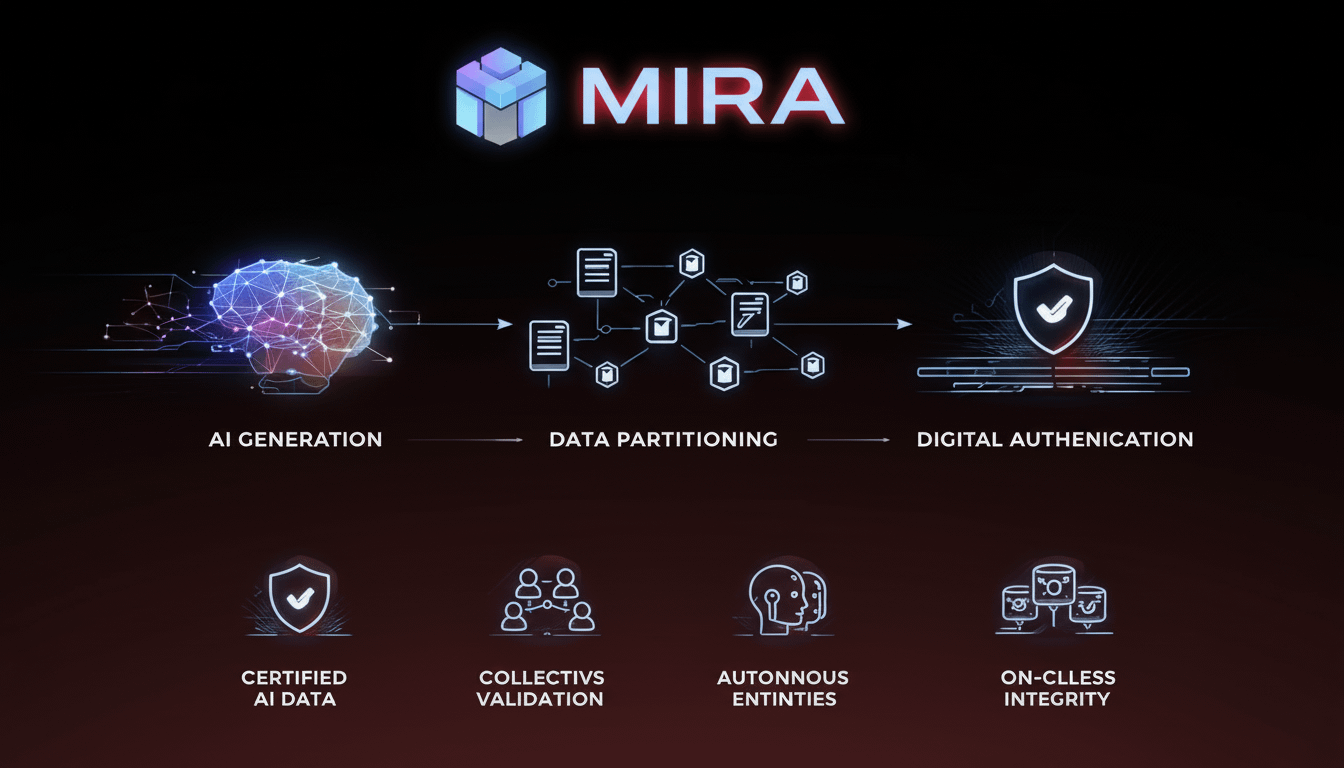

This small shift really changes how things work. If an AI generates a detailed response, Mira breaks it down into smaller claims. Each of these claims is then checked independently by different models or validators in the network.

This means instead of relying on one system, you get multiple checks on the same information.
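To make the idea concrete, here is a rough sketch of what "split into claims, then check each one with several independent verifiers" could look like. This is not Mira's actual code or API. The sentence-based splitter, the toy verifiers, the `known_facts` set, and the two-thirds agreement threshold are all assumptions made up for illustration.

```python
from collections import Counter
from typing import Callable, Dict, List

# A verifier is any callable that labels a claim "true" or "false".
# In a real network these would be separate models; here they are toy functions.
Verifier = Callable[[str], str]


def split_into_claims(response: str) -> List[str]:
    """Naive splitter (assumption): treat each sentence as one claim.

    Splitting on "." breaks on decimals and abbreviations; it is only a stand-in.
    """
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> Dict:
    """Collect one vote per verifier and accept the majority label
    only if its share of the votes reaches the threshold."""
    votes = [verifier(claim) for verifier in verifiers]
    label, count = Counter(votes).most_common(1)[0]
    agreed = count / len(votes) >= threshold
    return {"claim": claim, "votes": votes, "verdict": label if agreed else "no consensus"}


def verify_response(response: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> List[Dict]:
    """Verify every claim in a response independently."""
    return [verify_claim(claim, verifiers, threshold) for claim in split_into_claims(response)]


# Hypothetical toy verifiers, each with a different (and imperfect) strategy.
known_facts = {"Paris is the capital of France"}


def strict(claim: str) -> str:
    return "true" if claim in known_facts else "false"


def lenient(claim: str) -> str:
    return "true" if "capital" in claim else "false"


def skeptic(claim: str) -> str:
    return "false"


if __name__ == "__main__":
    answer = "Paris is the capital of France. Berlin is the capital of Spain."
    for result in verify_response(answer, [strict, lenient, skeptic]):
        print(result["claim"], "->", result["verdict"])
```

Notice that no single verifier here is reliable on its own: `lenient` accepts anything mentioning a capital and `skeptic` rejects everything. The verdict only comes from agreement across them, which is the point of checking with more than one system.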

Why It Matters

If you’ve been using AI tools long enough, you’ve probably seen this yourself. Sometimes the answers are spot-on. Other times, they sound great, but turn out to be completely off.

That’s the strange part about AI now — it delivers answers with such confidence, but that doesn’t always mean they’re right.

What Mira is working on is making sure AI’s answers don’t immediately become accepted as truth. Instead, they go through a verification process from several different sources.

The Infrastructure Side

Another interesting aspect of Mira is that it records the verification process transparently. Rather than happening behind the scenes, each check leaves an audit trail that shows exactly how a claim was checked and confirmed.
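I don't know the details of how Mira stores these records, so treat the following as one generic way an audit trail can be made tamper-evident, not as Mira's design. It assumes a simple hash chain: every record includes the hash of the previous one, so changing an old entry breaks every hash after it. The record fields (`claim`, `votes`, `verdict`) and function names are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(body: Dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(trail: List[Dict], claim: str, votes: Dict[str, str], verdict: str) -> Dict:
    """Append a verification record that is linked to the previous one by hash."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS_HASH
    body = {"claim": claim, "votes": votes, "verdict": verdict, "prev_hash": prev_hash}
    record = dict(body, hash=record_hash(body))
    trail.append(record)
    return record


def trail_is_intact(trail: List[Dict]) -> bool:
    """Recompute every hash and check each link back to the previous record."""
    prev_hash = GENESIS_HASH
    for record in trail:
        body = {key: value for key, value in record.items() if key != "hash"}
        if record["prev_hash"] != prev_hash or record_hash(body) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True


if __name__ == "__main__":
    trail: List[Dict] = []
    append_record(trail, "Paris is the capital of France",
                  {"model_a": "true", "model_b": "true"}, "true")
    append_record(trail, "Berlin is the capital of Spain",
                  {"model_a": "false", "model_b": "false"}, "false")
    print(trail_is_intact(trail))   # untouched trail passes the check
    trail[0]["verdict"] = "false"   # quietly edit an old record
    print(trail_is_intact(trail))   # the edit is detected
```

The useful property is that anyone holding the trail can rerun `trail_is_intact` themselves, so the record of who voted what does not have to be taken on faith.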

In fields like finance, research, or law, this level of transparency could become incredibly important. It’s not about replacing AI, but making sure we can trust what it says.

A New Way to Think About AI

What I really like about this approach is that it changes the conversation around AI. Most AI projects are focused on making the models smarter. Mira is asking a different question:

What if the next big step isn’t about building a smarter AI… but making it verifiable?

If Mira’s approach works on a larger scale, we could end up with a world where AI doesn’t just generate information fast. It also comes with a record showing that what it said was independently checked.