I used to watch transaction confirmations like they were proof of progress. Faster meant better. Instant meant advanced. It felt intuitive, almost unquestionable.

But over time, I noticed something I couldn’t ignore: I was trusting outcomes I didn’t actually understand.

I knew when transactions finalized. I didn’t know how they got there.

What feels off about most blockchain systems isn’t just scalability; it’s the assumption that every transaction must pass through the same universal process.

Every node executes. Every node validates. Every transaction competes for the same ordering pipeline.

It creates a kind of enforced equality. Clean in theory, but inefficient in practice.

At scale, it starts to resemble coordination overload, not coordination efficiency.

And yet, we rarely question that structure.

I didn’t either, until I looked more closely at transaction settlement in Hyperledger Fabric.

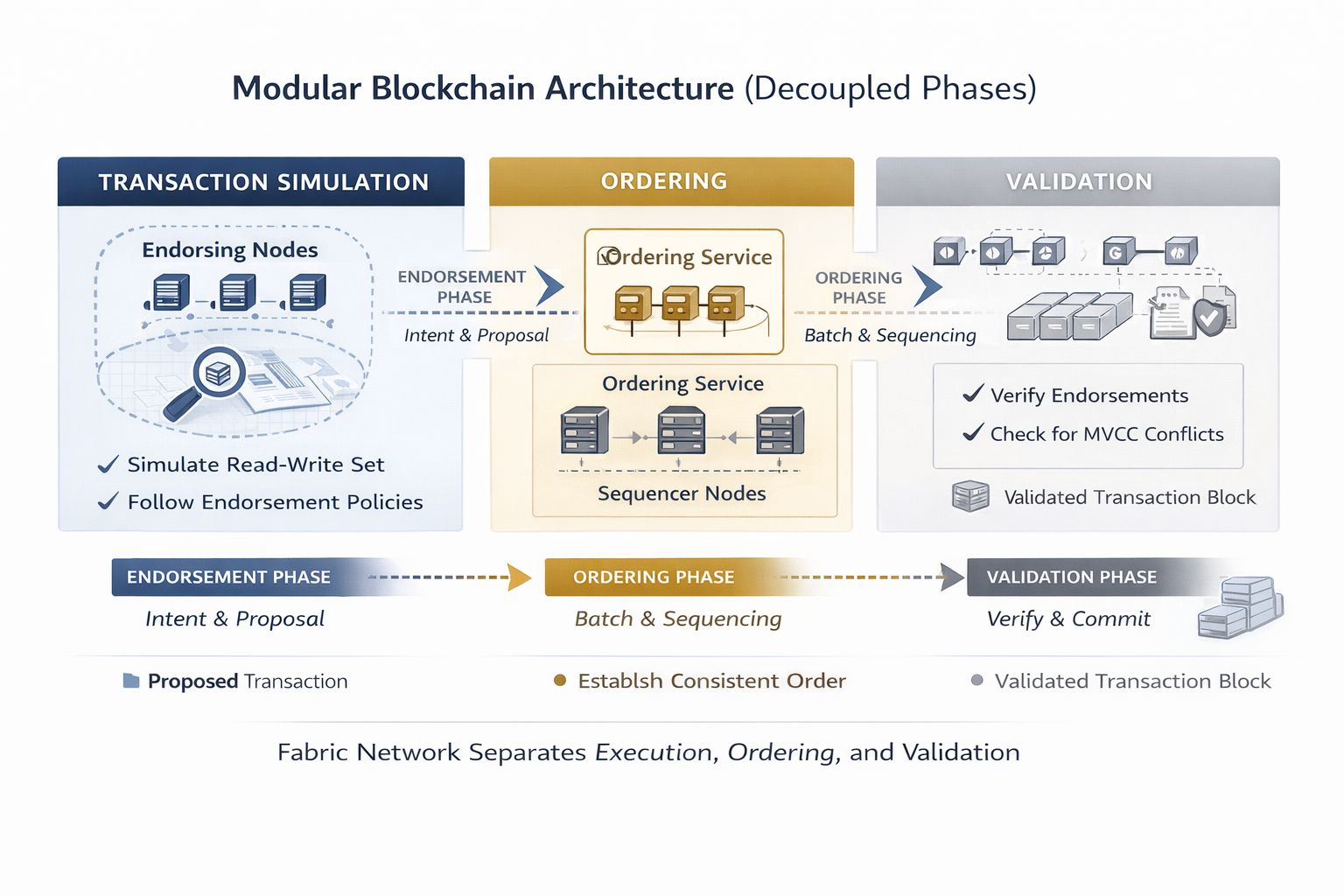

At first, it felt fragmented. Execution, endorsement, ordering, and validation are split into distinct phases instead of one unified flow.

It almost seemed like unnecessary complexity.

Why separate what other systems try so hard to compress?

But that assumption didn’t hold for long.

Fabric doesn’t treat settlement as a single moment. It treats it as a pipeline of responsibilities.

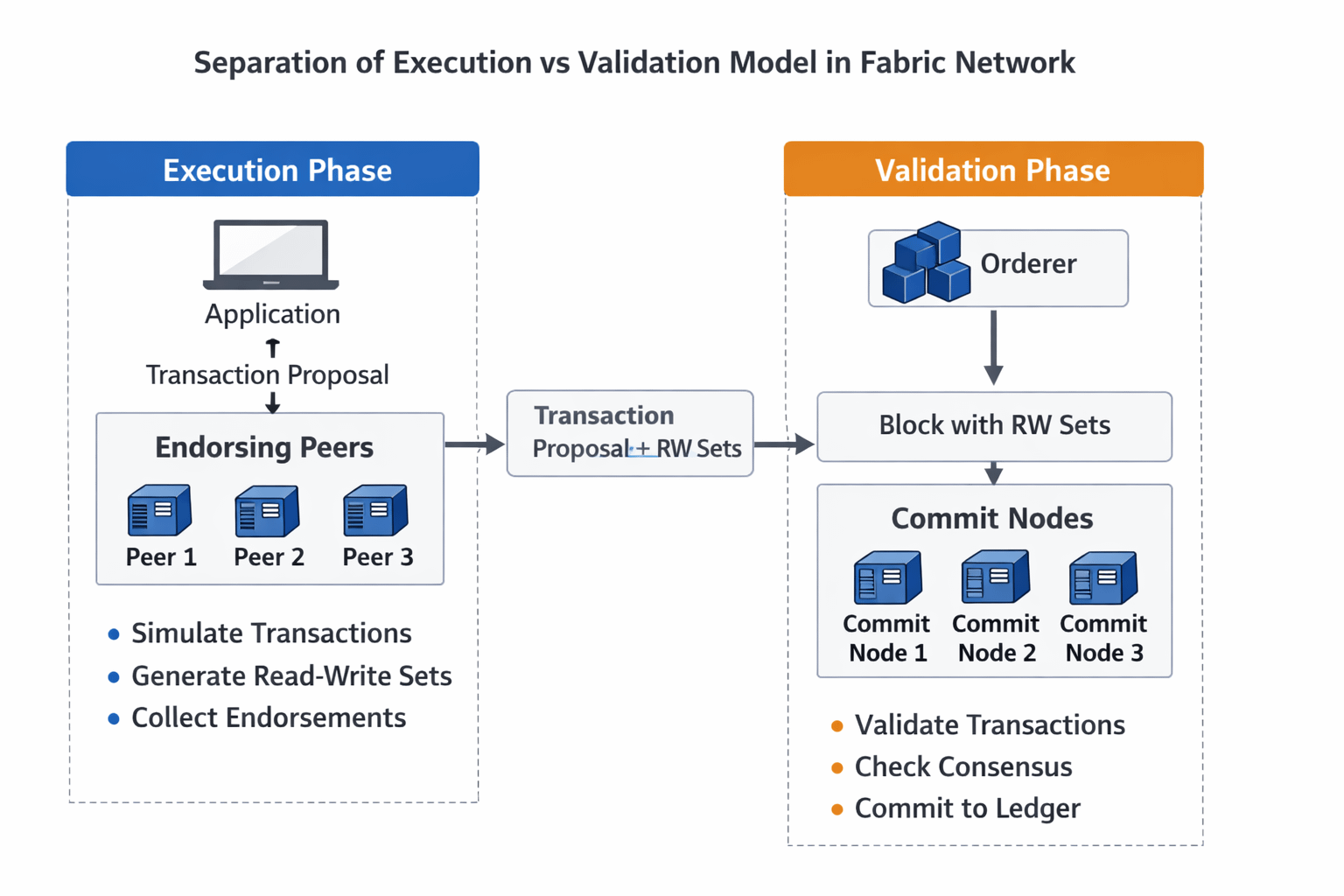

First, transactions are simulated: executed not for finality, but for intent. Endorsing peers run the chaincode against the current ledger state and produce a read-write set, without updating the ledger.
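
To make the idea concrete for myself, I wrote a toy model of simulation. This is a minimal sketch, not Fabric's API: `ledger`, `ReadWriteSet`, and `simulate_transfer` are names I made up, and I use a plain integer as the version where Fabric tracks versions by block and transaction position.

```python
from dataclasses import dataclass, field

# Toy world state: key -> (value, version).
# Assumption: an integer version stands in for Fabric's real versioning.
ledger = {"asset1": ("alice", 3)}


@dataclass
class ReadWriteSet:
    reads: dict = field(default_factory=dict)   # key -> version observed
    writes: dict = field(default_factory=dict)  # key -> proposed new value


def simulate_transfer(state, key, new_owner):
    """Run the transaction logic against current state without committing."""
    rwset = ReadWriteSet()
    _value, version = state[key]
    rwset.reads[key] = version      # what was read, and at which version
    rwset.writes[key] = new_owner   # the intended write; state is untouched
    return rwset


rwset = simulate_transfer(ledger, "asset1", "bob")
print(rwset.reads, rwset.writes, ledger["asset1"])
```

The point the sketch makes is that the ledger still says `alice` after simulation. All that exists is a record of what was read and what the transaction would like to write.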

At first, this felt like duplication.

But it isn’t agreement. It’s preparation.

Only after this simulation do transactions enter ordering, where they are sequenced, not validated. The ordering service doesn’t check correctness; it simply establishes a consistent order across the network.
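
A rough way to picture ordering, again as a hypothetical sketch rather than how the ordering service is actually implemented: transactions are cut into blocks in arrival order, and nothing inspects their contents. The function name and the fixed `batch_size` are my assumptions; real batching also depends on configured size limits and timeouts.

```python
def order_into_blocks(transactions, batch_size=2):
    """Sequence transactions into blocks in arrival order.

    Deliberately performs no validity check: a transaction that will
    later fail validation is ordered exactly like any other.
    """
    return [
        transactions[i:i + batch_size]
        for i in range(0, len(transactions), batch_size)
    ]


pending = ["tx_a", "tx_b_stale", "tx_c"]
blocks = order_into_blocks(pending)
print(blocks)
```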

Validation comes later.

And that’s where the system quietly shifts.

During validation, each peer checks two things:

whether the transaction satisfies its endorsement policy, and whether the keys it read still have the versions seen during simulation, a check often referred to as MVCC (multi-version concurrency control).

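This means a transaction can be ordered, and even recorded in a block, but still be marked invalid, in which case its writes are never applied to the ledger state.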
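
Here is how I sketch the two checks for myself. It is a toy model under stated assumptions: the transaction dictionaries, `validate`, and `commit_block` are hypothetical, the policy is simplified to "all listed orgs must endorse", and versions are plain integers. The flag strings are borrowed from the names of Fabric's validation codes; nothing else is Fabric's API.

```python
def validate(tx, state, required_orgs):
    """Return a validity flag for one ordered transaction (toy model)."""
    # Check 1: endorsement policy. Assumed policy: every org in
    # required_orgs must appear among the endorsers.
    if not required_orgs.issubset(tx["endorsers"]):
        return "ENDORSEMENT_POLICY_FAILURE"
    # Check 2: MVCC. Each key read during simulation must still be at
    # the version that was observed then.
    for key, seen_version in tx["reads"].items():
        if state.get(key, (None, 0))[1] != seen_version:
            return "MVCC_READ_CONFLICT"
    return "VALID"


def commit_block(block, state, required_orgs):
    """Validate transactions in block order; apply writes only if valid."""
    flags = []
    for tx in block:
        flag = validate(tx, state, required_orgs)
        if flag == "VALID":
            for key, value in tx["writes"].items():
                _old, version = state.get(key, (None, 0))
                state[key] = (value, version + 1)
        flags.append(flag)
    return flags


state = {"asset1": ("alice", 3)}
policy = {"Org1", "Org2"}
# Both transactions were simulated against version 3 of the same key.
tx1 = {"endorsers": {"Org1", "Org2"}, "reads": {"asset1": 3},
       "writes": {"asset1": "bob"}}
tx2 = {"endorsers": {"Org1", "Org2"}, "reads": {"asset1": 3},
       "writes": {"asset1": "carol"}}
flags = commit_block([tx1, tx2], state, policy)
print(flags, state)
```

Both transactions sit in the same block, in a fixed order. The first one commits and bumps the version; the second one read a version that no longer exists, so it is flagged and its write is dropped.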

That detail stayed with me.

Because it separates visibility from finality, something most systems blur together.

What stood out wasn’t just the architecture, it was the change in participation.

Not every node executes every transaction. Only relevant endorsers simulate it. Not every participant is equally involved at every stage.

At first, this felt like reduced security.

But upon reflection, it’s actually targeted trust.

Fabric assumes a permissioned environment where identities are known. Instead of minimizing trust entirely, it structures it, defining exactly who needs to agree, and when.

That changes incentives.

Participation becomes intentional, not mandatory.

Most scalability conversations focus on making consensus faster.

Fabric sidesteps that by reducing how much work needs consensus in the first place.

Execution is no longer network-wide. It’s selective.

Validation is deterministic. Ordering is streamlined.

Consensus still exists, but it’s no longer burdened with unnecessary computation.

That distinction matters.

Because Fabric doesn’t eliminate coordination cost. It redistributes it.

The more I thought about it, the more it resembled real-world systems.

In a supply chain, not every participant verifies every transaction. Only those directly involved validate the exchange, while others rely on structured guarantees.

Fabric mirrors that.

Endorsement policies act like contractual boundaries. Channels and private data collections further segment who sees and processes what.
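
To see why I think of policies as contractual boundaries, here is a small sketch of an AND/OR policy evaluated against the set of organizations that endorsed. The nested-tuple representation, the `satisfied` function, and the organization names are all my own illustration, loosely inspired by the AND/OR style of Fabric's policy expressions rather than copied from it.

```python
def satisfied(policy, endorsers):
    """Evaluate a nested policy tuple against the set of endorsing orgs."""
    if isinstance(policy, str):      # leaf: a single organization name
        return policy in endorsers
    op, *children = policy
    results = [satisfied(child, endorsers) for child in children]
    if op == "AND":
        return all(results)
    if op == "OR":
        return any(results)
    raise ValueError(f"unknown operator: {op}")


# Hypothetical supply-chain policy: the manufacturer must sign,
# plus either the shipper or customs.
policy = ("AND", "Manufacturer", ("OR", "Shipper", "Customs"))

print(satisfied(policy, {"Manufacturer", "Customs"}))
print(satisfied(policy, {"Shipper", "Customs"}))
```

The second call fails because the one party the policy insists on is missing, no matter how many others signed.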

It’s not just about scaling throughput.

It’s about scaling relevance.

There’s also a subtle behavioral shift this model introduces for builders.

When execution is separated from validation, you’re forced to think differently about state.

You don’t just write transactions; you design them to survive the time gap between simulation and validation.

You anticipate conflicts. You respect data dependencies.
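
One pattern this pushes you toward is re-simulating when a read turns out to be stale. The sketch below is a toy, assuming a client that can simply simulate and submit again: `submit_with_retry`, `simulate`, `commit`, and the `interference` list that fakes a concurrent writer are all hypothetical, not part of any Fabric SDK.

```python
def submit_with_retry(simulate, commit, max_attempts=3):
    """Re-simulate and resubmit when validation reports a stale read."""
    for attempt in range(1, max_attempts + 1):
        rwset = simulate()      # fresh read versions on every attempt
        if commit(rwset):
            return attempt
    raise RuntimeError("transaction kept conflicting; giving up")


state = {"counter": (10, 1)}    # key -> (value, version)
interference = [True]           # one concurrent update lands first


def simulate():
    value, version = state["counter"]
    return {"reads": {"counter": version}, "writes": {"counter": value + 1}}


def commit(rwset):
    if interference:            # fake another client committing before us
        interference.pop()
        value, version = state["counter"]
        state["counter"] = (value + 5, version + 1)
    for key, seen in rwset["reads"].items():
        if state[key][1] != seen:
            return False        # stale read: transaction is invalidated
    for key, value in rwset["writes"].items():
        state[key] = (value, state[key][1] + 1)
    return True


attempts = submit_with_retry(simulate, commit)
print(attempts, state["counter"])
```

Retrying is only one option. Spreading hot data across more keys, so that unrelated transactions stop reading the same version, attacks the conflict at its source.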

It creates a discipline that monolithic execution models often abstract away.

And that discipline compounds over time.

In a broader sense, Fabric reflects a larger trend in system design.

We’re moving from systems where everyone does everything

to systems where roles are defined, scoped, and intentional.

From redundancy as security

to structure as efficiency.

It’s not about removing trust assumptions; it’s about making them explicit.

If there’s a way forward for transaction settlement scalability, it may not come from faster consensus alone.

It may come from asking a more uncomfortable question:

How much of this process actually needs to be shared by everyone?

Fabric’s answer is clear: less than we think.

I still notice transaction speeds. That instinct hasn’t gone away.

But now, I hesitate before equating speed with quality.

Because what Fabric made me realize is this:

Settlement isn’t defined by how quickly a transaction appears final,

but by how intentionally the system decided who needed to be involved at all.

And maybe scalability isn’t about accelerating agreement

but about learning where agreement was never necessary to begin with.