I remember the first time I proudly showed someone my wallet activity.

Every transaction, every interaction, visible, verifiable, clean.

At the time, it felt like proof of participation.

Like I was part of something honest.

But over time, that same visibility started to feel excessive.

Not unsafe exactly, just exposed in ways I hadn’t consciously agreed to.

What began to feel “off” wasn’t the system’s integrity,

but the assumption behind it.

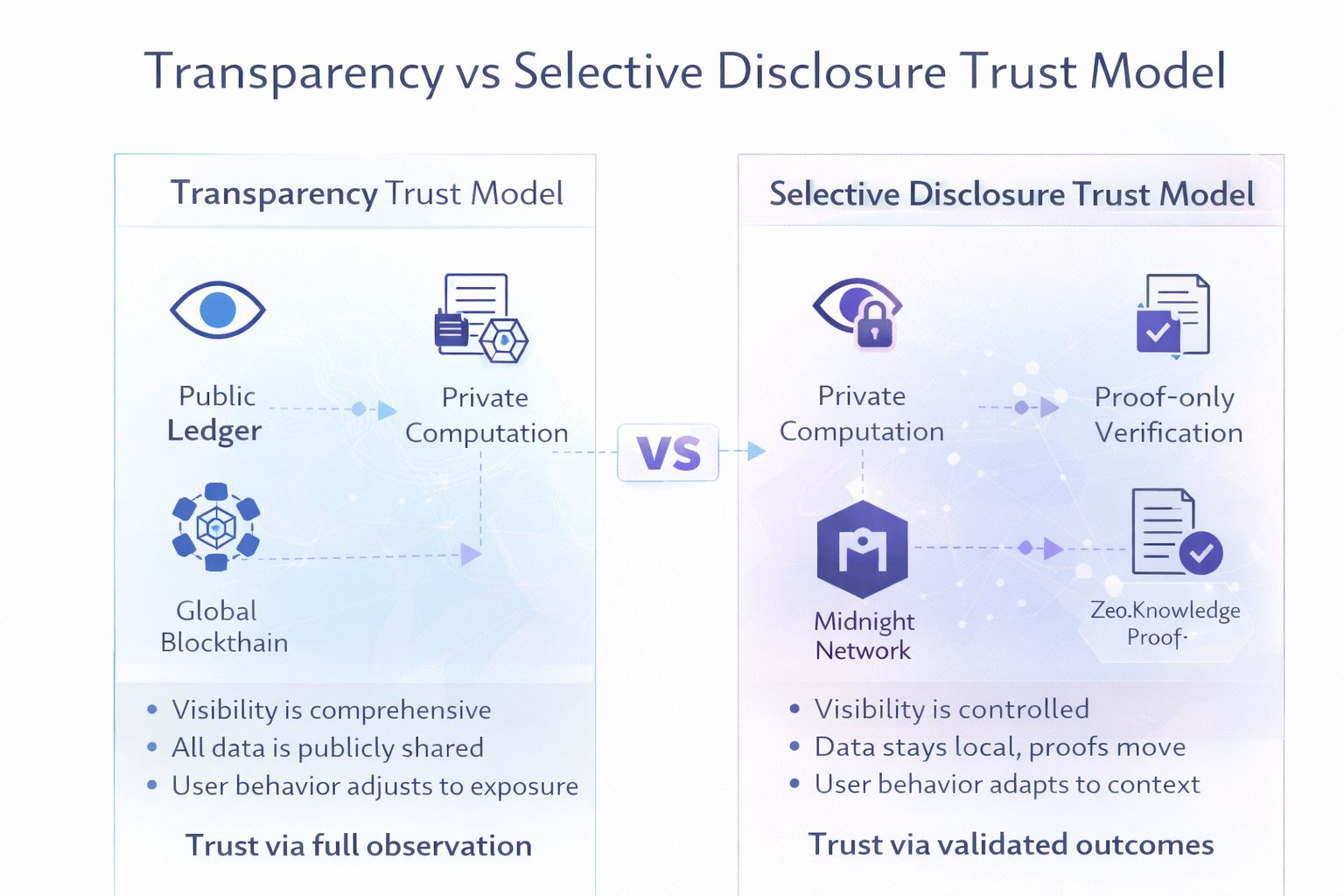

That more transparency always produces more trust.

In reality, I noticed something quieter.

People weren’t becoming more open; they were becoming more strategic.

They split identities across wallets.

They avoided certain protocols.

They optimized behavior not for utility, but for perception.

The system was transparent.

But the users were adapting around it.

Then I came across @MidnightNetwork.

At first, it felt like a contradiction:

blockchain was supposed to eliminate opacity, not reintroduce it.

But as I read deeper, it became clear:

Midnight wasn’t reducing transparency.

It was redefining where transparency belongs.

What stood out wasn’t privacy as a feature,

but selective disclosure as a design principle.

Through zero-knowledge proofs, #night allows computation to happen privately,

while only the validity of that computation is revealed on-chain.

Not the inputs.

Not the full history.

Just the proof that conditions were satisfied.

This isn’t hiding information.

It’s minimizing what needs to be exposed in the first place.

That shift changes behavior more than it changes technology.

Because when everything is visible,

people don’t become more truthful; they become more performative.

They anticipate observation.

They hesitate before acting.

They optimize for how actions look, not what they achieve.

Transparency, in that sense, becomes a subtle constraint.

Midnight removes that pressure through programmable selective disclosure.

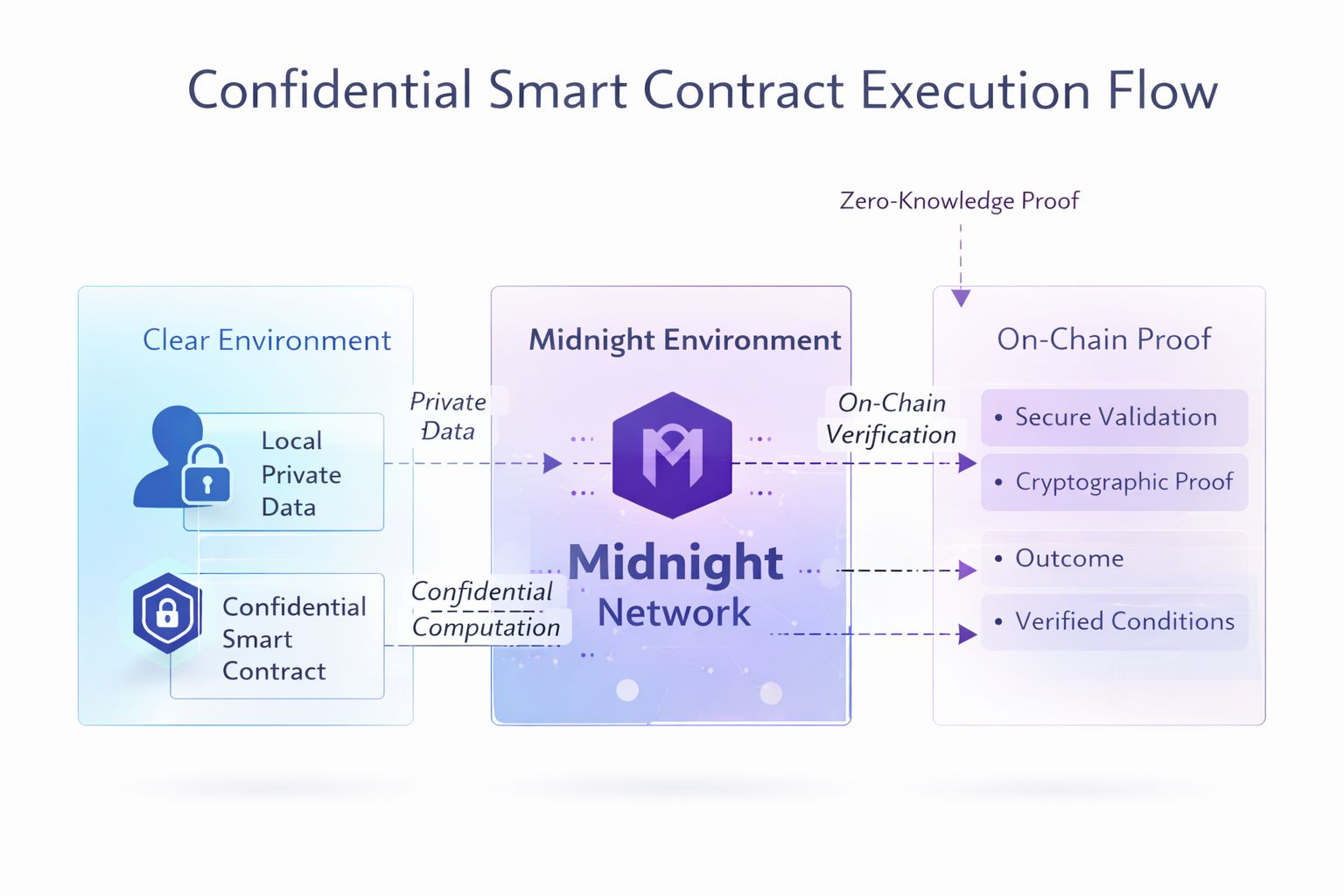

It separates data from verification.

Data remains local, private, controlled, and context-specific.

Verification is what moves on-chain, secured through cryptographic proof.

Unlike traditional blockchains that replicate full state across every node,

Midnight minimizes shared data by design: only proofs are propagated, not the underlying information.

And that separation matters.

Because it restores something most systems quietly erode:

the ability to act without constant exposure.
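To make the split between data and verification a little more concrete, here is a minimal sketch in Python. It is not how Midnight works internally. It swaps in a salted hash commitment, a much weaker primitive than a zero-knowledge proof, simply to show a shared ledger that holds a fingerprint of a record while the record itself never leaves the owner's machine. The names `commit`, `check_opening`, `shared_ledger`, and the sample record are all hypothetical.

```python
import hashlib
import secrets


def commit(private_data: bytes) -> tuple[bytes, bytes]:
    """Return (digest, salt). Only the digest is meant to be shared."""
    salt = secrets.token_bytes(32)  # random salt so the digest cannot be guessed from likely values
    digest = hashlib.sha256(salt + private_data).digest()
    return digest, salt


def check_opening(digest: bytes, private_data: bytes, salt: bytes) -> bool:
    """Check that (private_data, salt) matches a previously shared digest."""
    expected = hashlib.sha256(salt + private_data).digest()
    return secrets.compare_digest(digest, expected)


# Hypothetical stand-in for public, replicated state.
shared_ledger: list[bytes] = []

# The record and the salt stay local; only the 32-byte digest is published.
record = b"balance=1200;country=DE"
digest, salt = commit(record)
shared_ledger.append(digest)
```

The limitation is the interesting part: to check this commitment, the owner eventually has to reveal the record and salt to whoever is checking. A zero-knowledge proof goes further by letting a verifier confirm a statement about the record without ever seeing it.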

Underneath this, the mechanics are deliberate.

Midnight introduces confidential smart contracts,

where execution happens over private data rather than public state.

Zero-knowledge systems ensure that outcomes are verifiable

without revealing the inputs that produced them.
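For readers who want to see the idea in miniature, here is a toy sketch of a Schnorr-style proof of knowledge, made non-interactive with a hash-based challenge. It is an assumption-laden illustration, not Midnight's proof system: the parameters `P` and `G` are deliberately simple and not secure, and the function names `prove` and `verify` are hypothetical. The prover shows that they know a secret exponent behind a public value, and the verifier checks that claim without ever receiving the secret.

```python
import hashlib
import secrets

# Toy parameters for illustration only; these are NOT secure choices.
P = 2**127 - 1  # a Mersenne prime used as the modulus
G = 3           # arbitrary base element (assumed for this sketch)
ORDER = P - 1   # exponents can be reduced modulo P - 1 (Fermat's little theorem)


def _challenge(*values: int) -> int:
    """Derive the challenge by hashing the public values (Fiat-Shamir style)."""
    data = b"|".join(str(v).encode() for v in values)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % ORDER


def prove(secret: int) -> tuple[int, int, int]:
    """Return (public, commitment, response); the secret itself is never output."""
    public = pow(G, secret, P)
    nonce = secrets.randbelow(ORDER)   # fresh randomness that masks the secret
    commitment = pow(G, nonce, P)
    c = _challenge(G, public, commitment)
    response = (nonce + c * secret) % ORDER
    return public, commitment, response


def verify(public: int, commitment: int, response: int) -> bool:
    """Check G^response == commitment * public^c (mod P) using public values only."""
    c = _challenge(G, public, commitment)
    return pow(G, response, P) == (commitment * pow(public, c, P)) % P
```

Calling `verify(*prove(123456789))` returns `True`, and the verifier only ever handles the three public values. Production systems prove far richer statements than "I know this exponent", but the shape is the same: the inputs stay with the prover, and only a checkable proof is shared.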

And even the resource model reflects this philosophy.

Resources aren’t spent broadcasting data to the network.

The resource itself, often described as DUST, is spent generating verifiable proofs,

aligning computational cost with privacy preservation instead of exposure.

This is where the $NIGHT token becomes structurally important.

It doesn’t simply pay for transactions.

It powers execution and incentivizes validators to verify proofs

rather than replicate raw data across the network.

In doing so, it aligns the network’s economics with its philosophy:

Not visibility.

But validity.

From a trust perspective, this feels surprisingly familiar.

In real life, we don’t demand full transparency from people.

We rely on proofs, signals, and outcomes.

We trust that a contract was honored,

without needing to observe every private conversation behind it.

Midnight aligns more closely with that model.

It acknowledges that trust doesn’t come from seeing everything.

It comes from knowing that what matters can be verified when required.

Builder behavior shifts alongside this.

When every action must be public,

developers design for auditability first, and experience second.

But when privacy becomes programmable,

design starts focusing on intent.

Applications can validate eligibility, identity, or conditions

without exposing the underlying data.

That unlocks use cases that were previously uncomfortable or impractical,

not because they were impossible, but because they were too revealing.

Zooming out, this feels like part of a broader transition.

We’re moving from global state replication

to contextual verification.

From forcing all data into shared visibility,

to systems where only necessary truth is disclosed.

Because as participation scales,

not everyone wants, or should be required, to operate in public by default.

So the resolution isn’t less transparency.

It’s precision in transparency.

Expose what must be verified.

Protect what does not need to be known.

Let proofs travel.

Let data stay where it belongs.

But upon reflection, the most interesting shift isn’t technical.

It’s psychological.

When people feel less observed,

they behave more naturally.

They explore more.

They take more meaningful risks.

They engage without constantly managing perception.

And that creates a different kind of network effect,

one rooted in participation, not performance.

I used to think transparency was blockchain’s greatest strength.

Now I see it as something more precise.

Unfiltered visibility builds systems.

But selective visibility builds trust.

And if blockchain is meant to support real human behavior at scale,

then maybe the future isn’t about making everything visible.

It’s about knowing exactly what deserves to be seen.