There’s a moment I’ve seen too many times to ignore.

You’re standing in a line, holding documents that represent something real: your work, your identity, your eligibility. Maybe it took weeks to gather them. Maybe years to earn what they prove. You finally reach the counter, hand everything over, and wait.

The person on the other side barely looks at you. They scan the papers, pause, and then say something simple:

“This isn’t acceptable.”

Not because it’s false.

Not because it’s incomplete.

But because it doesn’t fit.

And just like that, something real about you becomes invisible.

You step aside, confused. Someone behind you gets approved with what looks like less. Another is rejected for a reason that sounds different from yours. The rules aren’t written anywhere you can clearly see, but they exist—quietly, rigidly, and without explanation.

After a while, it hits you.

This was never just about verification.

It was about interpretation.

The more I sit with that, the harder it becomes to ignore how deeply this pattern is embedded not just in physical systems, but in digital ones we like to believe are more fair, more precise, more objective.

They aren’t.

They’ve just learned to hide their inconsistencies better.

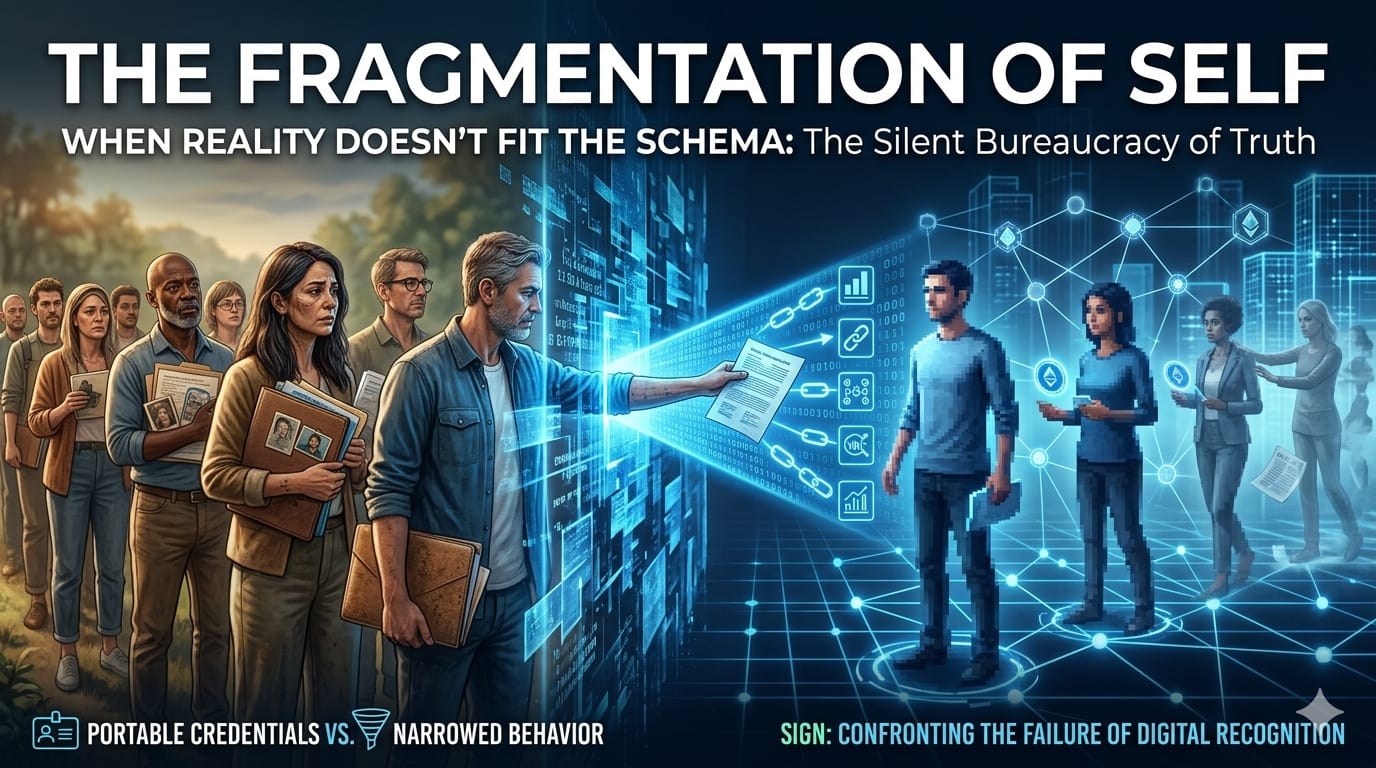

Everywhere you go, you’re asked to prove something. Who you are. What you’ve done. What you deserve. And every time, the burden quietly resets. Different platform, different format, different logic.

Same person.

Same reality.

No continuity.

That’s the part that starts to feel less like friction and more like failure.

Because if truth has to be constantly re-proven, is it really being recognized at all?

This is where something like SIGN begins to feel less like infrastructure and more like a confrontation with that failure.

Not a solution, not yet, but a confrontation.

At its core, SIGN is trying to build a system where credentials don’t just exist, but persist. Where verification isn’t a one-time hurdle that dissolves after use, but something that carries forward. Where distribution—of tokens, rewards, access—is tied to something that can actually be traced, understood, and reused.

On paper, that sounds almost obvious.

In reality, it challenges how most systems quietly operate.

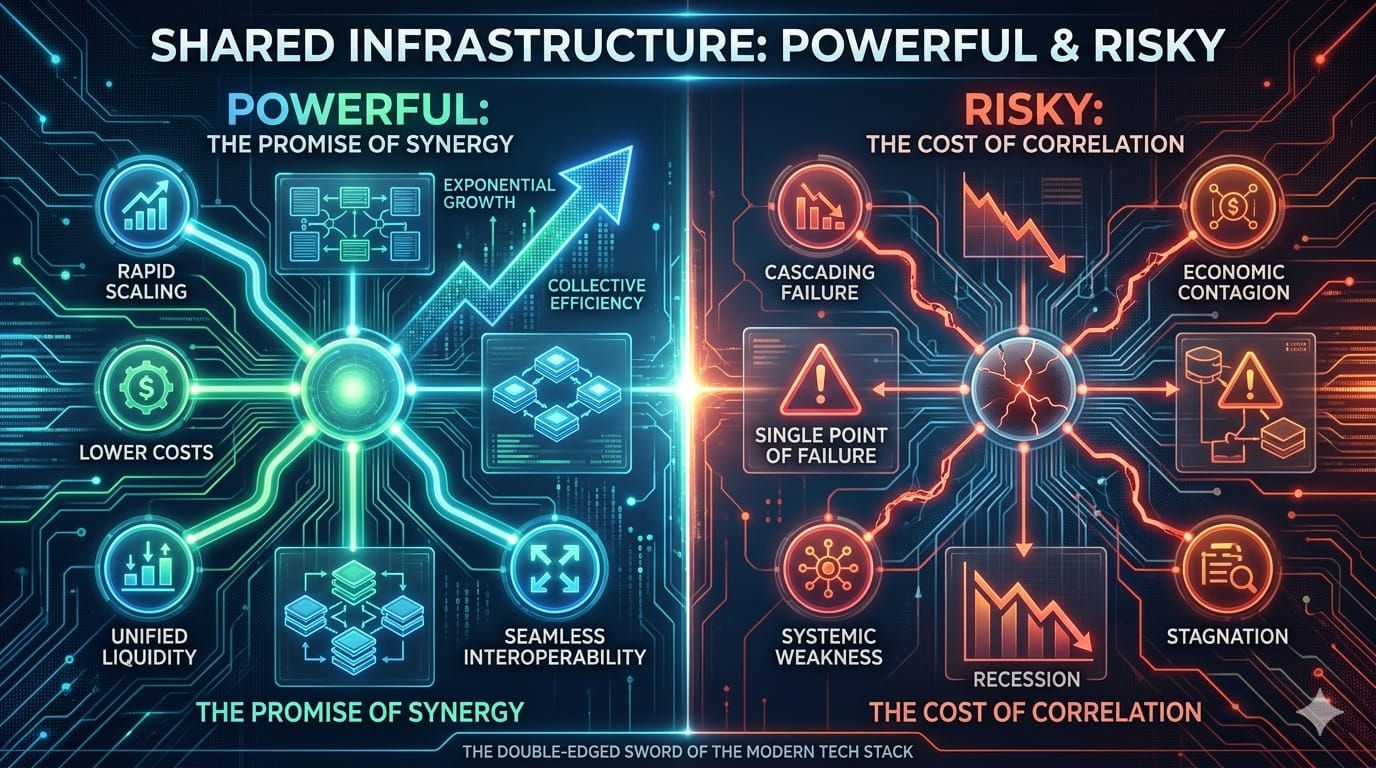

Because today, systems don’t just verify—they fragment.

They isolate your actions into separate containers. They decide, independently, what counts. And they rarely communicate with each other. The result is a world where value exists, but only locally. Where recognition happens, but doesn’t travel.

You can be verified in one place and irrelevant in another within seconds.

That’s not just inefficient.

It’s structurally dishonest.

SIGN, at least in intention, pushes against that fragmentation. It suggests that verification should be portable, that credentials should outlive the systems that created them, and that distribution should be tied to something more stable than platform-specific logic.

If that works, it doesn’t just reduce repetition.

It changes the nature of recognition itself.

But this is exactly where things become uncomfortable.

Because the moment you create a system that standardizes verification, you’re not just organizing reality; you’re compressing it.

You’re deciding what qualifies as proof.

What gets recorded.

What becomes legible to the system.

And, more importantly, what doesn’t.

That decision carries weight.

Not all contributions are clean. Not all value is measurable. Some of the most important things people do are messy, contextual, difficult to quantify. They don’t fit into neat credentials or verifiable actions.

So what happens to those?

Do they slowly disappear from systems like this?

Or worse, do people start reshaping their behavior to fit what can be verified?

Because that’s the quiet pressure any structured system creates.

It doesn’t force you.

It nudges you.

Over time, subtly, it teaches you what matters: not through words, but through outcomes. Through who gets rewarded, who gets access, who gets seen.

And people adapt.

Not always consciously.

But inevitably.

So the question isn’t just whether SIGN can verify better.

It’s whether it can avoid narrowing reality in the process.

There’s also something deeper beneath all of this, something we don’t talk about enough.

Control.

Verification systems don’t just confirm truth.

They define it.

If SIGN becomes a layer that multiple platforms rely on, it doesn’t just facilitate trust; it becomes part of the authority that determines what is trustworthy. And authority, even when decentralized, doesn’t disappear. It shifts.

So who holds it?

Who decides the standards?

Who updates them when reality changes?

Because reality does change. Context matters. Situations evolve. People don’t stay static.

But systems prefer stability.

And when stability meets a changing world, something usually gives.

Sometimes it’s the system.

More often, it’s the person trying to fit into it.

Then there’s distribution: the part that sounds simple but rarely is.

Token distribution is often framed as fair, transparent, even merit-based. But look closely, and you’ll see how often it depends on hidden assumptions. On eligibility criteria that aren’t fully explained. On metrics that capture activity but miss meaning.

Some people get rewarded because they understand the system.

Others get excluded because they don’t.

Not because they lack value, but because their value doesn’t translate.

SIGN tries to tighten that gap by linking distribution to verifiable credentials. In theory, that creates clarity. A direct line between what you’ve done and what you receive.

But clarity isn’t the same as justice.

Because if the underlying credentials are incomplete, biased, or limited, then the distribution built on top of them inherits those same limitations, just in a cleaner, more convincing form.

That’s the risk.

Not that the system fails loudly.

But that it succeeds quietly while narrowing what counts.

And yet, despite all of this, there’s something undeniably important about what SIGN is attempting.

Because most systems don’t even try to address this layer.

They focus on visibility. Growth. Numbers. Narratives. Things that look good from the outside but don’t hold up under pressure.

SIGN is looking underneath that.

At the mechanics.

At the uncomfortable reality that before you can distribute value fairly, you need to recognize it properly, and we’re still very far from doing that well.

That doesn’t make SIGN the answer.

But it does make it part of a more honest question.

Can we build systems that recognize people without reducing them?

Can we verify truth without flattening it?

Can we distribute value without distorting it?

Or are we just building more refined versions of the same limitations we’ve always had?

Because if we are, then the technology doesn’t really matter.

The outcome stays the same.

You’re still standing in that line.

Still holding something real.

Still being told, in one way or another, that it doesn’t count.

The difference is just that now, it happens faster.

More efficiently.

More quietly.

And maybe that’s what makes this moment more important than it looks.

SIGN isn’t just about verification or token distribution.

It’s about whether systems can move closer to reality, or continue forcing reality to bend around them.

If it gets this right, even partially, it could reduce a kind of invisible friction that people have learned to live with but never really accepted.

If it gets it wrong, it risks turning that friction into something even harder to escape.

Because systems like this don’t just process information.

They shape behavior.

They influence decisions.

They quietly define who is visible, who is credible, and who is left out.

And once that definition settles in, it doesn’t feel like a system anymore.

It feels like truth.

That’s the real weight of it.

Not the technology.

Not the tokens.

But the power to decide what counts, and to make that decision feel unquestionable.

And if that power isn’t handled carefully, thoughtfully, almost cautiously,

then no matter how advanced the system becomes,

we’ll still end up in the same place.

Just with fewer people questioning it.