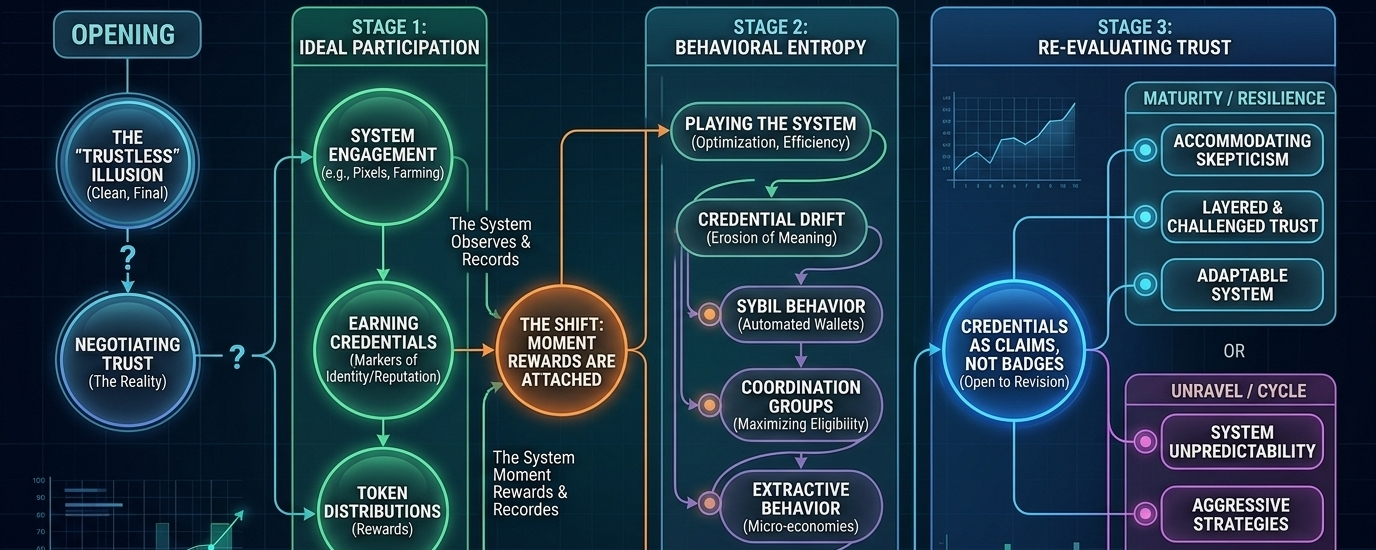

I’ve been around long enough to remember when “trustless” was the word everyone leaned on. It sounded clean. Final. As if we had finally engineered doubt out of the system. But markets have a way of humbling language. Over time, you start to see that what we really built wasn’t trustless—it was just a different way of negotiating trust.

That’s why something like Pixels caught my attention, not because it’s a farming game or because it lives on a specific chain, but because underneath the surface there’s a quieter experiment happening. It’s not really about crops or land or even tokens. It’s about credentials—who gets recognized, who gets rewarded, and how that recognition holds up when people start pushing against it.

At first, systems like this feel simple. You participate, you earn, you build a kind of on-chain identity through actions. The protocol observes, records, and distributes value based on what it sees. It’s neat. Efficient. Almost convincing.

But that early clarity doesn’t last.

Because the moment rewards are attached, behavior changes. It always does.

People stop playing the “game” as it was intended and start playing the system itself. Farming becomes optimization. Exploration becomes route efficiency. Creation becomes a means to an end. And credentials—those little markers of participation and reputation—start to drift away from what they were supposed to represent.

You begin to ask uncomfortable questions. Is this wallet actually engaged, or just automated? Is this contributor valuable, or just early? Is this credential earned, or engineered?

The protocol doesn’t answer those questions. It just keeps recording.

And over time, the weight of those records starts to shift. Early credentials, once meaningful, become diluted as more participants enter. Issuers—whether they’re game systems, governance layers, or even other users—lose some of their authority simply by existing too long under scrutiny. Nothing dramatic. Just a slow erosion.

I’ve seen this pattern before in different forms. Reputation systems that worked beautifully at small scale but became noisy at large scale. Incentive models that attracted genuine users at first, then gradually tilted toward extractive behavior. It’s not failure, exactly. More like entropy.

What Pixels—and systems like it—seem to be circling around is a different idea of trust. Not something granted instantly, but something that remains open to revision.

A credential here isn’t a badge you wear forever. It’s more like a claim that can be revisited. Questioned. Even quietly ignored if the context changes.

That’s an uncomfortable design choice, whether intentional or not. Most systems want finality. They want clean signals. But real trust doesn’t behave that way. It accumulates, degrades, rebuilds. It depends on memory, but also on interpretation.
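To make "accumulates, degrades, rebuilds" a little more concrete, here is a minimal sketch of a credential whose weight fades unless it is renewed. This is my own illustration, not anything Pixels is known to implement. The `Credential` class, the `half_life_days` parameter, and the 90-day default are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Credential:
    """Hypothetical credential whose trust weight fades over time."""
    weight: float       # trust weight as of last_update
    last_update: float  # time of the last update, in days

    def current_weight(self, now: float, half_life_days: float = 90.0) -> float:
        # Degrade: the stored weight halves every half_life_days of inactivity.
        elapsed = max(0.0, now - self.last_update)
        return self.weight * 0.5 ** (elapsed / half_life_days)

    def record_activity(self, now: float, amount: float,
                        half_life_days: float = 90.0) -> None:
        # Rebuild: apply the decay so far, then add credit for new activity.
        self.weight = self.current_weight(now, half_life_days) + amount
        self.last_update = now


# Usage: a credential issued at day 0, checked and renewed at day 90.
cred = Credential(weight=1.0, last_update=0.0)
faded = cred.current_weight(now=90.0)
cred.record_activity(now=90.0, amount=1.0)
renewed = cred.weight
```

The half-life is the interpretive choice here. Whoever sets it is deciding how long a past action should keep speaking for a wallet.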

And interpretation is where things get messy.

Because who decides when a credential has lost its meaning? The protocol? The community? Some external layer that analyzes behavior patterns? Each option introduces its own kind of fragility.

If the system adapts too slowly, it gets gamed. If it adapts too quickly, it becomes unpredictable. And unpredictability, in financial systems, tends to push people away or, worse, to encourage even more aggressive strategies to stay ahead.
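One way to picture that tension is an exponentially weighted score, where a single parameter controls how fast old behavior is forgotten. This is a sketch under my own assumptions. The function name, the `alpha` parameter, and the engagement numbers are hypothetical and not drawn from any real protocol.

```python
def smoothed_scores(observations, alpha):
    """Exponential moving average of per-period engagement signals.

    alpha near 0: the score adapts slowly and old reputation lingers.
    alpha near 1: the score adapts quickly and swings on every period.
    """
    if not observations:
        return []
    score = observations[0]
    history = [score]
    for obs in observations[1:]:
        # Blend the newest observation with the running score.
        score = alpha * obs + (1 - alpha) * score
        history.append(score)
    return history


# A wallet that was genuinely active for two periods, then went idle.
signal = [10, 10, 0, 0, 0]
slow = smoothed_scores(signal, alpha=0.1)  # keeps most of its score
fast = smoothed_scores(signal, alpha=0.9)  # drops almost immediately
```

With the slow setting, the idle wallet still holds most of its early score three periods later, which is the opening people exploit. With the fast setting, one quiet period nearly wipes it out, which is the unpredictability honest users tend to resent.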

There’s also the question of distribution. Tokens tied to credentials sound fair in theory. Reward those who contribute. Simple enough. But once tokens have real value, distribution becomes a kind of pressure point.

People will find edges. They always do.

Sybil behavior creeps in, with one operator spreading activity across many wallets. Coordination groups form. Entire micro-economies emerge around maximizing eligibility. And suddenly, what looked like a neutral reward system starts reflecting very human tendencies: competition, exploitation, short-term thinking.

None of this is unique to Pixels. It’s just more visible here because the environment is softer, almost playful. But the mechanics underneath are serious. They’re the same ones that will show up in more critical systems later.

What I find interesting is not whether it “works” right now, but how it holds up under doubt.

What happens when users stop taking credentials at face value? When they start filtering, questioning, second-guessing? When trust becomes less about what the system says and more about how individuals interpret it?

That’s where things usually either evolve or unravel.

If the system can accommodate that skepticism—if it allows trust to be layered, challenged, and re-evaluated—it might mature into something more resilient. Not perfect, but adaptable.

If it can’t, it risks becoming just another loop. Participate, extract, move on.

I don’t think we’re at a point where we can say which way it goes. The signals are mixed, as they tend to be in early systems. There’s genuine engagement, but also clear optimization. There’s creativity, but also repetition. Trust is being built, but it’s also being tested.

And maybe that’s the point.

Not to eliminate doubt, but to see how a system behaves in its presence.

I’m not particularly optimistic, but I’m not dismissive either. I’ve learned to be careful with both. For now, I just watch how people move within the system—what they value, what they ignore, what they try to bend.

Because in the end, that’s where the real story is. Not in the design, but in how it’s used.