I’ve stopped getting excited about new systems that promise fairness.

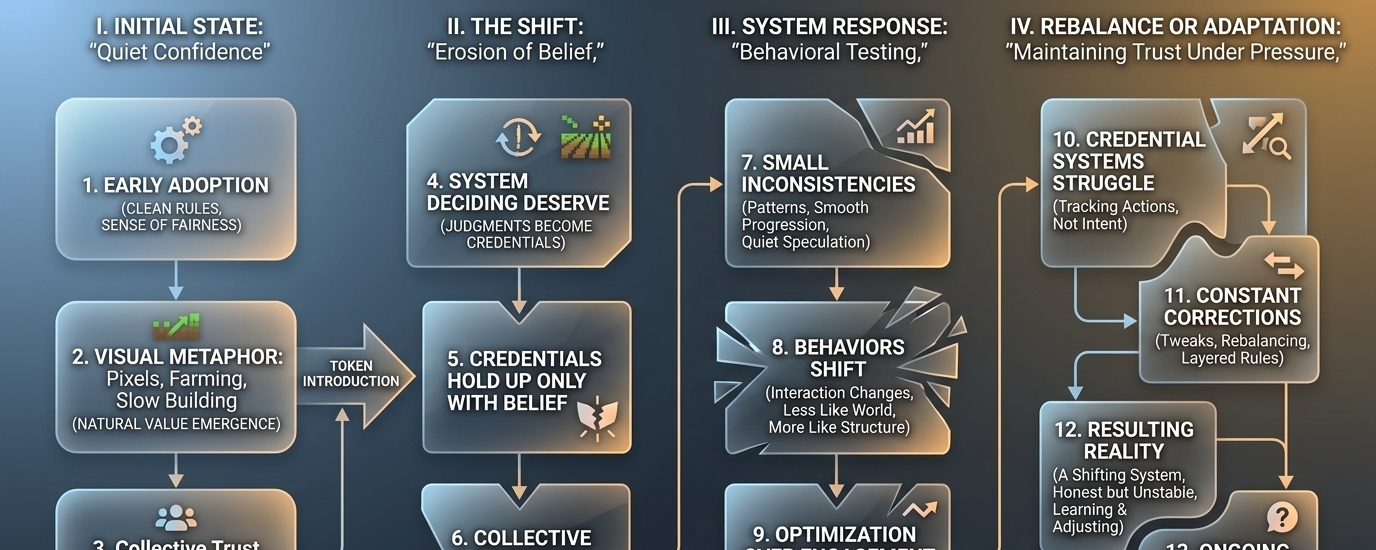

Not because I think they’re lying, but because I’ve seen how these things unfold. Early on, everything feels clean. Rules make sense. Rewards feel earned. People move through the system with a kind of quiet confidence that what they’re doing matters—and that it’s being measured correctly.

Something like Pixels fits that early phase almost perfectly. It doesn’t come at you like a financial machine. It’s softer than that. Farming, wandering, building things slowly. It gives you the impression that value emerges naturally from participation, not from aggressive extraction.

But once tokens are involved, the tone changes. It always does.

Underneath the relaxed surface, there’s a system deciding who deserves what. Not in an obvious way, not with rigid labels—but it’s there. Every action becomes a signal. Every signal feeds into some form of judgment. And over time, those judgments become credentials, whether the system calls them that or not.

That’s the part people tend to overlook at first.

Credentials sound solid, but they aren’t. They hold up only as long as people believe in how they’re assigned. And belief is a strange thing in these environments—it doesn’t disappear all at once. It erodes.

At the beginning, nobody questions much. If you’re rewarded, you assume it’s because you did something right. If someone else progresses faster, you assume they’re just more active or more efficient. There’s a kind of collective agreement to trust the structure.

Then the cracks start to show.

Not in dramatic ways. Just small inconsistencies. Someone finds a pattern that seems… off. Another player moves through the system a little too smoothly. You start hearing quiet speculation—nothing loud, just enough to make you pause.

And once that pause exists, it doesn’t really go away.

What’s actually happening in that moment is more important than it looks. The system is being tested, not by design, but by behavior. Because once rewards have value, people stop interacting with the experience as it was intended. They start looking at it differently.

Less like a world, more like a structure to navigate.

That shift is subtle, but it changes everything.

Farming becomes optimization. Exploration becomes repetition. Creation becomes efficiency. You’re no longer asking “what can I do here?” but “what gives the best return for my time?” And that question, once it settles in, reshapes how people behave.

It’s not even malicious. It’s just… logical.

But now the system has a problem. It’s trying to assign meaning to actions that are no longer driven by meaning. It’s trying to measure authenticity in an environment where imitation is often more efficient.

And that’s where credential systems start to struggle.

Because they weren’t really built to understand intent. They track actions, outcomes, patterns—but they can’t fully distinguish between someone engaging genuinely and someone simply following the most efficient loop. From the outside, those two things can look identical.

So the system leans on adjustments. Tweaks. Rebalancing. Maybe new rules layered on top of old ones. Each change meant to restore some sense of fairness.

But every adjustment also raises a quiet question: if the system needs constant correction, what exactly are these credentials worth?

I’ve seen this before. Not just once.

Over time, issuers, whether that’s a protocol, an algorithm, or some hybrid of the two, start losing a bit of their authority. Not completely. Just enough that people begin to second-guess outcomes. Trust doesn’t collapse; it thins out.

And when that happens, something interesting starts to take shape.

The system can no longer rely on blind acceptance. It has to allow for doubt. It has to function even when people question it. That’s a much harder problem than simply assigning rewards.

Because now you’re not building trust—you’re maintaining it under pressure.

And maintaining trust means accepting that it’s going to be challenged. That credentials might need to be revisited. That some decisions will age poorly. That some participants will exploit gaps faster than the system can close them.

There’s no clean fix for that.

You can tighten rules, but that risks pushing out legitimate users. You can loosen them, but that invites more exploitation. You can add layers of verification, but those layers come with friction—and eventually, fatigue.

Every choice has a cost.

So what you end up with isn’t a perfect system, but a shifting one. Something that learns, adjusts, sometimes overcorrects, sometimes lags behind. It’s not stable in the way early users expect, but it might be more honest in the long run.

Pixels feels like it’s somewhere at the beginning of that transition. Still in that phase where things mostly work, where the assumptions haven’t been fully stress-tested yet. The world is calm, the mechanics are engaging, and the underlying economy hasn’t been pushed to its limits.

But it will be.

It always is.

And when that happens, the real question won’t be how well it distributes tokens. That part is straightforward. The harder question is whether it can hold up when people start pulling at the edges—when behaviors shift, when incentives get sharper, when trust isn’t automatic anymore.

I don’t think there’s a clear answer yet.

Maybe it adapts. Maybe it becomes one of those systems that quietly absorbs pressure and keeps going, even if it’s never perfectly fair. Or maybe the gaps widen over time, and the credentials it relies on start to feel less meaningful.

Right now, it’s too early to land anywhere definitive.

I’m not convinced by it.

But I’m not ready to write it off either.

I’m just watching—waiting to see what happens when the system is no longer taken at its word, and has to prove itself again, and again, and again.