I’ve seen a lot of people talk about speed as if it’s the only thing worth optimizing in trading. Faster is better, right? Fewer steps mean more efficiency. So when Binance AI Pro announced it could compress the research workflow for a token listing from 50-90 minutes down to about 10 minutes, most people’s first reaction was to nod and move on.

I nodded too. But then I paused at a question the intro didn’t raise: what was inside the time that got cut?

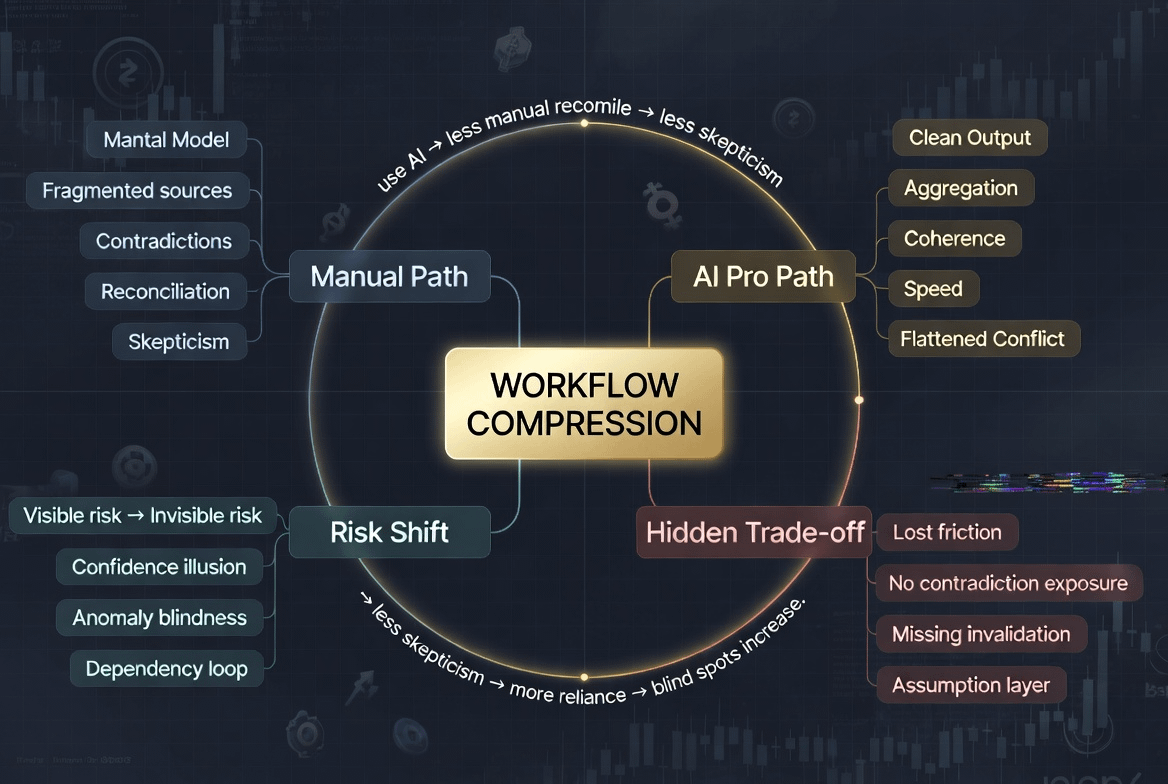

When you manually research a token, you’re not just gathering information. You’re navigating inconsistent sources: the whitepaper says one thing, the unlock schedule shows different numbers, social media sentiment pulls in another direction, and on-chain data sometimes contradicts the narrative being pushed. That inconsistency isn’t noise to be filtered out. It’s a signal. More importantly, it forces you to keep asking why two things don’t match.

That reconciling process is where skepticism is formed.

You’re not just learning about that token. You’re learning about the limits of what you know about that token. You’re building a mental model that is imperfect but conditional: a kind of understanding that can point to where it might be wrong.

What does Binance AI Pro do with that timeframe? It aggregates all sources, processes the inconsistencies within them, and returns a coherent output. Clear structure, easy to read, time-saving. All of this is operationally correct. But that coherence doesn’t come naturally. It’s the result of a layer of processing that occurred before you saw anything, a layer that you weren’t part of.

When you receive aggregated output, you no longer see the contradictions. Not because they disappear, but because they’ve been flattened out beforehand. You consume conclusions without going through the process that generates them. And when you don’t go through that process, you fail to build something more important than the output: the invalidation conditions.
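To make the difference between flattening contradictions and keeping them visible concrete, here is a minimal Python sketch. It is not how Binance AI Pro works internally; the `Claim` type, the `find_contradictions` helper, the source labels, and every number are hypothetical and chosen only for illustration. The idea is that instead of merging sources into one figure, you keep a list of the fields where sources disagree.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    source: str   # where the number came from (hypothetical label)
    field: str    # what is being claimed
    value: float  # the claimed value


def find_contradictions(claims, rel_tolerance=0.05):
    """Group claims by field and return the fields whose sources disagree.

    A field is flagged when the gap between its highest and lowest value
    exceeds rel_tolerance times the largest absolute value reported.
    """
    by_field = {}
    for claim in claims:
        by_field.setdefault(claim.field, []).append(claim)

    flagged = {}
    for name, group in by_field.items():
        values = [c.value for c in group]
        largest = max(abs(v) for v in values)
        spread = max(values) - min(values)
        if largest > 0 and spread > rel_tolerance * largest:
            flagged[name] = sorted(group, key=lambda c: c.value)
    return flagged


# Made-up example data: two sources disagree on the unlock schedule,
# two sources agree on max supply.
claims = [
    Claim("whitepaper", "unlock_pct_next_90d", 5.0),
    Claim("unlock_tracker", "unlock_pct_next_90d", 12.0),
    Claim("whitepaper", "max_supply_millions", 1000.0),
    Claim("block_explorer", "max_supply_millions", 1000.0),
]

contradictions = find_contradictions(claims)
for name, group in contradictions.items():
    print(name, [(c.source, c.value) for c in group])
```

In this toy data only the unlock figure is flagged. A summary that reported a single averaged unlock number would be coherent and readable, and it would hide the one question worth asking.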

You know how good your trade looks. But you don’t know where it breaks down.

In normal market conditions, this doesn’t create any visible issues. The signal is clear, variance is low, and the AI Pro path and the manual path yield similar results. This is when a subtle mechanism starts to form: because AI Pro performs well in normal conditions, you use it more. Using it more means reconciling less yourself, and reconciling less leads to greater dependence on the AI. The feedback loop reinforces itself smoothly, and no single step in it looks like a mistake.

The problem only becomes apparent when there’s an anomaly.

An unlock cliff that isn’t clearly presented in the tokenomics. A hidden correlation with a weakening narrative that is absent from the aggregate data. A small on-chain signal that takes long-term context and multiple reads to recognize as unusual. These are things that 45 minutes of self-research can catch, not because you’re smarter than AI, but because you’ve gone through enough friction to recognize when something doesn’t add up.

Users on the AI Pro path aren’t less knowledgeable. They just lack a reference point for how much to trust the output under a specific set of conditions. Their confidence is built from the coherence of the output, not from having resolved contradictions themselves. And those two types of confidence behave very differently when the market starts to diverge.

This is the point I find most important and least directly addressed when discussing workflow compression in trading.

AI Pro doesn’t lose information. It loses the experience of processing information. And it’s that experience that creates skepticism, boundary awareness, and the ability to recognize when a conclusion no longer holds. Risk doesn’t vanish when the workflow is compressed. It merely shifts from a visible form, the contradictions you read and question yourself, to a less visible form, the assumptions inside the processing layer that you don’t realize you’ve overlooked.

One type is more exhausting. The other is more dangerous in a less recognizable way.

But I don’t think the answer is to go back to doing everything manually. That’s the wrong way to frame the issue.

What really needs to change is how users interact with AI Pro’s output: not how fast they receive it, but what they do with it afterward. A recent Binance AI Pro guide mentioned a noteworthy habit: after receiving a bullish setup, ask for the opposing case. Ask the AI to pinpoint the exact conditions that would invalidate the thesis. This isn’t a side feature. It’s the only way to recreate part of the reconciling process that workflow compression removes.

If the first output is a conclusion, then the second output should be the invalidation conditions. Not to negate that conclusion, but to understand how valuable it is and under what conditions it no longer holds true.
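One way to make this habit stick is to write the invalidation conditions down next to the conclusion so they can be checked later. The sketch below assumes you record them by hand after asking for the opposing case; `Thesis`, `Invalidation`, the token, the thresholds, and the snapshot values are all hypothetical and are not part of any Binance AI Pro interface.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Invalidation:
    description: str
    # Returns True when the condition that breaks the thesis is met.
    is_triggered: Callable[[Dict[str, float]], bool]


@dataclass
class Thesis:
    conclusion: str
    invalidations: List[Invalidation] = field(default_factory=list)

    def triggered(self, snapshot: Dict[str, float]) -> List[str]:
        """Return the descriptions of every invalidation the snapshot meets."""
        return [
            inv.description
            for inv in self.invalidations
            if inv.is_triggered(snapshot)
        ]


# First output: the conclusion. Second output: the conditions under which
# it stops holding. Both values below are made up for illustration.
thesis = Thesis(
    conclusion="Bullish setup on TOKEN (hypothetical)",
    invalidations=[
        Invalidation(
            "More than 10% of supply unlocks within 30 days",
            lambda s: s["unlock_pct_next_30d"] > 10.0,
        ),
        Invalidation(
            "Price closes below the 95.0 support level",
            lambda s: s["close"] < 95.0,
        ),
    ],
)

snapshot = {"unlock_pct_next_30d": 12.5, "close": 101.0}
broken = thesis.triggered(snapshot)
print(broken)
```

With this snapshot the unlock condition is met and the price condition is not. The point of the structure is less the code than the discipline: a thesis with an empty `invalidations` list is a visible reminder that you only have half of the picture.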

Another habit worth building alongside it is periodically researching some tokens yourself. Not all of them, and not every time, but often enough to keep your reconciling skills from atrophying. Like any skill, if you don’t use it, you lose it, and you often don’t realize you’ve lost it until you need it.

Binance AI Pro can compress a lot of things in your workflow, and most of those things deserve to be compressed. But skepticism doesn’t. The ability to recognize when an output depicts the market under conditions it has been trained to handle, and when the market is doing something outside those conditions, cannot be aggregated and returned to you in 10 minutes.

That has to be built from within the user. And AI Pro works best when the user is skeptical enough to know when not to trust it.

Trading is always fraught with risks. AI-generated suggestions are not financial advice. Past performance does not reflect future results. Please check the availability of products in your area.