I read a line in the Pixels documentation that I had to read twice, not because it was complicated but because it describes something most gaming studios have never really been able to do: "spot churn patterns."

Not 'reduce churn.' Not 'understand why players leave.' But seeing the churn pattern before the churn itself happens.

This is a bigger difference than it sounds.

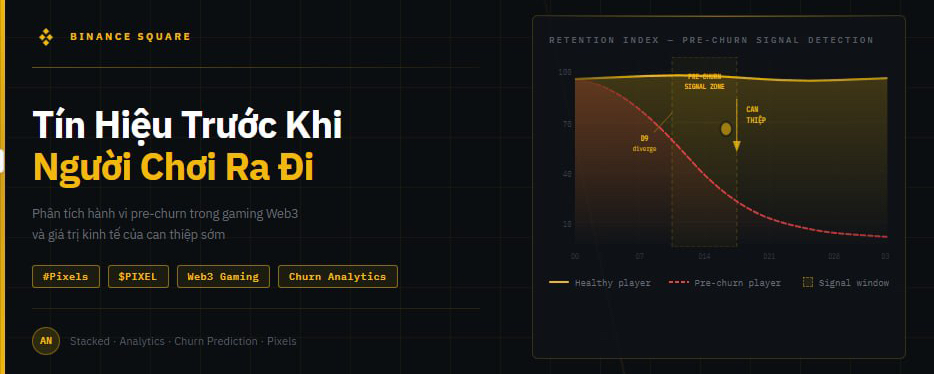

In most current game analytics, churn is defined after it has occurred. A player not logging in for 7 days is marked as churned. It could be 14 days. It depends on the studio. By the time that label is assigned, the player has long since decided to leave, and that decision wasn't made on the last day they logged in. It was made at some moment prior, when their in-game experience reached a point where nothing was pulling them back.

The key is that those moments always leave a trace in the data. Players about to leave often have shorter sessions, stop exploring new content, cease engaging with social features, or start skipping daily rewards instead of claiming them. Those behaviors aren't churn. They are pre-churn signals, and they appear days to weeks before a player actually disappears.
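To make the idea concrete, here is a minimal sketch of how those pre-churn signals could be flagged by comparing a player's recent week against their own baseline. This is not how Pixels or Stacked does it. The `WeeklyActivity` fields, the `pre_churn_signals` function, and the 50% drop threshold are all assumptions I made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class WeeklyActivity:
    """Hypothetical per-player weekly summary; field names are illustrative."""
    avg_session_minutes: float
    new_content_visits: int
    social_actions: int
    daily_rewards_claimed: int  # out of 7 possible claims


def pre_churn_signals(baseline, recent, drop_ratio=0.5):
    """Return the names of pre-churn signals present in the recent week.

    drop_ratio is an assumed threshold: a metric counts as "dropped"
    when the recent value falls below drop_ratio times the baseline.
    """
    signals = []
    if recent.avg_session_minutes < baseline.avg_session_minutes * drop_ratio:
        signals.append("shorter_sessions")
    if baseline.new_content_visits > 0 and recent.new_content_visits == 0:
        signals.append("stopped_exploring")
    if baseline.social_actions > 0 and recent.social_actions == 0:
        signals.append("stopped_social")
    if recent.daily_rewards_claimed < baseline.daily_rewards_claimed * drop_ratio:
        signals.append("skipping_daily_rewards")
    return signals


# Example with made-up numbers: a typical week versus the most recent week.
baseline = WeeklyActivity(avg_session_minutes=40, new_content_visits=5,
                          social_actions=12, daily_rewards_claimed=7)
recent = WeeklyActivity(avg_session_minutes=15, new_content_visits=0,
                        social_actions=3, daily_rewards_claimed=2)
flags = pre_churn_signals(baseline, recent)
print(flags)
```

The player in this example is still logging in, so a 7-day or 14-day inactivity rule would not flag them at all. Comparing each player against their own baseline is one simple design choice; a trained model would presumably weigh these signals rather than apply fixed thresholds.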

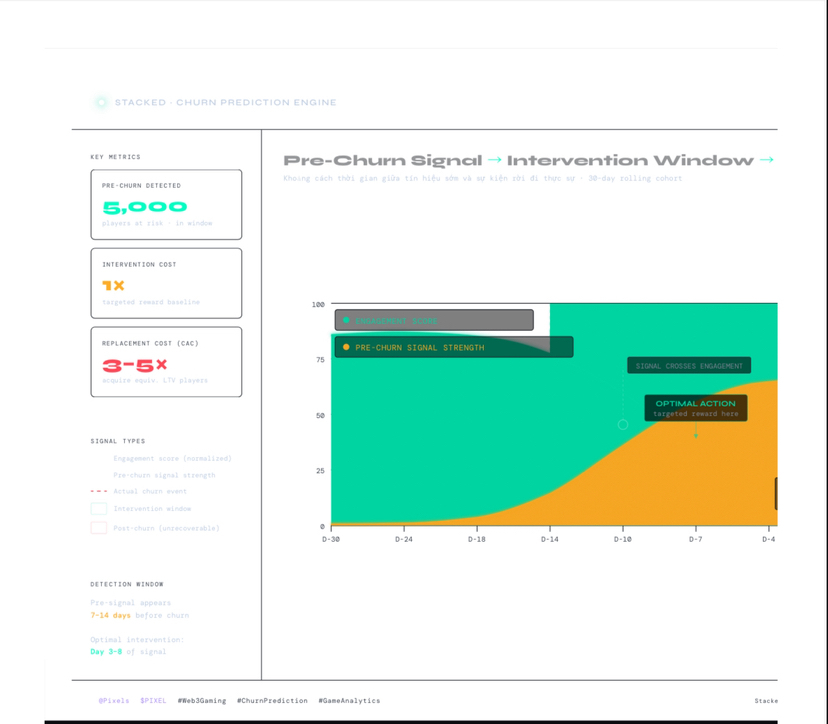

To understand why spotting those signals early is economically crucial, I need to explain a cost problem that most studios don’t calculate accurately. Retaining a player is always cheaper than acquiring a new one. In Web3 gaming, that gap is even wider because customer acquisition cost (CAC) is high and new players need time to grasp the game economy before they create real value. If you can intervene before a high-value player decides to leave, the cost of that intervention is almost always less than the cost to replace them.

Looking at that intervention window, I tried to run a simple calculation. If a studio has 100,000 active players and 5,000 of them are in a pre-churn state that no one knows about, what is the cost to retain those 5,000 through targeted rewards compared to the cost of acquiring 5,000 new players with the same LTV from the market? Under normal conditions in Web3 gaming, the second number is 3 to 5 times larger than the first, sometimes even more when considering the time a new player needs to understand the game economy and start contributing real value.
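The calculation above can be sketched in a few lines. The per-player dollar figures below are placeholders I picked so that the ratio lands inside the 3 to 5 times range; they are not real Pixels or market data, and the function name `retention_vs_acquisition` is my own.

```python
def retention_vs_acquisition(at_risk_players, reward_cost_per_player, cac_per_player):
    """Compare the cost of retaining at-risk players with replacing them.

    Returns (retain_cost, acquire_cost, ratio), where ratio is how many
    times more expensive acquisition is than retention.
    """
    retain_cost = at_risk_players * reward_cost_per_player
    acquire_cost = at_risk_players * cac_per_player
    return retain_cost, acquire_cost, acquire_cost / retain_cost


# Assumed inputs: 5,000 pre-churn players, $4 of targeted rewards each,
# and $16 to acquire one replacement player of comparable LTV.
retain_cost, acquire_cost, ratio = retention_vs_acquisition(5_000, 4.0, 16.0)
print(f"retain: ${retain_cost:,.0f}  acquire: ${acquire_cost:,.0f}  ratio: {ratio:.1f}x")
```

This sketch assumes every targeted player is actually retained. In practice only a fraction will respond to the reward, so the effective cost per retained player is higher than the cost per targeted player, and the real ratio depends on that save rate.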

This is why I think "spot churn patterns" isn't just an analytics feature but an economic asset. And that asset is only valuable if it's directly tied to the action loop. Seeing a pattern without being able to act within the same system is just a prettier dashboard.

Stacked tackles that problem by putting insight and action on the same platform. The AI economist spots churn patterns, the system recommends interventions, and the studio runs targeted rewards for the right cohort in the right time window. No export step, no approval meetings, no intermediate pipeline delaying action until the window has closed.

I want to be honest about what I don't know.

The ability to spot churn patterns depends on the quality and diversity of the training data. If Stacked is primarily trained on data from Pixels, the patterns it identifies will reflect the behavior of Pixels players, not the universal behavior of Web3 gaming players. A studio whose game has a different genre, demographic, or set of mechanics may find that its churn signals look different from what the model has learned.