I’ve been around long enough to recognize when something sounds elegant on paper but starts to creak the moment real traffic hits it. That’s the vibe I get watching people talk about AI coins lately—especially stuff like Bittensor (TAO). Everyone’s excited again. New narrative, new cycle, same confidence. But under the hood? This isn’t your usual crypto toy problem. It’s messier. Way messier.

People keep describing these systems like they’re some kind of decentralized AI cloud. Plug in compute, collect tokens, done. I wish. That’s not what this is. What you actually have is a competitive system where nodes are constantly trying to prove they’re “useful,” except nobody fully agrees on what useful even means. I’ve built ranking systems before. Recommendation engines, matchmaking, reward loops. They all look clean until you introduce incentives. Then everything starts bending in weird ways.

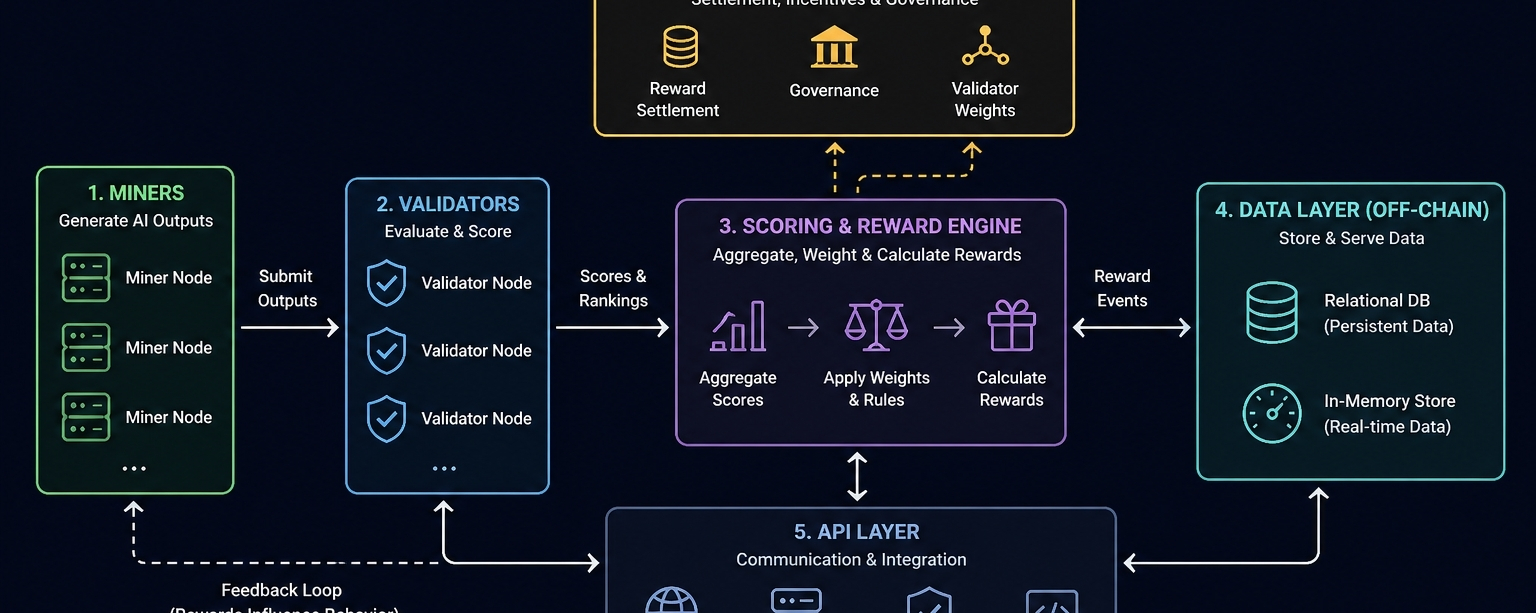

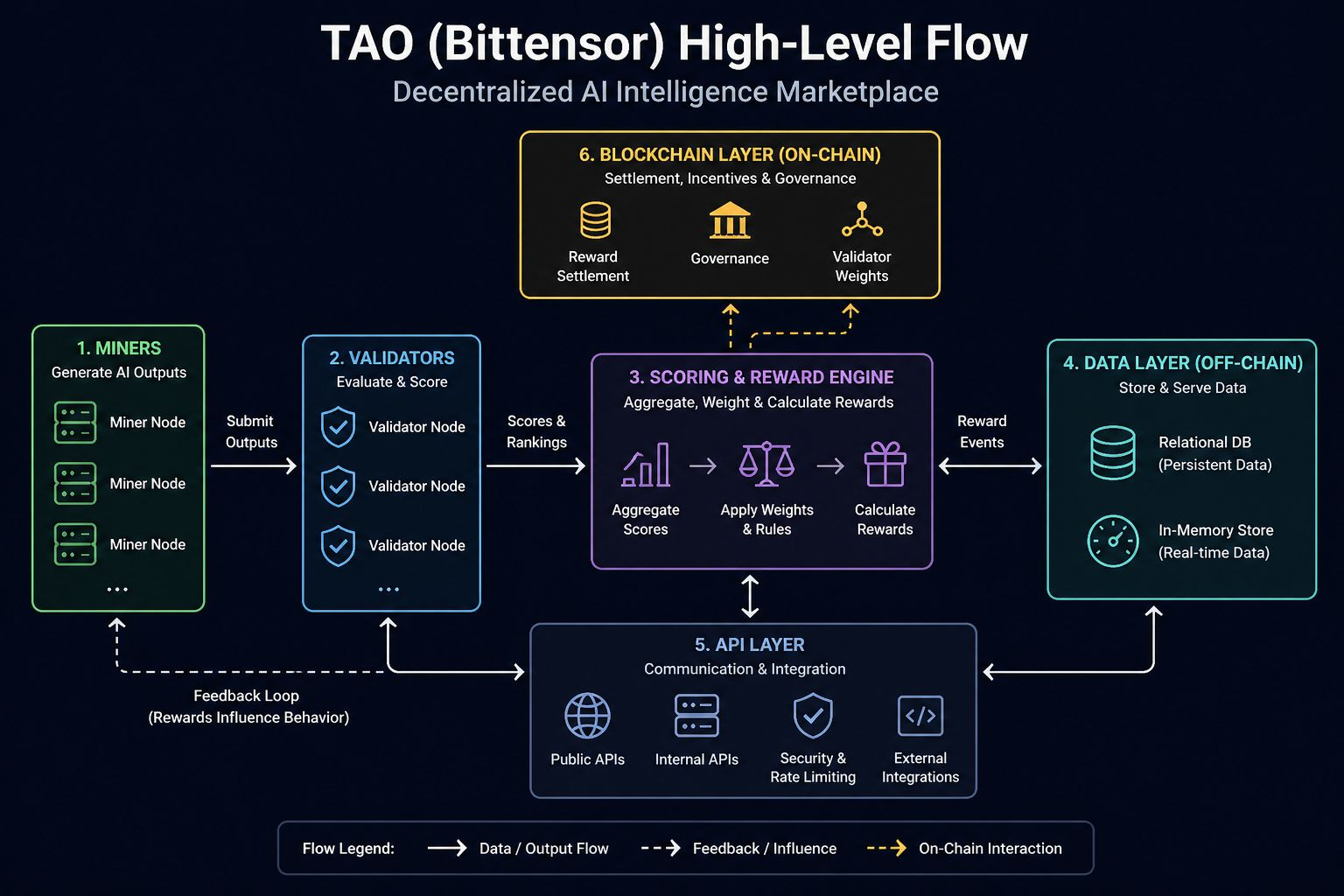

Here, miners aren’t just running workloads—they’re generating outputs that get judged. And those judgments? They’re coming from validators who are also part of the same incentive loop. That should make you pause. Because I’ve seen this go wrong. You introduce subjective scoring into a competitive environment and suddenly you’re not just building infrastructure—you’re managing behavior. And behavior is where systems get unpredictable.

The architecture tries to keep up with this. On paper, it’s this nice decentralized loop—miners produce, validators score, rewards adjust. In reality, it feels more like a constantly running feedback machine that you’re hoping doesn’t spiral. Everything is event-driven, constantly reacting, constantly updating. There’s no real “steady state,” which is already a red flag if you’ve ever had to keep a live system stable for months at a time.
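To make that loop concrete, here is a minimal sketch of one round: validators score miners, scores get combined by validator stake, and a fixed emission is split in proportion. To be clear, this is not Bittensor’s actual consensus mechanism. The function names, the stake-weighted average, and the proportional split are all assumptions I picked for illustration.

```python
from typing import Dict


def aggregate_scores(
    scores: Dict[str, Dict[str, float]],  # validator -> {miner -> score}
    stake: Dict[str, float],              # validator -> stake (hypothetical weighting)
) -> Dict[str, float]:
    """Stake-weighted average of each miner's score across validators."""
    totals: Dict[str, float] = {}
    weights: Dict[str, float] = {}
    for validator, miner_scores in scores.items():
        w = stake.get(validator, 0.0)
        for miner, s in miner_scores.items():
            totals[miner] = totals.get(miner, 0.0) + w * s
            weights[miner] = weights.get(miner, 0.0) + w
    # Miners scored only by zero-stake validators are dropped.
    return {m: totals[m] / weights[m] for m in totals if weights[m] > 0}


def split_rewards(agg: Dict[str, float], emission: float) -> Dict[str, float]:
    """Split a fixed emission in proportion to aggregated scores."""
    total = sum(agg.values())
    if total <= 0:
        return {m: 0.0 for m in agg}
    return {m: emission * s / total for m, s in agg.items()}


if __name__ == "__main__":
    scores = {"v1": {"a": 1.0, "b": 0.0}, "v2": {"a": 0.0, "b": 1.0}}
    stake = {"v1": 3.0, "v2": 1.0}
    print(split_rewards(aggregate_scores(scores, stake), emission=100.0))
```

Notice that every round’s rewards feed straight into the next round’s behavior. Whoever holds the most stake decides what “useful” means, which is exactly the kind of feedback I’m nervous about.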

And let’s just be honest about something: most of this “decentralized” system is running on centralized infrastructure. Cloud GPUs. Autoscaling clusters. The usual suspects. I’ve deployed enough backend systems to know what that looks like. You’re juggling costs, dealing with flaky instances, praying your orchestration doesn’t choke under load. The protocol might be decentralized. The actual execution? Not even close.

The data layer is where things start getting... interesting. You can’t rely purely on traditional databases here. Too slow, too rigid. But you also can’t go full in-memory because you need some notion of persistent truth. So you end up with this split personality system—part of it trying to be consistent and reliable, the other part just trying to keep up in real time. I’ve built systems like that. They work, until they don’t. And when they don’t, debugging them is a nightmare because your “truth” depends on timing.

Latency is another beast entirely. These systems can’t afford to feel slow. If responses lag, the whole thing loses relevance. So they cheat a little. Parallel processing, local evaluation, asynchronous scoring. All the usual tricks. You sacrifice clean consistency for speed because you have to. I’ve made that trade before. Everyone does eventually. You tell yourself it’s fine because users care about responsiveness. And they do. Until something weird happens and now you’ve got inconsistent state across nodes and no easy way to reconcile it.
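Here’s roughly what that trade looks like in code: score every output concurrently, and give up on anything that misses a deadline. This is a sketch under my own assumptions (`score_all`, the `scorer` callable, and the timeout value are all hypothetical), not how any particular network does it.

```python
import asyncio
from typing import Awaitable, Callable, Dict, Optional


async def score_all(
    outputs: Dict[str, str],                     # miner -> output text
    scorer: Callable[[str], Awaitable[float]],   # hypothetical async scoring function
    timeout_s: float,
) -> Dict[str, Optional[float]]:
    """Score all outputs in parallel; a score of None means the deadline was missed."""

    async def score_one(miner: str, text: str):
        try:
            return miner, await asyncio.wait_for(scorer(text), timeout=timeout_s)
        except asyncio.TimeoutError:
            # We chose responsiveness over completeness here.
            return miner, None

    pairs = await asyncio.gather(*(score_one(m, t) for m, t in outputs.items()))
    return dict(pairs)
```

The `None` entries are where the inconsistency creeps in. Two validators with slightly different timing will disagree about which miners even got a score, and now you have to decide what a missing score means for rewards.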

The blockchain side of things? Honestly, it’s doing less than people think. And that’s probably a good thing. You don’t want AI workloads anywhere near on-chain execution unless you enjoy pain. The chain handles rewards, maybe the weights validators assign to miners, governance if you’re lucky. Everything else happens off-chain where you can actually move fast. That split is necessary, but it creates this awkward boundary where you’re trusting off-chain systems to behave while the chain just records outcomes. It’s a compromise. Not a clean one.
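One generic way to picture that boundary: the heavy work stays off-chain, and the only thing that gets recorded is a hash of the result. The sketch below is an assumption on my part, not a description of how Bittensor stores anything. `commitment` and `verify` are hypothetical helpers.

```python
import hashlib
import json
from typing import Dict


def commitment(weights: Dict[str, float]) -> str:
    """SHA-256 over a canonical JSON encoding of off-chain weights."""
    # sort_keys and fixed separators make the encoding independent of dict order.
    payload = json.dumps(weights, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def verify(weights: Dict[str, float], recorded_hash: str) -> bool:
    """Check that off-chain weights match the hash that was recorded."""
    return commitment(weights) == recorded_hash
```

A hash like this proves the weights weren’t changed after the fact. It says nothing about whether they were computed honestly in the first place, which is the part you’re still taking on trust.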

The API layer ends up carrying a lot of hidden complexity. It’s not just passing data around—it’s dealing with untrusted participants who might spam, manipulate, or just send garbage. I’ve dealt with that kind of traffic. It’s exhausting. You start building defensive systems—rate limiting, validation layers, fallback logic—and suddenly your “simple API” is anything but simple. It becomes a battlefield.
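Rate limiting is usually the first brick in that wall. Below is a minimal token bucket, the kind you’d keep one of per peer. The class name, parameters, and the injectable clock are my own choices for the sketch, not anything from a specific protocol.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float,
                 now: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self._now = now              # injectable clock, handy for testing
        self.tokens = capacity       # start with a full bucket
        self.last = now()

    def allow(self, cost: float = 1.0) -> bool:
        """Return True and spend tokens if the request fits, else False."""
        t = self._now()
        # Refill for the elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (t - self.last) * self.rate)
        self.last = t
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

This only handles volume. Garbage that arrives politely, under the limit, still needs a validation layer behind it.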

And then come the trade-offs. Everyone likes to talk about decentralization like it’s a free win. It’s not. It slows things down. It complicates coordination. So you start sneaking in centralization where it helps. Maybe in evaluation. Maybe in coordination. It’s subtle at first. Then it’s not. Same with speed versus trust—you push for faster systems, you loosen guarantees. There’s always a cost. Always.

I’ve seen systems like this buckle under pressure. Heavy load hits, validators can’t keep up, scoring lags behind generation. Suddenly low-quality outputs start slipping through because the system is overwhelmed. Or worse, people figure out how to game the incentives. And they will. They always do. You don’t design for honest participants—you design for the worst ones. If you don’t, they’ll teach you the hard way.
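The “scoring lags behind generation” part is just queue arithmetic. Here’s a toy model with made-up rates: if outputs arrive faster than validators can score them, the backlog grows every tick and never recovers by itself.

```python
from typing import List


def backlog_over_time(gen_rate: float, score_rate: float, steps: int) -> List[float]:
    """Unscored outputs after each tick, given per-tick generation and scoring rates."""
    backlog = 0.0
    history: List[float] = []
    for _ in range(steps):
        # The backlog can't go negative: idle validators don't bank capacity.
        backlog = max(0.0, backlog + gen_rate - score_rate)
        history.append(backlog)
    return history


if __name__ == "__main__":
    print(backlog_over_time(gen_rate=120, score_rate=100, steps=3))  # [20.0, 40.0, 60.0]
    print(backlog_over_time(gen_rate=80, score_rate=100, steps=3))   # [0.0, 0.0, 0.0]
```

Once that number is climbing, you either drop outputs, score them less carefully, or pay stale rewards. All three are ways for low-quality work to slip through.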

You can throw mitigation at it—reputation systems, dynamic weighting, redundancy—but none of that is bulletproof. It just raises the bar. And raising the bar means increasing complexity, which introduces new failure modes. It’s a loop. I’ve lived that loop.
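A typical reputation scheme looks something like this sketch: take the median score as the consensus, then nudge each validator’s reputation toward how closely it agreed. Everything here is an assumption for illustration, including the median, the default reputation of 0.5, and the smoothing factor `alpha`.

```python
from statistics import median
from typing import Dict


def update_reputations(
    reps: Dict[str, float],     # validator -> current reputation in [0, 1]
    scores: Dict[str, float],   # validator -> score in [0, 1] for the same output
    alpha: float = 0.2,         # smoothing factor (hypothetical default)
) -> Dict[str, float]:
    """Exponential moving average of each validator's agreement with the median."""
    consensus = median(scores.values())
    updated = dict(reps)
    for validator, s in scores.items():
        agreement = 1.0 - min(1.0, abs(s - consensus))
        old = reps.get(validator, 0.5)  # unknown validators start neutral
        updated[validator] = (1 - alpha) * old + alpha * agreement
    return updated
```

And here’s the new failure mode it buys you: a validator who is right while the majority is wrong loses reputation, and a colluding majority sets the consensus. The bar is higher, but it’s still a bar.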

What really nags at me is the long-term picture. On paper, sure, this scales. More nodes, more compute, more participation. But scaling coordination? Scaling fair evaluation? That’s a different story. That’s where things get expensive. And not just financially—operationally, cognitively. The system gets harder to reason about.

There’s also this uncomfortable possibility that evaluation becomes the bottleneck. Generating outputs gets cheaper over time—models improve, hardware improves. But judging quality? That doesn’t scale as cleanly. If validators become the choke point, you start drifting toward centralization again, whether you like it or not.

I’m not saying this whole thing doesn’t work. It clearly works to some extent, or we wouldn’t be talking about it. But I’ve been burned enough times to know that systems like this don’t fail loudly at first. They degrade. Slowly. Quietly. Until one day you’re staring at dashboards at 3 AM wondering how everything got so complicated.

Maybe this time it holds together. Maybe the incentives are strong enough, the architecture flexible enough. Or maybe we’re just watching another system inch toward the same trade-offs we always end up making, just dressed up in a new narrative.

Either way, I wouldn’t bet on it being as clean as people are hoping.