I often think about a quiet cost that hides inside decentralized systems. I call it coordination drag. It’s the subtle friction that appears when a network claims to distribute power, yet the flow of data, timing, or execution still bends toward a few invisible centers. Most users never notice it directly. But when I watch markets move and observe how traders react to delays or uncertainty, coordination drag becomes very real. It shapes trust, behavior, and sometimes entire liquidity flows.

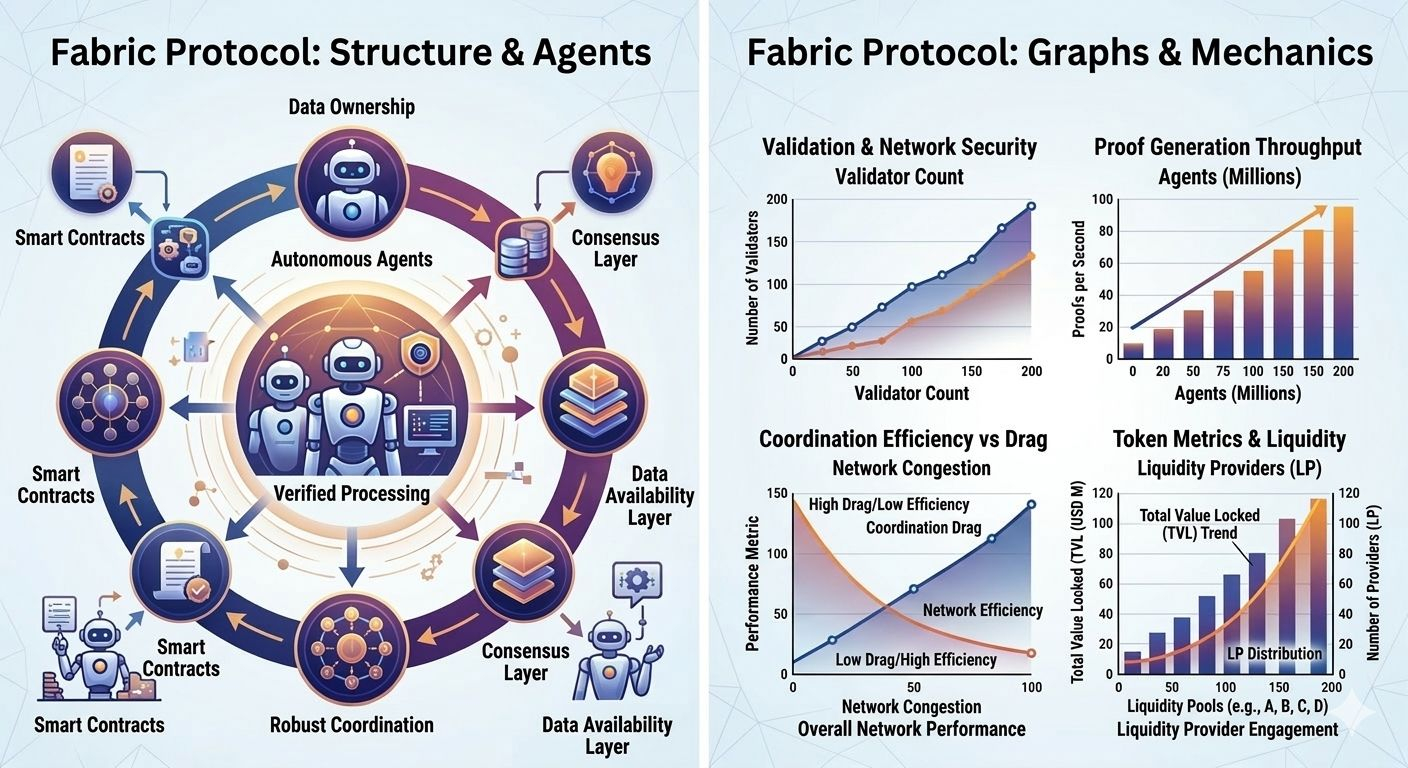

When I look at Fabric Protocol, what stands out to me is that it approaches decentralization from a direction few blockchain projects explore. Instead of focusing primarily on financial assets, Fabric treats machines and robotic agents as active participants in the network. That single design choice shifts the entire conversation. Decentralization stops being only about wallets sending tokens. It becomes about how machines sense information, compute outcomes, verify actions, and coordinate with humans in an open environment.

The moment machines enter the system, the importance of data ownership becomes impossible to ignore.

I’ve seen many networks that appear decentralized at the consensus layer but quietly centralize the data layer. Validators may be widely distributed, yet most users depend on a handful of indexing services, APIs, or analytics dashboards to understand what the chain is actually doing. Traders know this dynamic well. I remember watching a market event where a slight delay in oracle updates triggered liquidation cascades across several protocols. The blockchain itself was operating normally. Blocks were produced on time. But the external data pipeline feeding price information lagged just long enough to distort behavior across the system.
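To make that failure mode concrete, here is a minimal sketch of a staleness guard, assuming a protocol that refuses to liquidate when its price feed is too old. The names `PriceUpdate`, `is_fresh`, and `should_liquidate`, along with the 30-second limit and the 1.5 collateral ratio, are my own illustrative assumptions and are not taken from Fabric Protocol or any specific oracle.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceUpdate:
    price: float         # quoted price of one unit of collateral
    published_at: float  # seconds, on the same clock as `now`


def is_fresh(update: PriceUpdate, now: float, max_age_s: float) -> bool:
    """Return True if the update is no older than max_age_s (and not from the future)."""
    return 0 <= now - update.published_at <= max_age_s


def should_liquidate(
    collateral_units: float,
    debt: float,
    update: PriceUpdate,
    now: float,
    max_age_s: float = 30.0,  # hypothetical freshness limit
    min_ratio: float = 1.5,   # hypothetical minimum collateral ratio
) -> bool:
    """Liquidate only when the price is fresh AND the position is undercollateralized."""
    if not is_fresh(update, now, max_age_s):
        return False  # stale data: do nothing rather than act on a distorted price
    return collateral_units * update.price / debt < min_ratio
```

Whether pausing is the right response to stale data is itself a design choice. The point of the sketch is only that the age of the data becomes an explicit input to the decision instead of a hidden assumption.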

Execution is where decentralization proves itself or quietly fails.

In live trading environments, latency is not theoretical. A delay of even a few hundred milliseconds can change the outcome of a hedge or turn a neutral position into a loss. When networks require repeated transaction signing, unpredictable gas mechanics, or inconsistent confirmation timing, the psychological effect appears quickly. Traders hesitate. Liquidity providers widen spreads. Market participants begin searching for more predictable environments.

What interests me about Fabric Protocol is that robotic systems cannot tolerate this kind of uncertainty. A robotic agent performing a real-world task cannot simply pause while waiting for a chain to reach finality if that delay affects safety or operational flow. That constraint forces the infrastructure to prioritize verification and coordination in ways that typical financial chains sometimes overlook.

Verifiable computing becomes the central mechanism that supports this structure. Instead of trusting that an agent performed an action correctly, the system produces cryptographic proofs that can be verified independently by the network. That shift might sound technical, but its implications are psychological as well. Trust moves away from identity and toward verifiable evidence.
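A rough way to picture the idea is sketched below. This is not how a production proof system works: real verifiable computing relies on succinct cryptographic proofs, while this stand-in uses a hash commitment plus deterministic re-execution. The task format and the functions `run_task`, `commit`, and `verify` are hypothetical. What carries over is the principle that the verifier checks evidence and never asks who submitted it.

```python
import hashlib
import json


def run_task(task: dict) -> int:
    """Hypothetical deterministic task: sum a list of sensor readings."""
    return sum(task["readings"])


def commit(task: dict, result: int) -> str:
    """Bind a task and its claimed result together with a SHA-256 digest."""
    payload = json.dumps({"task": task, "result": result}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def verify(task: dict, claimed_result: int, commitment: str) -> bool:
    """Accept only if re-execution matches the claim and the commitment is intact."""
    return (
        run_task(task) == claimed_result
        and commit(task, claimed_result) == commitment
    )


task = {"readings": [1, 2, 3]}
honest = commit(task, 6)
print(verify(task, 6, honest))             # True
print(verify(task, 7, commit(task, 7)))    # False: the claimed result is wrong
```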

When trust becomes evidence-based, coordination becomes more resilient.

From a market perspective, reliability often matters more than speed. Traders can adapt to a predictable delay, but inconsistent execution introduces uncertainty that spreads quickly through liquidity pools. I’ve seen strategies built entirely around block timing assumptions. When those assumptions break under congestion, entire systems begin to behave unpredictably.
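A small worked example shows why the average alone hides the problem. The latency figures are invented for illustration. Both series have the same mean confirmation time, but only one of them is something a strategy can plan around.

```python
from statistics import mean, pstdev

# Hypothetical confirmation times in milliseconds.
steady = [400, 400, 400, 400, 400]     # slow but predictable
jittery = [100, 100, 100, 100, 1600]   # usually fast, occasionally very slow

for name, series in (("steady", steady), ("jittery", jittery)):
    print(name, "mean:", mean(series), "spread:", pstdev(series))
```

Both series average 400 ms. The steady one has a standard deviation of 0, while the jittery one has a standard deviation of 600 ms, which is the number that breaks timing assumptions.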

Fabric Protocol attempts to reduce that unpredictability by structuring computation and data availability across modular layers. Instead of forcing every node to store complete datasets, information can be fragmented and distributed across the network. Techniques like erasure coding allow pieces of data to be stored redundantly while remaining recoverable even if several nodes disappear.
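The simplest erasure code I know of is a single XOR parity fragment, and it is enough to show the principle. The sketch below is my own illustration and not Fabric Protocol's scheme: it splits data into `k` fragments plus one parity fragment and can repair exactly one lost fragment. Surviving several simultaneous losses, as described above, requires a stronger code such as Reed-Solomon.

```python
from functools import reduce
from typing import List, Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> List[bytes]:
    """Split data into k equal-size fragments and append one XOR parity fragment."""
    size = -(-len(data) // k)                # ceiling division
    padded = data.ljust(size * k, b"\x00")   # zero-pad so fragments are equal length
    fragments = [padded[i * size:(i + 1) * size] for i in range(k)]
    parity = reduce(xor_bytes, fragments)
    return fragments + [parity]


def recover(fragments: List[Optional[bytes]], original_len: int) -> bytes:
    """Rebuild the data when at most one fragment (marked None) is missing."""
    missing = [i for i, f in enumerate(fragments) if f is None]
    if len(missing) > 1:
        raise ValueError("single parity can repair only one lost fragment")
    repaired = list(fragments)
    if missing:
        present = [f for f in fragments if f is not None]
        # XOR of all surviving fragments equals the missing one.
        repaired[missing[0]] = reduce(xor_bytes, present)
    return b"".join(repaired[:-1])[:original_len]


data = b"robot telemetry"
stored = encode(data, k=4)   # five fragments, one per hypothetical node
stored[2] = None             # one node disappears
print(recover(stored, len(data)) == data)  # True
```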

This distribution is not only about storage efficiency. It’s about resilience.

If thousands of machine agents begin submitting computation proofs simultaneously, the network must avoid bottlenecks where verification becomes concentrated in a few locations. Distributed data availability allows the original data to be reconstructed from the surviving fragments even if parts of the system temporarily fail.

Still, every architecture carries trade-offs.

High-performance blockchains often balance decentralization against coordination efficiency. Some networks reduce validator counts to maintain predictable communication. Others expand participation but accept slower synchronization between nodes. Fabric Protocol sits somewhere within that tension, attempting to maintain performance while preserving distributed verification.

What makes its approach interesting is the context in which the network operates. Most chains are optimized primarily for financial throughput. Fabric Protocol appears designed for interactions in which the participants may not be human at all. That shift changes how reliability is evaluated.

Machines are less forgiving than people.

A robotic system executing instructions based on blockchain state cannot interpret ambiguous conditions or delayed information easily. For that reason, confirmation reliability and data availability become critical components of the system’s credibility.

User experience also plays a surprisingly important role here.

When I observe traders interacting with decentralized platforms, small design decisions often shape behavior more than technical documentation ever will. If executing a simple action requires multiple signatures, fragmented gas payments, or uncertain timing, users gradually reduce their engagement.

Fabric Protocol attempts to reduce that friction by allowing agents to operate under defined permissions and verifiable instructions rather than requiring constant manual approval. In practice, this transforms the interaction model. Instead of orchestrating every individual transaction, participants coordinate persistent autonomous processes.
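One way to picture "defined permissions" is a grant that is approved once and then checked automatically on every action. The sketch below is an assumption about what such a check could look like. The `Grant` fields and the `authorize` function are hypothetical names and do not come from Fabric Protocol's documentation.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class Grant:
    agent_id: str
    allowed_actions: FrozenSet[str]
    spend_limit: float   # total budget the agent may spend under this grant
    expires_at: float    # seconds, on the same clock as `now`


def authorize(
    grant: Grant,
    agent_id: str,
    action: str,
    cost: float,
    spent_so_far: float,
    now: float,
) -> bool:
    """Allow an action only if it stays inside the scope the human approved once."""
    return (
        grant.agent_id == agent_id
        and action in grant.allowed_actions
        and now < grant.expires_at
        and cost >= 0
        and spent_so_far + cost <= grant.spend_limit
    )


grant = Grant("arm-7", frozenset({"move", "scan"}), spend_limit=10.0, expires_at=2000.0)
print(authorize(grant, "arm-7", "move", cost=3.0, spent_so_far=5.0, now=1000.0))  # True
print(authorize(grant, "arm-7", "weld", cost=3.0, spent_so_far=5.0, now=1000.0))  # False
```

The human signs the grant once. Everything after that is the agent acting within bounds, which is what replaces the repeated signing prompts.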

Of course, autonomy introduces its own structural risks.

Infrastructure requirements can quietly concentrate influence among participants who possess the resources to operate stable nodes. Hardware demands, bandwidth requirements, and verification workloads can create barriers that limit who participates in maintaining the network.

This is a familiar tension across most high-performance blockchain systems. The real question is not whether centralization pressure exists, but whether incentives and governance mechanisms can counterbalance it.

In Fabric Protocol, the native token plays a role in maintaining this balance. Validators stake tokens to participate in consensus. Agents pay for computation and verification services. Governance mechanisms allow the network to adjust parameters as usage patterns evolve.

When designed carefully, these incentives form feedback loops that reward reliability and penalize dishonest behavior.
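As a toy model of such a feedback loop, the sketch below rewards each valid proof and slashes a fraction of stake from any validator that submitted an invalid one during an epoch. The function `settle_epoch`, the reward of 1.0 per proof, and the 10% slash are assumptions chosen for illustration, not parameters of the actual token design.

```python
from typing import Dict


def settle_epoch(
    stakes: Dict[str, float],
    valid_proofs: Dict[str, int],
    invalid_proofs: Dict[str, int],
    reward_per_proof: float = 1.0,  # hypothetical reward
    slash_fraction: float = 0.1,    # hypothetical penalty
) -> Dict[str, float]:
    """Return new stakes after rewarding valid work and slashing dishonest validators."""
    updated = {}
    for validator, stake in stakes.items():
        # Reliability is rewarded in proportion to verified work.
        stake += reward_per_proof * valid_proofs.get(validator, 0)
        # Any invalid proof in the epoch costs a fraction of the stake.
        if invalid_proofs.get(validator, 0) > 0:
            stake -= slash_fraction * stake
        updated[validator] = stake
    return updated


stakes = {"a": 100.0, "b": 100.0}
print(settle_epoch(stakes, valid_proofs={"a": 5}, invalid_proofs={"b": 1}))
# {'a': 105.0, 'b': 90.0}
```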

Governance becomes less about controlling the system and more about adapting it over time.

No decentralized network launches in perfect equilibrium. Real usage reveals hidden assumptions. Parameters change. Infrastructure expands. What matters is whether governance can evolve the system without destabilizing its economic foundation.

Liquidity flows and oracle systems add another layer of complexity. Even if Fabric Protocol focuses on coordinating machines and computational agents, it cannot fully escape the gravitational influence of financial markets. Tokens circulate through exchanges, bridges connect external liquidity, and price feeds influence collateral structures across connected ecosystems.

This is where external dependencies can introduce unexpected vulnerabilities.

The oracle episode I described earlier is the clearest example I know. Each protocol followed its own logic, yet the shared dependency on one delayed feed produced simultaneous liquidations and cascading outcomes. Decentralized networks rarely fail alone. They fail through interconnected relationships.

Fabric Protocol attempts to reduce blind reliance on external inputs through verifiable computation wherever possible, but the challenge of reliable data feeds never disappears entirely.

The real strength of any infrastructure becomes visible under stress.

Imagine a sudden surge of robotic agents submitting computation proofs during a network event. Validators must process large volumes of verification while maintaining consistent block production. If the network schedules these processes efficiently, coordination continues smoothly. If not, queues build up, delays propagate outward, and market participants begin adjusting behavior almost immediately.
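The arithmetic behind that scenario is simple enough to simulate. The sketch below assumes a fixed verification capacity per block and made-up arrival numbers, and it tracks how many proofs are still waiting after each block.

```python
from typing import List


def simulate_backlog(arrivals_per_block: List[int], capacity_per_block: int) -> List[int]:
    """Return the number of unverified proofs left waiting after each block."""
    backlog = 0
    history = []
    for arrivals in arrivals_per_block:
        # Process up to `capacity_per_block` proofs; the rest carry over.
        backlog = max(0, backlog + arrivals - capacity_per_block)
        history.append(backlog)
    return history


# Two quiet blocks, a two-block surge, then quiet again (hypothetical numbers).
print(simulate_backlog([50, 50, 400, 400, 50, 50], capacity_per_block=100))
# [0, 0, 300, 600, 550, 500]
```

The surge lasts two blocks, but the backlog is still at 500 two blocks after it ends. With only 50 proofs of spare capacity per block, clearing it would take ten more quiet blocks. That lingering tail is the delay that propagates outward.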

Designing systems that survive these moments requires anticipating failure rather than avoiding discussion of it.

When I step back and observe Fabric Protocol through this lens, what I see is an attempt to build a coordination layer where humans and machines can interact through verifiable rules rather than centralized supervision.

That ambition carries both promise and pressure.

As the network grows, the real structural test will not be how impressive the technology appears in early demonstrations. The deeper test will be whether the infrastructure can preserve decentralized ownership of the data generated by the agents it coordinates.

If machines compute and verify actions but the data they produce ultimately flows back into centralized repositories, the system simply recreates old power structures in a new form.

True decentralization requires something more demanding.

It requires distributed knowledge, resilient infrastructure, and incentives that reward long-term participation instead of short-term extraction.

These qualities rarely capture attention during speculative cycles.

But over time, they determine whether a network becomes another experiment in distributed systems or evolves into a durable foundation for a new kind of machine economy.
