I’ve noticed that most conversations about privacy in crypto start from a place that already assumes the answer. People talk as if the presence of cryptography itself resolves the question, as if once data is hidden, the problem is finished. I don’t look at it that way. What interests me more is what kind of coordination burden privacy actually creates once you try to turn it into infrastructure. Midnight Network presents itself as a system that uses zero-knowledge proofs to preserve data protection without sacrificing utility, but I find myself asking a different question: what has to be true, at a system level, for that balance to hold over time?

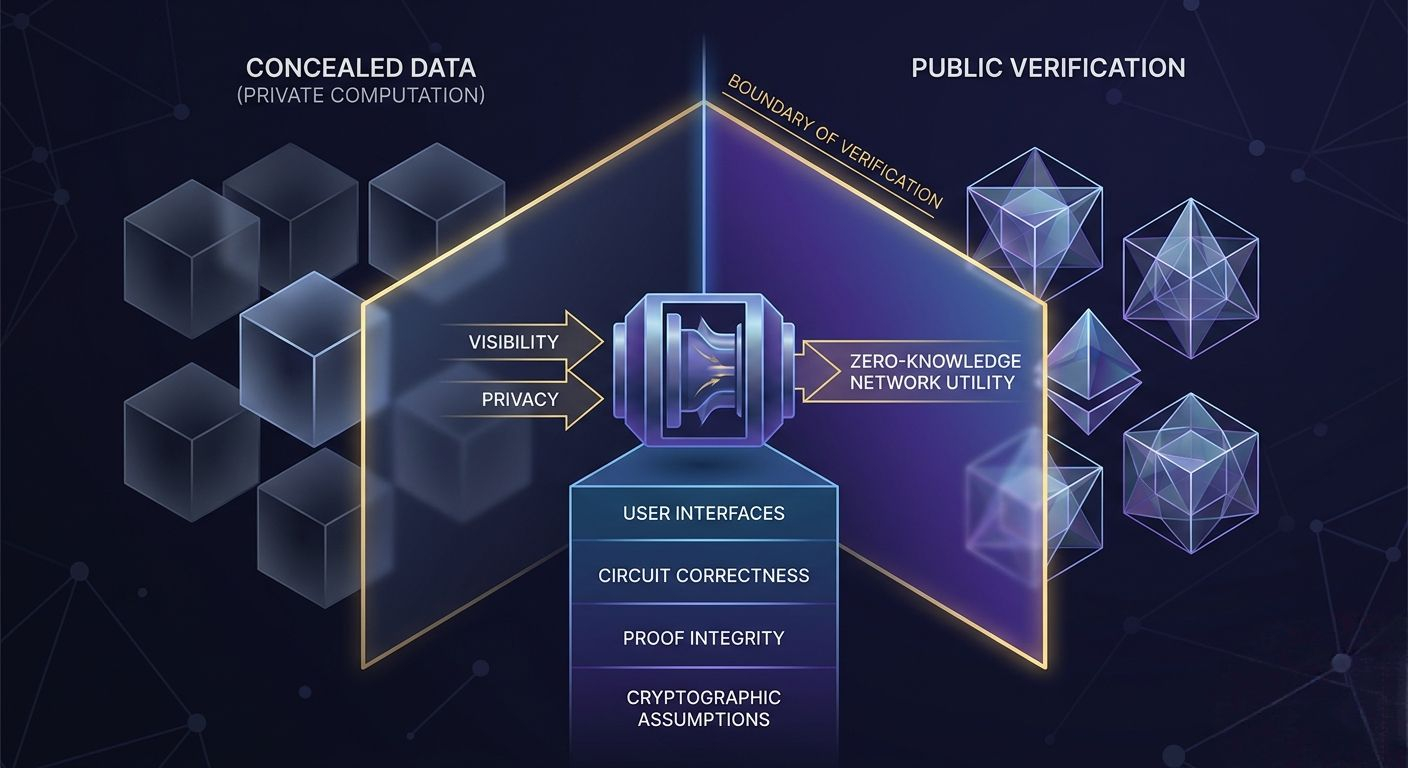

Because privacy, in practice, is not just about hiding information. It is about deciding who can verify what, when, and under which constraints. That is where things tend to get complicated. A network can claim strong privacy guarantees, but the moment it needs to interact with external systems—regulators, applications, users with conflicting incentives—it enters a space where verification cannot disappear. It only changes shape.

When I look at Midnight Network, I see an attempt to reposition that boundary. Zero-knowledge proofs are not being used simply as a feature, but as a structural layer that tries to reconcile two opposing demands: the need for confidentiality and the need for verifiability. On paper, this is elegant. You prove correctness without revealing the underlying data. You allow computation without exposure. But I keep coming back to the same thought: this only works if the system can sustain trust in the proofs themselves without recreating trust in some hidden intermediary.
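To make "prove correctness without revealing the underlying data" concrete for myself, I find it helps to look at the smallest example I know: a Schnorr-style proof of knowledge, made non-interactive with the Fiat-Shamir heuristic. This is a textbook sketch, not Midnight's proof system, which I am not describing here. The group parameters `P`, `Q`, `G` and the function names are my own illustrative choices, and the numbers are far too small to be secure.

```python
import hashlib
import secrets

# Toy group parameters (assumption, insecure by design): G = 2 generates a
# subgroup of prime order Q = 11 inside the integers modulo P = 23.
P, Q, G = 23, 11, 2


def challenge(public_key: int, commitment: int) -> int:
    """Derive the challenge by hashing the public values (Fiat-Shamir)."""
    data = f"{G}|{public_key}|{commitment}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(secret: int) -> tuple[int, int, int]:
    """Return (public_key, commitment, response); `secret` itself is never output."""
    public_key = pow(G, secret, P)
    nonce = secrets.randbelow(Q)           # fresh randomness hides the secret
    commitment = pow(G, nonce, P)
    c = challenge(public_key, commitment)
    response = (nonce + c * secret) % Q
    return public_key, commitment, response


def verify(public_key: int, commitment: int, response: int) -> bool:
    """Check G^response == commitment * public_key^c (mod P) using public data only."""
    c = challenge(public_key, commitment)
    return pow(G, response, P) == (commitment * pow(public_key, c, P)) % P


public_key, commitment, response = prove(secret=7)
print(verify(public_key, commitment, response))            # True: honest proof
print(verify(public_key, commitment, (response + 1) % Q))  # False: tampered proof
```

The verifier never sees the secret, only values that are consistent with someone knowing it. Even in this toy, everything rests on the hash, the group, and the verification equation being right, which is the dependency I keep returning to.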

That is the first tension I pay attention to. Zero-knowledge systems shift trust away from data visibility and into proof validity. This sounds like progress, but it introduces a new dependency. The entire network begins to rely on the correctness of circuits, the integrity of proving systems, and the assumptions embedded in cryptographic design. Most users will never see these layers. They will interact with interfaces that abstract everything away. From where I stand, that abstraction is not neutral. It changes where trust lives.

I keep asking what happens when something goes wrong. Not in the dramatic sense, but in the slow, almost invisible way systems tend to drift. If a proof system has a flaw, or if an implementation introduces subtle inconsistencies, how quickly can that be detected? And more importantly, who has the capacity to detect it? In transparent systems, failure often reveals itself through visible contradictions. In private systems, failure can remain hidden behind the very guarantees that are supposed to protect users.

This is not a criticism of zero-knowledge technology itself. If anything, it is an acknowledgment that Midnight Network is operating in a space where the margin for error is unusually thin. The system is trying to compress two opposing properties, privacy and verifiability, into a single operational model. That compression creates efficiency, but it also creates fragility.

At the same time, I can see where the design shows discipline. Midnight does not treat privacy as an optional add-on. It is embedded into the execution environment itself. This matters more than most people realize. When privacy is optional, it tends to degrade over time because users gravitate toward convenience. By making privacy a default condition, the network attempts to remove that drift. It forces every interaction to operate within the same constraint set.

But this introduces another coordination problem. If all transactions operate under privacy constraints, then the system must still provide enough composability for developers and applications to function effectively. That is not trivial. Composability often depends on shared visibility—smart contracts interacting with each other based on known states. Once those states become private, coordination between contracts becomes less straightforward.

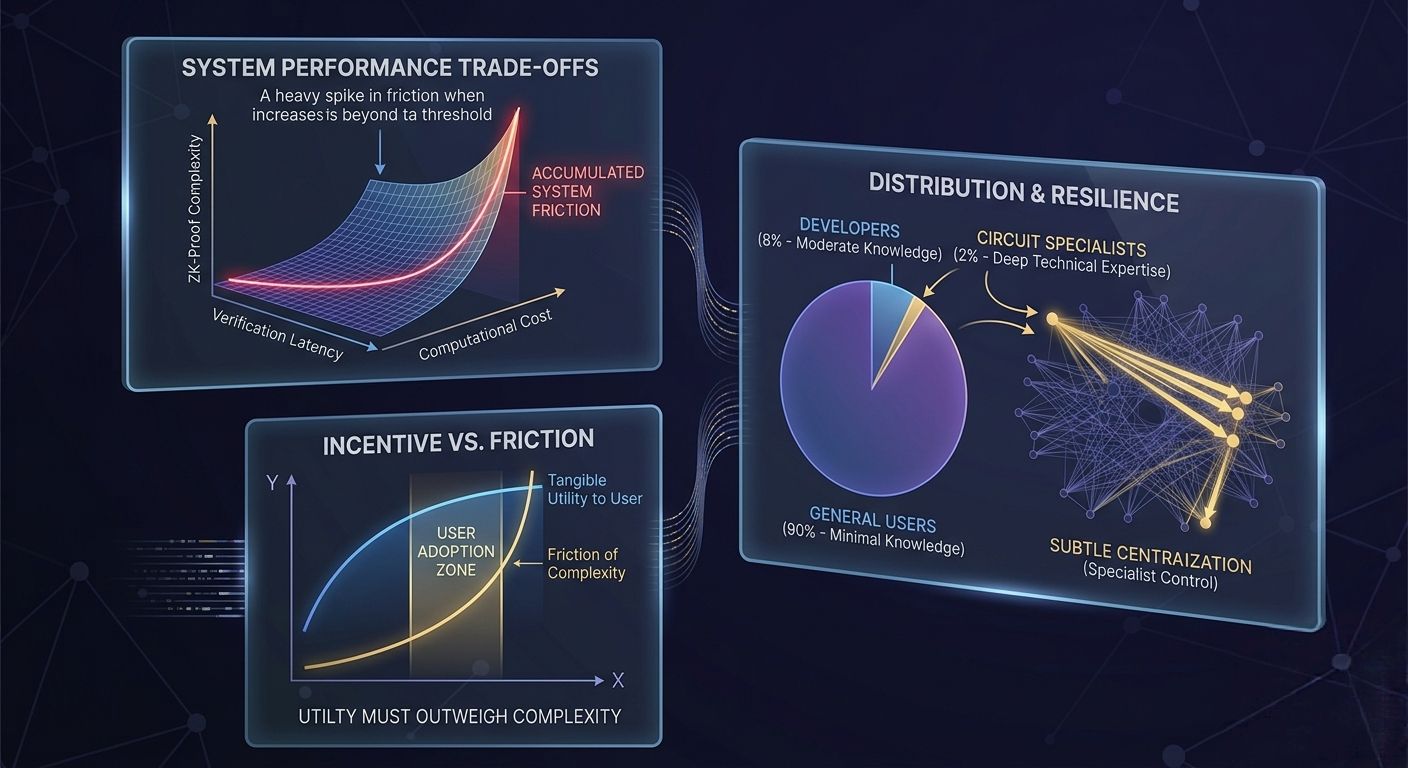

I find myself thinking about latency here, not just in terms of time, but in terms of informational delay. Zero-knowledge proofs are computationally expensive, and in succinct proof systems most of that expense typically falls on whoever generates the proof rather than on whoever verifies it. Even with optimizations, that cost does not disappear. It translates into friction. It may not be immediately visible to users, but it accumulates at the system level. If the network becomes too slow or too complex to interact with, participation starts to narrow. And when participation narrows, decentralization begins to weaken.

This is where incentive design becomes critical. For Midnight Network to sustain itself, it needs a broad base of participants who are willing to engage with its privacy model despite the additional complexity. That means the network must offer something that outweighs the friction. Not in a speculative sense, but in a practical one. Users need a reason to accept the trade-offs.

I don’t think that reason is purely ideological. Privacy, as a principle, is widely supported in theory but inconsistently adopted in practice. Most users will choose convenience unless the cost of exposure becomes tangible. Midnight seems to recognize this by focusing on utility—allowing applications to operate without compromising data ownership. But utility is not just about what the system enables. It is also about how reliably it delivers that experience over time.

Reliability, in this context, is not only technical. It is behavioral. The network must maintain consistent execution across different conditions, different user behaviors, and different external pressures. This is where governance enters the picture, even if it is not always visible. Decisions about upgrades, parameter changes, or security responses all carry weight. In a privacy-focused system, those decisions become harder to evaluate from the outside.

I keep circling back to the idea of visibility, not as a binary, but as a spectrum. Midnight reduces visibility at the data level, but it cannot eliminate the need for visibility at the system level. Users still need to trust that the network behaves as expected. Developers still need to understand how their applications interact with the underlying infrastructure. Regulators, whether welcomed or not, will still look for points of assurance.

This creates a layered trust model. At the surface, users trust the interface. Beneath that, they trust the application logic. Beneath that, they trust the proof system. And beneath that, they trust the cryptographic assumptions. Each layer introduces its own failure modes. What interests me is how well these layers align. If they drift apart, the system becomes harder to reason about.
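A rough way I think about how these layers compound is a back-of-the-envelope calculation. The probabilities below are invented purely for illustration, not measurements of Midnight or any other network, and the sketch assumes the layers fail independently, which real systems rarely do.

```python
from math import prod

# Hypothetical, made-up chances that each layer behaves as intended.
layers = {
    "interface": 0.99,
    "application logic": 0.99,
    "proof system": 0.99,
    "cryptographic assumptions": 0.99,
}


def stack_reliability(layer_probs: dict[str, float]) -> float:
    """Probability that every layer holds at once, assuming independence."""
    return prod(layer_probs.values())


overall = stack_reliability(layers)
print(f"{overall:.4f}")  # 0.9606
```

Even when each layer looks dependable on its own, the stack as a whole is weaker than any single layer, which is why the alignment between layers matters to me more than the strength of any one of them.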

There is also the question of distribution. Not in the token sense, but in terms of who actually participates in maintaining the network. Zero-knowledge systems often concentrate expertise. The ability to design, audit, and optimize circuits is not widely distributed. This can lead to a subtle form of centralization, where a small group of contributors holds disproportionate influence over the system’s evolution.

Midnight does not escape this dynamic. It may mitigate it through tooling and abstraction, but the underlying complexity remains. I wonder how this affects long-term resilience. If too much of the system depends on a narrow set of capabilities, then the network’s ability to adapt becomes constrained.

At the same time, I don’t think the answer is to avoid complexity altogether. The problems Midnight is trying to address are inherently complex. Data privacy, especially in a programmable environment, cannot be reduced to simple mechanisms. What matters is how that complexity is managed: whether it is contained within well-defined boundaries or allowed to leak into every part of the system.

From what I can see, Midnight is making a deliberate effort to contain it. The architecture suggests a separation between private computation and public verification, even if the distinction is not always obvious at the user level. This separation could be a strength if it holds. It allows the system to maintain clarity about what is hidden and what is exposed.

But again, that clarity depends on consistent execution. If different parts of the system interpret privacy constraints differently, or if edge cases introduce ambiguity, the model begins to weaken. These are not immediate failures. They are gradual misalignments that accumulate over time.

I find myself less interested in whether Midnight succeeds in the short term and more interested in how it behaves under stress. What happens when usage increases? When applications push the limits of what the system can handle? When external pressures demand transparency in ways that conflict with the network’s design?

These are the moments that reveal the underlying structure. Not the polished narrative, but the actual coordination mechanisms that keep the system functioning. This is where credibility forms. Not in the claims, but in the consistency of behavior when those claims are tested.

I don’t see Midnight Network as a solution in the final sense. I see it as an attempt to redefine where the boundary between privacy and verification should sit in a decentralized system. That boundary is not fixed. It shifts depending on context, incentives, and external constraints.

What I keep coming back to is the idea that privacy does not remove trust. It redistributes it. Midnight is asking users to trust in proofs rather than visibility, in cryptographic guarantees rather than direct observation. That is a meaningful shift, but it is not a free one.

As I watch the system more closely, the question that stays with me is not whether zero-knowledge can work at scale. It is whether a network built on hidden states can maintain shared understanding among participants who cannot see the same thing. Because at some point, coordination depends not just on correctness, but on a kind of collective clarity. And I am not yet sure how that clarity is sustained when the system is designed, by definition, to conceal as much as it reveals.
