I keep noticing how often systems that claim to distribute value fairly end up concentrating something more subtle instead: interpretation. Not control in the obvious sense, but the quiet authority to decide what counts as valid, who qualifies, and when something is considered proven. At first glance, SIGN looks like it is solving a fairly mechanical problem: verifying credentials and distributing tokens at scale. But I do not look at this as a simple feature story. What interests me more is the structure behind it. I keep asking what has to be true for this model to actually hold. That is the part I watch closely. From where I stand, credibility starts much earlier than most people think.

SIGN presents itself as infrastructure. That framing matters. Infrastructure does not compete at the surface level; it shapes what becomes possible for everything built on top of it. In this case, the promise is straightforward: credentials can be verified globally, and tokens can be distributed based on those credentials in a way that is transparent, scalable, and resistant to manipulation. It sounds clean. Almost inevitable. But when I slow down and trace the edges of the system, I start to see a deeper coordination problem forming underneath the surface.

The question is not just whether credentials can be verified. The question is who defines a credential in the first place, and under what conditions that definition remains stable across contexts.

Verification, in theory, is about reducing uncertainty. But in practice, it often relocates uncertainty rather than eliminating it. If SIGN is responsible for verifying credentials, then the system must rely on upstream sources of truth: issuers, attestations, proofs, or some form of identity anchoring. Each of these introduces its own trust boundary. And trust boundaries do not disappear just because they are encoded into a blockchain. They become more rigid, sometimes harder to question, and occasionally more difficult to adapt.

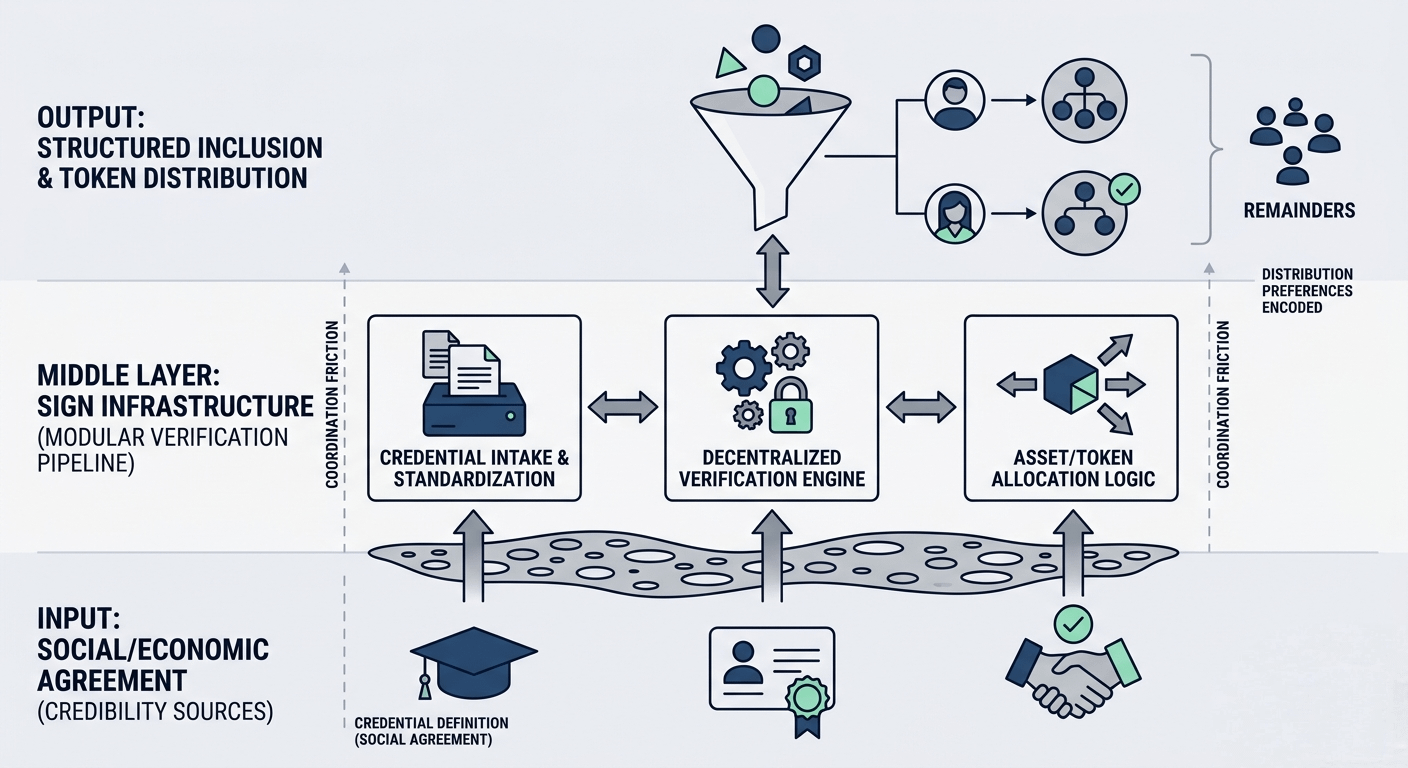

What I find interesting is how SIGN tries to compress multiple layers of coordination into a single pipeline: someone issues a credential, that credential is verified, and then tokens are distributed based on that verification. On paper, it feels efficient. But efficiency in distributed systems often comes from assuming that the edges of the system are already aligned. I am not sure that assumption holds.
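To make that three-step pipeline concrete, here is a minimal Python sketch built on loudly stated assumptions. It is not SIGN's API: the `Credential` type, the `issue`, `verify`, and `distribute` functions, the trusted-issuer registry, and the use of HMAC with a shared secret in place of real issuer signatures are all hypothetical. What it tries to show is where the trust boundary from the verification step actually lives: the cryptographic check can pass or fail, but whether an issuer counts at all is decided by a registry that sits outside the math.

```python
import hashlib
import hmac
from dataclasses import dataclass

# Hypothetical model, NOT SIGN's actual API. HMAC with a shared secret stands in
# for a real issuer signature scheme, only to keep the sketch self-contained.


@dataclass(frozen=True)
class Credential:
    issuer: str
    subject: str
    claim: str
    signature: bytes


def _message(issuer: str, subject: str, claim: str) -> bytes:
    return f"{issuer}|{subject}|{claim}".encode()


def issue(issuer_key: bytes, issuer: str, subject: str, claim: str) -> Credential:
    """Step 1 (issuance): an issuer binds a claim to a subject."""
    sig = hmac.new(issuer_key, _message(issuer, subject, claim), hashlib.sha256).digest()
    return Credential(issuer, subject, claim, sig)


def verify(cred: Credential, trusted_issuers: dict[str, bytes]) -> bool:
    """Step 2 (verification): only as good as the trusted-issuer registry."""
    key = trusted_issuers.get(cred.issuer)
    if key is None:
        return False  # unknown issuer: the registry, not the math, decides
    expected = hmac.new(key, _message(cred.issuer, cred.subject, cred.claim),
                        hashlib.sha256).digest()
    return hmac.compare_digest(expected, cred.signature)


def distribute(pool: int, creds: list[Credential],
               trusted_issuers: dict[str, bytes], required_claim: str) -> dict[str, int]:
    """Step 3 (distribution): split a token pool equally among verified subjects."""
    eligible = sorted({c.subject for c in creds
                       if c.claim == required_claim and verify(c, trusted_issuers)})
    if not eligible:
        return {}
    share = pool // len(eligible)  # integer division; any remainder is left undistributed
    return {subject: share for subject in eligible}


# Usage: one hypothetical registry, three credentials, one from an unlisted issuer.
registry = {"issuer-a": b"key-a"}
creds = [
    issue(b"key-a", "issuer-a", "alice", "contributor"),
    issue(b"key-a", "issuer-a", "bob", "contributor"),
    issue(b"key-x", "issuer-x", "carol", "contributor"),  # issuer not in registry
]
print(distribute(1000, creds, registry, "contributor"))  # {'alice': 500, 'bob': 500}
```

Carol's credential is internally consistent, yet she receives nothing because her issuer is absent from the registry. Nothing in the verification code makes that judgment; whoever maintains the registry does.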

Take credential issuance. For the system to function meaningfully, there must be entities or mechanisms that can produce credentials that others accept. That acceptance is not purely technical. It is social, economic, and sometimes political. A credential only has value if other participants agree that it should. So even if SIGN standardizes the format and verification process, it still depends on a layer of shared belief about what those credentials represent.

This is where the coordination problem becomes more visible. SIGN is not just verifying credentials; it is implicitly asking a network of participants to converge on a shared understanding of legitimacy. And convergence is expensive. It requires time, repetition, and often some form of incentive alignment that goes beyond the protocol itself.

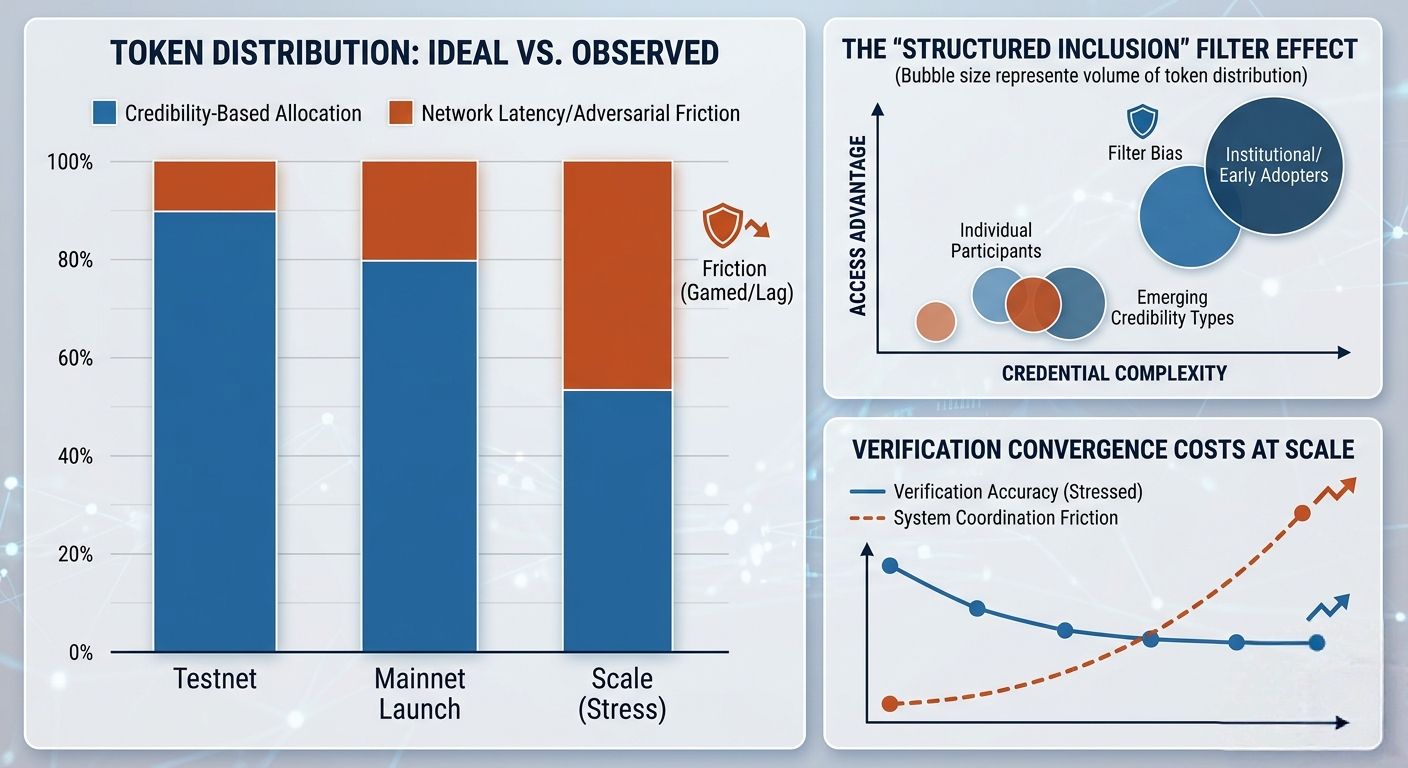

Then there is the distribution layer. Token distribution is often framed as a fairness problem: how do you allocate value to the right participants without being gamed? SIGN approaches this by tying distribution to verified credentials. In theory, this reduces noise. It filters out bots, Sybil accounts, and low-quality participation. But filtering is not neutral. Every filter encodes a preference. And preferences, once embedded into infrastructure, tend to persist longer than expected.

I find myself wondering what kinds of participants are implicitly favored by this model. If access to distribution depends on credentials, then those who are better positioned to obtain or produce those credentials gain an advantage. This is not necessarily a flaw. It might even be intentional. But it shifts the system away from open participation toward structured inclusion. And structured inclusion always raises the question of who remains outside.

There is also a temporal dimension that I do not think is immediately obvious. Credentials are not static. They change, expire, or lose relevance. But token distributions often operate on discrete events: snapshots, campaigns, allocations. This creates a kind of latency between reality and representation. A credential might be valid at the time of issuance but outdated by the time it is used for distribution. Or the opposite: a participant might become eligible just after a distribution window closes. These edge cases are not just operational details. They shape how fair the system feels over time.
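The snapshot problem fits in a few lines. In this hypothetical Python sketch, eligibility is evaluated once, at a single snapshot instant, against each credential's validity window. The `TimedCredential` type, the `eligible_at` helper, and all of the dates are invented for illustration; they do not describe how SIGN actually schedules or evaluates distributions.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical illustration: type, helper, and dates are invented for this example.


@dataclass(frozen=True)
class TimedCredential:
    subject: str
    valid_from: datetime   # inclusive start of validity
    valid_until: datetime  # exclusive end of validity


def eligible_at(creds: list[TimedCredential], snapshot: datetime) -> list[str]:
    """Eligibility is decided once, at the snapshot instant, not continuously."""
    return sorted(c.subject for c in creds if c.valid_from <= snapshot < c.valid_until)


def utc(year: int, month: int, day: int) -> datetime:
    return datetime(year, month, day, tzinfo=timezone.utc)


snapshot = utc(2024, 6, 1)
creds = [
    TimedCredential("alice", utc(2024, 1, 1), utc(2024, 12, 31)),  # valid throughout
    TimedCredential("bob", utc(2024, 1, 1), utc(2024, 5, 31)),     # expired a day before
    TimedCredential("carol", utc(2024, 6, 2), utc(2024, 12, 31)),  # valid a day too late
]
print(eligible_at(creds, snapshot))  # ['alice']
```

Bob held a valid credential for five months and misses the snapshot by one day; Carol qualifies one day after it. Moving the snapshot changes who is included even though no credential changes.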

What I appreciate about SIGN is that it seems aware of some of these tensions. The architecture suggests an attempt to separate concerns: verification logic, credential standards, distribution mechanisms. This kind of modularity is usually a sign of disciplined design. It allows parts of the system to evolve without breaking the whole. But modularity also introduces coordination overhead between modules. Each boundary becomes a point where assumptions must be aligned again.

I keep coming back to the idea of verification as a moving target. In traditional systems, verification often relies on centralized authorities precisely because they can update their criteria quickly. In decentralized systems, updating verification logic requires consensus, governance, or at least coordination among stakeholders. This introduces friction. And friction is not always visible until the system is under stress.

Stress, in this context, could come from scale. If SIGN succeeds and begins to handle large volumes of credentials and distributions, the system will need to maintain consistency across a wide range of use cases. Different applications may have different standards for what counts as a valid credential. Some may prioritize strict verification, others flexibility. Balancing these without fragmenting the ecosystem is not trivial.

Stress could also come from adversarial behavior. Any system that allocates value based on credentials will attract attempts to game those credentials. The question is not whether this will happen but how the system responds when it does. Does it tighten verification, potentially excluding legitimate participants? Does it loosen standards, risking dilution of value? Or does it introduce additional layers of complexity to distinguish between genuine and manipulated signals?

What I find particularly subtle is the governance layer implied by all of this. Even if SIGN does not position itself as a governance-heavy protocol, decisions about credential standards, verification rules, and distribution mechanisms will inevitably require coordination among stakeholders. Governance does not disappear just because it is minimized at the surface. It reappears in the form of upgrade paths, parameter changes, and informal influence.

And influence in systems like this often accumulates around those who can shape the definition of legitimacy: not necessarily those who hold the most tokens, but those who can influence what counts as a valid credential or a worthy recipient. This is a different kind of power, less visible but potentially more durable.

At the same time, I do not want to overlook what SIGN gets right. There is a clarity in the attempt to tie verification directly to distribution. It acknowledges that value allocation in crypto has often been noisy, inconsistent, and vulnerable to manipulation. By introducing a structured way to verify participants, SIGN is trying to raise the baseline quality of distribution. That matters, especially in an environment where trust is often thin.

There is also a certain honesty in focusing on infrastructure rather than applications. Infrastructure does not need to be everything to everyone. It needs to be reliable, predictable, and adaptable enough to support a range of use cases. SIGN seems to understand that its role is not to define the end-user experience but to provide the underlying logic that others can build on.

Still, I keep circling back to the same underlying tension. The system is trying to formalize trust without fully owning the sources of that trust. It verifies credentials but does not create them. It distributes tokens but does not define their ultimate value. It sits in the middle, translating signals from one domain into outcomes in another.

This middle position is powerful but also precarious. It depends on both sides behaving in ways that remain compatible with the system's assumptions. If issuers of credentials change their behavior, or if token economies evolve in unexpected ways, SIGN must adapt without losing coherence. That is not just a technical challenge. It is a coordination challenge that spans multiple layers of the ecosystem.

I sometimes think about how systems like this age. In the beginning, they are defined by their architecture: clean, intentional, carefully designed. Over time, they are defined by their exceptions: edge cases, patches, workarounds. The accumulation of these can either strengthen the system, making it more resilient, or gradually erode its clarity.

What I would watch, if I were observing SIGN over a longer horizon, is not just adoption or transaction volume but how it handles misalignment. When credentials mean different things to different participants, how does the system reconcile that? When distribution outcomes are contested, where does resolution happen? When the cost of verification rises, who absorbs that cost?

These are not questions with immediate answers. And maybe that is the point. Systems like SIGN are not static solutions. They are ongoing negotiations between structure and behavior, between what is defined and what emerges.

In the end, I do not find myself asking whether SIGN will succeed in the conventional sense. That feels too narrow. What stays with me instead is a quieter question: if you build a system that tries to standardize how credibility is expressed and rewarded, what happens when credibility itself refuses to stay standard?

@SignOfficial #SignDigitalSovereignInfra $SIGN