@SignOfficial #SignDigitalSovereignInfra

I’ve been spending a lot of time lately thinking about Sign Protocol, and honestly, it’s been keeping me up more than I expected.

Living here in Rawalpindi, privacy is a weird concept. You walk through the narrow lanes of Saddar or grab a burger near Committee Chowk, and everyone knows your business. It’s a culture built on "vouching" for people. But in the digital world, that doesn't really work. You can’t just have a shopkeeper say "he’s good for it" to a global database.

That’s where Sign Protocol first caught my eye. It felt like the digital version of that trust.

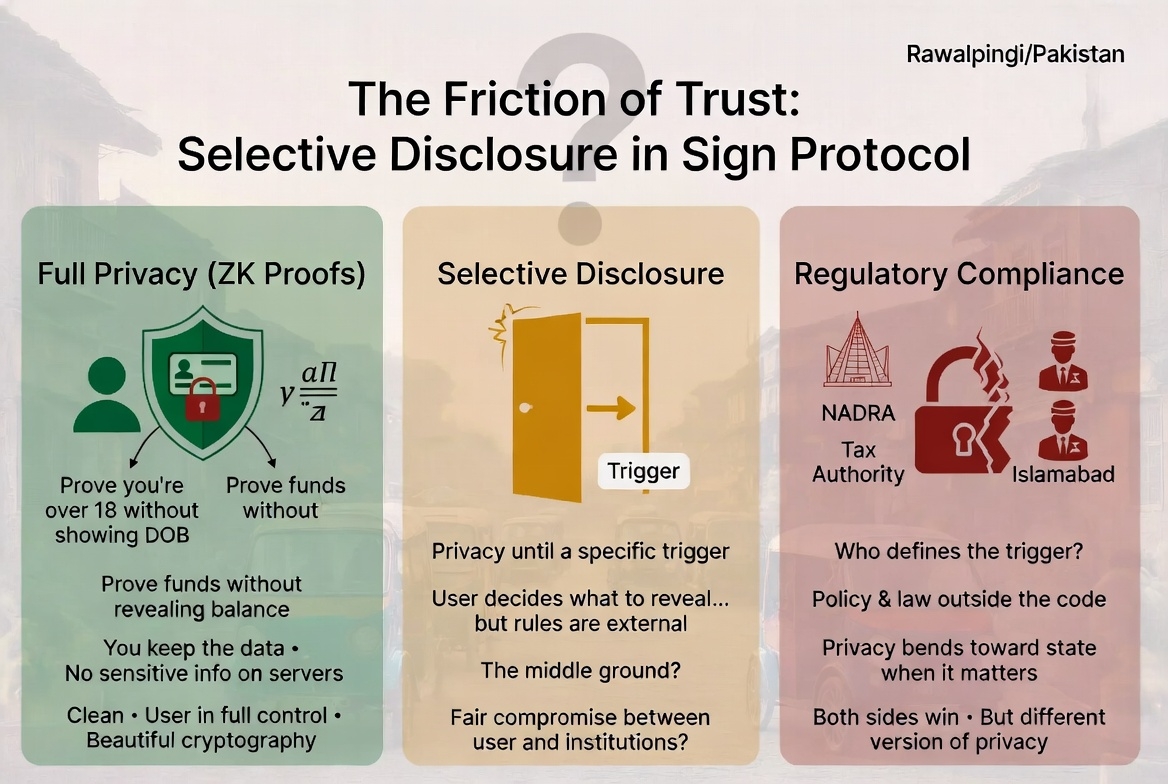

The technical side is honestly beautiful. It uses zero-knowledge proofs, which is basically a fancy way of saying you can prove you’re over 18, or that you have enough money in the bank, without actually showing your ID card or your balance. You keep the data; they get the proof. It’s clean. No sensitive info sitting on a random server waiting to be hacked.

But the more I sat with it—usually over a cup of tea while the rickshaws roared past—the more I realized there’s a massive tension under the surface.

On one side, you have this promise of total privacy. On the other, you have the reality of living in a country with strict regulations, tax authorities, and national systems like NADRA. Those systems don't care how cool your math is—they want to see what’s going on when it matters to them.

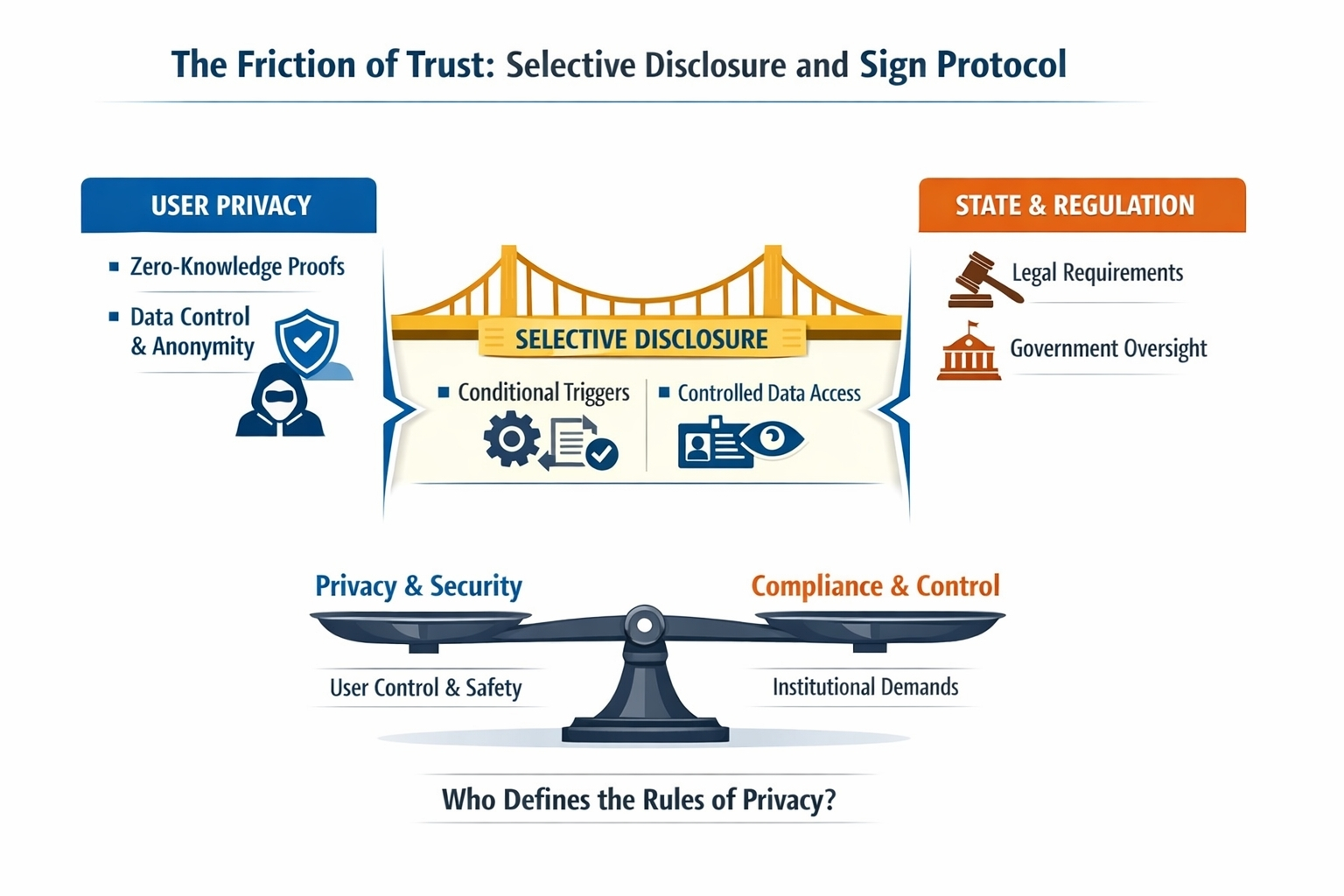

So, the "fix" is something called selective disclosure. Basically, the data stays private until a specific "trigger" happens, such as a tax authority or regulator asking for it, and then only certain pieces of information are revealed, not the whole record.

It sounds like a fair middle ground, right? A bridge between the user and the state.
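To make the idea a bit more concrete, here is a toy sketch of selective disclosure using salted hash commitments. This is the same general pattern that formats like SD-JWT use. It is not Sign Protocol's actual API or scheme, and it is not a zero-knowledge proof. The issuer commits to every attribute, and later the holder reveals only the ones they pick. The function names (`issue`, `present`, `verify`) are my own hypothetical choices. The shared HMAC key is also an assumption: it stands in for a real issuer signature so the sketch stays dependency-free.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical issuer key. A real issuer would use a public-key signature
# (e.g. Ed25519); HMAC keeps this sketch stdlib-only, but it means the
# verifier must share the key, which a real deployment would avoid.
ISSUER_KEY = secrets.token_bytes(32)


def digest(name: str, value: str, salt: str) -> str:
    """Salted hash that commits to one attribute without revealing it."""
    payload = json.dumps([salt, name, value]).encode()
    return hashlib.sha256(payload).hexdigest()


def issue(attributes: dict) -> tuple:
    """Issuer: commit to every attribute and tag the sorted digest list."""
    disclosures = {}
    digests = []
    for name, value in attributes.items():
        salt = secrets.token_hex(16)
        disclosures[name] = (value, salt)
        digests.append(digest(name, value, salt))
    digests.sort()  # sorting hides the original attribute order
    tag = hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()
    credential = {"digests": digests, "tag": tag}
    return credential, disclosures  # the holder keeps `disclosures` private


def present(credential: dict, disclosures: dict, reveal: list) -> dict:
    """Holder: share the credential plus only the chosen attributes."""
    return {"credential": credential,
            "revealed": {n: disclosures[n] for n in reveal}}


def verify(presentation: dict) -> dict:
    """Verifier: check the issuer tag, then check each revealed attribute was committed."""
    cred = presentation["credential"]
    expected = hmac.new(ISSUER_KEY, json.dumps(cred["digests"]).encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, cred["tag"]):
        raise ValueError("credential tag does not match")
    shown = {}
    for name, (value, salt) in presentation["revealed"].items():
        if digest(name, value, salt) not in cred["digests"]:
            raise ValueError(f"attribute {name!r} was not issued")
        shown[name] = value
    return shown


# Usage: the verifier learns "over_18" but never sees the name or balance.
cred, secret = issue({"name": "Ali", "over_18": "true", "balance": "250000"})
print(verify(present(cred, secret, ["over_18"])))  # {'over_18': 'true'}
```

Notice what the code does not decide: which attributes go into `reveal`. In this sketch the holder picks them. Nothing in the math stops a policy, a regulator, or an app from making that choice instead, and that is exactly where the "who decides?" question comes in.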

But that’s where my brain starts to itch. Because once you make privacy conditional, you have to ask: who defines those conditions? Is it the code? Is it a guy in an office in Islamabad? Is it the protocol itself?

It changes what "privacy" actually means in the real world. At first, it feels like I’m the one in control: I decide what to reveal. But when I look closer, I realize the rules are actually shaped by policy and law that sit outside the code.

I’m not saying it’s a bad thing. If you want a system to actually work at a national level, you can’t just ignore the government. That’s just being realistic. You need compliance if you want to be more than just a niche tool for tech geeks.

But it does make the tradeoff very visible. Sign Protocol is trying to solve two problems that pull in opposite directions at the same time: protecting users from data leaks while giving institutions the access they demand.

Both sides get a win, but they aren't getting the same version of privacy.

The cryptography is doing exactly what it’s supposed to do. It’s the human world around it—the laws, the politics, and the local rules—that defines how far that protection actually goes. I’m still trying to figure out where that balance eventually lands. It’s not about whether the system has privacy; it’s about who that privacy actually bends toward when things get complicated.