After a few days of playing around with Binance AI Pro, I tried a small experiment. Nothing too technical, just something I was curious about. Same token, same timeframe, but I changed the way I asked the AI to analyze it. I wanted to see whether the output stayed consistent or shifted depending on how I framed things.

Turns out, it shifted more than I expected.

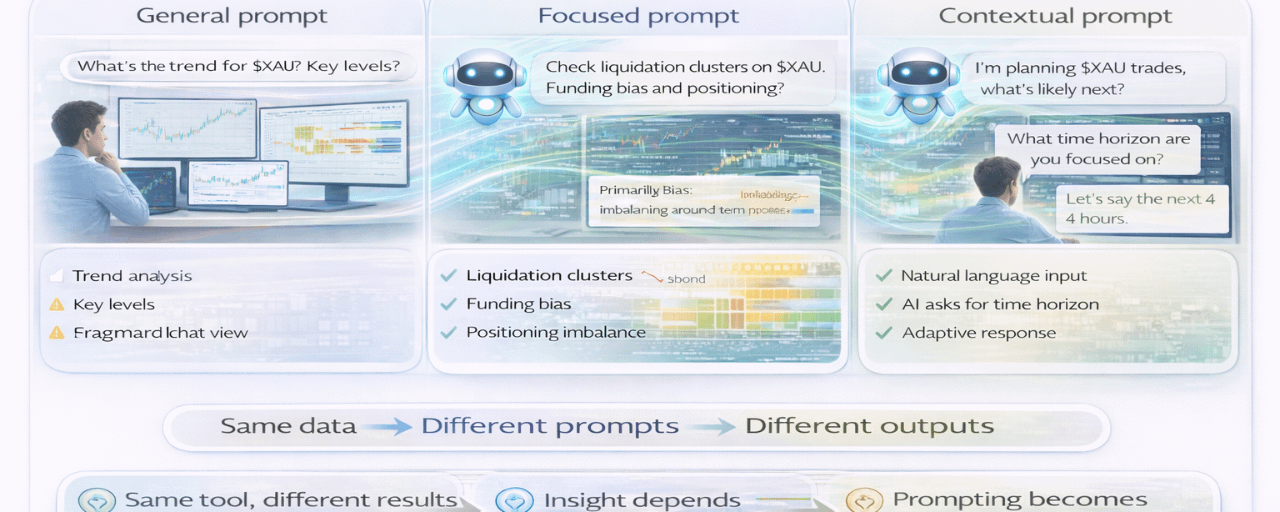

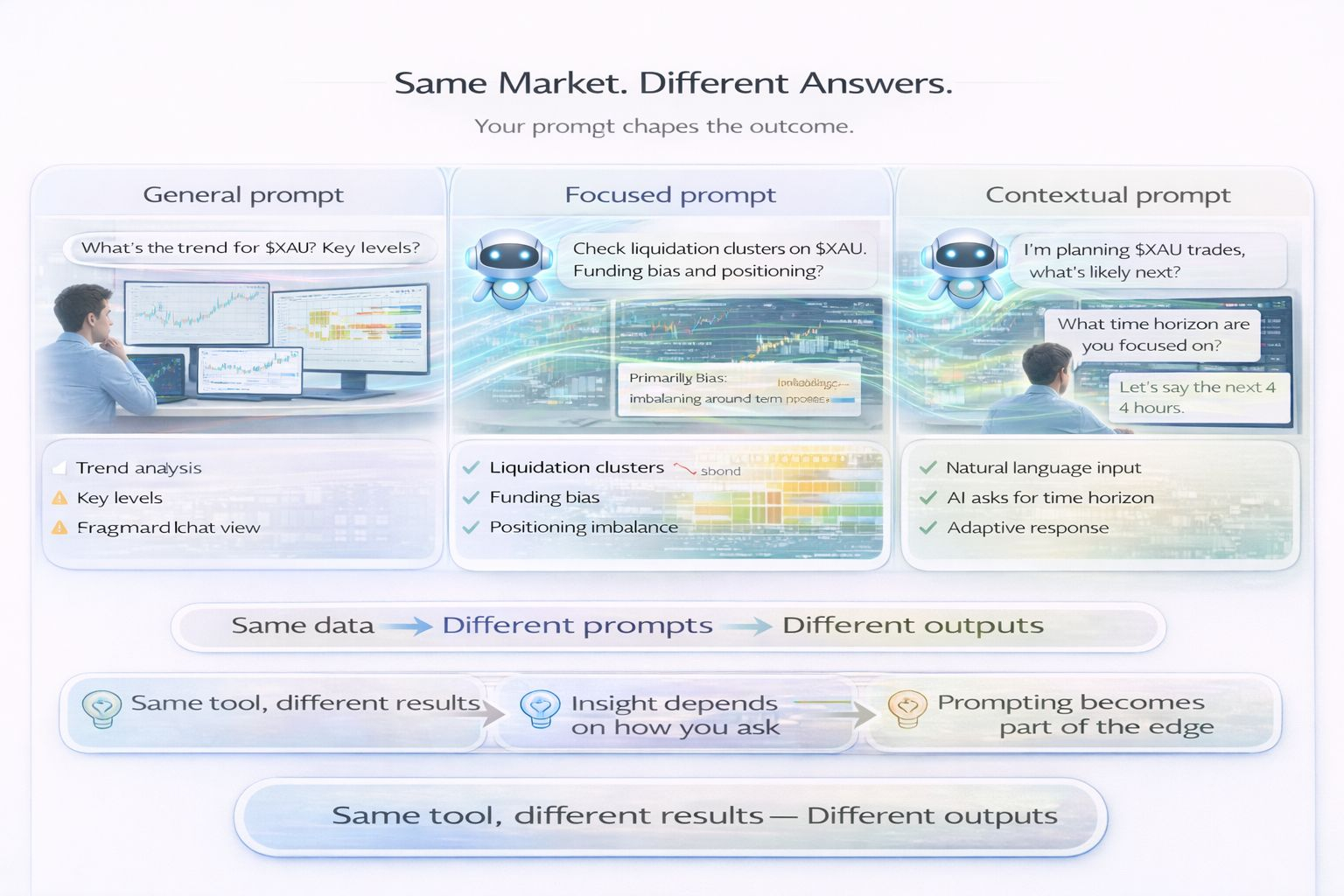

The first prompt was pretty general. I just asked it to break down the current structure and give a read. The response felt familiar, like something I’d get from a quick manual scan. It pointed out the range, highlighted the trend, and mentioned a few levels. Clean, reasonable, but nothing that made me stop and rethink anything. If anything, it confirmed what I already saw.

Then I changed the angle. The second prompt was more focused: I asked about sentiment, funding, and whether there were any noticeable liquidation clusters around the current price. This time the output felt… sharper. It brought up a liquidation zone I had seen earlier but kind of ignored. It also pointed out that the market had been leaning long for a while without price really justifying it. That part stuck with me because I remember noticing it, but I didn’t give it much weight.

So it wasn’t telling me something completely new, but it was rearranging importance in a way I wouldn’t naturally do after staring at the same chart for too long. That difference actually mattered more than I thought.

The third prompt was where things got interesting. I didn’t structure it much, just described what I was seeing in plain language and asked what it thought. Instead of jumping straight into analysis, the AI asked me a question back. It wanted to know my time horizon before giving a directional opinion. That caught me off guard a bit.

It made me realize something. This isn’t really built like a signal machine that spits out answers on command. It behaves more like something that needs context to be useful. And the more context you give, the more tailored the response becomes. Which sounds obvious, but I don’t think most people approach it that way at first.
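For anyone who wants to repeat this kind of comparison, here is a rough sketch of how the three prompt styles could be kept side by side so that only the framing changes. Everything in it is an assumption for illustration: the `PromptContext` class, the function names, and the prompt wording are hypothetical, not the exact prompts from my test, and nothing here calls Binance AI Pro. It only builds the text you would paste in.

```python
from dataclasses import dataclass


@dataclass
class PromptContext:
    """Hypothetical container for what gets told to the AI up front."""
    token: str               # placeholder symbol, e.g. "BTC"
    timeframe: str           # e.g. "4h"
    time_horizon: str = ""   # e.g. "swing"; empty if not stated
    observations: str = ""   # plain-language notes on what you are seeing


def general_prompt(ctx: PromptContext) -> str:
    # Style 1: a broad structure read with no extra context.
    return (f"Break down the current structure of {ctx.token} "
            f"on the {ctx.timeframe} chart and give me a read.")


def focused_prompt(ctx: PromptContext) -> str:
    # Style 2: ask specifically about sentiment, funding, and liquidations.
    return (f"For {ctx.token} on the {ctx.timeframe} chart, what do sentiment "
            "and funding look like, and are there any noticeable liquidation "
            "clusters around the current price?")


def contextual_prompt(ctx: PromptContext) -> str:
    # Style 3: plain-language description, with the time horizon included
    # up front when it is known.
    parts = [f"I'm looking at {ctx.token} on the {ctx.timeframe} chart."]
    if ctx.observations:
        parts.append(f"Here is what I'm seeing: {ctx.observations}")
    if ctx.time_horizon:
        parts.append(f"My time horizon is {ctx.time_horizon}.")
    parts.append("What do you think?")
    return " ".join(parts)


if __name__ == "__main__":
    ctx = PromptContext(
        token="BTC",  # illustrative only, not necessarily the token I tested
        timeframe="4h",
        time_horizon="swing",
        observations="price stuck in a range while positioning leans long",
    )
    for build in (general_prompt, focused_prompt, contextual_prompt):
        print(build(ctx))
        print()
```

Holding the token and timeframe fixed in one object makes it easier to tell whether a different answer came from the framing or from a detail that was accidentally changed between prompts.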

I think a lot of users will treat this like a bot. Input some data, expect a clean output, move on. If that’s the approach, the results will probably feel average. The interaction itself is part of the process here. The way you ask becomes part of your strategy, not just a step before it.

I also started looking into the Skills layer a bit. There are these modules that extend what the AI can do depending on what you’re trying to analyze. Alpha for market reads, derivatives for futures positioning, margin for leveraged setups. But they’re not really pushed in your face when you start. You kind of have to go looking for them.

Which makes me think there’s a gap between what the system can do and what most people will actually use. The ceiling feels high, but the default experience is still pretty basic if you don’t tweak anything.

What keeps coming back to me is how this changes the skillset a bit. Reading charts is still there, managing risk is still there. But now there’s this extra layer: knowing how to ask the right question. Not in a technical sense, more like knowing what you’re actually trying to understand before you ask.

And I guess that’s where the edge might be. Not because the AI is doing something magical, but because some people will get more out of the same tool just by interacting with it differently.

Still early for me, still testing different ways to use it. But it definitely feels deeper than it looks on the surface.

@Binance Vietnam $XAU #BinanceAIPro $BNB $BTC

Trading always involves risk. AI-generated suggestions are not financial advice. Past performance does not guarantee future results. Please check product availability in your region.