Mira Network is a decentralized verification system that aims to make artificial intelligence (AI) more trustworthy. Many of today’s AI tools sound confident but sometimes give wrong or biased answers. That is a serious problem when AI is used for important things like healthcare, legal advice, or financial decisions. Mira’s core idea is to verify AI outputs in a decentralized, secure way, using blockchain and economic incentives, so that the results are more reliable.

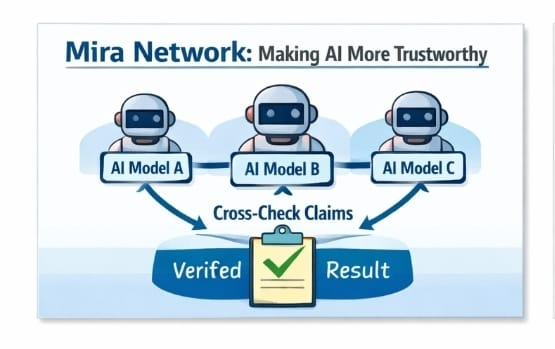

At its heart, Mira doesn’t try to make a single AI model perfect. Instead, it builds a network of independent AI models that check each other’s answers. When someone wants to confirm the truth of a piece of content from an AI, Mira breaks that content down into small, clear claims. Then many different verifier nodes—each running a different AI model—evaluate those claims independently. The system collects their responses and decides which are true based on consensus. Because no single model controls the verdict, the process reduces mistakes caused by one model’s blind spots or quirks.
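The consensus step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Mira’s actual protocol: the `Verdict` type, the `reach_consensus` and `verify_content` functions, the two-thirds threshold, and the toy verifiers are all hypothetical, and the content is assumed to be already split into claims.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical type: a verifier takes one claim and returns True or False.
# In a real network each verifier would wrap a different AI model.
Verifier = Callable[[str], bool]


@dataclass(frozen=True)
class Verdict:
    claim: str
    label: str        # "true", "false", or "undecided"
    agreement: float  # share of verifiers that backed the most common vote


def reach_consensus(claim: str, votes: List[bool], threshold: float = 2 / 3) -> Verdict:
    """Combine independent votes on one claim (the threshold is an assumption)."""
    if not votes:
        raise ValueError("at least one vote is required")
    winner, count = Counter(votes).most_common(1)[0]
    agreement = count / len(votes)
    if agreement >= threshold:
        label = "true" if winner else "false"
    else:
        label = "undecided"  # no side reached the required share
    return Verdict(claim, label, agreement)


def verify_content(claims: List[str], verifiers: List[Verifier],
                   threshold: float = 2 / 3) -> List[Verdict]:
    """Ask every verifier about every claim, then aggregate claim by claim."""
    return [reach_consensus(c, [v(c) for v in verifiers], threshold) for c in claims]


# Toy stand-ins for verifier nodes; real nodes would query AI models.
KNOWN_TRUE = {"Water boils at 100 C at sea level."}
careful = lambda claim: claim in KNOWN_TRUE
also_careful = lambda claim: claim in KNOWN_TRUE
gullible = lambda claim: True  # a model with a blind spot: accepts everything

claims = ["Water boils at 100 C at sea level.", "The Moon is made of cheese."]
verdicts = verify_content(claims, [careful, also_careful, gullible])
for v in verdicts:
    print(v.claim, v.label, round(v.agreement, 2))
```

In this toy run the gullible verifier is outvoted on the second claim, which shows how one model’s blind spot need not decide the verdict.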

All this happens on a blockchain—specifically the Base blockchain—so that the verdicts are recorded in a way that can’t be changed later. This gives users an auditable, tamper-resistant trail showing which claims were checked, how they were checked, and which models agreed on the result.
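To give a feel for why a recorded trail is hard to alter after the fact, here is a minimal local sketch of a hash-linked log. It does not reflect Mira’s or Base’s actual on-chain data format; the record fields, the `GENESIS` value, and the function names are assumptions made purely for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # hypothetical starting value for the first entry


def record_hash(record: dict, prev_hash: str) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_record(log: list, record: dict) -> dict:
    """Append a verdict record, linking it to the previous entry."""
    prev = log[-1]["hash"] if log else GENESIS
    entry = {"record": record, "prev_hash": prev, "hash": record_hash(record, prev)}
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Recompute every link; any edited record breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev:
            return False
        if entry["hash"] != record_hash(entry["record"], prev):
            return False
        prev = entry["hash"]
    return True


log = []
append_record(log, {"claim": "Water boils at 100 C at sea level.", "verdict": "true"})
append_record(log, {"claim": "The Moon is made of cheese.", "verdict": "false"})
intact = verify_log(log)

# Tampering with an earlier verdict is detected when the chain is rechecked.
log[0]["record"]["verdict"] = "false"
tampered_ok = verify_log(log)
```

Because each entry’s hash covers the previous entry’s hash, changing one old record invalidates everything after it, which is the property that makes such a trail auditable.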

The network’s design doesn’t rely on technology alone; it also uses economic incentives to encourage honest work. Node operators (the people or organizations that run the verifier systems) must stake the native MIRA token. When they verify claims accurately, they earn rewards. But if they try to cheat or randomly guess answers, part of their staked tokens can be taken away as a penalty. This combination of rewards and penalties helps keep verifiers careful and honest.
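A small Python sketch can make the reward-and-penalty logic concrete. The numbers and rules here are assumptions chosen for illustration, not Mira’s real parameters: the `Node` type, the `settle` function, the flat reward of 1 token, the 10% slash rate, and the idea of judging a vote by whether it matches the consensus are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Node:
    operator: str
    stake: int  # staked tokens, in whole units for simplicity


def settle(node: Node, vote: bool, consensus: bool,
           reward: int = 1, slash_bps: int = 1000) -> int:
    """Reward a vote that matches consensus, slash one that does not.

    slash_bps is the penalty in basis points of the stake (1000 = 10%).
    Returns the change applied to the node's stake.
    """
    if vote == consensus:
        node.stake += reward
        return reward
    penalty = node.stake * slash_bps // 10_000
    node.stake -= penalty
    return -penalty


def expected_change(stake: int, p_correct: float,
                    reward: int = 1, slash_bps: int = 1000) -> float:
    """Average stake change per claim for a node that is right with p_correct."""
    penalty = stake * slash_bps // 10_000
    return p_correct * reward - (1 - p_correct) * penalty


honest = Node("honest-operator", stake=1000)
guesser = Node("guessing-operator", stake=1000)

honest_delta = settle(honest, vote=True, consensus=True)    # matches consensus
guesser_delta = settle(guesser, vote=False, consensus=True)  # guessed wrong

# Under these assumed numbers, a coin-flipping node loses stake on average.
guessing_average = expected_change(stake=1000, p_correct=0.5)
```

With these assumed parameters, a node that guesses at random on yes/no claims expects to lose far more from slashing than it gains in rewards, which is the intuition behind pairing staking with penalties.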

The MIRA token is the fuel of this ecosystem. It’s used in several ways: paying for verification services, staking to secure the network, and participating in governance decisions about how the system evolves. The total supply of MIRA tokens is fixed at one billion. Only a portion is in circulation at first, with more unlocked gradually over time.

Mira’s approach is especially exciting because it could open the door to truly autonomous AI systems—systems that don’t need humans to double-check everything they produce. By making AI outputs verifiable and auditable, Mira could help companies trust AI with bigger responsibilities, like medical diagnoses or financial compliance tasks, where mistakes can be very costly.

Even though it’s still relatively new, Mira has seen real adoption. It has launched on mainnet, which means it’s live and handling real verification requests, and it’s supported by several exchanges and ecosystem apps that integrate its verification features.

The vision for Mira’s future is to build a “trust layer” for AI—a foundational layer that any AI system can tap into to prove its outputs are reliable. This could change how we build and use AI in critical fields because instead of taking an AI’s answer at face value, we would have a way to independently verify it. That could make AI more useful and safer in the real world.

In short, Mira Network is trying to solve one of the biggest challenges in AI by combining multi-model verification, decentralized consensus, blockchain recording, and economic incentives to make AI outputs more trustworthy and useful for high-stakes applications.