Midnight Network keeps pulling me back for a simple reason.

The problem it is trying to solve is real.

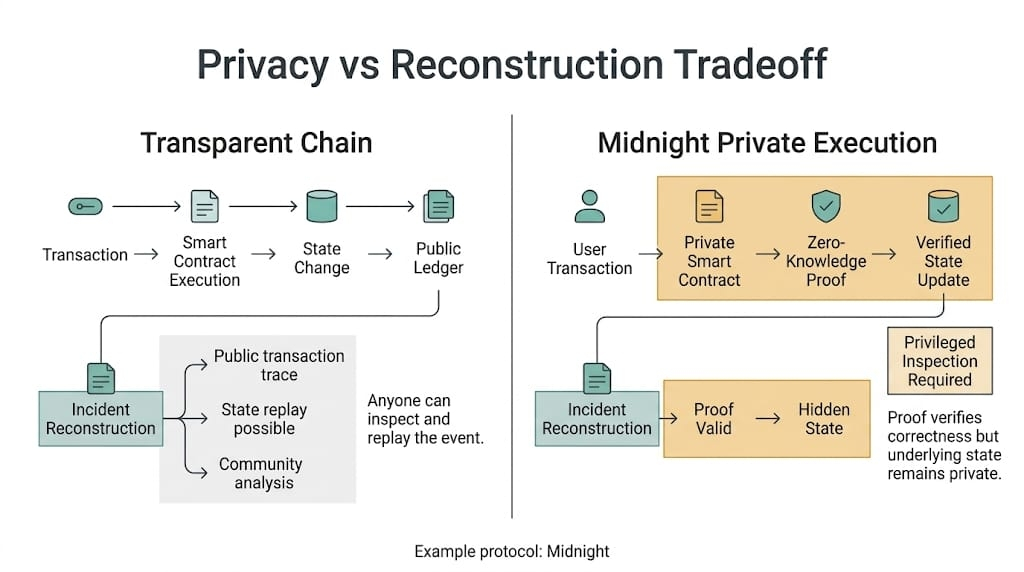

Public chains are good at one thing people in crypto like to romanticize: visible state. You can inspect it. Replay it. Argue over it in public. That works fine when the main activity is speculation dressed up as infrastructure.

That model gets weaker once the thing on-chain is payroll, credit logic, internal treasury movements, business data, customer identity, or anything else that was never supposed to become public theater just because it touched a blockchain.

That part is obvious.

The harder part is what happens later.

Midnight’s pitch makes sense. Privacy built into the architecture. Zero-knowledge proofs doing the heavy lifting from the start instead of getting stapled on afterward. A programmable environment where confidentiality is part of the system, not an apology added after launch.

Fine.

The real question starts after a failure.

Not whether privacy matters. It does.

What matters is who can still explain the system once privacy is working exactly as intended and something goes wrong anyway.

Say a Midnight (@MidnightNetwork) app proves collateral sufficiency without exposing the whole balance sheet. Good. That’s the kind of thing private smart contracts are supposed to make possible.

Now say funds move somewhere they shouldn’t.

Not because the privacy layer failed. Not because the proof was fake. Because the contract logic around the proof had a weak assumption, a bad edge case, a branch nobody respected enough until someone leaned on it with size.

That’s where private systems stop sounding elegant and start asking for a chain of accountability.

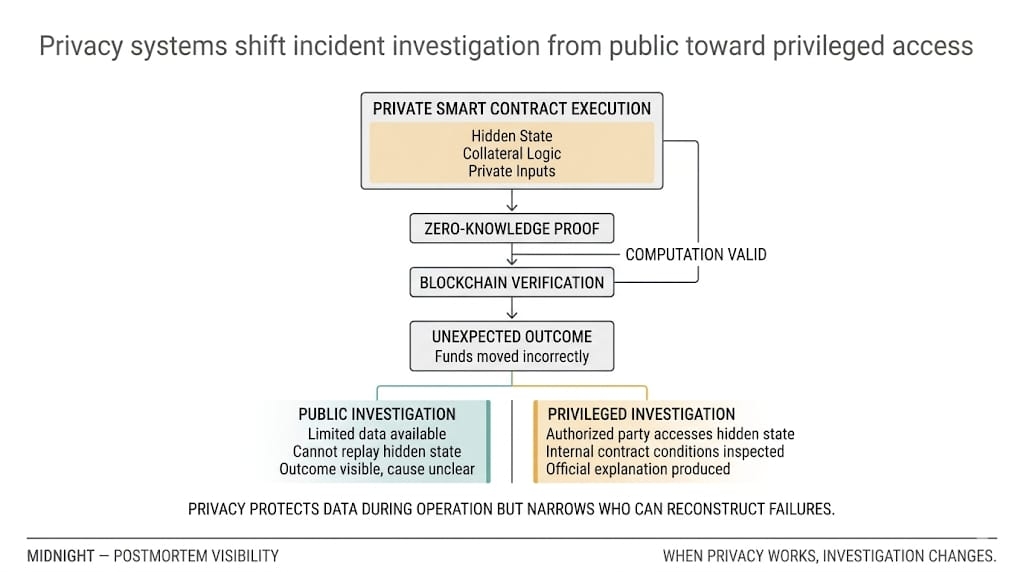

On a transparent chain, an incident is ugly but legible. People can reconstruct the path. They may get parts of it wrong, sure, but the evidence is at least contestable in public.

Midnight changes that trade.

The proof can still verify. The system can still say the computation was valid. And users can still be left staring at an outcome they cannot independently replay in any meaningful way.

That’s the pressure point.

Proofs only cover the thing inside the proof boundary. They do not rescue bad contract design. They do not tell you whether the modeled condition was the right one to enforce. They do not automatically create a public forensic trail after a failure.
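To make the proof-boundary point concrete, here is a toy sketch in Python. It is not Midnight code and there is no real zero-knowledge math in it: `Proof` is a stand-in object, and every name here (`prove_collateral_at_least`, `approve_loan_buggy`, `approve_loan_fixed`, the 2x ratio) is a made-up assumption for illustration. The proof itself is honest in both cases. Only the contract logic around it differs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proof:
    """Stand-in for a ZK proof: it attests to one public statement and nothing else."""
    statement: str  # the public claim the proof covers
    valid: bool     # what a real verifier would compute; simulated here


def prove_collateral_at_least(private_balance: int, threshold: int) -> Proof:
    # The balance stays private; only the claim about the threshold is public.
    return Proof(
        statement=f"collateral >= {threshold}",
        valid=private_balance >= threshold,
    )


def approve_loan_buggy(proof: Proof, loan_amount: int) -> bool:
    # Weak assumption: "a valid collateral proof exists" is treated as
    # "collateral is sufficient for THIS loan". The threshold is never checked.
    return proof.valid


def approve_loan_fixed(proof: Proof, loan_amount: int, ratio: int = 2) -> bool:
    # Bind the proven statement to the loan actually being requested.
    required = f"collateral >= {loan_amount * ratio}"
    return proof.valid and proof.statement == required


# An honest proof that a private balance of 1_000 covers a threshold of 500.
proof = prove_collateral_at_least(private_balance=1_000, threshold=500)

small_loan_ok = approve_loan_fixed(proof, loan_amount=250)        # 250 * 2 == 500
big_loan_buggy = approve_loan_buggy(proof, loan_amount=10_000)    # proof verifies, loan is far too large
big_loan_fixed = approve_loan_fixed(proof, loan_amount=10_000)    # statement does not match
```

In the buggy path nothing about the proof is fake. It verifies. The failure lives entirely in what the contract chose to ask the proof to say.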

So the real problem is not privacy.

It is postmortem authority.

Who gets privileged visibility after an incident.

Who can inspect the hidden state.

Who can say what actually happened.

Who has to accept that version because no one else can reproduce it.

That is where crypto gets uncomfortable.

Because once the curtain only lifts for a narrow group, accountability stops feeling native to the system and starts depending on process, disclosure policy, and institutional trust. Maybe not all the way back to “just trust us.” But definitely closer than people want to admit when they market privacy as if it settles the whole question.

And still, the answer is not to retreat back to full transparency. That model has its own ceiling, and it gets uglier the moment real businesses or real users have to expose things they should never have had to expose.

So no, I don’t think Midnight is solving a fake problem.

I think it is solving a real one and inheriting a second one at the same time.

Privacy can protect users during normal operation. It can also make incident explanation narrower, slower, and more permissioned once something breaks.

That doesn’t kill the model.

It just means the real test is no longer whether Midnight can hide sensitive state.

It’s whether Midnight can define, in advance and credibly, who gets to lift the curtain later, and under what rules, when the system fails and the official story is no longer enough.
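One way to picture "in advance and credibly" is a disclosure policy whose hash is published before anything breaks, so nobody can quietly rewrite the rules after an incident. The sketch below is purely hypothetical: the roles, the quorum, and the function names are my assumptions, not anything Midnight has specified.

```python
import hashlib
import json

# Hypothetical policy, fixed before any incident. All fields are illustrative.
POLICY = {
    "reviewers": ["auditor", "operator", "user_representative"],
    "quorum": 2,
    "scope": "state touched by the disputed transaction",
}


def policy_commitment(policy: dict) -> str:
    """Hash a canonical JSON encoding so anyone can check the rules later."""
    encoded = json.dumps(policy, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(encoded).hexdigest()


# Published up front, for example alongside the contract.
PUBLISHED_COMMITMENT = policy_commitment(POLICY)


def may_disclose(policy: dict, approvals: set[str], published: str) -> bool:
    # Reject any policy that does not match what was committed to in advance.
    if policy_commitment(policy) != published:
        return False
    # Count only approvals from reviewers named in the policy.
    recognized = approvals & set(policy["reviewers"])
    return len(recognized) >= policy["quorum"]


two_reviewers = may_disclose(POLICY, {"auditor", "operator"}, PUBLISHED_COMMITMENT)
operator_alone = may_disclose(POLICY, {"operator"}, PUBLISHED_COMMITMENT)

# After the fact, someone tries to lower the quorum to 1.
tampered = dict(POLICY, quorum=1)
tampered_attempt = may_disclose(tampered, {"operator"}, PUBLISHED_COMMITMENT)
```

This does not remove the trust problem. It only moves it to a place where the rules are visible before anyone has a reason to bend them.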

And if you don’t know Midnight Network:

Midnight is a privacy-first chain from IOG where zero-knowledge proofs enable selective disclosure, and a dual-token system separates governance ($NIGHT) from network fees (DUST).