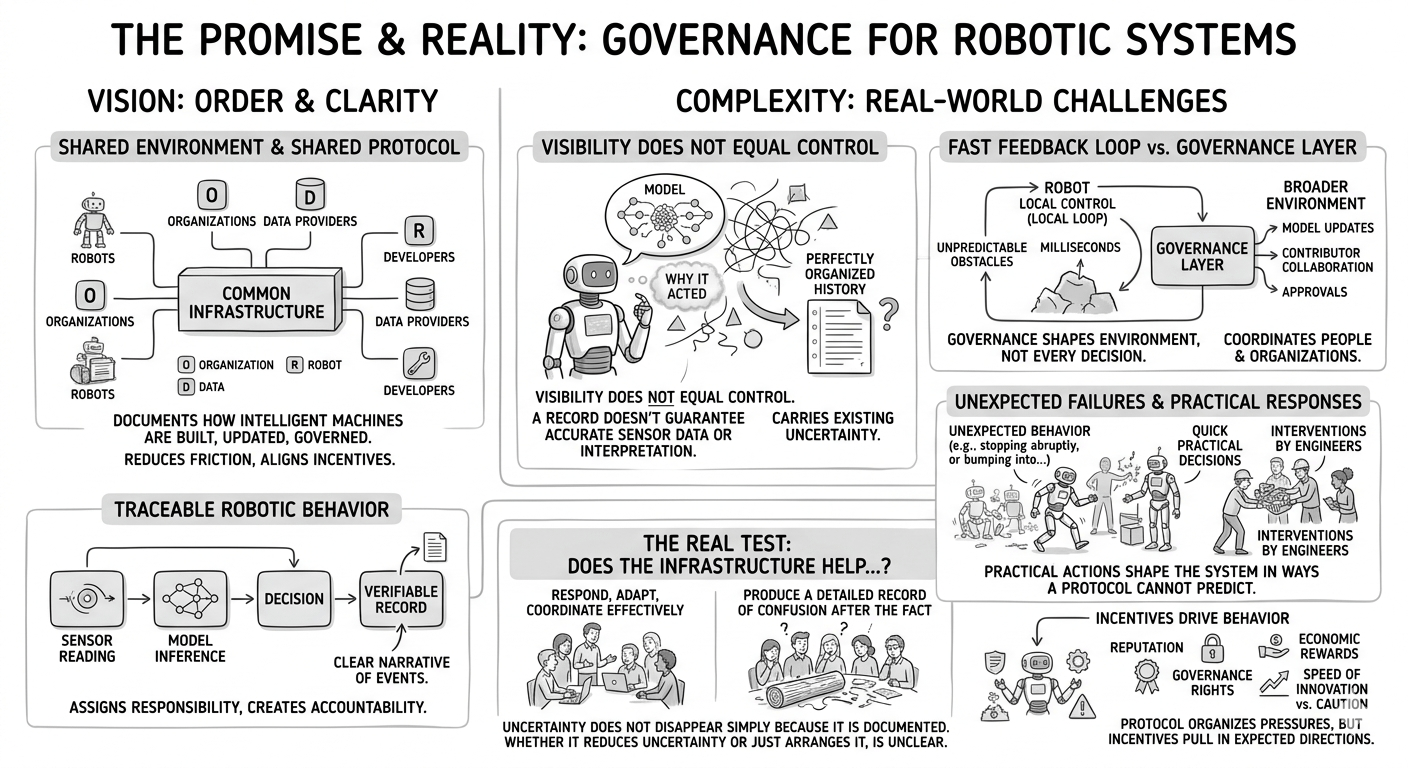

When people imagine the future of robotics, the language often sounds very polished. Everything is supposed to become transparent, traceable, and well-organized. Machines will not only act, they will also leave behind a clear record of why they acted. Decisions will be verifiable. Responsibility will be easier to assign. In that vision, systems like Fabric Protocol appear almost like a missing layer that finally brings order to a complicated technological world.

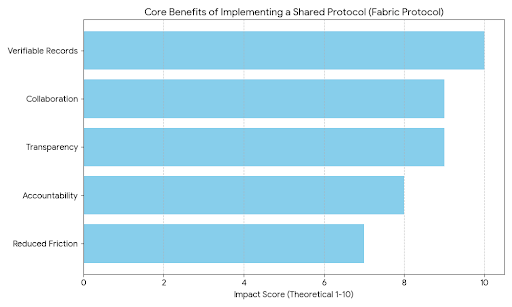

The idea is simple on the surface. Robots, developers, data providers, and organizations operate within a shared environment where actions and computations can be verified and recorded. Instead of every company running its own isolated systems, there would be a common infrastructure that documents how intelligent machines are built, updated, and governed. Behind the effort sits the Fabric Foundation, which frames the project as open infrastructure designed to make collaboration around robotics easier and safer.

It is not hard to see why the concept attracts attention. Robotics today is scattered across many different ecosystems. Each organization builds its own hardware, collects its own data, trains its own models, and develops its own operating rules. When problems happen—and they do—the story of what actually went wrong is rarely simple. Logs are incomplete. Responsibility becomes blurry. Teams argue over which component failed or which decision triggered the outcome. A shared protocol that records activity in a consistent way promises to remove a lot of that confusion.

But once the idea moves beyond the surface, the situation becomes more complicated. A system can record what happened without necessarily explaining why it happened. A robot might log a sensor reading, run a model, and produce a decision that gets permanently documented. Yet the record itself does not guarantee that the sensor captured reality accurately or that the model interpreted the information correctly. The system can produce a perfectly organized history while still carrying the same uncertainty that existed in the real world.
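To make that gap concrete, here is a minimal sketch of a tamper-evident log. It is not Fabric Protocol's actual design; the hash-chain approach, the function names (`record_hash`, `append`, `verify`), and the record fields are all assumptions chosen for illustration. The point is that verification confirms the history was not altered, not that the sensor reading inside it was true.

```python
import hashlib
import json

GENESIS = "genesis"  # hypothetical starting value for the chain


def record_hash(prev_hash: str, payload: dict) -> str:
    """Hash a payload together with the hash of the previous entry."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + body).hexdigest()


def append(log: list, payload: dict) -> None:
    """Add an entry that is linked to the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "payload": payload,
        "prev": prev_hash,
        "hash": record_hash(prev_hash, payload),
    })


def verify(log: list) -> bool:
    """Check that no entry was altered or reordered after it was written."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != record_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
# Suppose the sensor is faulty and the obstacle is really much closer.
# The log has no way to know that; it stores what it was given.
append(log, {"step": "sensor", "distance_m": 4.2})
append(log, {"step": "model", "obstacle_detected": False})
append(log, {"step": "decision", "action": "proceed"})

print(verify(log))  # integrity holds even if the reading was wrong
```

Editing any stored payload afterward makes `verify` fail, which is the useful property. What the check cannot do is tell a correct reading from a wrong one that was faithfully recorded.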

That is where the deeper tension of the project quietly appears. The protocol seems designed to make robotic behavior easier to see and understand. Turning actions and computations into verifiable records gives organizations something solid to point to. It helps create accountability. It also makes cooperation between companies easier because everyone is working from the same version of events. Still, visibility and control are not the same thing. Sometimes making a system more visible simply makes it feel more predictable, even if the underlying complexity has not really changed.

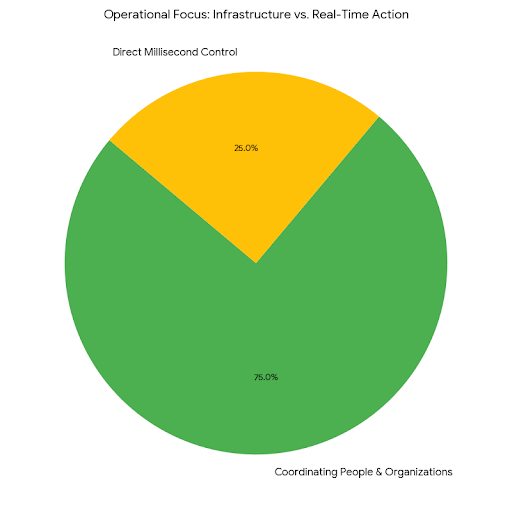

Another layer of reality appears when you consider how robots actually function. Most robotic systems operate in fast feedback loops. They rely on immediate responses to changing environments: adjusting movement, interpreting sensor input, or reacting to unexpected obstacles. These decisions often happen in milliseconds. A governance layer built around shared infrastructure cannot realistically sit inside every one of those loops. The machines still need local control to function safely and efficiently.
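One way to picture that separation is a loop that decides locally and only queues a record for later publication. This is a sketch under assumptions, not a description of how Fabric Protocol works: `control_step`, `run_loop`, `flush`, the 0.5 m threshold, and the `publish` callback are all hypothetical names and values.

```python
from collections import deque


def control_step(distance_m: float, stop_below_m: float = 0.5) -> str:
    """Local, immediate decision. Nothing here waits on shared infrastructure."""
    return "stop" if distance_m < stop_below_m else "proceed"


def run_loop(readings, pending: deque) -> list:
    """Act on each reading right away and queue a record for later."""
    actions = []
    for tick, distance_m in enumerate(readings):
        action = control_step(distance_m)
        actions.append(action)
        # Appending to an in-memory queue is cheap; publishing happens elsewhere.
        pending.append({"tick": tick, "distance_m": distance_m, "action": action})
    return actions


def flush(pending: deque, publish) -> int:
    """Hand queued records to a slower layer, outside the control loop."""
    count = 0
    while pending:
        publish(pending.popleft())
        count += 1
    return count


pending = deque()
actions = run_loop([2.0, 0.3, 1.0], pending)

published = []                            # stand-in for a shared record store
sent = flush(pending, published.append)   # runs after the loop, not inside it
```

The design choice is that the record trails the action. The robot never blocks on the shared layer, which also means the shared layer learns about a decision only after it has been made.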

Because of that, the protocol becomes something slightly different from what the original vision might suggest. It does not control every robotic decision. Instead, it shapes the broader environment around those decisions. It organizes how updates are approved, how models are shared, how contributors collaborate, and how responsibility is documented. In many ways, it is less about controlling robots directly and more about coordinating the people and organizations building them.

Incentives naturally follow once that kind of shared infrastructure exists. When multiple actors participate in a common system, reputation, governance rights, and economic rewards begin to influence behavior. Ideally, those incentives encourage careful development and responsible deployment. But incentives also have a habit of pulling systems in unexpected directions. Developers might prioritize speed of innovation over caution. Contributors might focus on visibility and influence within the system rather than long-term reliability. The protocol does not eliminate those pressures; it simply organizes them within a new framework.

Edge cases tend to reveal how strong a system really is. Imagine thousands of autonomous machines interacting across a large environment where human behavior is unpredictable. Patterns could emerge that no single designer anticipated. A shared ledger could help reconstruct what happened afterward, offering a clear narrative of events. That kind of transparency would be useful for investigation and accountability. Yet it would not necessarily prevent the chain of events from happening in the first place.

There is also the everyday reality of operating machines in the physical world. Engineers working with real robots often make quick, practical decisions when something behaves unexpectedly. They intervene, adjust parameters, or temporarily override systems to stabilize a situation. Those actions are part of responsible operations, but they do not always fit neatly inside formal governance structures. The more complex the environment becomes, the more these practical responses shape the system in ways that no protocol can fully predict.

None of this means the underlying idea lacks value. In fact, its most realistic contribution may be much quieter than the original vision suggests. By creating a shared record of actions, decisions, and contributions, the protocol can reduce certain kinds of friction that have slowed collaboration in robotics for years. When organizations can easily verify where data came from, who approved a model, or how a decision was made, partnerships become easier to manage. Projects can scale faster because the cost of coordination drops.
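A provenance check of that kind might look roughly like the sketch below. The `Manifest` fields, the `check` function, and the example values are assumptions made for illustration; the source does not describe the protocol's actual record format.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Manifest:
    """Hypothetical shared record describing one model artifact."""
    artifact_sha256: str
    data_source: str
    approved_by: str


def fingerprint(artifact: bytes) -> str:
    """Content hash used to tie a record to one exact artifact."""
    return hashlib.sha256(artifact).hexdigest()


def check(artifact: bytes, manifest: Manifest, trusted_approvers: set) -> list:
    """Return a list of problems; an empty list means the record checks out."""
    problems = []
    if fingerprint(artifact) != manifest.artifact_sha256:
        problems.append("artifact does not match recorded hash")
    if manifest.approved_by not in trusted_approvers:
        problems.append("approver is not trusted")
    return problems


model = b"hypothetical model weights"
manifest = Manifest(
    artifact_sha256=fingerprint(model),
    data_source="example-lidar-dataset",  # illustrative label only
    approved_by="alice",                  # illustrative approver name
)

print(check(model, manifest, {"alice"}))  # no problems for a matching artifact
```

A partner organization could run a check like this without calling anyone, which is where the drop in coordination cost would come from. It still only confirms that the record and the artifact agree, not that the approval was a good decision.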

Still, uncertainty does not disappear simply because it is documented. Some uncertainties come from the unpredictable nature of physical environments. Others come from the behavior of learning systems that evolve over time. A protocol can help structure how those uncertainties are handled, but it cannot erase them completely.

That is why the real test of a system like this will never be the clarity of its design or the elegance of its narrative. The real test will arrive in situations where pressure builds—when unexpected failures appear, when incentives collide, or when thousands of autonomous systems interact in ways nobody planned. In those moments the question becomes very practical: does the infrastructure help people respond, adapt, and coordinate more effectively, or does it simply produce a detailed record of confusion after the fact?

For now, the project feels less like a finished answer and more like an attempt to reshape the conditions around a difficult problem. Its promise lies in organizing complex interactions into something that can be understood and governed. Whether that organization truly reduces uncertainty, or simply arranges it into a more readable form, is something that will only become clear once the system faces real-world pressure. If it holds together when those pressures appear, it could quietly change how robotic ecosystems grow. If it does not, the records will still be there—carefully documenting a system that once looked far more controlled than it actually was.