I remember the first time I hesitated before signing a transaction. Not because I didn’t understand it, but because it felt like it said so much about me.

The system worked as designed, yet it felt like I was being exposed in a way that I hadn’t agreed to.

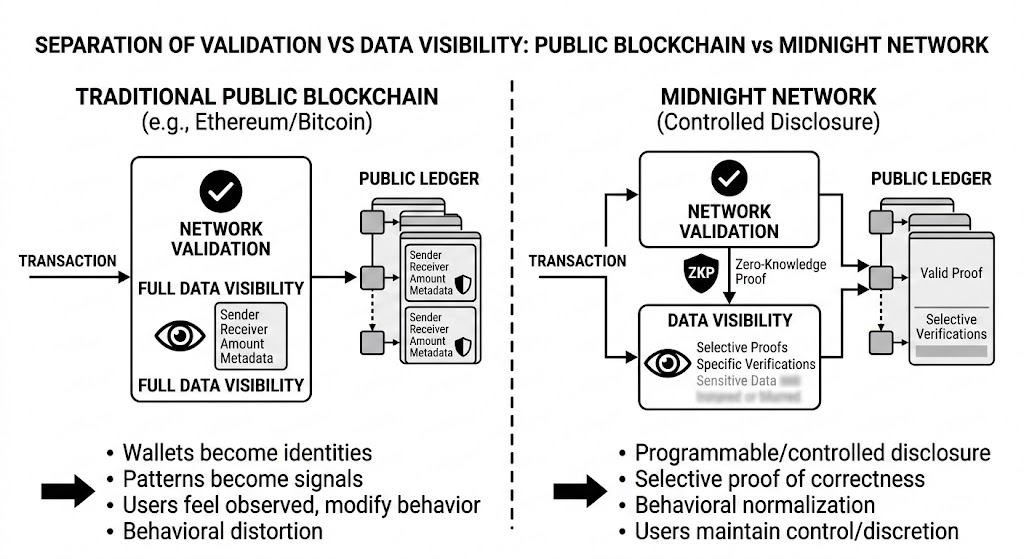

What began to feel off wasn’t the technology; it was how the technology shaped behavior. Wallets became identities, patterns became signals, and people started adjusting not for strategy, but for visibility.

Transparency, in theory, builds trust. In practice, it creates self-awareness. And self-aware participants rarely act naturally.

I started noticing how people fragmented their activity, delayed actions, and avoided interactions. Not out of malice, but because they knew they were being observed.

Public blockchains don’t just show transactions; they reveal context. Metadata, frequency, relationships. Over time, this builds a behavioral map far deeper than anyone intended.

It’s not that the system is flawed. It’s that it reveals more than people can comfortably sustain.

And when people feel watched, they don’t become more honest; they become more careful.

That’s when I began questioning something I had long assumed: does more transparency actually lead to better systems?

I came across the idea of controlled disclosure through @MidnightNetwork almost by accident. At first, it felt counterintuitive, like a step back.

Why would a system restrict visibility? Doesn’t that break trust?

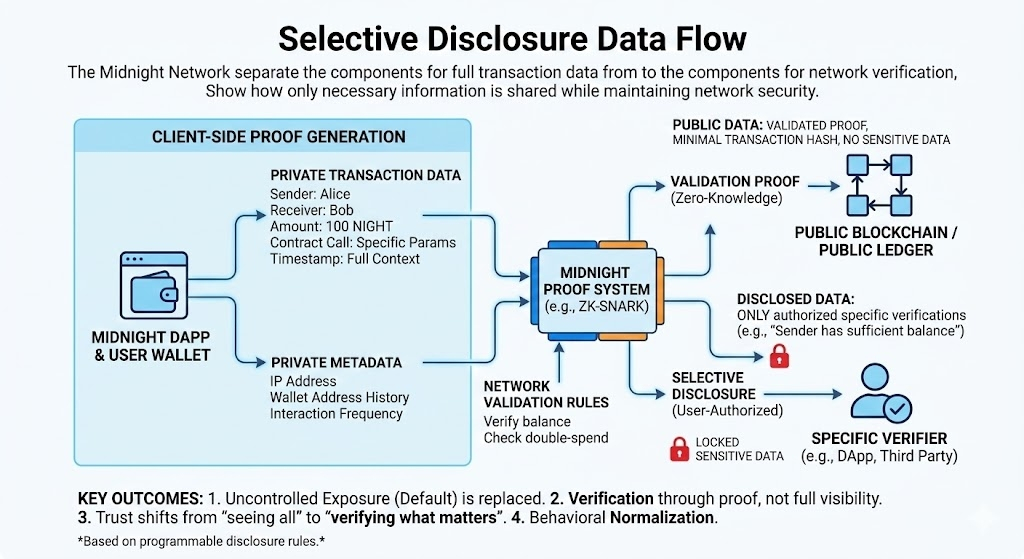

But then it clicked. It’s not about hiding information; it’s about proving correctness without leaking that information.

It’s a subtle distinction, but it flips the whole picture.

What #night introduces isn’t opacity; it’s programmable disclosure. Using zero-knowledge proofs, an action can be validated without revealing its full context.

At first, this felt like a small adjustment. But the behavioral implications were much larger.

What stood out wasn’t the technology; it was the shift in assumptions about how people behave.

In a controlled disclosure system, users don’t need to simulate privacy. They don’t need to fragment identity or optimize for perception.

The system removes exposure as the default constraint.

And when exposure is no longer forced, behavior begins to normalize.

The reason this works is psychological as much as technical. People don’t want to hide everything; they want control over what is revealed.

There’s a difference between secrecy and discretion.

Midnight seems to be built around that distinction.

Trust, in this model, shifts from “seeing everything” to “verifying what matters.” And that’s a more scalable, realistic form of trust.

For builders, this changes the design space entirely. Applications are no longer forced into full transparency; they can be built around selective proofs.

Disclosure becomes conditional, not permanent. Visible when required, silent otherwise.

That nuance quietly unlocks new types of systems.

And from a market perspective, it reduces behavioral distortion. When participants aren’t constantly being observed, they stop optimizing for how they appear.

They start acting more naturally.

What I began to understand is that this points to a bigger shift.

The early days of crypto were about radical transparency and proving that open systems could work.

But that question has largely been answered.

The market has moved forward.

The question is no longer “can everything be visible?” but “should everything be visible?”

Midnight doesn’t reject transparency; it refines it. It introduces control where there was previously none.

Because the real issue was never transparency itself.

It was the absence of choice within it.

Controlled disclosure resolves that tension by aligning systems with how people naturally behave: selectively, contextually, intentionally.

It removes the need for workarounds and replaces them with structural guarantees.

I found myself wondering: what would markets look like if people didn’t feel constantly observed?

Not hidden, just not exposed by default.

I suspect they would feel less performative and more honest.

At first, this idea felt like a compromise. Now, it feels like a correction.

We assumed more visibility would lead to better systems. But sometimes, better systems require better boundaries.

Controlled disclosure doesn’t remove trust; it reshapes it.

Not through constant exposure, but through the ability to prove, precisely when needed, that something is true.

In the end, the evolution isn’t from transparency to privacy.

It’s from uncontrolled exposure to intentional disclosure.

And the systems that understand that shift may do more than change markets. They may change how people choose to participate in them.