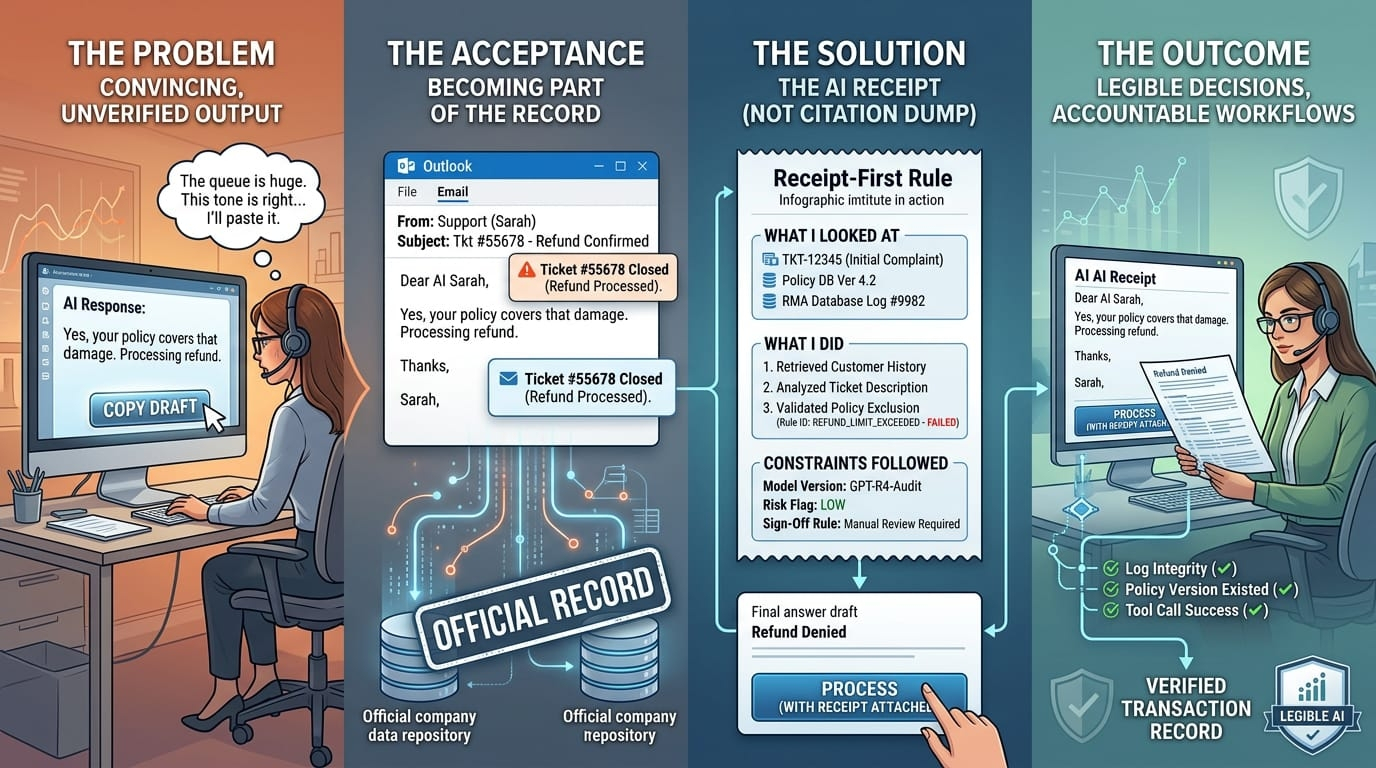

The most dangerous thing about a convincing AI answer is how quickly it becomes part of the record.

It starts small. A support rep pastes a draft into an email because the tone is right and the queue is long. The words travel. They pick up authority as they go, not because they’re proven, but because they’re written down.

That’s why the idea of a “receipt” matters. Not as a metaphor, but as a discipline.

In normal life, receipts are how we live with imperfect trust. A receipt doesn’t make the waiter honest or the store fair. It just gives you something to point at later. A time. A place. A price. A transaction ID. Enough structure that if the story changes, you can prove it changed.

AI answers rarely come with that structure. They arrive as finished products: clean sentences, confident verbs, tidy conclusions.

So imagine a different rule. Imagine that before an AI system could “ship” an answer—before it could send the email, reject the application, approve the refund, escalate the alert—it had to attach a receipt.

Not a citation dump. Not a vague “sources available upon request.” A real receipt: what it looked at, what it did, and what constraints it claims to have followed.

In a customer support setting, that could mean the draft response carries the ticket IDs it referenced, the policy article version it used, and the specific product logs it checked. In a finance setting, it might include the ledger entries and the risk rule IDs that triggered the recommendation. In a healthcare admin workflow, it might show which billing codes were matched, which notes were used, and what it chose to ignore because the data was missing or ambiguous.
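To make that concrete, here is a minimal sketch of what a receipt might look like as a data structure, with a gate that refuses to ship an answer without one. Everything here is hypothetical: the field names, the `Receipt` and `Answer` types, and the `ship` function are illustrations of the idea, not an existing standard or library.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Receipt:
    # What the system looked at: ticket IDs, policy article versions, log references.
    inputs: list[str]
    # What it did: tool calls or checks, in the order they ran.
    actions: list[str]
    # Constraints it claims to have followed (a claim, not a proof).
    constraints: list[str]
    # What it could not verify, or ignored because data was missing or ambiguous.
    unverified: list[str] = field(default_factory=list)
    issued_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


@dataclass
class Answer:
    text: str
    receipt: Optional[Receipt] = None


def ship(answer: Answer) -> str:
    """Serialize the answer together with its receipt, or refuse to ship."""
    r = answer.receipt
    if r is None or not r.inputs or not r.actions:
        raise ValueError("no receipt: answer cannot be shipped")
    return json.dumps({"text": answer.text, "receipt": asdict(r)}, sort_keys=True)
```

The `unverified` list is the interesting part. It gives “I can’t verify that” a place to live, so the gap is recorded instead of smoothed over in the prose.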

This is not science fiction. Parts of it already exist in fragments—tool-call logs, retrieval citations, model versioning, approval workflows, audit trails. The gap is that we treat those fragments as internal telemetry instead of as part of the answer. We let the system speak in public and keep its working papers in a back room, if they exist at all.

A receipt-first rule forces the opposite. It makes the working papers the point.

You can feel how this would change the tone of AI systems immediately. A model that knows it needs to show its work becomes less freewheeling. It’s nudged toward claims it can support. It becomes more likely to say, plainly, “I can’t verify that,” because “I can’t verify that” is at least an honest line item on a receipt. It also becomes easier to challenge. Not emotionally—no more arguing with a chatbot like it’s a person—but procedurally. If a customer says, “That’s not what your policy says,” you can pull the policy version the AI referenced. If a regulator asks why a decision was made, you can point to the inputs and the rule path, not just the output prose.

Of course, there’s a reason we don’t already do this everywhere. Receipts cost money.

If the receipt includes sensitive data, someone has to redact it or gate it. If the receipt includes too little, it’s theater. If it includes too much, it becomes unusable and potentially dangerous.

There’s also the speed tradeoff. People like AI because it’s fast. Receipts add friction. They can add compute, too, especially when the system has to run checks, retrieve documents deterministically, or validate that a claim matches an underlying record. In a low-stakes setting, the friction will feel like overkill. In a high-stakes one, it will feel like overdue hygiene.

The hard part is deciding where the line is.

You don’t need a receipt to brainstorm taglines. You probably do need one when an AI system denies someone’s insurance claim. You might not need one for a first-pass code suggestion. You absolutely need one if the AI is allowed to merge into a production branch automatically. The word “allowed” does a lot of work here. Receipts matter most when models stop being assistants and start being actors.
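One rough way to write that line down, as a sketch only: treat a receipt as required whenever the model’s output takes effect without review, or decides something about a person. The two flags and the `Task` type below are my simplification for illustration; a real policy would need more dimensions than this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    # The output takes effect with no human in between (the model is an actor).
    acts_without_review: bool
    # The outcome decides something about someone, such as a claim or an application.
    affects_a_person: bool


def receipt_required(task: Task) -> bool:
    """Hypothetical rule of thumb: actors and person-affecting decisions need receipts."""
    return task.acts_without_review or task.affects_a_person


tasks = [
    Task("brainstorm taglines", acts_without_review=False, affects_a_person=False),
    Task("deny insurance claim", acts_without_review=False, affects_a_person=True),
    Task("first-pass code suggestion", acts_without_review=False, affects_a_person=False),
    Task("auto-merge to production", acts_without_review=True, affects_a_person=False),
]
needs_receipt = [t.name for t in tasks if receipt_required(t)]
```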

Then comes the question that makes people uneasy: who verifies the receipt?

Internal verification is the obvious starting point. Your company already has compliance teams, auditors, security reviews, and incident response playbooks. A receipt can plug into those. It can make audits faster and failures more diagnosable. It can also become a liability if it exposes the mess behind the curtain. Many organizations prefer plausible deniability to detailed accountability, right up until the day they can’t.

Decentralized verification enters when you want the receipt to be more than an internal artifact. If an AI output is going to be treated as evidence outside your organization—by partners, customers, or regulators—you may not want to be the only party asserting that the receipt is real and complete. A network of independent verifiers could, in principle, check that the receipt’s references are consistent, that the logs haven’t been altered, that the claimed policy version existed at the time, that the tool calls succeeded, that the result matches the stated rules. It’s the same instinct behind independent audits, but expressed in infrastructure rather than in a single firm’s letterhead.
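The “logs haven’t been altered” check is the most mechanical of these, and a hash chain is one common way to approach it. The sketch below is an assumption about how it could be done, not a description of any particular verification network: each log entry is hashed together with the hash before it, so changing any earlier entry changes every hash after it. The function names and the `GENESIS` value are made up for illustration.

```python
import hashlib
import json

# Hypothetical starting value for the chain; any fixed, agreed-upon string works.
GENESIS = "0" * 64


def entry_hash(prev_hash: str, entry: dict) -> str:
    """Hash one log entry together with the hash of everything before it."""
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


def seal(entries: list[dict]) -> list[str]:
    """Return the running chain of hashes for a list of log entries."""
    chain = []
    prev = GENESIS
    for entry in entries:
        prev = entry_hash(prev, entry)
        chain.append(prev)
    return chain


def verify(entries: list[dict], chain: list[str]) -> bool:
    """Recompute the chain from the entries and compare it to the published one."""
    return len(entries) == len(chain) and seal(entries) == chain
```

A verifier who holds the published chain can detect an edited, removed, or reordered entry. What this cannot show is that the entries were true or complete when they were first written, which is why the other checks in the list still matter.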

That approach has its own risks. Verifiers need incentives, or they don’t show up. But incentives can be gamed, and then they become a new market for rubber-stamping. Verifiers need access, or they can’t verify. But access can leak, and then you destroy the privacy you were trying to preserve. The moment you say “decentralized,” you inherit a new set of failure modes: collusion, spam, disputes that drag on, and the quiet problem of people verifying only what’s easiest to verify.

Still, the direction is compelling for a simple reason: AI outputs are starting to function like decisions, and decisions need governance. Not vibes.

A receipt won’t make AI infallible. It won’t stop a model from being confidently wrong. It won’t solve the deeper philosophical problem of how to encode judgment. What it can do is make the system legible. It can give you something concrete to interrogate when the answer causes harm. It can turn “the model said so” into “here’s what the model relied on, here’s what it did, and here’s who signed off.”

That’s what evidence looks like in the real world. Not perfection. Paper trails that can withstand pressure.

If AI needed a receipt before it shipped an answer, we’d get fewer magical moments. We’d also get fewer untraceable disasters. And as AI moves from producing text to moving money, approving access, and triggering actions, that trade starts to look less like a loss of convenience and more like the cost of being serious.