I remember hovering over the “stake” button, hesitating for no clear reason.

Everything looked fine: the APY was attractive and the lockup terms were standard. Still, something felt off.

I wasn’t committing to anything. I was just positioning capital.

And for a system that claimed to be “secured,” that realization felt strangely empty.

The more I observed, the more I noticed how easily participation had been abstracted.

Lock tokens, earn rewards, repeat.

But what exactly was being secured? There was no direct relationship between what someone did and what they could lose.

In purely digital systems, maybe that’s enough. But in networks coordinating real-world work (machines, tasks, outcomes), it felt incomplete.

Shouldn’t security come from accountability, not just capital presence?

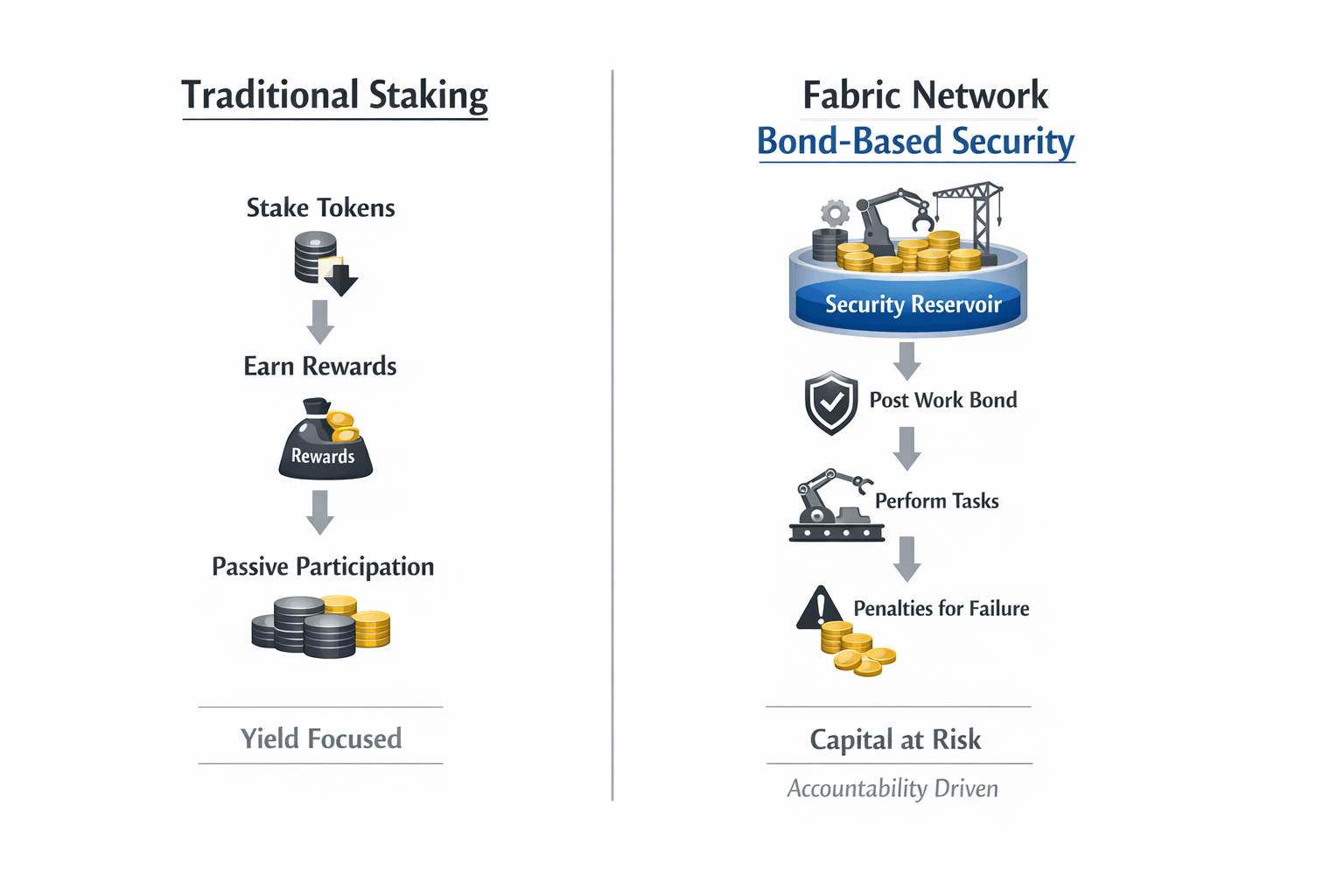

When I first came across the @Fabric Foundation and the design behind the #ROBO token, I expected a variation of the same model.

Another staking system, slightly reframed.

At first, the term “Security Reservoir” felt like unnecessary abstraction.

But on reflection, it wasn’t abstraction. It was a shift in definition.

This wasn’t about locking tokens.

It was about putting capital behind behavior.

What stood out wasn’t the bond itself but what it meant.

In Fabric, operators post work bonds: performance deposits required to provide services, not vehicles for earning yield.

The more capacity an operator claims, the more capital they must commit. Not as a signal but as collateral.

At first, this felt restrictive.

But then I realized it forces a simple question most systems avoid:

Can you actually deliver what you claim?

The idea clicked for me when I understood the “reservoir.”

The bond isn’t locked per task. It acts as a shared pool of collateral, from which portions are dynamically allocated to ongoing work.

That means the same capital is continuously exposed across multiple tasks.

Not idle. Not static.

Just constantly at risk.
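One way to picture the reservoir is the minimal sketch below. It assumes the simplest possible bookkeeping: a single bond, with slices of it earmarked for each ongoing task and returned to the pool when the task ends. The class name, method names, and amounts are hypothetical illustrations, not Fabric’s actual implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Reservoir:
    """One operator's bond, treated as a shared pool of collateral (hypothetical model)."""
    bond: float                                               # total capital posted
    at_risk: dict[str, float] = field(default_factory=dict)   # task id -> collateral backing it

    @property
    def free(self) -> float:
        # Collateral not currently backing any task.
        return self.bond - sum(self.at_risk.values())

    def allocate(self, task_id: str, amount: float) -> None:
        # Earmark part of the pool for a new task; refuse to overcommit.
        if amount > self.free:
            raise ValueError("not enough free collateral for this task")
        self.at_risk[task_id] = amount

    def release(self, task_id: str) -> None:
        # Task finished cleanly: its collateral returns to the pool.
        self.at_risk.pop(task_id)


pool = Reservoir(bond=1000.0)
pool.allocate("task-a", 400.0)
pool.allocate("task-b", 350.0)
print(pool.free)   # 250.0

pool.release("task-a")
pool.allocate("task-c", 600.0)   # the capital freed by task-a is reused
print(pool.free)   # 50.0
```

The same 1,000 units end up backing three tasks over time, which is the sense in which the capital is never idle.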

And that changes the nature of participation.

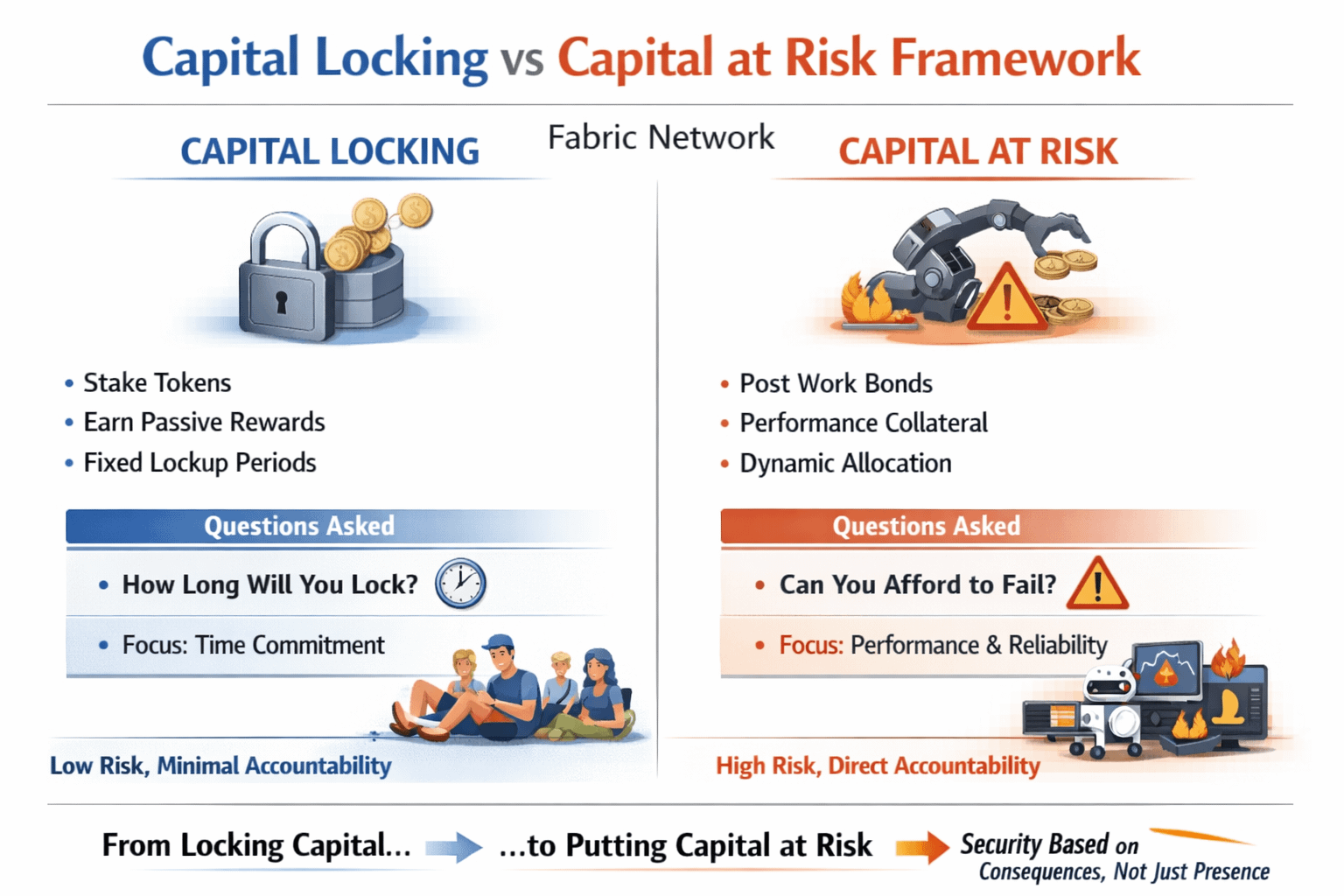

Most systems ask:

How long are you willing to lock your tokens?

This system asks something very different:

Can you afford to fail?

Because here, failure has consequences.

Fraud, downtime, or degraded quality can trigger slashing of the bond, ensuring that the cost of misbehavior exceeds any potential gain.
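The condition "cost of misbehavior exceeds any potential gain" can be written down as a rough sketch. It assumes something the design description here does not spell out: that misbehavior is only caught with some probability, so the expected penalty is that probability times the slashed amount. The function names and numbers are hypothetical.

```python
def min_deterrent_slash(gain: float, detection_prob: float) -> float:
    """Break-even slash: any slash above this makes the expected penalty exceed the gain."""
    if not 0 < detection_prob <= 1:
        raise ValueError("detection_prob must be in (0, 1]")
    return gain / detection_prob


def is_deterred(gain: float, slash: float, detection_prob: float) -> bool:
    """True when the expected penalty is strictly larger than the gain from cheating."""
    return detection_prob * slash > gain


print(min_deterrent_slash(gain=100.0, detection_prob=0.5))     # 200.0
print(is_deterred(gain=100.0, slash=250.0, detection_prob=0.5))  # True
print(is_deterred(gain=100.0, slash=150.0, detection_prob=0.5))  # False
```

Under this assumption, the weaker the detection, the larger the bond at risk has to be for the incentive to hold.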

That single design choice transforms incentives.

You’re no longer optimizing yield.

You’re managing exposure.

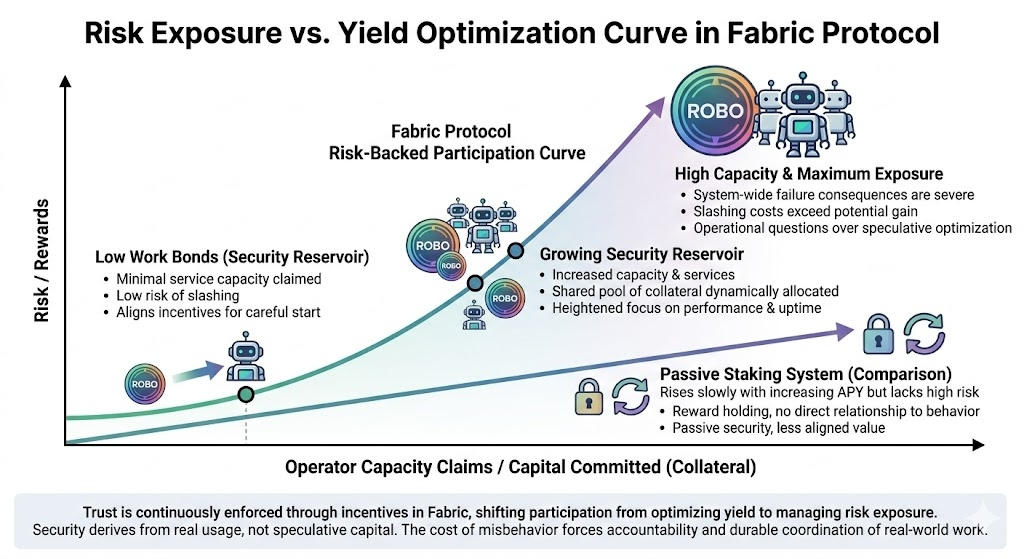

One detail I initially overlooked but now think is critical is how this model scales.

As more robots join the network and total capacity increases, the total bonded capital grows proportionally.

Security isn’t fixed.

It expands with real usage.

That means the system doesn’t rely on speculative capital to appear secure. It derives security directly from actual economic activity.
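A small sketch makes the scaling visible. It assumes the simplest reading of "proportionally": each operator's required bond is a fixed rate times the capacity they claim. The rate and the capacity figures are hypothetical.

```python
BOND_PER_UNIT = 50.0  # hypothetical bond required per unit of claimed capacity


def required_bond(claimed_capacity: float) -> float:
    """Bond one operator must post for the capacity they claim (assumed linear)."""
    return BOND_PER_UNIT * claimed_capacity


def network_bond(capacities: list[float]) -> float:
    """Total bonded capital across all operators on the network."""
    return sum(required_bond(c) for c in capacities)


print(network_bond([10, 20]))       # 1500.0
print(network_bond([10, 20, 30]))   # 3000.0 after a third operator joins
```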

And quietly, that creates something stronger than locked value.

It creates aligned value.

But what really stayed with me wasn’t the mechanism. It was the psychology.

When capital is locked, you think about time.

When capital is at risk, you think about performance.

Can I maintain uptime?

Am I overcommitting?

Is this task worth the exposure?

These are operational questions.

And they filter participation in a way incentives alone never could.

Not by excluding people, but by making low-quality participation economically irrational.

The more I thought about it, the more the system felt self-regulating.

Operators behave carefully because their capital is directly exposed.

Validators are incentivized to detect fraud because they benefit from catching it.

Users interact with services backed by real collateral, not just reputation.

Trust isn’t assumed.

It’s continuously enforced through incentives.

And importantly, these bonds don’t generate passive returns; they exist purely as risk-bearing mechanisms to align behavior.

That distinction matters more than it seems.

What I’m starting to notice is a broader shift.

We’re moving away from systems that reward holding toward systems that require accountability.

And maybe that’s necessary.

Because as crypto moves closer to coordinating real-world systems (robots, infrastructure, services), the cost of misalignment increases.

Passive security doesn’t scale into active environments.

But risk-backed participation might.

At first, the Security Reservoir felt like a technical design.

Now it feels more like a statement.

Participation isn’t about how much you lock.

It’s about how much responsibility you’re willing to carry.

I used to think security came from how much value was locked in a system.

Now I think it comes from how much value participants stand to lose when something goes wrong.

Because in the end, systems aren’t secured by capital alone.

They’re secured by the consequences attached to it.