@Fabric Foundation I came back to Fabric the way I usually come back to projects that are trying to solve something slightly uncomfortable. Not because the token was newly visible, though that certainly brought more people in. Not because robotics is suddenly fashionable again. I came back because Fabric is built around a question that most systems would rather avoid: what does trust look like when the thing asking for payment, taking action, gathering data, and making decisions is no longer a person or a company clerk, but a machine acting in the world? Fabric’s own documents are unusually direct about that. They describe the protocol as an open network for building, governing, owning, and evolving general-purpose robots, while the Foundation presents itself as a non-profit trying to build the coordination layer that lets humans and intelligent machines work together safely and productively. That is a much more serious ambition than launching another chain with a themed narrative attached to it.

What makes Fabric worth sitting with is that it starts from the mess, not the demo. Most people looking at robotics still focus on what the machine can do when everything is clean, connected, funded, and supervised. Fabric keeps pulling attention back to what surrounds the machine: identity, payment, accountability, human oversight, and the constant problem of proving what actually happened. The Foundation’s March 11 update says the bottleneck is no longer the robot itself but the infrastructure around deployment, coordination, and payment at scale. I think that framing is closer to reality than many people admit. In real environments, confidence does not collapse because a machine lacks intelligence in the abstract. It collapses because nobody can settle disputes cleanly, trace responsibility clearly, or decide who absorbs the loss when something goes wrong.

The December 2025 whitepaper makes that philosophy explicit. It does not present Fabric as a simple software layer sitting politely beside robotics. It treats blockchain as a possible alignment layer between humans and machines, a way to coordinate computation, ownership, and oversight through public ledgers rather than through closed internal systems. That matters because the trust problem in robotics is not only about whether a robot can act. It is about whether the surrounding system leaves behind enough evidence for a human community to remain calm under uncertainty. A family, hospital, warehouse operator, city authority, or insurer does not need magical language. They need confidence that when signals conflict, there is a durable record of what happened, who approved what, and what recourse exists afterward. Fabric is trying to design around that emotional reality, even if the protocol still has a long way to go.

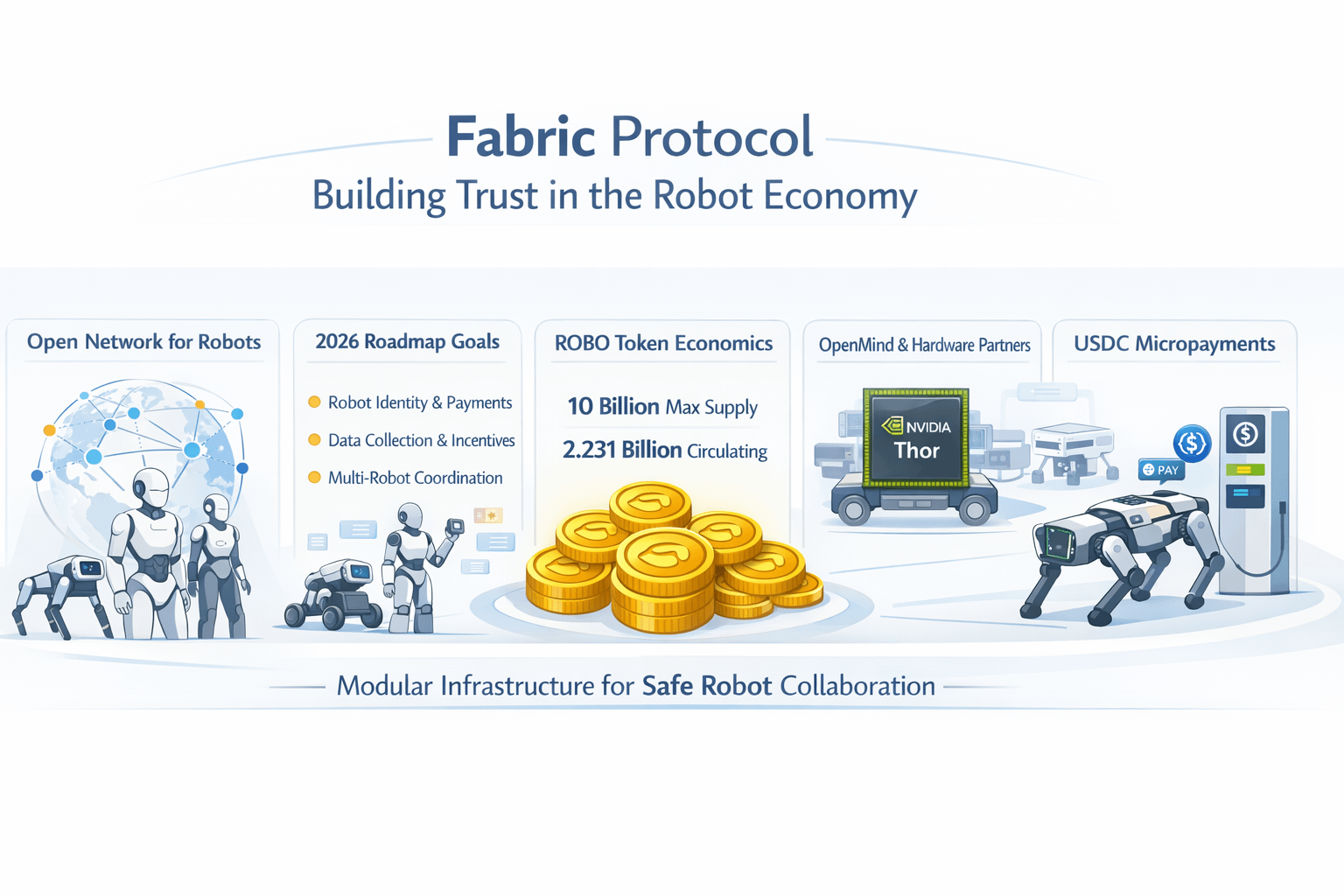

I also think Fabric is stronger when you read it as infrastructure under construction rather than as a finished robot economy. The project’s own materials support that more cautious reading. The token blog says the network will begin on Base and may later become its own Layer 1 as adoption grows, while the whitepaper describes a phased rollout in which present-day interoperability is used first and a more machine-native chain comes later. That is a revealing choice. It says the team understands that credibility here will not come from declaring finality too early. The near-term work is not to prove philosophical elegance. It is to collect operational data, observe how real deployments behave, and learn where theory breaks once machines leave the lab and start dealing with dirt, latency, human error, bad incentives, and patchy supervision.

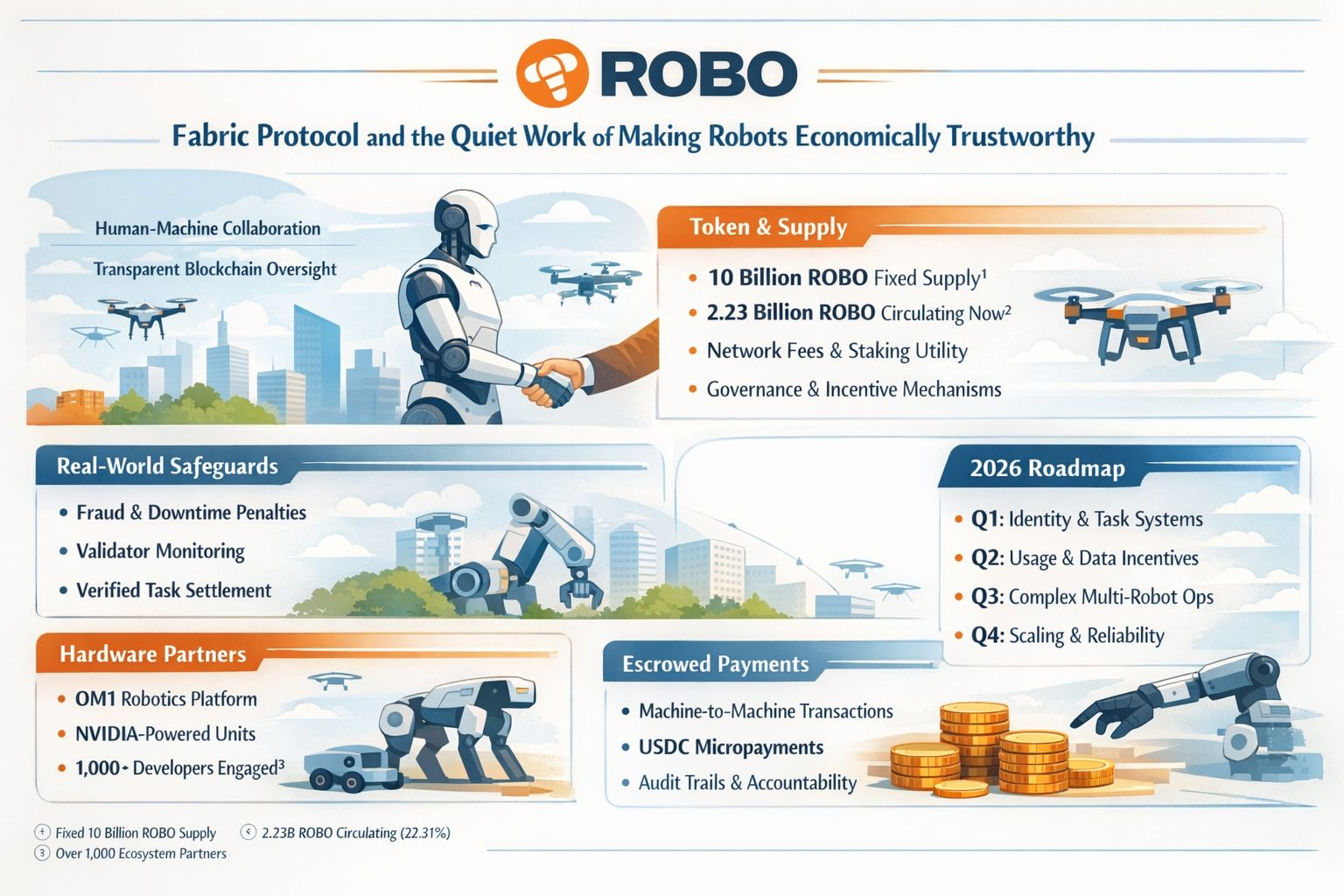

The 2026 roadmap reinforces that. Early this year the published plan centered on initial components for robot identity, task settlement, and structured data collection, then broader contribution-based incentives, wider developer participation, more data from more robot environments, support for more complex repeated work, and only later the preparation for larger-scale deployments. Past 2026, the whitepaper points toward a machine-native chain informed by accumulated real-world usage rather than by pure design preference. I find that sequence important because it tells me Fabric knows its future depends on lived evidence. In systems like this, reliability is not born from a beautiful diagram. It is born from repeated contact with small failures, contradictory data, and all the quiet edge cases nobody bothers to mention during launch week.

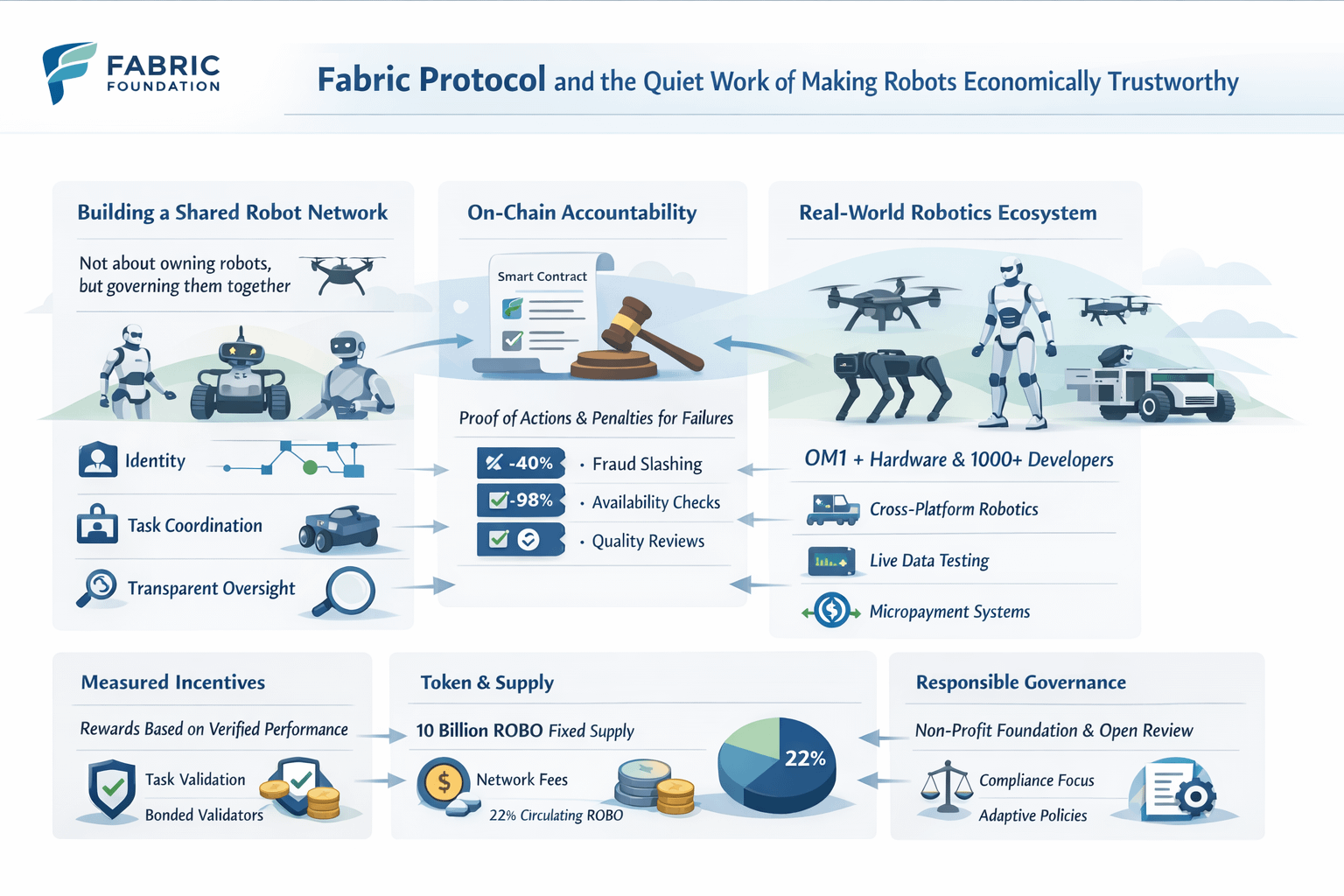

Where Fabric becomes more interesting to me is in the way it prices dishonesty. A lot of projects talk about accountability in broad moral language, but the whitepaper gets mechanical. It describes bonded participation, challenge processes, fee sharing for validators, and penalties tied to proven fraud, poor availability, and weak quality. The slashing ranges are not symbolic. Fraud can cut deeply into posted stake, sustained downtime below the stated threshold can wipe out epoch rewards and burn part of the bond, and quality weakness can suspend reward eligibility until the operator fixes the problem. That is a very specific way of saying something human and old: if you want honest behavior from machines and their operators, you cannot rely on branding or goodwill. You have to make unreliability expensive in a form people can understand. Under pressure, that matters more than ideology. When customers are angry, or a task has failed, or data from two sources does not match, trust survives only if the system already knows how to punish carelessness without improvising justice after the fact.
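To make that penalty logic concrete for myself, I find it useful to sketch it as code. This is only an illustration of the three rules as I have described them, not the whitepaper's implementation: every name and every numeric threshold below is a hypothetical placeholder I chose for readability, and the choice to apply penalties cumulatively is my own assumption.

```python
from dataclasses import dataclass

# Hypothetical placeholder parameters. These are NOT the whitepaper's values.
FRAUD_SLASH_FRACTION = 0.40         # share of the bond slashed on proven fraud
DOWNTIME_BOND_BURN_FRACTION = 0.05  # share of the bond burned for poor availability
MIN_UPTIME = 0.98                   # availability threshold for the epoch
MIN_QUALITY = 0.85                  # quality threshold for reward eligibility


@dataclass
class EpochReport:
    bond: float             # stake posted by the operator
    pending_rewards: float  # rewards earned this epoch before penalties
    uptime: float           # fraction of the epoch the operator was available
    quality: float          # quality score between 0 and 1
    fraud_proven: bool      # True if a challenge against the operator succeeded


@dataclass
class Outcome:
    bond: float
    rewards_paid: float
    reward_eligible: bool


def settle_epoch(report: EpochReport) -> Outcome:
    """Apply the three penalty rules to one operator for one epoch."""
    bond = report.bond
    rewards = report.pending_rewards
    eligible = True

    if report.fraud_proven:
        # Proven fraud cuts into the posted stake.
        bond -= report.bond * FRAUD_SLASH_FRACTION

    if report.uptime < MIN_UPTIME:
        # Sustained downtime wipes epoch rewards and burns part of the bond.
        rewards = 0.0
        bond -= report.bond * DOWNTIME_BOND_BURN_FRACTION

    if report.quality < MIN_QUALITY:
        # Weak quality suspends eligibility until the operator fixes the problem.
        rewards = 0.0
        eligible = False

    return Outcome(bond=bond, rewards_paid=rewards, reward_eligible=eligible)


honest = settle_epoch(EpochReport(1000.0, 10.0, 0.99, 0.90, False))
flaky = settle_epoch(EpochReport(1000.0, 10.0, 0.90, 0.90, False))
print(honest)
print(flaky)
```

What the sketch makes visible is that the rules are decided before the dispute, which is the whole point: the operator knows the price of carelessness in advance.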

That same logic runs through the token, and this is where the recent data matters. Fabric’s own February material describes ROBO as the core utility and governance asset used for fees, participation, staking, verification, and ecosystem access, while the whitepaper is careful to strip out the usual fantasy language. It repeatedly says the token does not represent equity, debt, robot ownership, revenue rights, or a claim on cash flows. Participation is framed as operational access and coordination, not as a hidden wrapper around legal ownership. I actually think this restraint is one of the project’s more important signals. In a field where people are tempted to turn every real-world machine into a speculative receipt, Fabric is at least trying to keep a distinction between using a network and owning the underlying physical world. That distinction may sound dry, but it matters enormously when expectations rise faster than legal reality.

The supply picture is now much clearer than it was a few weeks ago. The whitepaper fixes total supply at 10 billion ROBO and lays out the allocation across investors, team and advisors, foundation reserve, ecosystem and community, community airdrops, liquidity and launch, and a small public sale. The insider-heavy buckets carry longer vesting, while launch-related tranches are more immediately available. On March 18, Binance’s announcement added the current market-side facts that matter most: 10 billion maximum supply, 100 million ROBO for that distribution, another 200 million earmarked for later marketing efforts, and a circulating supply upon listing of 2.231 billion ROBO, or 22.31% of the total. For a project built around long-cycle infrastructure, these numbers are not trivial. They shape how much speculation can leak into the story before the network has earned operational maturity.
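The arithmetic behind those figures is simple enough to check by hand, but I like having it written down. The short sketch below uses only the numbers quoted above; the variable and function names are my own.

```python
# Figures quoted above from the whitepaper and the March 18 listing announcement.
MAX_SUPPLY = 10_000_000_000
CIRCULATING_AT_LISTING = 2_231_000_000
DISTRIBUTION_TRANCHE = 100_000_000
LATER_MARKETING_TRANCHE = 200_000_000


def share_of_supply(amount: int, total: int = MAX_SUPPLY) -> float:
    """Return `amount` as a percentage of `total`."""
    return amount / total * 100


circulating_pct = share_of_supply(CIRCULATING_AT_LISTING)
distribution_pct = share_of_supply(DISTRIBUTION_TRANCHE)
marketing_pct = share_of_supply(LATER_MARKETING_TRANCHE)
not_yet_circulating = MAX_SUPPLY - CIRCULATING_AT_LISTING

print(f"circulating at listing: {circulating_pct:.2f}% of max supply")
print(f"distribution tranche:   {distribution_pct:.2f}%")
print(f"later marketing:        {marketing_pct:.2f}%")
print(f"not yet circulating:    {not_yet_circulating:,} ROBO")
```

The last line is the one I keep in mind: 7.769 billion ROBO, a little under 78% of the maximum supply, was not yet circulating at listing.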

Price discovery has already started to do what price discovery usually does: it has forced reality into the room. CoinGecko and CoinMarketCap both show ROBO trading actively with a circulating supply around 2.2 billion and a market cap in the high fifty-million-dollar range as of March 18, while 24-hour volume has been large relative to market cap. CoinGecko’s historical page also shows how quickly sentiment can cool even in the first days of public attention, with ROBO moving down materially over the past week and daily market-cap and volume numbers changing fast. I do not read that as a verdict on Fabric. I read it as the first honest stress test. Once a token is live, the ecosystem loses the luxury of purely internal storytelling. Every promise now sits beside a fluctuating public price that reflects confusion, enthusiasm, skepticism, and impatience all at once. That tension can be healthy if the protocol keeps building through it.

What helps Fabric here is that there is at least some surrounding infrastructure to point to, rather than just token mechanics. The Foundation’s partner page highlights OM1 as a modular robotics platform spanning different hardware categories and describes the broader stack as an open collaboration layer for data, tasks, and value. The March 11 foundation update says the ecosystem already involves more than 1,000 developers and names industrial partners across several robot categories. I would still treat those ecosystem numbers as early-stage claims rather than proof of durable deployment, but they matter because they shift the conversation away from abstraction. Fabric only becomes meaningful if heterogeneous machines can actually be brought under a common coordination logic. A robot economy does not fail because nobody can imagine it. It fails because incompatible machines, fragmented software, and isolated incentives make shared reliability too expensive.

The OpenMind side of the ecosystem is especially revealing because it shows what Fabric needs from the off-chain world in order to matter. OpenMind’s documentation describes hardware packages built around NVIDIA Thor, support for modern ROS2-based robotics workflows, and a software stack meant to run across different form factors with containerized services and autonomy tooling. NVIDIA’s own Jetson Thor materials underline why that is significant: up to 2070 FP4 TFLOPS, 128 GB of memory, and major efficiency gains over AGX Orin for physical AI workloads. None of that proves Fabric will win. What it proves is that Fabric is attaching itself to a hardware and software environment where robots are becoming capable enough that coordination infrastructure stops sounding theoretical. Once machines can navigate, perceive, recharge, and make more local decisions, the real question becomes who authorizes, records, pays, challenges, and audits those actions.

Payments make the same point from another angle. The Foundation’s public materials talk about machine and human identity, human-gated and location-gated payments, machine-to-machine communication, and decentralized task accountability. Circle’s March 10 post adds a concrete example from its collaboration with OpenMind: an autonomous robot dog using USDC-based nanopayments to complete a recharge loop. This is the kind of detail I pay attention to, because it shows where the soft part of the trust problem lives. A payment from a machine is not just a settlement event. It is a social event. Someone has to believe that the machine was authorized to spend, that the action happened where it was supposed to happen, that there is a record if the payment is contested, and that the machine cannot quietly become a self-spending liability. Fabric’s long-term value will depend less on whether it can produce on-chain transactions and more on whether those transactions feel governable to the humans standing nearby.
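One way to picture what "human-gated and location-gated" could mean in practice is a small authorization check that runs before any payment leaves the machine. This is my own minimal sketch, not Fabric's or Circle's API: the class names, the policy fields, the flat two-dimensional coordinates in metres, and the limits are all hypothetical assumptions chosen for illustration.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class SpendPolicy:
    """Hypothetical policy an operator might attach to a machine."""
    per_payment_limit: float     # hard cap on any single payment
    human_approval_above: float  # amounts above this need a human sign-off
    allowed_center: tuple        # (x, y) of the permitted zone, in metres
    allowed_radius_m: float      # radius of the permitted zone


@dataclass(frozen=True)
class PaymentRequest:
    machine_id: str
    amount: float
    position: tuple              # (x, y) where the machine claims to be
    human_approved: bool = False


audit_log = []  # record kept so a contested payment can be reviewed later


def authorize(request: PaymentRequest, policy: SpendPolicy) -> tuple:
    """Return (allowed, reason) and append the decision to the audit log."""
    dx = request.position[0] - policy.allowed_center[0]
    dy = request.position[1] - policy.allowed_center[1]

    if hypot(dx, dy) > policy.allowed_radius_m:
        decision = (False, "outside permitted location")   # location gate
    elif request.amount > policy.per_payment_limit:
        decision = (False, "over per-payment limit")       # self-spending cap
    elif request.amount > policy.human_approval_above and not request.human_approved:
        decision = (False, "human approval required")      # human gate
    else:
        decision = (True, "authorized")

    audit_log.append((request, decision))
    return decision


policy = SpendPolicy(5.0, 1.0, (0.0, 0.0), 50.0)
print(authorize(PaymentRequest("dog-1", 0.02, (3.0, 4.0)), policy))
print(authorize(PaymentRequest("dog-1", 2.00, (3.0, 4.0)), policy))
```

Each of the four beliefs in the paragraph above maps to one line of the check: the location gate, the spending cap, the human gate, and the log entry that survives if the payment is contested.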

I also respect that Fabric’s whitepaper does not pretend the governance questions are settled. Some big choices are still undecided. The community still needs to help define how rewards work across the network, what the first validator model should look like, and how success should be measured without turning the system into something people can easily manipulate. The whitepaper even says that revenue alone can be gamed, so it suggests using stronger measures like compliance, efficiency, energy use, and user feedback. That honesty is important. In serious systems, especially those tied to real machines and real environments, failure often begins when people trust simple metrics too much. If Fabric stays serious, it will need to remain suspicious of any number that becomes too flattering too quickly.

There is another quiet point in the legal structure that I think deserves attention. The whitepaper says the Foundation is the independent non-profit steward, while the token issuer is a BVI company wholly owned by the Foundation. It also makes a point of separating OpenMind from token issuance and market responsibility. That separation will not solve every future tension, but it tells me the people around Fabric understand that once robotics, tokens, public governance, and real-world deployment start touching each other, institutional clarity matters. When a project deals with software alone, ambiguity can survive longer. When it starts touching labor, payment, movement, and physical infrastructure, ambiguity turns into risk quickly. Trust, in that setting, is not a slogan. It is the absence of confusion at the exact moment confusion would be most convenient to the wrong actor.

So when I look at Fabric now, with its March updates, its public-market token data, its published supply structure, its early rollout plan, and its growing attempt to connect robotics software with accountable economic rails, I do not see a finished answer. I see a protocol trying to prepare for the moment when robots stop being isolated products and start behaving like participants in a shared economic environment. That is a heavier problem than most people realize. It means dealing with broken sensors, disputed records, opportunistic operators, temporary outages, legal uncertainty, uneven hardware quality, emotional fear from users, and the very human need to know that someone can still say no when the machine gets it wrong. Fabric has not solved all of that. At least Fabric is trying to face those hard questions instead of pretending they do not exist.

What stays with me most is not the token or the story around it, but the idea that quiet, behind-the-scenes infrastructure will decide whether people can truly accept intelligent machines in everyday life. Reliability rarely wins attention in the early phase. It looks slower than vision and less glamorous than price. But in the long run, reliability is what allows people to unclench around a system. It is what makes conflict survivable, mistakes recoverable, and coordination fair enough to continue. If Fabric is going to matter, it will matter there, in the background, where responsibility is hard to fake and where a durable record, a credible penalty, and a calm chain of accountability do more for human confidence than any burst of attention ever could.

@Fabric Foundation #ROBO $ROBO