The longer I sat with @Fabric Foundation, the more my mind kept returning to one ugly thought.

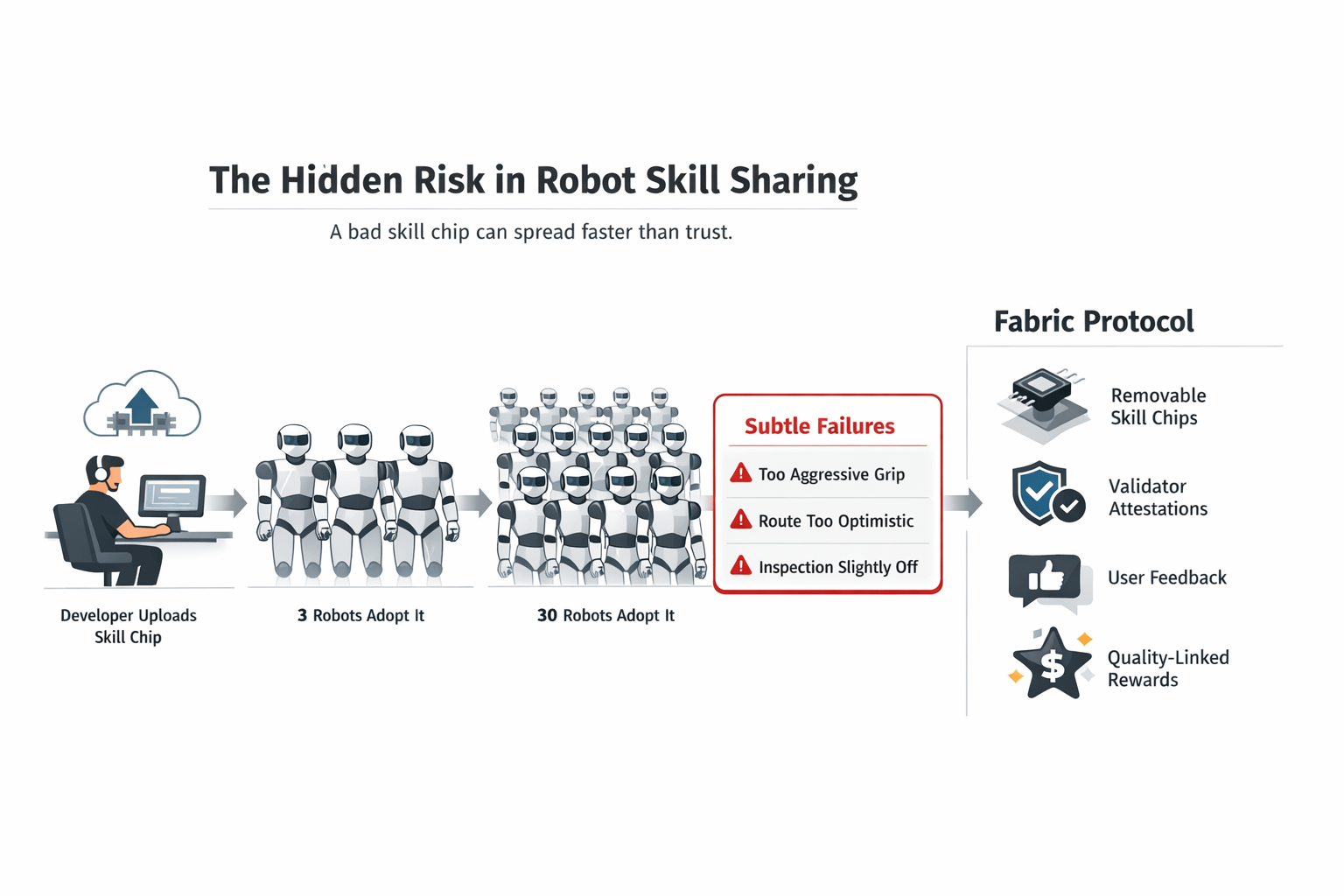

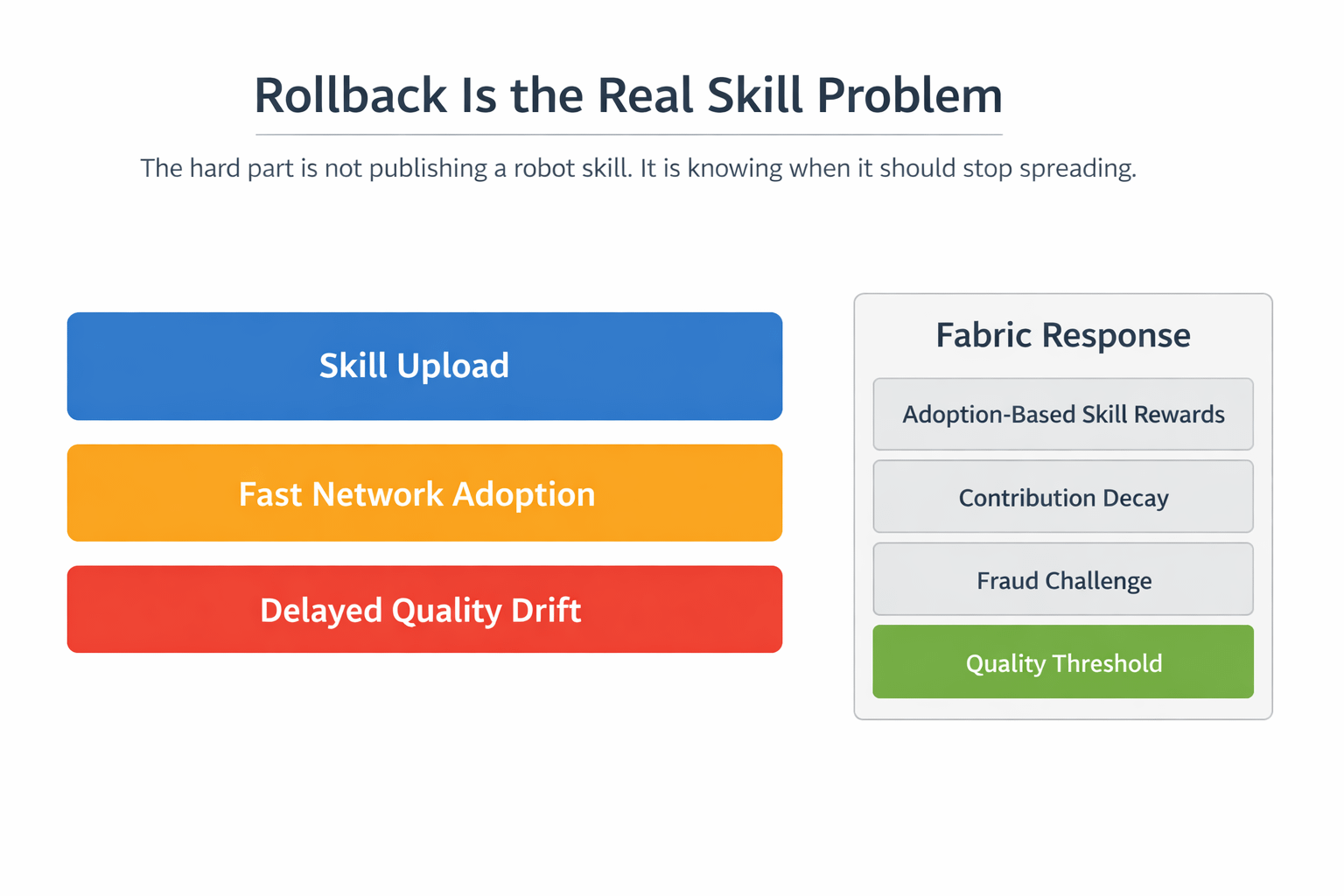

The real danger is not teaching a robot a new skill. It is finding out late that the skill was spreading faster than trust in it. Fabric keeps coming back to modular skill chips that can be added and removed like apps, with developers contributing modules that other machines across the network can use. That sounds clean at first. But installation is not the hidden cost. Rollback is.

It is the overlooked issue that I don't think enough people sit with.

A bad skill chip does not have to fail the way a broken file does. It can fail as a series of small lapses that look fine until they are repeated at scale. A manipulation skill grips packages a little too aggressively. A navigation skill clears routes a little too optimistically. An inspection skill accepts outputs that are technically complete but slightly out of standard. The machine gets away with the first. The tenth makes it visible. The hundredth turns a local imperfection into network contamination. Given Fabric's internal design, where skill modules, validation work, quality scoring, and adoption-based rewards sit side by side, it is evident that this is not simply a matter of adding capabilities. It is a process of deciding what deserves to propagate further.

That is where I thought the project became more serious.

Most people read the app-store concept as growth. I kept reading it as exposure. The faster a robot network can share useful behavior, the more dangerous it becomes to reinforce the wrong behavior too early. Fabric does something interesting here. It does not treat contribution as a one-time upload. Skill development is measured by deployment and cumulative usage across the network, while rewards are still filtered through quality multipliers based on validation results and user feedback. Poor quality is not merely cosmetic. It works against the contributor at the reward layer.
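To make the idea of usage filtered through a quality multiplier concrete, here is a minimal sketch. It is not Fabric's actual formula: the `SkillStats` fields, the 0 to 1 score ranges, and the choice to take the minimum of the two scores are all assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class SkillStats:
    """Hypothetical per-skill record; field names are illustrative only."""
    cumulative_usage: int     # total invocations across the network
    validation_score: float   # assumed 0.0-1.0, from validator results
    feedback_score: float     # assumed 0.0-1.0, from user feedback


def quality_multiplier(stats: SkillStats) -> float:
    """Combine validation and feedback into one multiplier in [0, 1].

    Taking the minimum is an assumption: it means good user feedback
    cannot paper over weak validation results, and vice versa.
    """
    lowest = min(stats.validation_score, stats.feedback_score)
    return max(0.0, min(lowest, 1.0))


def reward_weight(stats: SkillStats) -> float:
    """Adoption earns weight, but only after being scaled by quality."""
    return stats.cumulative_usage * quality_multiplier(stats)


# A widely adopted but poorly validated skill versus a smaller, solid one.
popular_but_sloppy = SkillStats(cumulative_usage=10_000,
                                validation_score=0.3, feedback_score=0.9)
niche_but_solid = SkillStats(cumulative_usage=4_000,
                             validation_score=0.95, feedback_score=0.9)

if __name__ == "__main__":
    print("popular but sloppy:", reward_weight(popular_but_sloppy))
    print("niche but solid:   ", reward_weight(niche_but_solid))
```

Under these made-up numbers, the skill with less than half the usage ends up with the larger reward weight, which is the point: adoption alone does not carry a module.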

That makes the workflow considerably harder and far more realistic.

A developer ships a new skill. Operators use it because it saves time. Usage rises. Reward flow starts to care because adoption is visible. Then edge cases turn up in the field. Whether the module exists is no longer the question. The question is whether the network has enough friction to stop weak behavior from becoming normal just because it travels fast. Fabric's structure leans into that tension with validator attestation, user feedback, fraud challenge paths, contribution decay in the case of inactivity, and pressure on reward eligibility requirements when network quality falls below the network threshold.
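Two of those friction mechanisms, contribution decay and a quality-dependent eligibility bar, can be sketched in a few lines. Everything here is hypothetical: the geometric decay, the 10% rate, the threshold values, and the assumption that the bar rises when network quality drops are illustrative choices, not parameters taken from Fabric.

```python
def decayed_contribution(score: float, idle_periods: int,
                         decay_rate: float = 0.1) -> float:
    """Shrink a contribution score geometrically per inactive period.

    Geometric decay and the default rate are assumptions for illustration.
    """
    if not 0.0 <= decay_rate <= 1.0:
        raise ValueError("decay_rate must be between 0 and 1")
    return score * (1.0 - decay_rate) ** max(idle_periods, 0)


def min_quality_for_rewards(network_quality: float,
                            network_threshold: float = 0.8,
                            base_bar: float = 0.5,
                            raised_bar: float = 0.7) -> float:
    """Return the per-skill quality bar for reward eligibility.

    Assumption: the bar is raised while overall network quality sits
    below the threshold, and relaxes to the base bar otherwise.
    """
    if network_quality < network_threshold:
        return raised_bar
    return base_bar


def is_reward_eligible(skill_quality: float, network_quality: float) -> bool:
    """A skill is eligible only if it clears the current quality bar."""
    return skill_quality >= min_quality_for_rewards(network_quality)


if __name__ == "__main__":
    print(decayed_contribution(100.0, idle_periods=2))
    print(is_reward_eligible(0.6, network_quality=0.9))
    print(is_reward_eligible(0.6, network_quality=0.7))
```

In this toy version, the same skill with a quality score of 0.6 is eligible while the network is healthy and loses eligibility once network quality slips under the threshold, which is one way the "friction" could show up in practice.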

I found that to be the turning point.

I stopped seeing Fabric as a place where robot skills are shared and started seeing it as a place where robot skills are socially stress-tested after release. That is a far more interesting problem. Publishing a capability is not the hard part. The hard part is not compounding a weak capability just because early usage looked fine. Fabric feels stronger precisely because the design keeps pulling quality, validation, and reputational memory back into the reward loop rather than pretending distribution is the only line of evidence. Fraud penalties may persist into new generations, and this matters when the actual harm caused by a poor skill is usually delayed rather than immediate.

And that is where I stopped feeling that $ROBO was decorative.

If skills are part of the network's productive surface, then the token has to sit inside the part that decides which contributions actually earn the right to keep existing. Fabric ties $ROBO to network-native fees and settlement, but it also ties distributions to validated work classes, which include skill development and validation work, and to active contribution quality rather than passive holding. That is a very different token logic from the standard "creator uploads a module and lets price do the rest" model. Here, $ROBO is brought closer to the pressure system of skill circulation. It touches the cost of participating, the reward path for actual adoption, and the discipline layer that penalizes bad output rather than ignoring it.

The pressure test is obvious, though.

Can Fabric maintain the skill layer without letting popularity run out of control? Can weak modules be identified through validator or user feedback before they become entrenched as defaults? In practice, can removable skill chips stay removable once operators come to rely on them for throughput? And when quality pressure starts to bite, does it make the app-store vision more robust, or does it slow contribution enough that growth becomes messy? The fact that these questions are still alive makes the project more interesting to me.

What stayed with me is simple.

The threat in a robot skills market is not that machines fail to learn.

It is that they learn something slightly wrong, and the network is so impressed by the speed that it cannot stop it.

#ROBO $ROBO @Fabric Foundation