I once noticed a wallet interaction that looked perfectly normal on the surface. I signed a message, confirmed the action, and expected everything to resolve instantly. Instead, there was a strange delay. Not a failure, not an error, just a quiet pause where the system seemed unsure of itself. That moment stayed with me because it exposed something deeper: verification isn’t always as seamless as we assume.

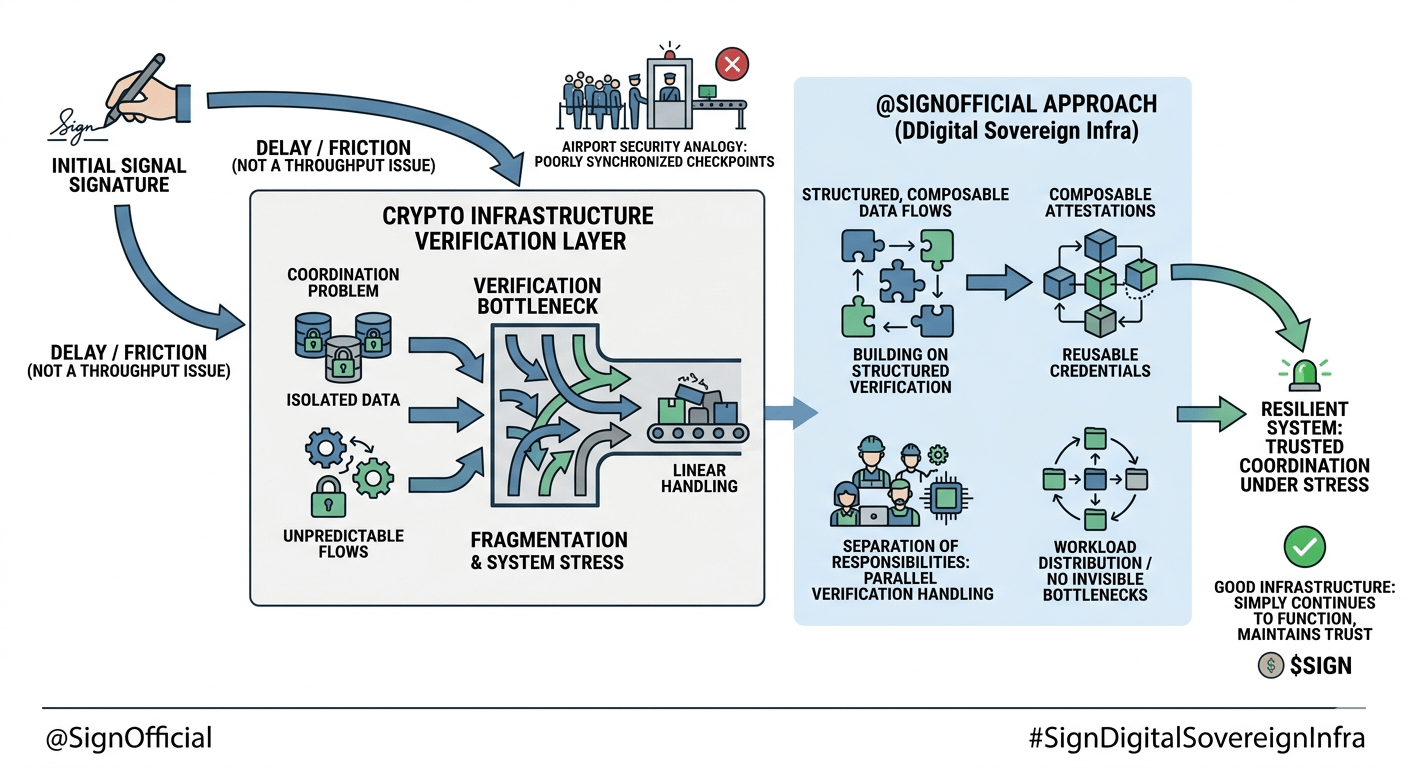

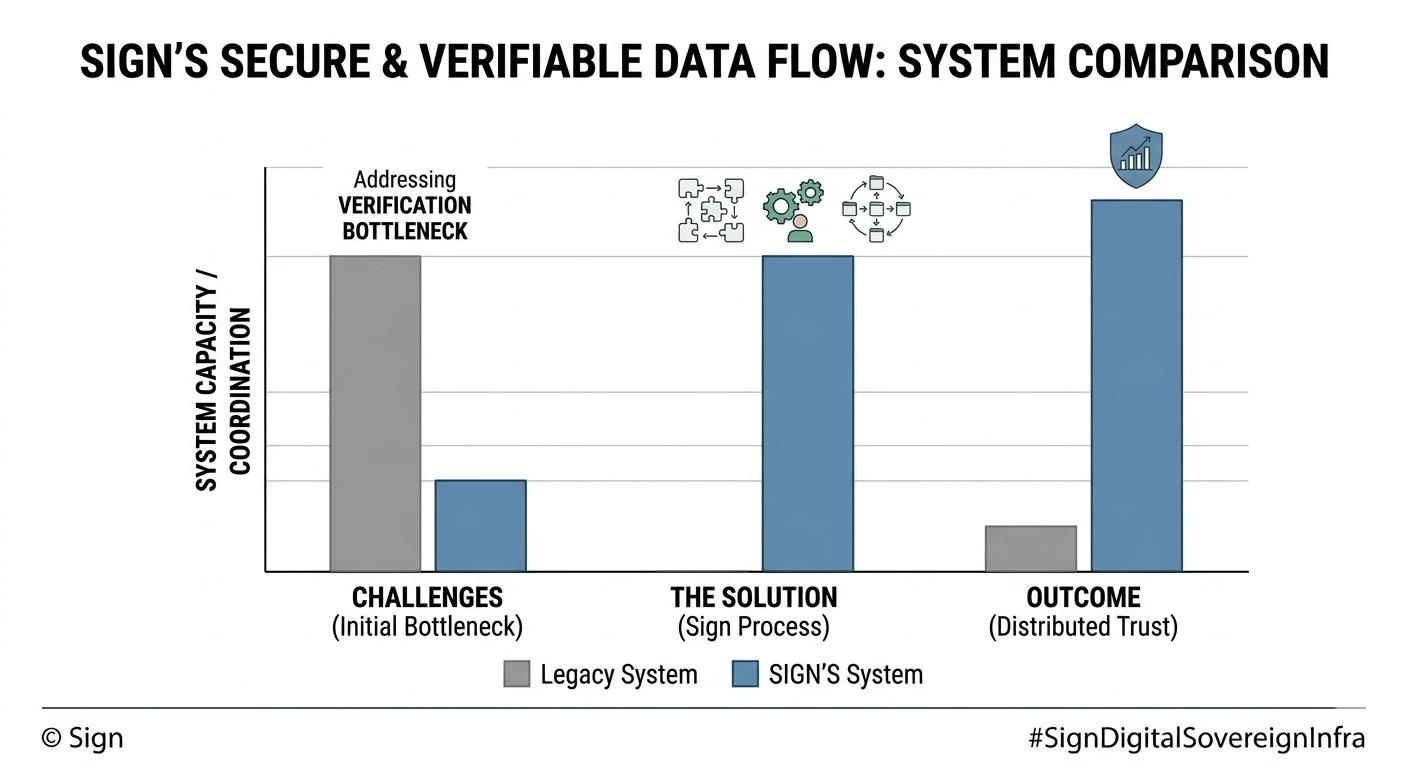

After seeing this happen across different chains and tools, I noticed that the real friction in crypto often sits in the layer we talk about the least: how data is verified, shared, and trusted across systems. Transactions can be fast and blocks can be frequent, but when identity, credentials, or proofs need to move between environments, things become less predictable. It’s not a throughput issue. It’s a coordination problem.

From a system perspective, I think of it like airport security. It’s not the number of passengers that causes delays; it’s how identity checks, scanning, and clearance steps are organized. If one checkpoint becomes overloaded or poorly synchronized with the rest, the entire flow slows down. Even if the infrastructure is technically capable, the experience breaks under pressure.

When I look at @SignOfficial, what caught my attention is how the system seems to focus exactly on this overlooked layer: the structure of verifiable data itself. Instead of treating signatures and attestations as isolated actions, the design appears to frame them as part of a broader, composable flow. That shift in perspective feels subtle, but in practice it changes how systems interact.

What interests me more is how $SIGN approaches the idea of digital sovereignty through attestations that can move across boundaries. In my experience watching networks evolve, one of the quiet limitations has always been fragmentation: data that is valid in one context but difficult to reuse or verify in another. The approach here seems to acknowledge that problem directly, building around portability and structured verification rather than isolated trust assumptions.
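To make the portability idea concrete for myself, I find it helps to sketch what a structured, reusable attestation could look like. This is only a toy illustration, not how @SignOfficial actually works: the `Attestation` fields, the `sign` and `verify` helpers, and the schema name are all hypothetical, and HMAC from the standard library stands in for the public-key signatures a real system would use.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Attestation:
    """Hypothetical attestation shape; the field names are assumptions."""
    schema: str   # identifies the structure the claims follow
    issuer: str   # who is vouching for the claims
    subject: str  # who or what the claims are about
    claims: dict  # the structured data being attested


def canonical_bytes(att: Attestation) -> bytes:
    # Sorted keys and fixed separators give every verifier the same bytes,
    # no matter which system serialized the attestation first.
    return json.dumps(asdict(att), sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign(att: Attestation, key: bytes) -> str:
    # HMAC-SHA256 is a stand-in here; a real deployment would use
    # asymmetric signatures so verifiers never hold the signing key.
    return hmac.new(key, canonical_bytes(att), hashlib.sha256).hexdigest()


def verify(att: Attestation, signature: str, key: bytes) -> bool:
    # Constant-time comparison of the recomputed and presented signatures.
    return hmac.compare_digest(sign(att, key), signature)
```

The point of the sketch is the canonical encoding: because the attestation reduces to the same bytes everywhere, a second system can rebuild it from plain JSON and check it without asking the first system to vouch for it again.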

Looking deeper, I find the separation of responsibilities particularly important. Verification doesn’t appear to rely on a single linear process, and tasks seem structured in a way that allows parallel handling without losing consistency. That balance between ordering and flexibility is something I’ve come to see as a defining trait of resilient systems.
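A small sketch of what I mean by parallel handling without losing consistency: independent checks run concurrently, but results come back in the order the items were submitted. This is my own illustration under simple assumptions, not a description of the project's internals; `verify_batch` and the `check` callback are hypothetical names.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable, List, TypeVar

T = TypeVar("T")


def verify_batch(items: Iterable[T], check: Callable[[T], bool], max_workers: int = 4) -> List[bool]:
    """Run `check` on each item concurrently and return results in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map yields results in the order of `items`,
        # even when the individual checks finish out of order.
        return list(pool.map(check, items))
```

A slow check delays only its own result rather than blocking the checks queued behind it, while callers still see a predictable ordering.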

Another thing I pay attention to is how systems behave under stress. When demand increases, weaker designs tend to create invisible bottlenecks. Queues build up, responses become inconsistent, and users are left guessing. What I notice here is an attempt to design for that reality: to manage workload distribution and avoid forcing every verification through the same narrow path.

In my experience, that’s where infrastructure quietly succeeds or fails. Not in perfect conditions, but in moments of unpredictability.

What matters in practice is not how fast a system can move in isolation, but how clearly it can maintain trust and coordination when complexity increases. Good infrastructure doesn’t draw attention to itself; it simply continues to function, even when the environment around it becomes uncertain.