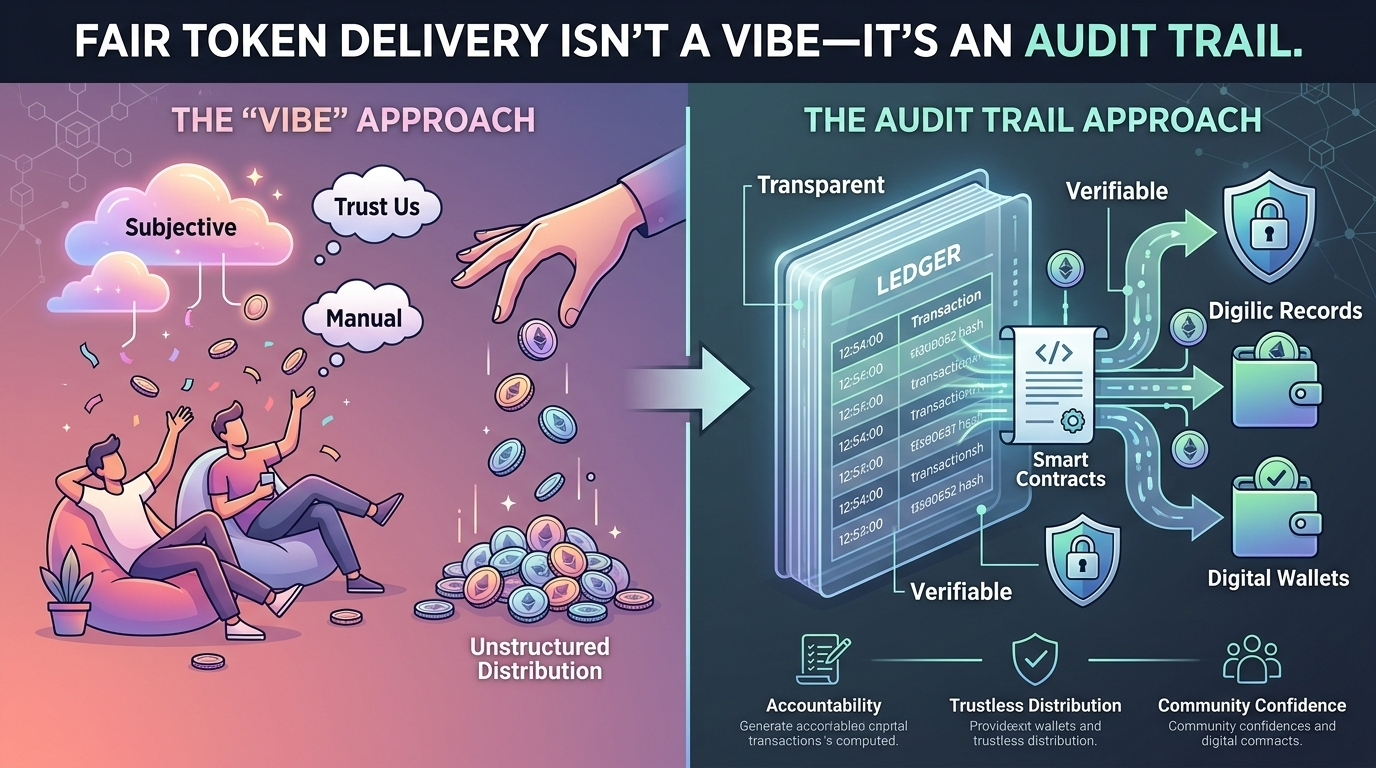

The loudest arguments about “fair” token distribution tend to happen after the tokens are gone. By then, the chain is settled, the screenshots are taken, and the only thing left to fight over is the story people tell about what happened. Someone insists the rules were clear. Someone else posts a thread of edge cases. A third person claims the whole thing was captured by farmers with a thousand wallets and a little patience. None of it is surprising. What’s surprising is how often the team running the drop can’t produce a clean, verifiable account of their own decisions.

In the days leading up to a distribution, fairness usually isn’t a principle. It’s a pile of files. There’s a snapshot taken at a specific block height, pulled from a node that may or may not have been lagging. There’s a query someone wrote against a subgraph, which times out if you widen the date range.

But people always look closely. The moment money is on the line, the internet becomes a forensic lab. Users compare notes in private group chats. They match each other’s onchain activity like detectives. They find wallets that look like they were generated in batches. They notice when the same cluster of addresses shows up across unrelated drops. They also notice when a real user—someone with months of activity, or a meaningful contribution—gets excluded for no obvious reason. When that happens, the team needs more than a confident statement. It needs an audit trail.

An audit trail isn’t a PR artifact. It’s a set of reproducible facts: the rule, the data source, the transformation, the final list, and the reason any exception was made. It’s the difference between “we filtered sybils” and “we excluded addresses that matched these criteria, using this method, at this time, and here’s what we did when we discovered false positives.” It’s not glamorous work. It’s change logs, timestamps, hashes, and a willingness to be pinned down.
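To make that concrete, here is a minimal sketch of an audit manifest. It assumes the team can export its raw snapshot as bytes and its final list as addresses. The field names (`rule_version`, `snapshot_sha256`, and so on) and the helper functions are hypothetical, not any particular tool's format. The idea is simply that every input and output gets a hash, and the manifest itself gets one digest that can be published before tokens move.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


def sha256_hex(data: bytes) -> str:
    """Hex SHA-256 digest of raw bytes."""
    return hashlib.sha256(data).hexdigest()


def canonical_json(obj) -> bytes:
    """Deterministic JSON so identical content always produces identical bytes."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def hash_address_list(addresses) -> str:
    """Lowercase, dedupe, and sort before hashing so export order can't change the result."""
    normalized = sorted({a.lower() for a in addresses})
    return sha256_hex("\n".join(normalized).encode("utf-8"))


@dataclass
class AuditManifest:
    rule_version: str        # hypothetical label for the written-down rule set
    rule_params: dict        # thresholds exactly as applied
    data_source: str         # where the snapshot came from (node, subgraph, ...)
    snapshot_block: int      # block height of the snapshot
    snapshot_sha256: str     # hash of the raw snapshot export
    final_list_sha256: str   # hash of the normalized final list
    exceptions: list = field(default_factory=list)  # [address, reason] pairs

    def digest(self) -> str:
        """Single hash over the whole manifest, suitable for publishing before delivery."""
        return sha256_hex(canonical_json(asdict(self)))


if __name__ == "__main__":
    snapshot = b"address,tx_count\n0xabc,12\n0xdef,3\n"
    manifest = AuditManifest(
        rule_version="v1",
        rule_params={"min_tx_count": 5},
        data_source="archive node export",
        snapshot_block=19_000_000,
        snapshot_sha256=sha256_hex(snapshot),
        final_list_sha256=hash_address_list(["0xABC", "0xdef"]),
        exceptions=[["0xdef", "manual review: contributor below tx threshold"]],
    )
    print(manifest.digest())
```

Normalizing addresses before hashing means two people holding the same list get the same digest regardless of export order or checksum casing. Anyone with the raw files can recompute every hash and compare it against the published digest, and the exception for `0xdef` is part of what gets hashed rather than a side note.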

Onchain systems are supposed to be good at this kind of honesty, yet token delivery often happens in a gray zone just off the chain. The chain can tell you what a wallet did. It can’t tell you who was behind it, or whether that action represents genuine participation or a scripted performance. Teams try to bridge the gap with heuristics: minimum transaction counts, unique contract interactions, gas spend thresholds. Those filters sometimes help. They also create new failure modes that are harder to defend because they’re subjective in disguise. A user who routes activity through a smart contract wallet might look “weird.” A privacy-minded user who rotates wallets might look “suspicious.” A person for whom gas is expensive relative to local income might look “inactive.” The moment you encode guesswork into eligibility, you owe people an explanation that goes beyond “trust us.”
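One way to keep heuristics from becoming guesswork in disguise is to write each one down as a named rule and record every rule a wallet failed, not just a yes or no. The sketch below assumes per-wallet activity has already been aggregated. `WalletActivity`, the reason codes, and the thresholds are hypothetical and illustrative, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WalletActivity:
    address: str
    tx_count: int
    unique_contracts: int
    gas_spent_eth: float


# Each rule is (reason code, predicate returning True when the wallet FAILS it).
# Thresholds are illustrative assumptions only.
RULES = [
    ("MIN_TX_COUNT", lambda w: w.tx_count < 5),
    ("MIN_UNIQUE_CONTRACTS", lambda w: w.unique_contracts < 2),
    ("MIN_GAS_SPEND", lambda w: w.gas_spent_eth < 0.01),
]


def evaluate(wallets):
    """Split wallets into eligible / excluded, keeping every rule each excluded wallet failed."""
    eligible, excluded = [], {}
    for w in wallets:
        failed = [code for code, fails in RULES if fails(w)]
        if failed:
            excluded[w.address] = failed
        else:
            eligible.append(w.address)
    return eligible, excluded


if __name__ == "__main__":
    wallets = [
        WalletActivity("0xa", tx_count=40, unique_contracts=9, gas_spent_eth=0.5),
        WalletActivity("0xb", tx_count=30, unique_contracts=6, gas_spent_eth=0.004),
    ]
    eligible, excluded = evaluate(wallets)
    print(eligible)   # ['0xa']
    print(excluded)   # {'0xb': ['MIN_GAS_SPEND']}
```

Wallet `0xb` is active by every measure except gas spend, which is exactly the kind of exclusion that deserves a second look. A bare boolean would hide that; a reason code puts it on the record where it can be appealed.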

This is the practical case for trusted credentials in systems like SIGN. Not as a grand identity layer, not as a permanent dossier, but as a way to turn eligibility from an improvised narrative into a verifiable claim. A credential, done carefully, is a signed statement with a defined scope: this wallet passed a check by this issuer; this address corresponds to someone who completed a specific task; this participant belongs to a category under rules that were written down in advance. The signature matters because it moves the conversation from “who says?” to “who signed?” The scope matters because it limits what’s being asserted and how it can be reused.
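A credential in this sense can be sketched as a small canonical payload plus a signature over it. The `Claim` fields below are hypothetical and are not SIGN's actual schema. To stay stdlib-only, this example uses HMAC-SHA256, which means the verifier needs the issuer's key. A real deployment would use a public-key signature so anyone can check “who signed?” without holding a secret.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Claim:
    issuer: str      # who is making the statement
    subject: str     # wallet address the statement is about
    scope: str       # narrow, pre-defined meaning, e.g. "completed-testnet-task-3"
    issued_at: int   # unix timestamp


def _payload(claim: Claim) -> bytes:
    # Canonical encoding so issuer and verifier sign/check identical bytes.
    return json.dumps(asdict(claim), sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign(claim: Claim, issuer_key: bytes) -> str:
    # HMAC is a stdlib stand-in; a real issuer would use a public-key signature scheme.
    return hmac.new(issuer_key, _payload(claim), hashlib.sha256).hexdigest()


def verify(claim: Claim, signature: str, issuer_key: bytes) -> bool:
    # Constant-time comparison of the recomputed tag against the presented one.
    return hmac.compare_digest(sign(claim, issuer_key), signature)


if __name__ == "__main__":
    key = b"demo-issuer-key"  # hypothetical; never hard-code real keys
    claim = Claim("issuer.example", "0xabc", "completed-testnet-task-3", 1_700_000_000)
    sig = sign(claim, key)
    print(verify(claim, sig, key))    # True
    broader = Claim("issuer.example", "0xabc", "any-task", 1_700_000_000)
    print(verify(broader, sig, key))  # False
```

Because the scope is part of the signed payload, the same signature cannot be stretched to cover a broader claim: change `scope` and verification fails.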

You can feel the difference in the small details. Instead of a team manually merging three lists at 2 a.m., you can imagine a distribution pipeline that starts with claims issued over time, each with a clear provenance. Instead of storing sensitive user data in a shared drive because a vendor emailed an export, you can keep the personal details offchain and only use a proof that the check was completed. Instead of retroactively explaining why an address made it in, you can point to a credential that existed before the snapshot, with an issuer that can be audited and, if necessary, challenged.
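That pipeline can be reduced, at its core, to a filter over credentials. This sketch assumes signatures were already verified upstream, that `issued_at` and the snapshot time are comparable unix timestamps, and that each credential carries only a hash of the offchain check record (`evidence_hash`, a hypothetical field) rather than any personal data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Credential:
    issuer: str
    subject: str          # wallet address
    scope: str
    issued_at: int        # unix timestamp
    evidence_hash: str    # hash of the offchain check record; personal data stays with the issuer


def eligible_from_credentials(creds, snapshot_time, trusted_issuers, required_scope):
    """Return {address: [evidence hashes]} for trusted, in-scope credentials issued before the snapshot."""
    result = {}
    for c in creds:
        if c.issuer not in trusted_issuers:
            continue  # unknown issuers don't count
        if c.scope != required_scope:
            continue  # a credential only asserts what its scope says
        if c.issued_at >= snapshot_time:
            continue  # issued at or after the snapshot: can't justify inclusion retroactively
        result.setdefault(c.subject.lower(), []).append(c.evidence_hash)
    return result


if __name__ == "__main__":
    creds = [
        Credential("issuer-a", "0xAAA", "early-tester", 100, "h1"),
        Credential("issuer-a", "0xbbb", "early-tester", 250, "h2"),  # after snapshot
        Credential("issuer-x", "0xccc", "early-tester", 50, "h3"),   # untrusted issuer
        Credential("issuer-a", "0xddd", "other-scope", 50, "h4"),    # wrong scope
    ]
    print(eligible_from_credentials(creds, 200, {"issuer-a"}, "early-tester"))
    # {'0xaaa': ['h1']}
```

Each included address maps back to the evidence hashes that justified it, which answers “why was this address included?” without exposing anything the issuer collected.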

None of this eliminates the hard choices. It just makes them harder to hide.

The uncomfortable truth is that fairness is political, even when it’s expressed as code. Someone decides what behavior counts as “real.” Someone decides which communities matter. Someone decides whether geographic restrictions apply, whether employees are excluded, whether market makers are treated differently, whether early testers should be rewarded even if they never became active users. These decisions can be defensible and still feel cruel at the edges. A good system doesn’t pretend otherwise. It records the decision and makes it legible.

Credentials introduce their own risks, and it’s better to name them than to wave them away. If one issuer becomes too central, the ecosystem starts to look like permissioning dressed up as neutrality. If credentials become long-lived and linkable, privacy erodes in slow motion, the way it often does—one “reasonable” reuse at a time. If the easiest credentials to issue are the ones tied to invasive checks, teams will default to them under pressure, and users will pay the cost. An honest audit trail has to include these tradeoffs: what was proven, what wasn’t, and why.
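Some of these risks can be narrowed mechanically. Here is a minimal sketch assuming a hypothetical credential format with an `audience` (the one program it may be used for) and a short `expires_at`. The verifier refuses reuse outside that context and reports exactly what failed, which is the “what was proven, what wasn’t” part expressed in code. It does nothing about issuer centralization, which is a governance problem rather than a verification one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopedCredential:
    subject: str
    scope: str        # what was proven
    audience: str     # the single program this credential may be used for
    expires_at: int   # unix timestamp; short lifetimes limit silent reuse


def check_use(cred, audience, required_scope, now):
    """Return a list of problems; an empty list means the credential may be used here."""
    problems = []
    if cred.audience != audience:
        problems.append(f"issued for {cred.audience!r}, not {audience!r}")
    if cred.scope != required_scope:
        problems.append(f"proves {cred.scope!r}, not {required_scope!r}")
    if now >= cred.expires_at:
        problems.append("expired")
    return problems


if __name__ == "__main__":
    cred = ScopedCredential("0xabc", "early-tester", "drop-2024-q1", expires_at=1_000)
    print(check_use(cred, "drop-2024-q1", "early-tester", now=500))  # []
    print(check_use(cred, "other-drop", "early-tester", now=1_500))
```

Returning reasons instead of a boolean means a rejected reuse leaves a record of why, which fits the same audit-trail discipline as the eligibility rules themselves.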

There’s also the gritty operational side that doesn’t fit in announcement posts. Token delivery isn’t a single event. It’s vesting schedules, lockups, employees who leave and need their allocations updated, investors who change custody providers, lost keys, compromised wallets, and the inevitable support queue where someone asks for an exception that might be legitimate. Every exception is a crack in the fairness story unless it’s handled with the same discipline as the main rule set. This is where credentials can be more than a gate. They can be a record of ongoing eligibility—revocable when circumstances change, inspectable when disputes arise, narrow enough that they don’t become a surveillance mechanism.
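Handling exceptions with the same discipline can start with something as plain as an append-only log where each entry commits to the hash of the one before it. The sketch below is hypothetical, class and field names included. It is only tamper-evident if the latest hash is published somewhere the team can’t quietly rewrite, for example onchain or in a public announcement.

```python
import hashlib
import json
import time


def _entry_hash(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class ExceptionLog:
    """Append-only log; each entry includes the previous entry's hash (a simple hash chain)."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, action, address, reason, actor, timestamp=None):
        body = {
            "action": action,   # e.g. "include", "revoke", "reassign"
            "address": address,
            "reason": reason,
            "actor": actor,
            "timestamp": int(time.time()) if timestamp is None else timestamp,
            "prev": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        digest = _entry_hash(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self):
        """Recompute every hash; editing any past entry breaks the chain."""
        prev = self.GENESIS
        for e in self.entries:
            body = {k: v for k, v in e.items() if k != "hash"}
            if body["prev"] != prev or _entry_hash(body) != e["hash"]:
                return False
            prev = e["hash"]
        return True

    def revoked(self):
        """Addresses whose most recent logged action is a revocation."""
        latest = {}
        for e in self.entries:
            latest[e["address"]] = e["action"]
        return {addr for addr, act in latest.items() if act == "revoke"}


if __name__ == "__main__":
    log = ExceptionLog()
    log.append("include", "0xabc", "lost key; allocation moved to new wallet", "support", timestamp=1)
    log.append("revoke", "0xdef", "wallet reported compromised", "support", timestamp=2)
    print(log.verify())   # True
    print(log.revoked())  # {'0xdef'}
```

Revocation here is just another logged action, so an address can be revoked after a key compromise and the reason stays inspectable when a dispute comes up later.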

If you’ve ever watched a team scramble to answer basic questions—Why was this address included? Which dataset did you use? When did you apply the filter?—you understand what’s at stake. Without an audit trail, the system rewards confidence and punishes nuance. The loudest people win. The careful ones spend their time apologizing.

Fair token delivery, in the end, is not a feeling you can radiate into existence. It’s a discipline. It’s the mundane insistence that if you’re going to move value at scale, you should be able to show your work: the rulebook, the receipts, the method, the changes, the limits. That doesn’t guarantee everyone will be happy. It does something quieter and more important.