There’s a subtle shift you start to notice after spending enough time in certain games. You stop thinking about rewards directly. Not because they disappear, but because they stop being the main driver. You log in, do a few things, come back later. It feels less like optimization and more like habit. That’s rare in GameFi.

I didn’t expect that from @Pixels. At first, I thought it was just another farming loop with better UX. Same structure underneath. Do actions, earn tokens, refine your route, repeat. Efficient players win, everyone else fades out. That pattern has played out too many times to expect anything different.

But something didn’t quite fit. Players weren’t collapsing into a single optimal strategy. Some were slower, less efficient, even inconsistent. And yet, they stayed. That usually doesn’t happen in a pure extraction system. It suggests the system isn’t just rewarding activity. It’s shaping behavior in a more deliberate way.

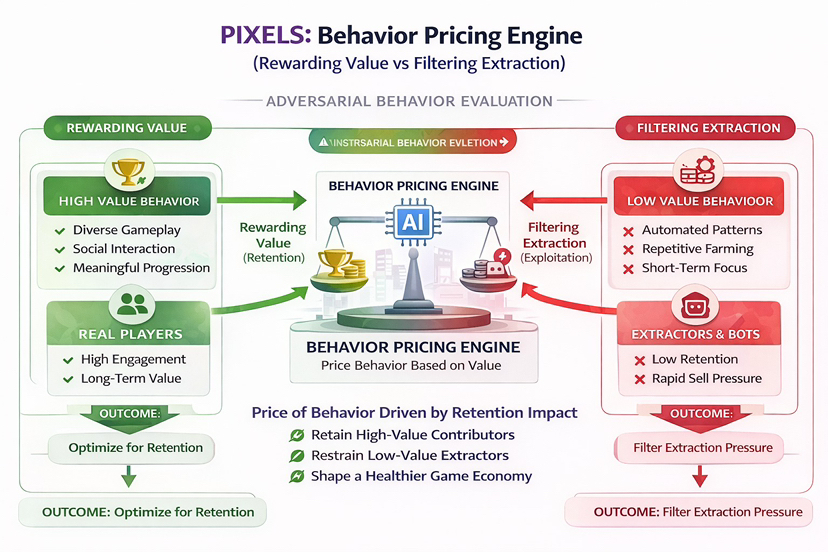

Most GameFi economies fail at the incentive layer. Not because gameplay is weak, but because rewards are static. Fixed outputs turn every action into a calculation. And once that happens, the system trains players to extract. Bots and optimized users don’t break the economy; they simply execute it better than everyone else.

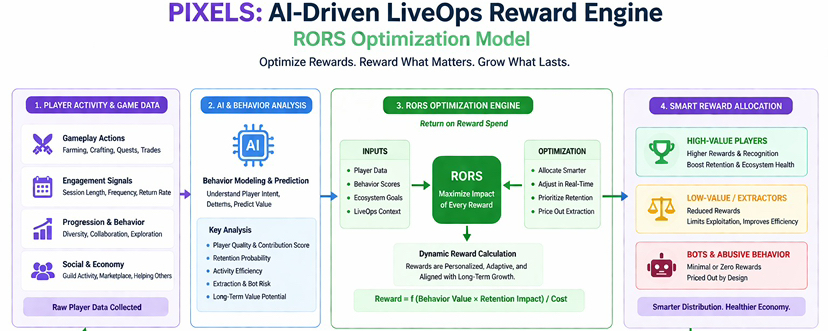

Pixels approaches this differently. It treats rewards less like emissions and more like capital that needs to be deployed with intent. The team calls this RORS, Return on Reward Spend. It’s not about how much you give out. It’s about how effectively each reward improves retention, engagement, and long-term value.
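To make the idea concrete, here is a minimal Python sketch of RORS as a simple ratio: value attributed to a reward campaign divided by what the campaign cost. The post doesn’t spell out how Pixels actually measures it, so the `RewardCampaign` type, its fields, and the numbers below are hypothetical, and “incremental value” is assumed to mean something like extra revenue or retention measured against a control group.

```python
from dataclasses import dataclass


@dataclass
class RewardCampaign:
    # Hypothetical fields: the real RORS inputs are not described in the post.
    name: str
    reward_spend: float       # value of rewards handed out (e.g. in USD)
    incremental_value: float  # extra value attributed to the campaign (assumed: vs. a control group)


def rors(campaign: RewardCampaign) -> float:
    """Return on Reward Spend: value generated per unit of reward spent."""
    if campaign.reward_spend <= 0:
        raise ValueError("reward_spend must be positive")
    return campaign.incremental_value / campaign.reward_spend


# Made-up campaigns for illustration.
campaigns = [
    RewardCampaign("daily_quest_bonus", reward_spend=1000.0, incremental_value=1500.0),
    RewardCampaign("flat_farming_payout", reward_spend=1000.0, incremental_value=400.0),
]

for c in campaigns:
    print(f"{c.name}: RORS = {rors(c):.2f}")
```

In this framing, a ratio above 1 means a campaign returned more than it paid out, and a ratio well below 1 is a candidate for cutting. Running it prints `daily_quest_bonus: RORS = 1.50` and `flat_farming_payout: RORS = 0.40`.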

At its core, this is a data-driven LiveOps engine. Not a fixed economy, but a system that continuously adjusts. Player behavior feeds into the model. The model reallocates rewards. Rewards shift behavior again. It’s not static design. It’s ongoing optimization happening in real time.

That changes how you think about anti-bot systems. It’s not really about detecting bad actors perfectly. The system isn’t asking, “Is this a bot?” It’s asking, “Is this behavior worth paying for?” In an adversarial environment where players constantly optimize, that question matters more than identity. The system doesn’t eliminate extractors; it prices them out.

Not all players are treated equally, and that’s intentional. The system implicitly segments behavior. Some players generate long-term value. Others cycle through quickly. Instead of blocking one and rewarding the other outright, the system adjusts reward efficiency. Over time, value flows toward behavior that sustains the ecosystem.
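One way to picture “adjusting reward efficiency” instead of blocking players is a fixed budget split across behavior segments in proportion to how much value each segment returns per reward. This is a hypothetical rule, not Pixels’ published allocator: the segment names, the RORS figures, and the proportional split are all assumptions for illustration.

```python
def reallocate(budget: float, segment_rors: dict[str, float]) -> dict[str, float]:
    """Split a fixed reward budget across segments in proportion to measured RORS.

    Hypothetical allocation rule, for illustration only.
    """
    if not segment_rors:
        raise ValueError("need at least one segment")
    # Segments with zero or negative return get no weight.
    weights = {seg: max(r, 0.0) for seg, r in segment_rors.items()}
    total = sum(weights.values())
    if total == 0:
        # No useful signal yet (e.g. thin early data): spread the budget evenly.
        even = budget / len(weights)
        return {seg: even for seg in weights}
    return {seg: budget * w / total for seg, w in weights.items()}


# Hypothetical segments and RORS values measured over the last period.
measured = {
    "long_term_builders": 1.6,
    "casual_returners": 0.9,
    "fast_extractors": 0.1,
}

allocation = reallocate(10_000.0, measured)
for segment, amount in allocation.items():
    print(f"{segment}: {amount:,.0f}")
```

Nobody gets banned here; the low-return segment simply receives a much smaller share (roughly 4% of the budget in this example), which is the “pricing out” idea in miniature. In a live system you would rerun this each period with freshly measured RORS, so the allocation keeps moving as players adapt.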

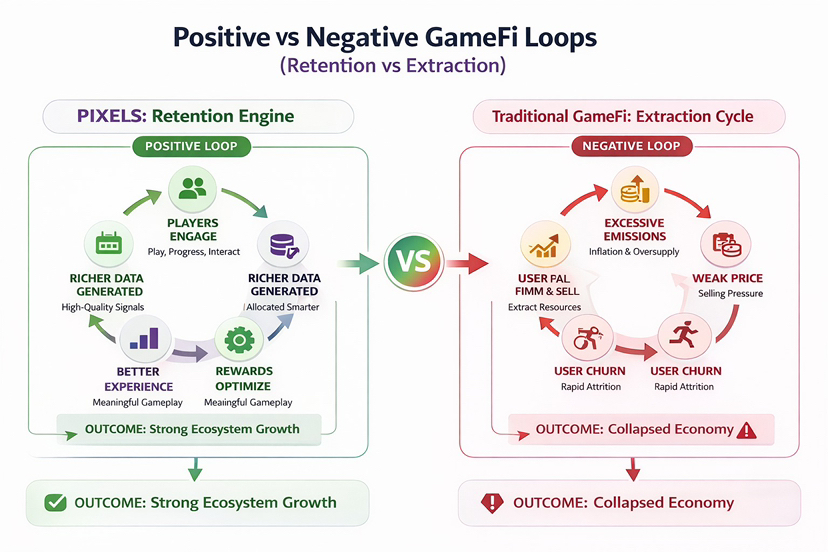

You can map the loop simply. Players generate data. Data improves reward allocation. Better rewards improve the experience. A better experience retains more players. More players generate better data. If it works, the system compounds. If it fails, it falls back into the familiar loop: users farm, sell, the price drops, users leave.

What makes this more interesting is how it scales. If this model extends across multiple games, the data advantage compounds. More environments, more behaviors, more signals. That feeds into sharper reward allocation. The publishing flywheel starts to form: games bring users, users generate data, data improves rewards, better rewards attract more users.

But none of this removes the core difficulty. Systems like this need scale to function properly. Early on, the data is thin. Signal is noisy. It’s harder to distinguish between a genuinely engaged player and a highly optimized one. And in a system that constantly adjusts, players will adapt just as quickly.

That creates a moving equilibrium. The system evolves. Players evolve with it. Optimization doesn’t disappear; it just becomes harder to sustain. The question is whether the system can keep redirecting incentives faster than users can exploit them. That’s not a solved problem. It’s an ongoing contest.

The token sits right in the middle of this. $PIXEL can’t just be emission. If it is, then even an optimized system eventually feeds into inflation pressure. Supply expands, demand struggles to keep up. Without strong sinks and real in-game utility, efficiency only delays the outcome. It doesn’t change it.
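The “efficiency only delays the outcome” point is plain arithmetic. Here is a toy supply projection with made-up numbers (these are not $PIXEL’s actual emission or sink figures): a more efficient reward engine lowers emissions, but circulating supply still grows every period unless sinks absorb at least as much as is emitted.

```python
def project_supply(initial: float, emitted: float, sunk: float, periods: int) -> list[float]:
    """Circulating supply after each period, given flat emissions and sinks per period."""
    supply = [initial]
    for _ in range(periods):
        # Supply can't go below zero even if sinks outpace emissions.
        supply.append(max(supply[-1] + emitted - sunk, 0.0))
    return supply


# Hypothetical per-period (emitted, sunk) amounts.
scenarios = {
    "wasteful emissions, weak sinks": (50_000, 10_000),
    "efficient emissions, weak sinks": (30_000, 10_000),
    "efficient emissions, matching sinks": (30_000, 30_000),
}

for label, (emitted, sunk) in scenarios.items():
    final = project_supply(1_000_000, emitted, sunk, periods=12)[-1]
    print(f"{label}: {final:,.0f}")
```

After 12 periods this prints 1,480,000, 1,240,000, and 1,000,000: efficiency halves the growth rate, but only the sink side actually stops it.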

Which brings everything back to retention. Not short-term spikes or reward bursts, but actual behavior over time. Do players come back when rewards shift? Do they engage when there’s no obvious optimal path? Because utility only works if someone shows up again tomorrow.

So #pixel doesn’t really look like a typical GameFi project when you zoom out. It looks like a live reward engine operating under constant pressure. A system trying to allocate capital intelligently in an environment where every participant is trying to optimize it.

If behavior holds, everything else follows.