while scanning the reward flows last night

While looking through the Ronin block explorer sometime after midnight, I wasn't expecting Stacked to be the thing that made me pause. I was following Pixel token flows, cross-referencing withdrawal patterns since the platform went fully live on Ronin on March 26, 2026. Standard routine, honestly. But something in the behavior data caught my eye and I couldn't put it down.

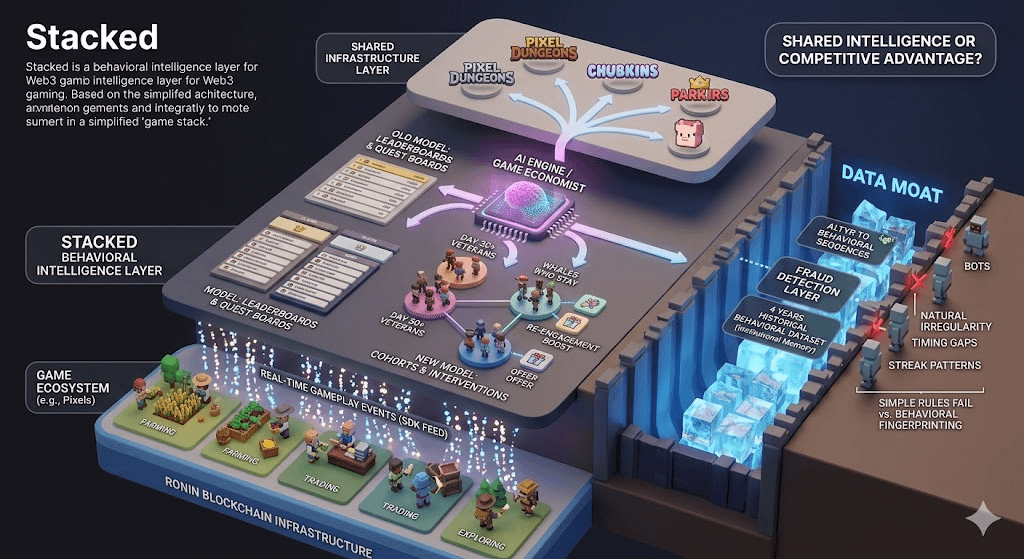

Most reward programs in web3 gaming are surface mechanics: complete a quest, earn a token, repeat. The loop is simple, predictable, and, as a result, instantly farmable. You've seen it play out in every P2E cycle. Quest boards fill up with identical behavior patterns, bots arrive and dilute the rewards, and eventually the reward pool collapses into something unsustainable. It's almost a rite of passage at this point.

What Stacked is doing is structurally different, and I don't think that difference is being talked about enough.

The platform isn't a quest board. It's closer to a live behavioral intelligence layer embedded directly into the game stack. Studios feed gameplay events into the SDK in real time (not aggregated daily summaries, but actual granular events as they happen), and the AI engine on the other end starts building player cohorts, not just leaderboards.

Actually — I want to sit with that for a second. Cohorts, not leaderboards. That's the shift.
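
To make that distinction concrete for myself, I sketched it in a few lines of Python. This is my own toy illustration, not Stacked's SDK or data model: the player fields, the cohort names, and the 30-day thresholds are all assumptions I made up for the example.

```python
from collections import defaultdict

# Hypothetical per-player summaries derived from raw gameplay events.
players = [
    {"id": "p1", "score": 900, "tenure_days": 45, "days_since_spend": 40},
    {"id": "p2", "score": 300, "tenure_days": 50, "days_since_spend": 2},
    {"id": "p3", "score": 700, "tenure_days": 5, "days_since_spend": 1},
    {"id": "p4", "score": 100, "tenure_days": 60, "days_since_spend": 35},
]

def leaderboard(players):
    """Rank everyone on a single number, highest first."""
    ranked = sorted(players, key=lambda p: p["score"], reverse=True)
    return [p["id"] for p in ranked]

def cohort_of(player):
    """Assign a behavioral label; thresholds are illustrative only."""
    if player["tenure_days"] >= 30:
        if player["days_since_spend"] > 30:
            return "lapsed_veteran"
        return "active_veteran"
    return "newcomer"

def cohorts(players):
    """Group player ids by behavioral label instead of ranking them."""
    groups = defaultdict(list)
    for p in players:
        groups[cohort_of(p)].append(p["id"])
    return dict(groups)

print(leaderboard(players))
print(cohorts(players))
```

The leaderboard puts p1 at the top and p4 at the bottom. The cohort view puts them in the same bucket, because both are long-tenured players who stopped spending. That second grouping is the one you can actually design an offer around.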

the contrast that stuck with me

Most gaming platforms hand studios a dashboard with aggregate numbers. DAU, session length, D7 retention. Broad strokes. A studio operator still needs a data scientist to translate those numbers into a specific intervention: who gets rewarded, for what, when.

Stacked collapses that translation layer. Studio operators can query the AI game economist in plain language. "Why are my Day 30+ veterans going quiet?" or "What separates whales who stay from whales who churn?" The system parses its behavioral dataset and surfaces cohort-level answers, then lets teams design and deploy targeted offers without the usual handoffs between departments.

I'll be real — when I first read this, I was skeptical. "AI-powered" has become something close to white noise in this space. But then I saw the internal numbers Pixels shared from their own ecosystem: when the AI engine was pointed at veteran players who hadn't spent in over 30 days and given personalized re-engagement offers, they recorded a 178% increase in conversion to spend and a 129% increase in active days. That's not a rounding error. That's the system working the way it's supposed to.
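
One thing worth keeping straight is what a percentage increase above 100% means in absolute terms. Here is a quick sanity check, with the caveat that Pixels did not publish the baselines as far as I know. The 5% conversion rate and the 4 active days below are numbers I assumed purely to show the arithmetic; only the 178% and 129% figures come from what they shared.

```python
def apply_percent_increase(baseline, pct_increase):
    """Return the new value after a relative increase given in percent."""
    return baseline * (1 + pct_increase / 100)

# Hypothetical baselines, assumed for illustration only.
baseline_conversion = 0.05   # assumed: 5% of lapsed veterans spend
baseline_active_days = 4.0   # assumed: 4 active days per period

new_conversion = apply_percent_increase(baseline_conversion, 178)
new_active_days = apply_percent_increase(baseline_active_days, 129)

print(round(new_conversion, 4))
print(round(new_active_days, 2))
```

Under those assumed baselines, a 178% increase takes conversion from 5% to roughly 13.9% (2.78 times the baseline), and a 129% increase takes active days from 4 to about 9.2 (2.29 times). The real absolute numbers depend entirely on baselines I don't have.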

Pixels has the behavioral history behind $25 million in revenue and one million daily active users feeding this thing. That's what makes those numbers real, not theoretical. It's been running, adjusting, and getting corrected on live data for four years.

hmm… the data moat nobody's leading with

Here's the thing I think is genuinely underappreciated. The AI economist angle is compelling, sure. But the actual moat is older than the AI. It's the behavioral dataset underneath it.

Think about what four years of operating a live P2E economy at scale actually generates. You've seen every exploit pattern. Every bot signature. Every way a Taskboard gets gamed. Every behavioral fingerprint that precedes a player churning versus one that precedes a player spending. That dataset is what trained the fraud detection layer inside Stacked. And fraud detection in web3 gaming isn't a simple problem — it's a cat-and-mouse game where the attack vectors mutate constantly.

Stacked's anti-bot architecture works differently from most because it's not just filtering on wallet-level anomalies. It's filtering on behavioral sequences. A bot can mimic a wallet. It struggles to mimic the natural irregularity of a real human progressing through a game economy — the timing gaps, the spending decisions, the streak patterns with real-life friction baked in. The system knows what genuine progression looks like because it's seen millions of instances of it.
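
I don't know how Stacked actually scores behavioral sequences, so treat this as my own minimal sketch of the general idea and nothing more. It measures one crude signal, the irregularity of the gaps between a player's actions, using the coefficient of variation. The function name and the sample timestamps are hypothetical.

```python
from statistics import mean, pstdev

def gap_irregularity(timestamps):
    """Coefficient of variation of the gaps between consecutive events.

    Values near 0 mean metronome-like timing; larger values mean the
    uneven rhythm you would expect from a person with a life.
    """
    gaps = [b - a for a, b in zip(timestamps, timestamps[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return 0.0
    return pstdev(gaps) / mean(gaps)

# Hypothetical action timestamps in seconds.
bot_like = [0, 60, 120, 180, 240, 300]        # one action every 60s
human_like = [0, 45, 400, 430, 3600, 3700]    # bursts, then a long break

print(gap_irregularity(bot_like))
print(gap_irregularity(human_like))
```

The scripted sequence scores exactly 0 and the human-looking one scores well above 1. A real system would need far more than a single statistic, since a bot can add random delays, which is presumably why the spending decisions and streak patterns matter too.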

Compare that to a studio trying to bootstrap a reward system from zero. They'd write basic rules — flag wallets with identical timing, penalize rapid task completion. Competent bots bypass those in weeks. Stacked arrives with the equivalent of four years of adversarial training already done. That's not a feature you can replicate by integrating an SDK. It's institutional memory crystallized into logic.
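
Here is roughly what those basic rules look like when a studio writes them from scratch, and why they age badly. This is a sketch of the two naive rules named above, with a made-up data shape and a made-up 5-second threshold. It is not anyone's production logic.

```python
def naive_flags(completions, min_seconds=5):
    """Flag wallets using two simple rules.

    completions: {wallet: [(task_start, task_end), ...]} in seconds.
    """
    flagged = set()

    # Rule 1: penalize rapid task completion.
    for wallet, tasks in completions.items():
        if any(end - start < min_seconds for start, end in tasks):
            flagged.add(wallet)

    # Rule 2: flag wallets whose task timing is identical to another's.
    seen = {}
    for wallet, tasks in completions.items():
        key = tuple(tasks)
        if key in seen:
            flagged.update({wallet, seen[key]})
        else:
            seen[key] = wallet

    return flagged

# Hypothetical wallets and timings.
completions = {
    "bot_a": [(0, 2), (10, 12)],           # finishes tasks in 2 seconds
    "bot_b": [(100, 130), (200, 230)],
    "bot_c": [(100, 130), (200, 230)],     # exact copy of bot_b's timing
    "bot_d": [(101, 137), (203, 229)],     # same script plus small jitter
    "human": [(50, 95), (900, 1010)],
}

print(sorted(naive_flags(completions)))
```

The first three bots get caught. The fourth, which is the same script with a few seconds of random jitter, passes both rules and looks exactly as clean as the human. That is the whole bypass, and it takes an afternoon to write.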

There was a moment during the Q2 2025 blockchain gaming shutdowns — when dozens of Web3 games folded from low retention and unsustainable reward loops — where the absence of something like this became painfully clear. Games that had no behavioral context for their reward spend were essentially spraying tokens at anyone who showed up, bots included. The economics collapsed before the content could prove itself.

still pondering what this means going forward

Stacked is currently live inside Pixels, Pixel Dungeons, and Chubkins. That's the beta surface. The bigger implication — if the studio integrations open up at scale — is that behavioral intelligence becomes a shared infrastructure layer rather than a competitive advantage you have to build yourself.

That changes something fundamental about the cost structure of launching a Web3 game. Right now, every studio that wants to run a sustainable reward economy has to either hire a data science team or accept that their reward spend is leaking. Stacked positions itself as the third option: a system that already knows what good looks like.

I'm genuinely uncertain how quickly adoption scales outside the Pixels ecosystem. The SDK integration handles the technical side — but trust is slower to build. Studios hand Stacked visibility into their player event data. That's sensitive. Pixels has earned that trust by building in public for four years. A new partner studio needs to decide whether that track record transfers.

The reward-behavior linkage is also worth watching carefully. Right now the AI economist suggests experiments. Studios still run them and approve payouts. If that decision-making shifts more toward automation over time, the question of accountability gets more complex. Who owns a bad reward call made by an AI agent? Hmm. That line hasn't been tested yet.

And maybe the most interesting open question isn't about the platform at all. It's about what happens to the behavioral dataset itself as more studios integrate. Does cross-game behavioral data compound the AI's accuracy? If a player's $PIXEL engagement pattern in Chubkins helps predict their churn risk in a completely different game on the same platform — what does that mean for how studios compete for the same users?

I don't have a clean answer to that. But I keep thinking about it.