Every cycle rediscovers speed as if it were invention. Faster blocks. Higher TPS. Sub-second finality marketed like a moon landing. The vocabulary rotates, the charts stretch vertically, and the applause arrives on schedule. But beneath the spectacle, latency remains poorly understood.

Speed is not a headline. It is a discipline.

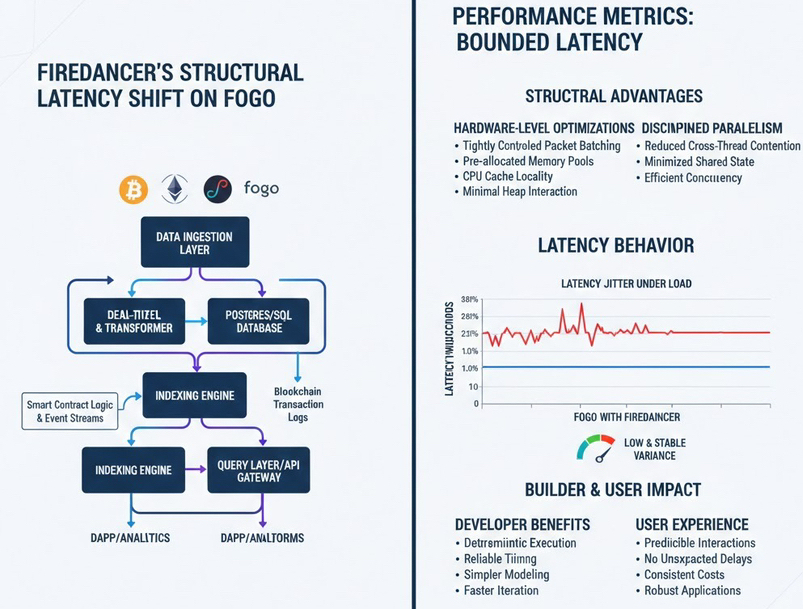

When I began looking closely at Fogo’s integration of Firedancer, I expected familiar territory: benchmark slides, peak throughput screenshots, carefully curated comparisons. What stood out instead was less theatrical and far more structural. The ambition was not merely to be fast. It was to make latency behave.

That distinction changes the conversation.

Most chains treat latency as a competitive metric. Lower is better. Faster wins. But raw speed without determinism is ornamental. A system can produce blocks quickly and still feel unstable under pressure. Execution may be rapid in isolation yet inconsistent in practice.

Under load, variance reveals itself. Networking jitter compounds. Memory allocation patterns introduce micro-stalls. Queue contention surfaces at the worst moment. The advertised number drifts.

Firedancer’s promise inside Fogo is not simply reduced latency. It is bounded latency. The structural shift is not acceleration alone; it is predictability at high speed.

To understand why this matters, consider something mundane but consequential: packet processing and memory behavior.

Traditional validator clients often rely heavily on kernel networking stacks and dynamic memory allocation. Packets arrive, buffers are allocated, data is copied, structures are instantiated, and eventually freed. Under burst load, that dance becomes expensive. Cache misses accumulate. Memory fragmentation increases. Latency jitter follows.

Firedancer approaches this differently.

It uses tightly controlled packet batching and pre-allocated memory pools, reducing dynamic allocation overhead during critical execution paths. By keeping hot data structures aligned with CPU cache locality and minimizing unpredictable heap interactions, it narrows the variance window at the hardware level.
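To make the idea concrete, here is a minimal sketch of a pre-allocated buffer pool with batch ingestion. It is not Firedancer's code: `BufferPool`, `ingest_batch`, and the slot sizes are hypothetical names chosen for illustration, and Python still allocates small objects behind the scenes. The point is only the shape: one slab reserved up front, slots recycled through a free list, and a hot path that refuses to grow.

```python
class BufferPool:
    """Fixed set of equal-size slots carved out of one slab allocated up front."""

    def __init__(self, count: int, slot_size: int):
        self.slot_size = slot_size
        # The only large allocation; every slot is a window into this slab.
        self._view = memoryview(bytearray(count * slot_size))
        self._free = list(range(count))  # indices of slots not currently in use

    @property
    def available(self) -> int:
        return len(self._free)

    def acquire(self):
        """Return a free slot index, or None when the pool is exhausted."""
        return self._free.pop() if self._free else None

    def release(self, slot: int) -> None:
        self._free.append(slot)

    def write(self, slot: int, payload: bytes) -> int:
        start = slot * self.slot_size
        self._view[start:start + len(payload)] = payload
        return len(payload)

    def read(self, slot: int, length: int) -> bytes:
        start = slot * self.slot_size
        return bytes(self._view[start:start + length])


def ingest_batch(pool: BufferPool, packets):
    """Store a batch of packets in pooled slots; return (slot, length) pairs."""
    stored = []
    for payload in packets:
        if len(payload) > pool.slot_size:
            continue  # oversized packet: drop it rather than grow a buffer
        slot = pool.acquire()
        if slot is None:
            break  # pool exhausted: stop and let the caller apply backpressure
        stored.append((slot, pool.write(slot, payload)))
    return stored
```

When the pool runs dry, the sketch stops accepting work instead of allocating more. That trade, bounded capacity in exchange for bounded behavior, is the design choice the paragraph above describes.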

This is not glamorous engineering. It is disciplined engineering.

And when packet ingress, signature verification, and transaction scheduling avoid unnecessary cache thrashing or allocator contention, latency doesn’t just drop; it stabilizes.

Parallel execution is frequently advertised as the answer to scalability. More cores, more threads, more simultaneous work. But concurrency without control introduces race conditions, synchronization overhead, and scheduling unpredictability.

Firedancer’s parallelism is restrained. Workloads are decomposed with care. Responsibilities are isolated to reduce cross-thread contention.
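One generic way to isolate responsibilities is to partition work so that each worker owns its state outright and never shares it. The sketch below illustrates that pattern under stated assumptions; it does not describe Firedancer's actual scheduler. The names `shard_for`, `apply_shard`, and `apply_partitioned` are hypothetical, and a transfer is reduced to an `(account, delta)` pair.

```python
from concurrent.futures import ThreadPoolExecutor
from zlib import crc32


def shard_for(account: str, num_shards: int) -> int:
    """Stable account-to-shard mapping (crc32 gives the same value on every run)."""
    return crc32(account.encode("utf-8")) % num_shards


def apply_shard(transfers):
    """Apply one shard's transfers to state that only this worker touches."""
    balances = {}
    for account, delta in transfers:
        balances[account] = balances.get(account, 0) + delta
    return balances


def apply_partitioned(transfers, num_shards=4):
    # Route each transfer to exactly one shard, preserving arrival order within it.
    shards = [[] for _ in range(num_shards)]
    for account, delta in transfers:
        shards[shard_for(account, num_shards)].append((account, delta))
    # Workers share nothing, so no locks are needed.
    with ThreadPoolExecutor(max_workers=num_shards) as pool:
        partials = list(pool.map(apply_shard, shards))
    merged = {}
    for partial in partials:
        merged.update(partial)  # key sets are disjoint by construction
    return merged
```

Because an account always lands in the same shard, the result does not depend on how the threads happen to be scheduled. Contention is designed out rather than arbitrated.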

There’s an irony here. In a space obsessed with infinite scaling, the real breakthrough is often reducing friction rather than multiplying activity.

Inside Fogo, this restraint matters. Execution pipelines remain lean. Validation ordering remains coherent. Micro-delays don’t cascade into systemic jitter.

In distributed systems, networking is frequently the hidden destabilizer. Packet loss, kernel queue delays, context-switching overhead: small uncertainties that aggregate into observable delay.

By tightening packet handling paths and reducing dependency on heavyweight abstractions, Firedancer compresses that uncertainty band. Networking becomes less theatrical and more mechanical.

Ultra-low latency that occasionally spikes is still volatility. Ultra-low latency that remains within narrow, repeatable bounds becomes infrastructure.
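The difference shows up as soon as latency is summarized by its tail rather than its average. The sketch below uses invented sample values, not measurements from Fogo or any other chain, and the helper names `percentile` and `latency_profile` are hypothetical. It compares a spiky distribution against a steady one.

```python
import statistics


def percentile(samples, pct: int):
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    ordered = sorted(samples)
    rank = (pct * len(ordered) + 99) // 100  # integer ceiling of pct% of n
    return ordered[max(rank, 1) - 1]


def latency_profile(samples_ms):
    """Summarize a latency sample by its center, its tail, and its spread."""
    return {
        "mean": statistics.fmean(samples_ms),
        "p50": percentile(samples_ms, 50),
        "p99": percentile(samples_ms, 99),
        "max": max(samples_ms),
        "jitter": statistics.pstdev(samples_ms),  # population standard deviation
    }


# Invented samples in milliseconds, for illustration only.
spiky = [1.0] * 98 + [40.0, 60.0]   # usually fast, occasionally very slow
steady = [2.0] * 50 + [2.2] * 50    # slightly slower, tightly bounded

spiky_profile = latency_profile(spiky)
steady_profile = latency_profile(steady)
```

In this made-up data the spiky system wins on mean and median, yet its 99th percentile is 40 ms against 2.2 ms for the steady one. Anything that has to budget for the slow case cares about the second number.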

Many blockchain systems treat hardware as interchangeable. Nodes run wherever they can, and performance variability is absorbed as an environmental tax.

Firedancer acknowledges hardware reality. It collaborates with CPU architecture instead of abstracting away from it. Cache locality is respected. Memory bandwidth is considered. Data movement is minimized.

Physics does not negotiate.

On Fogo, this manifests as execution pathways that feel contained. Transactions travel a predictable route from ingress to validation without unnecessary detours. Less wandering means fewer surprises.

The more subtle consequence of unpredictable latency is psychological. Developers who cannot trust timing learn to expect the worst case.

Over time, that expectation shapes architecture. More buffers. Wider margins. Simplified interactions. Systems padded against instability.

Working inside a low-variance environment shifts that instinct. If latency holds within tight bounds, modeling becomes direct. UX stops assuming worst-case swings.

Ultra-low latency reduces friction. Predictable latency reduces anxiety.

It is tempting to reduce Firedancer’s contribution to a throughput headline. But throughput without integrity is noise. High transaction counts mean little if execution timing fluctuates unpredictably.

Fogo’s target appears more measured: high performance within controlled variance, with deterministic execution sequencing. Deterministic latency allows systems to synchronize safely at speed.

Watching the broader market celebrate TPS milestones while ignoring latency variance has always felt slightly surreal. It resembles applauding a car’s top speed without examining steering stability.

By tightening packet paths, controlling memory allocation, preserving cache locality, and minimizing variance across execution pipelines, Firedancer enables Fogo to pursue speed without improvisation.

Latency ceases to drift and becomes governed.

And once speed becomes predictable rather than episodic, maturity stops being a promise and becomes infrastructure.