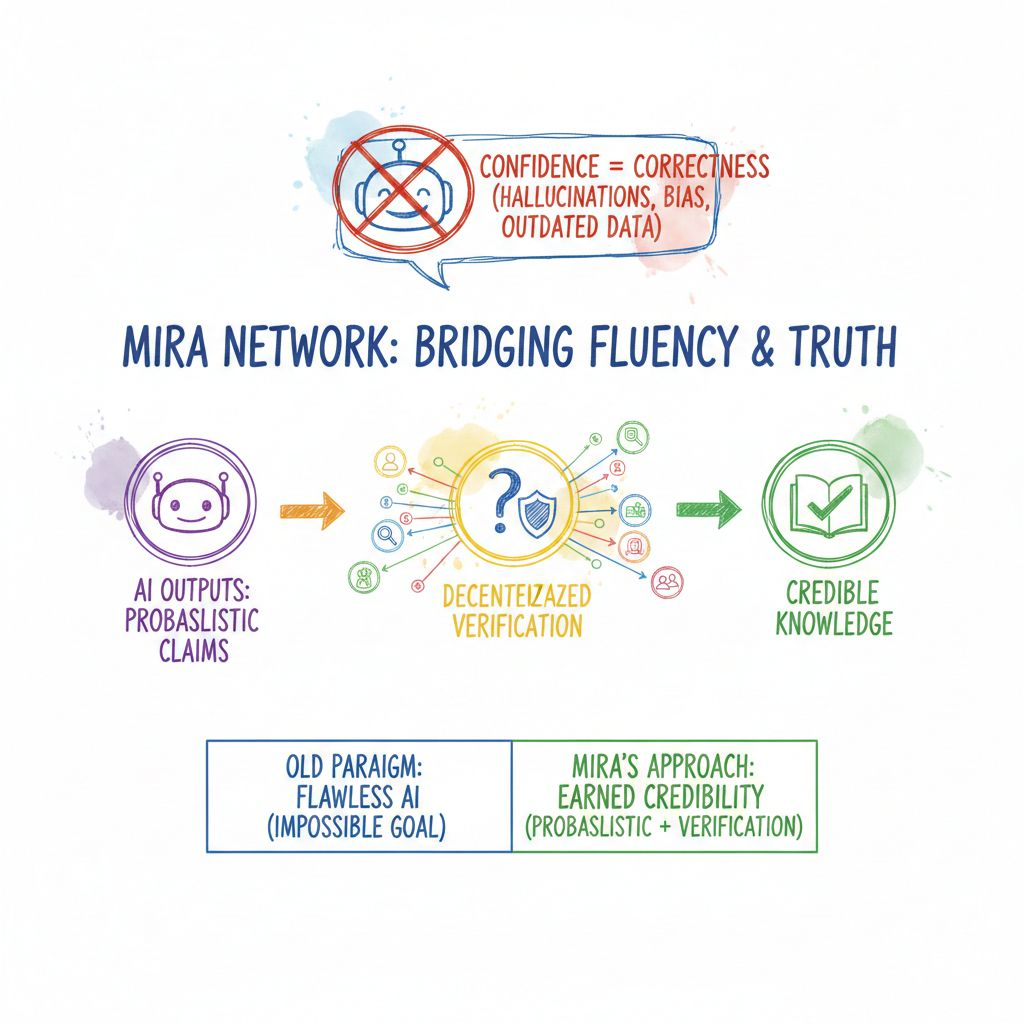

Artificial intelligence has become incredibly good at sounding right. It can explain complex legal issues, summarize medical studies, generate investment insights, and even hold convincing conversations. But anyone who works closely with AI knows the uncomfortable truth: confidence is not the same as correctness. Models can hallucinate facts, mix outdated data with current information, or subtly reinforce bias — all while sounding completely certain.

That gap between fluency and truth is where Mira Network positions itself.

Instead of trying to build a flawless AI model — an almost impossible task — Mira takes a different approach. It accepts that AI outputs are probabilistic by nature. They are educated guesses based on patterns, not guaranteed truths. So rather than trusting a single model, Mira turns AI responses into claims that must earn their credibility through decentralized verification.

Think of it less as “fact-checking AI” and more as building a system where truth emerges from coordination.

When an AI produces a complex answer, Mira’s system breaks it down into smaller, testable statements. Each statement becomes a verification task. These tasks are distributed across a network of independent validator nodes. The important detail here is independence. Different nodes may use different models, data sources, or validation techniques. This diversity reduces the risk that one shared blind spot becomes a systemic failure.
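The decomposition-and-voting flow above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Mira's implementation. Claims are split naively at sentence boundaries. Each validator is modeled as a plain function standing in for its own model or data source. A claim is accepted only when at least two-thirds of validators agree. The names `split_into_claims`, `verify_claim`, and `verify_answer`, the verdict labels, and the 2/3 threshold are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, Dict, List

# Hypothetical verdict labels; the real protocol's vocabulary is not specified.
VALID, INVALID, UNCERTAIN = "valid", "invalid", "uncertain"


@dataclass
class Validator:
    """A hypothetical node; `check` stands in for its own model, data, or method."""
    name: str
    check: Callable[[str], str]


def split_into_claims(answer: str) -> List[str]:
    """Naive decomposition (an assumption): one claim per sentence."""
    return [s.strip() for s in answer.split(".") if s.strip()]


def verify_claim(claim: str, validators: List[Validator],
                 threshold: Fraction = Fraction(2, 3)) -> str:
    """Accept the most common verdict only if it reaches the supermajority threshold."""
    votes = Counter(v.check(claim) for v in validators)
    verdict, count = votes.most_common(1)[0]
    return verdict if Fraction(count, len(validators)) >= threshold else UNCERTAIN


def verify_answer(answer: str, validators: List[Validator]) -> Dict[str, str]:
    """Map each extracted claim to its consensus verdict."""
    return {claim: verify_claim(claim, validators) for claim in split_into_claims(answer)}


# Usage: two validators with separate sources, plus one that approves everything.
source_a = {"Water boils at 100 C at sea level"}
source_b = {"Water boils at 100 C at sea level", "Paris is the capital of France"}
validators = [
    Validator("model_a", lambda c: VALID if c in source_a else INVALID),
    Validator("model_b", lambda c: VALID if c in source_b else INVALID),
    Validator("model_c", lambda c: VALID),
]
result = verify_answer("Water boils at 100 C at sea level. The Moon is made of cheese.", validators)
print(result)
# {'Water boils at 100 C at sea level': 'valid', 'The Moon is made of cheese': 'invalid'}
```

The third validator approves everything, yet the false claim is still rejected because the two independent checks form a supermajority. The same toy also shows the limit raised later in the article: if most validators shared that blind spot, the vote would go the wrong way.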

Validators cannot participate casually: to take part, they must stake the network’s native token, which puts their capital at risk. Validators whose judgments are consistently accurate earn rewards; those who submit dishonest or careless judgments can be penalized. In other words, accuracy is not just encouraged; it is economically enforced.
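A toy settlement round makes the stake-based incentive concrete. The reward amount, the 5% slash rate, and the rule that any vote contradicting consensus gets slashed are assumptions for illustration. The article does not specify Mira's actual parameters, and `settle_round` and `StakedValidator` are hypothetical names. Stakes are kept as integers, as token balances usually are on-chain.

```python
from dataclasses import dataclass
from typing import Dict

# Hypothetical parameters; the article does not give Mira's actual values.
REWARD_PER_CORRECT_VOTE = 10   # tokens credited for a vote that matches consensus
SLASH_BPS = 500                # 5% of stake (in basis points) removed for a contradicting vote


@dataclass
class StakedValidator:
    name: str
    stake: int  # integer token units, as balances usually are on-chain


def settle_round(validators: Dict[str, StakedValidator],
                 votes: Dict[str, str], consensus: str) -> None:
    """Credit validators whose vote matched consensus; slash the rest."""
    for name, vote in votes.items():
        v = validators[name]
        if vote == consensus:
            v.stake += REWARD_PER_CORRECT_VOTE
        else:
            v.stake -= v.stake * SLASH_BPS // 10_000


# Usage: one round in which validator "b" disagreed with the consensus verdict.
validators = {"a": StakedValidator("a", 1_000), "b": StakedValidator("b", 1_000)}
settle_round(validators, {"a": "valid", "b": "invalid"}, consensus="valid")
print(validators["a"].stake, validators["b"].stake)  # 1010 950
```

Slashing every dissenting vote is the bluntest possible rule, because it also punishes an honest minority that happens to be right. A real protocol needs a more careful definition of "careless" or "dishonest," which is part of the collusion and correlated-bias problem the article returns to in its list of challenges.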

This is where the token becomes meaningful. It is not decorative governance theater. It secures the network through staking, powers participation incentives, enables governance over protocol parameters, and acts as payment for verification services. Applications that require reliable AI outputs pay fees. Validators compete to provide accurate assessments. The token sits at the center of that economic loop.

The deeper idea here is powerful: reliability should not depend on trusting a company, a server, or a single model. It should come from transparent incentives and distributed agreement.

Architecturally, Mira does not try to replace AI models. It sits between AI outputs and real-world consequences. When an AI system generates a recommendation (whether for trading, lending, research, or autonomous decision-making), Mira can verify the claims behind it before any action based on them is executed. The final verification results are anchored on-chain as cryptographic proofs, which creates an audit trail without bloating the blockchain with raw data.
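One common way to anchor many results cheaply is to hash them into a Merkle tree and publish only the 32-byte root. The article does not say which proof format Mira uses, so treat the following as an assumed scheme. Records are serialized as canonical JSON, hashed with SHA-256, and combined pairwise. Leaves and interior nodes get distinct prefixes, the domain-separation trick used in Certificate Transparency (RFC 6962). All function names are hypothetical.

```python
import hashlib
import json
from typing import List, Tuple


def leaf_hash(record: dict) -> bytes:
    """Hash one verification record; canonical JSON keeps the hash stable across key orders."""
    encoded = json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(b"\x00" + encoded).digest()


def node_hash(left: bytes, right: bytes) -> bytes:
    """Hash two children; the 0x01 prefix keeps interior nodes distinct from leaves."""
    return hashlib.sha256(b"\x01" + left + right).digest()


def next_level(level: List[bytes]) -> List[bytes]:
    """Pair adjacent hashes; an unpaired last hash is carried up unchanged."""
    return [node_hash(level[i], level[i + 1]) if i + 1 < len(level) else level[i]
            for i in range(0, len(level), 2)]


def merkle_root(leaves: List[bytes]) -> bytes:
    if not leaves:
        raise ValueError("need at least one record")
    level = leaves
    while len(level) > 1:
        level = next_level(level)
    return level[0]


def merkle_proof(leaves: List[bytes], index: int) -> List[Tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True when the sibling is on the left."""
    proof, level = [], leaves
    while len(level) > 1:
        sibling = index ^ 1
        if sibling < len(level):
            proof.append((level[sibling], sibling < index))
        level, index = next_level(level), index // 2
    return proof


def verify_proof(leaf: bytes, proof: List[Tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from a leaf and its sibling path."""
    h = leaf
    for sibling, sibling_is_left in proof:
        h = node_hash(sibling, h) if sibling_is_left else node_hash(h, sibling)
    return h == root


# Usage: three hypothetical verification records; only `root` would be anchored on-chain.
records = [
    {"claim": "Water boils at 100 C at sea level", "verdict": "valid"},
    {"claim": "The Moon is made of cheese", "verdict": "invalid"},
    {"claim": "Funding rates are negative", "verdict": "uncertain"},
]
leaves = [leaf_hash(r) for r in records]
root = merkle_root(leaves)
proof = merkle_proof(leaves, 1)
print(verify_proof(leaves[1], proof, root))  # True
```

Under this scheme, only `root` needs to go on-chain. Anyone holding a record and its short proof (logarithmic in the number of records) can later check that the record belonged to the anchored batch, while the raw records stay off-chain.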

As AI agents begin interacting with financial systems, smart contracts, and decentralized applications, this verification layer becomes increasingly relevant. An autonomous agent executing trades or triggering on-chain actions cannot rely on unchecked probabilistic text. It needs confidence grounded in something stronger than a single model’s prediction.
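From an agent's point of view, the verification layer reduces to a guard in front of every consequential action. Here is a minimal sketch. It assumes the agent receives one verdict per supporting claim; the verdict strings and `guarded_execute` are hypothetical. The action runs only if every claim was verified, and the guard fails closed when there is nothing to check.

```python
from typing import Callable, Dict

VALID = "valid"  # hypothetical verdict label


def guarded_execute(action: Callable[[], str], verdicts: Dict[str, str]) -> str:
    """Run `action` only if every supporting claim was verified as valid (fail closed)."""
    if not verdicts:
        return "held: no verified claims"
    unverified = [claim for claim, verdict in verdicts.items() if verdict != VALID]
    if unverified:
        return f"held: {len(unverified)} unverified claim(s)"
    return action()


# Usage: one inconclusive claim is enough to block the trade.
verdicts = {
    "ETH/USD is above its 30-day average": "valid",
    "Funding rates are negative": "uncertain",
}
print(guarded_execute(lambda: "order placed", verdicts))  # held: 1 unverified claim(s)
```

Failing closed (holding the action when verification is missing or inconclusive) is the conservative default for anything that moves funds. An agent that failed open would be back to acting on unchecked probabilistic text.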

That is where Mira’s long-term potential lies: serving as a trust layer for machine-to-machine economies.

Of course, the model is not without challenges. Validator diversity must remain genuine to avoid correlated bias. The network must stay cost-efficient so verification does not become prohibitively expensive. Incentive structures must be robust enough to resist collusion or manipulation. These are not trivial problems — they are fundamental tests of the protocol’s resilience.

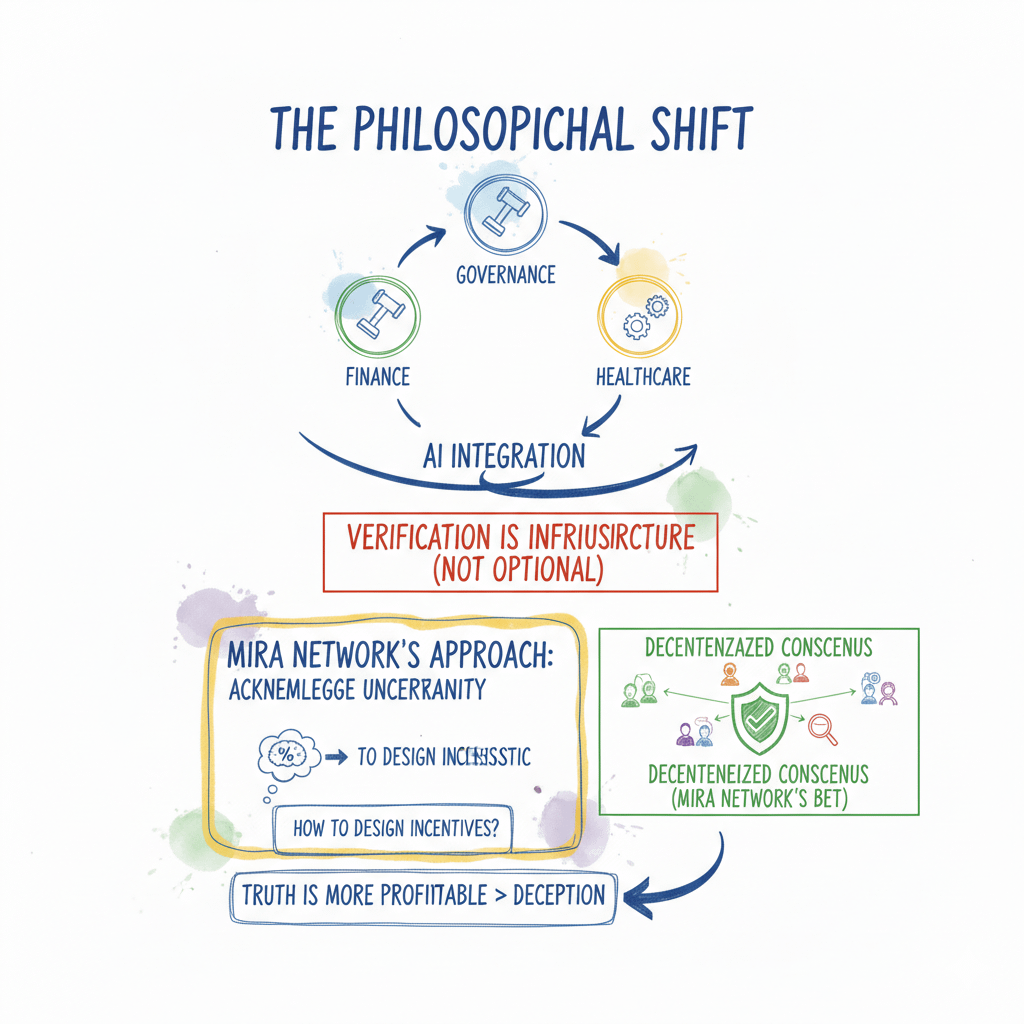

But what stands out is the philosophical shift. Mira does not claim to eliminate AI error. Instead, it acknowledges uncertainty and builds a structure around it. It accepts that AI is probabilistic and then asks: how can we design incentives so that truth is more profitable than deception?

If AI continues to integrate into finance, governance, healthcare, and automation, verification will not be optional. It will be infrastructure. The real question is whether decentralized consensus can deliver reliability more effectively than centralized oversight.

Mira Network is betting that it can.

And if that bet proves correct, the project will not just improve AI outputs — it will quietly redefine how digital systems establish trust in a world increasingly shaped by machines.